Every device you touch — phone, laptop, smartwatch, the server rendering this page — traces its lineage to a handful of ideas that seemed absurd when first proposed. A Victorian countess writing algorithms for a machine that wouldn't be built for another century. A 24-year-old mathematician proving that a strip of tape and a read/write head could compute anything computable. Three physicists at Bell Labs replacing a room of glowing vacuum tubes with a sliver of germanium the size of a pencil eraser.

This is the story of how we got from mechanical gears to AI agents that build software autonomously. Not a list of dates and names — a connected narrative where each breakthrough made the next one inevitable. 🧬

TL;DR: Computing evolved through 7 distinct eras, from Babbage's 1837 Analytical Engine to AI agents that build, deploy, and automate in 2026. The throughline: every generation abstracts away the complexity of the last. Today, Taskade Genesis completes the arc: one prompt builds a living application with embedded agents, automations, and 100+ integrations.

⚙️ How Computers Represent Numbers: Binary from First Principles

Before we can understand how a computer works, we need to understand its language. And that language has exactly two words: zero and one.

This isn't arbitrary. It's an engineering decision rooted in physics. Electricity is noisy and unpredictable — voltage fluctuates, signals degrade over distance, interference corrupts precision. Trying to distinguish between ten voltage levels (representing digits 0–9) is unreliable. But distinguishing between two states — current flowing or not flowing, above a threshold or below it — is robust and cheap to manufacture at scale.

"The fundamental insight of digital computing is that reliability trumps efficiency. Two symbols are enough to represent anything — if you're clever about how you combine them." — Claude Shannon, MIT Master's Thesis (1937)

Decimal vs. Binary: The Same Idea, Different Base

You already know how positional number systems work — you just don't think about it. The number 724 in decimal (base 10) means:

7 × 10² + 2 × 10¹ + 4 × 10⁰

= 7 × 100 + 2 × 10 + 4 × 1

= 700 + 20 + 4

= 724

Binary (base 2) follows the exact same rule, but each position is a power of 2 instead of 10, and each digit can only be 0 or 1:

Binary: 1 0 1 1

1 × 2³ = 1 × 8 = 8

0 × 2² = 0 × 4 = 0

1 × 2¹ = 1 × 2 = 2

1 × 2⁰ = 1 × 1 = 1

Total: 8 + 0 + 2 + 1 = 11 (decimal)

Any number you can think of can be expressed as a sum of powers of 2. This is the foundation everything else is built on.
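The positional rule above can be sketched in a few lines of Python. The function names `to_decimal` and `to_binary` are illustrative, not from any library; this is a minimal sketch for non-negative whole numbers only.

```python
def to_decimal(digits: str, base: int = 2) -> int:
    """Evaluate a digit string positionally: each digit times base**position."""
    total = 0
    for ch in digits:
        # Shift everything seen so far one position left, then add the new digit.
        total = total * base + int(ch, base)
    return total


def to_binary(n: int) -> str:
    """Repeatedly divide by 2; the remainders, read in reverse, are the bits."""
    if n == 0:
        return "0"
    bits = []
    while n > 0:
        n, remainder = divmod(n, 2)
        bits.append(str(remainder))
    return "".join(reversed(bits))


print(to_decimal("1011"))      # 11
print(to_decimal("724", 10))   # 724
print(to_binary(11))           # 1011
```

The same loop handles any base, which is the point of the section: decimal and binary differ only in the multiplier.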

Binary Arithmetic: How Computers Add

Addition in binary follows the same carry-over rules you learned in elementary school. The only difference: you carry when you hit 2, not 10.

Decimal: 9 + 1 = 10 → "put 0, carry 1"

Binary: 1 + 1 = 10₂ → same idea!

1 0 1 (5 in decimal)

+ 1 1 1 (7 in decimal)

─────────

1 1 0 0 (12 in decimal ✓)

Here's the carry-over pattern:

| A | B | Carry In | Sum | Carry Out |

|---|---|---|---|---|

| 0 | 0 | 0 | 0 | 0 |

| 0 | 1 | 0 | 1 | 0 |

| 1 | 0 | 0 | 1 | 0 |

| 1 | 1 | 0 | 0 | 1 |

| 0 | 0 | 1 | 1 | 0 |

| 0 | 1 | 1 | 0 | 1 |

| 1 | 0 | 1 | 0 | 1 |

| 1 | 1 | 1 | 1 | 1 |

This table is the complete specification for a full adder — the circuit that performs addition inside every CPU on Earth. With just this truth table and billions of transistors, you can build a machine that runs ChatGPT.
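As a rough illustration, a full adder can be modeled in Python by adding the three input bits and splitting the result into a sum bit and a carry bit. The names `full_adder` and `add_binary` are made up for this sketch; real hardware does this with gates rather than integer arithmetic.

```python
from itertools import product


def full_adder(a: int, b: int, carry_in: int) -> tuple[int, int]:
    """Return (sum_bit, carry_out) for three input bits."""
    total = a + b + carry_in          # 0, 1, 2, or 3
    return total % 2, total // 2


# Print all eight input combinations of the truth table.
for a, b, c in product((0, 1), repeat=3):
    s, carry = full_adder(a, b, c)
    print(a, b, c, "->", s, carry)


def add_binary(x: str, y: str) -> str:
    """Add two bit strings column by column, right to left."""
    width = max(len(x), len(y))
    x, y = x.zfill(width), y.zfill(width)
    carry, out = 0, []
    for bit_x, bit_y in zip(reversed(x), reversed(y)):
        s, carry = full_adder(int(bit_x), int(bit_y), carry)
        out.append(str(s))
    if carry:
        out.append("1")               # a final carry becomes a new leading bit
    return "".join(reversed(out))


print(add_binary("101", "111"))       # 1100
```

The last line reproduces the 5 + 7 = 12 example: the same one-bit rule, applied once per column, is all multi-bit addition needs.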

Negative Numbers: Two's Complement

How do you represent negative numbers with only 0s and 1s? The elegant solution is two's complement: flip every bit and add 1.

+5 in 8-bit: 0 0 0 0 0 1 0 1

Flip all bits: 1 1 1 1 1 0 1 0

Add 1: 1 1 1 1 1 0 1 1 ← this is -5

The beauty: addition just works. Adding +5 and -5 produces all zeros (with a carry that overflows and disappears). No special subtraction circuit needed — the same adder hardware handles both.
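The flip-and-add-one recipe is easy to check in Python. The sketch below assumes an 8-bit word; `BITS`, `MASK`, `twos_complement`, and `to_signed` are names chosen for this example.

```python
BITS = 8
MASK = (1 << BITS) - 1            # 0b11111111


def twos_complement(n: int) -> int:
    """Bit pattern for -n in BITS bits: flip every bit, then add 1."""
    flipped = n ^ MASK            # XOR with all ones flips each bit
    return (flipped + 1) & MASK   # keep only the low 8 bits


def to_signed(pattern: int) -> int:
    """Interpret an 8-bit pattern as a signed value (top bit set means negative)."""
    if pattern & (1 << (BITS - 1)):
        return pattern - (1 << BITS)
    return pattern


neg_five = twos_complement(5)
print(format(neg_five, "08b"))                # 11111011
print(to_signed(neg_five))                    # -5
# Adding +5 and -5: the carry out of the top bit is masked away, leaving zero.
print(format((5 + neg_five) & MASK, "08b"))   # 00000000
```

The masking step plays the role of the overflowing carry that "disappears" in hardware.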

From Numbers to Everything: ASCII, Unicode, and Beyond

Once you can represent numbers, you can represent anything by assigning numbers to things:

| Data Type | Encoding | Example |

|---|---|---|

| Letters | ASCII (1963) | A = 65 = 01000001 |

| All scripts | Unicode (1991) | 漢 = U+6F22 |

| Colors | RGB (8-bit each) | Red = (255, 0, 0) |

| Images | Pixel grids | 1920×1080 × 3 bytes = 6.2 MB |

| Sound | Sample amplitudes | 44,100 samples/sec (CD quality) |

| Video | Image sequences | 30 frames/sec × pixels × colors |

Every photo, song, video, email, and AI model you've ever interacted with is — at the deepest level — a sequence of 0s and 1s interpreted through layers of agreed-upon encoding.
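A few of the rows in the table can be reproduced directly in Python. This is only a sketch: the variable names are invented here, and the audio line assumes 16-bit stereo samples, which is the usual meaning of "CD quality" but is not stated in the table.

```python
# Text: each character is a number (its code point), stored as bytes.
letter_code = ord("A")
letter_bits = format(letter_code, "08b")
print(letter_code, letter_bits)            # 65 01000001

han = "\u6f22"                             # the character U+6F22 from the table
han_bytes = han.encode("utf-8")            # UTF-8 needs three bytes for it
print(hex(ord(han)), han_bytes)

# Color: three 8-bit channels packed into one 24-bit number.
red = (255, 0, 0)
packed_red = (red[0] << 16) | (red[1] << 8) | red[2]
print(format(packed_red, "06x"))           # ff0000

# Image: width x height x 3 bytes per pixel, uncompressed.
frame_bytes = 1920 * 1080 * 3
print(frame_bytes, round(frame_bytes / 1e6, 1))   # 6220800 6.2

# Sound: samples/sec x bytes per sample x channels (assumed 16-bit stereo).
cd_bytes_per_second = 44_100 * 2 * 2
print(cd_bytes_per_second)                 # 176400
```

In every case the "data type" is just a convention for reading the same kind of integers.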

🔧 The Mechanical Era: Counting Machines Before Electricity (1642–1936)

The desire to automate calculation is older than electricity, older than the steam engine, older even than the mass adoption of the printing press. It begins with the simple, human frustration of arithmetic errors.

Pascal, Leibniz, and the Dream of Mechanical Thought

In 1642, 19-year-old Blaise Pascal built the Pascaline — a mechanical calculator that could add and subtract using a system of interlocking gears. Each gear had 10 teeth (one per digit), and when a gear completed a full rotation, it advanced the next gear by one position. It was the carry mechanism from long addition, made physical.

Gottfried Wilhelm Leibniz extended Pascal's design in 1694 with the Step Reckoner, which could also multiply and divide. But Leibniz's more lasting contribution was conceptual: he described binary arithmetic in his 1703 paper Explication de l'Arithmétique Binaire — the same base-2 system that every computer uses today, conceived 240 years before the first electronic computer.

┌─────────────────────────────────────────────────┐

│ MECHANICAL COMPUTING TIMELINE │

├──────────┬──────────────────────────────────────┤

│ 1642 │ Pascaline (add/subtract) │

│ 1694 │ Leibniz Step Reckoner (×, ÷) │

│ 1703 │ Leibniz publishes binary arithmetic │

│ 1801 │ Jacquard loom (punched cards) │

│ 1822 │ Babbage Difference Engine │

│ 1837 │ Babbage Analytical Engine (design) │

│ 1843 │ Ada Lovelace's first algorithm │

│ 1854 │ Boole's Laws of Thought │

│ 1890 │ Hollerith tabulator (US Census) │

└──────────┴──────────────────────────────────────┘

Babbage's Analytical Engine: The First General-Purpose Computer

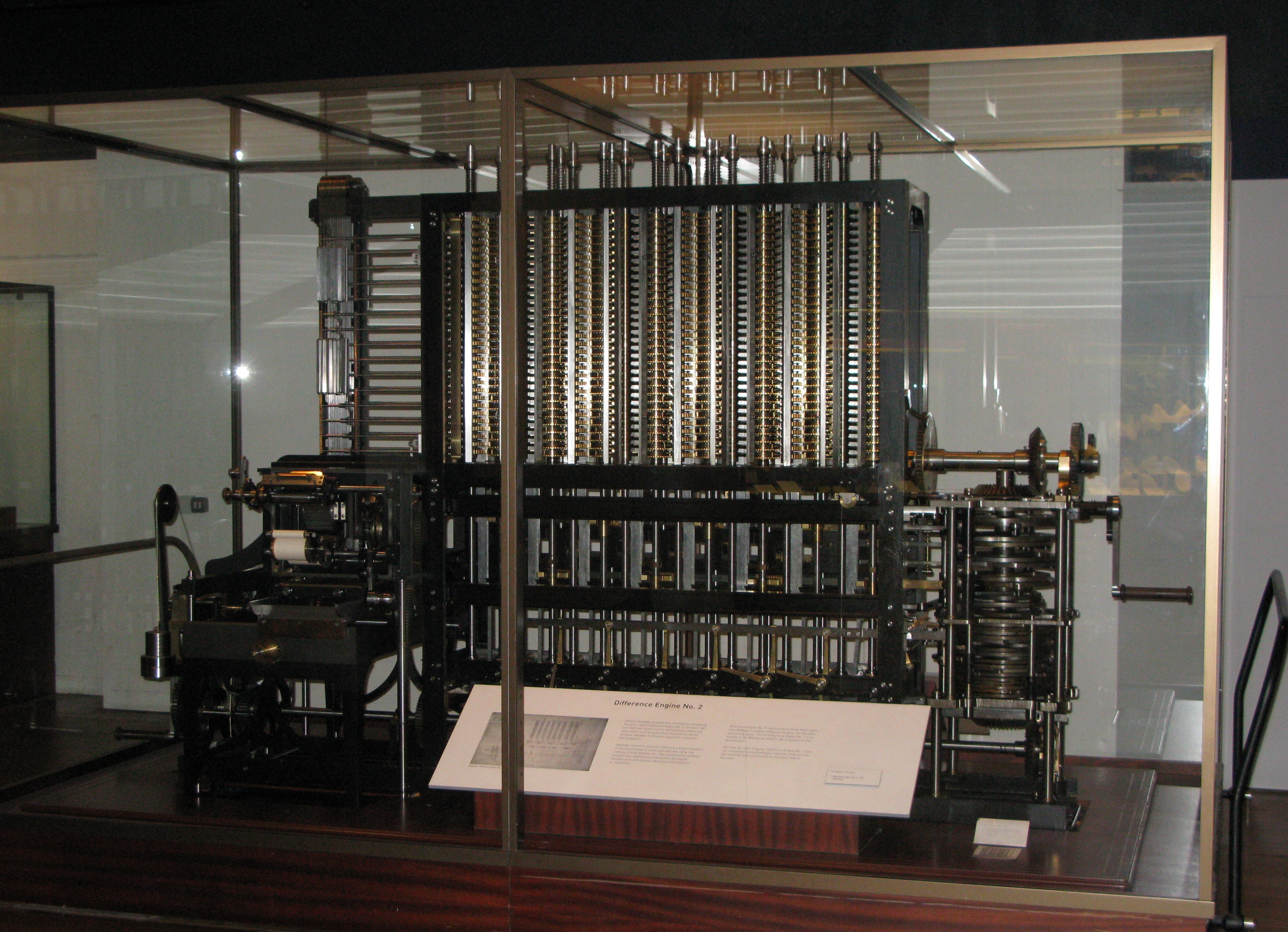

Charles Babbage is the architect of computing's grand vision. His Difference Engine (1822) was a specialized calculator for polynomial tables. But his Analytical Engine (1837) was something else entirely — the first design for a general-purpose, programmable computing machine.

The Analytical Engine had:

- A "mill" (processor) for arithmetic operations

- A "store" (memory) capable of holding 1,000 numbers of 50 digits each

- Conditional branching — the ability to jump to different instructions based on results

- Punched card input — borrowed from the Jacquard loom (1801)

It was never built in Babbage's lifetime. The precision machining required exceeded Victorian manufacturing capabilities. But the design was complete, and it was Turing-complete — meaning it could, in theory, compute anything a modern computer can compute.

Charles Babbage's Difference Engine No. 2, completed posthumously from his original designs. Science Museum, London. Image credit: Wikimedia Commons(1)

Ada Lovelace: The First Programmer

Augusta Ada King, Countess of Lovelace, wrote what is recognized as the first computer program in 1843 — an algorithm for the Analytical Engine to compute Bernoulli numbers, published as Note G in her translation of Luigi Menabrea's article on the Engine. She chose a deliberately complex method, noting: "the object is not simplicity or facility of computation, but the illustration of the powers of the engine." Her insight went far beyond a single algorithm.

In her published notes (Note G), Lovelace anticipated ideas that wouldn't be formalized for another century:

"The Analytical Engine has no pretensions whatever to originate anything. It can do whatever we know how to order it to perform."

She recognized that the machine could manipulate symbols, not just numbers — potentially composing music or generating graphics. She was describing general-purpose computation a century before Turing formalized it.

Ada Lovelace (1815–1852), mathematician and writer. Her notes on the Analytical Engine contain the first published algorithm. Image credit: Wikimedia Commons(2)

Boole and Shannon: The Bridge from Logic to Circuits

George Boole published The Laws of Thought in 1854, reducing logical propositions to algebra: AND, OR, NOT — operations on true/false values. At the time, it was pure mathematics with no practical application in sight.

Eighty-three years later, a 21-year-old MIT master's student named Claude Shannon wrote what has been called "the most important master's thesis of the 20th century." In A Symbolic Analysis of Relay and Switching Circuits (1937), Shannon proved that Boolean algebra maps directly to electrical circuits. Every AND, OR, NOT operation could be implemented with a switch.

┌──────────────────────────────────────────────┐

│ BOOLEAN LOGIC → PHYSICAL GATES │

├──────────────┬──────────────┬────────────────┤

│ Boolean │ Circuit │ Truth Table │

├──────────────┼──────────────┼────────────────┤

│ A AND B │ Series │ 1 only if │

│ │ switches │ both are 1 │

├──────────────┼──────────────┼────────────────┤

│ A OR B │ Parallel │ 1 if either │

│ │ switches │ is 1 │

├──────────────┼──────────────┼────────────────┤

│ NOT A │ Inverter │ Flips the │

│ │ │ input │

└──────────────┴──────────────┴────────────────┘

This was the Rosetta Stone. From this point forward, anyone who could build a switch could build a computer. The question was no longer if — but how fast and how small.

💡 Turing's Universal Machine: The Theoretical Foundation (1936)

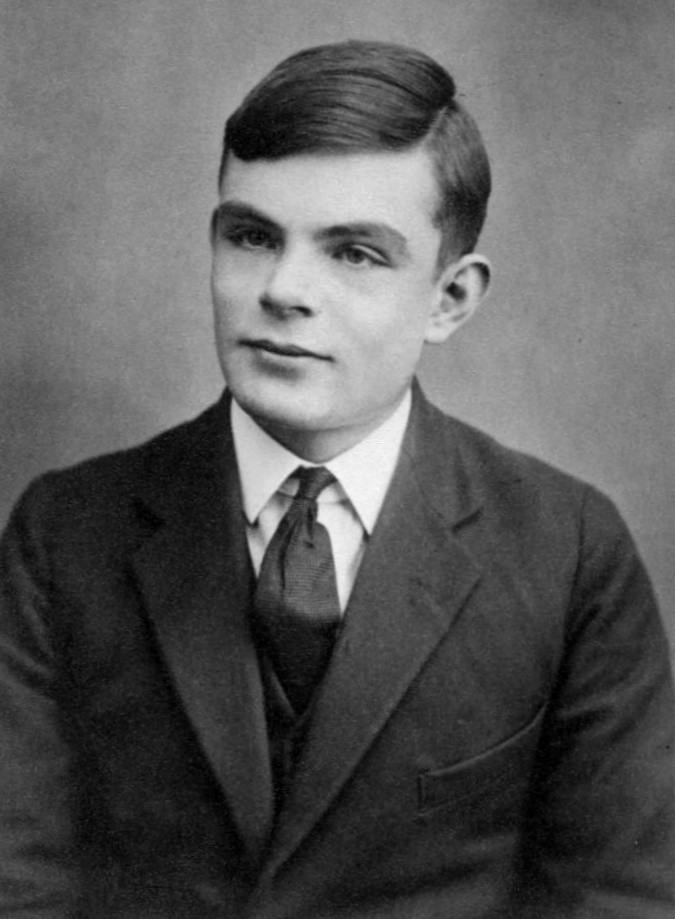

The year before Shannon submitted his thesis, a 24-year-old Cambridge mathematician named Alan Turing published a paper that would define the limits of computation itself.

On Computable Numbers, with an Application to the Entscheidungsproblem (1936) introduced the Turing machine — not a physical device, but a mathematical abstraction:

┌─────────────────────────────────────────────────────────┐

│ TURING MACHINE │

│ │

│ ┌───┬───┬───┬───┬───┬───┬───┬───┬───┬───┬───┐ │

│ │ 0 │ 1 │ 1 │ 0 │ 1 │ 0 │ 0 │ 1 │ 1 │ 0 │...│ ← Infinite tape │

│ └───┴───┴─▲─┴───┴───┴───┴───┴───┴───┴───┴───┘ │

│ │ │

│ ┌───┴───┐ │

│ │ HEAD │ ← Read/Write head │

│ │ State │ (moves left or right) │

│ │ q₃ │ │

│ └───────┘ │

│ │

│ Rules: (current state, symbol read) │

│ → (new state, symbol to write, move direction) │

└─────────────────────────────────────────────────────────┘

With just an infinite tape, a read/write head, a state register, and a finite set of rules, Turing argued, this machine can compute anything that is computable at all, a claim now known as the Church-Turing thesis. Every algorithm, every program, every AI model running today is a computation a Turing machine could perform (given enough time and tape).

More profoundly, Turing proved that a Universal Turing Machine exists — one that can simulate any other Turing machine. Feed it a description of another machine plus its input, and it reproduces the output exactly. This is the theoretical basis for general-purpose computers: one machine that can run any program.
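The idea of feeding one machine a description of another can be sketched as a small simulator: the rule table is plain data, and the same loop runs whatever table it is given. Everything here is illustrative, including the function name `run_turing_machine`, the `"halt"` state, the `"_"` blank symbol, and the `INCREMENT` rule table, which adds 1 to a binary number.

```python
def run_turing_machine(rules, tape, state="start", head=0, blank="_", max_steps=10_000):
    """Simulate a one-tape Turing machine.

    rules maps (state, symbol) -> (new_state, symbol_to_write, move),
    where move is -1 (left) or +1 (right). The machine stops in state "halt".
    """
    cells = dict(enumerate(tape))      # sparse tape: unwritten cells read as blank
    steps = 0
    while state != "halt":
        if steps >= max_steps:
            raise RuntimeError("step limit reached without halting")
        symbol = cells.get(head, blank)
        state, write, move = rules[(state, symbol)]
        cells[head] = write
        head += move
        steps += 1
    lo, hi = min(cells), max(cells)
    return "".join(cells.get(i, blank) for i in range(lo, hi + 1)).strip(blank)


# Rule table: walk right to the end of the number, then carry leftward.
INCREMENT = {
    ("start", "0"): ("start", "0", +1),
    ("start", "1"): ("start", "1", +1),
    ("start", "_"): ("carry", "_", -1),
    ("carry", "1"): ("carry", "0", -1),   # 1 + 1 = 0, keep carrying
    ("carry", "0"): ("halt", "1", -1),    # absorb the carry and stop
    ("carry", "_"): ("halt", "1", -1),    # ran off the left edge: new leading 1
}

print(run_turing_machine(INCREMENT, "1011"))   # 1100
print(run_turing_machine(INCREMENT, "111"))    # 1000
```

The step limit is a practical guard only: as the halting problem shows, there is no general way to know in advance whether an arbitrary rule table will ever stop.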

He also proved that some problems are undecidable: no machine can solve them in general. The halting problem (predicting whether a program will finish or run forever) is the most famous example. There are limits to computation, and Turing drew the map.

Alan Turing (1912–1954), mathematician, logician, cryptanalyst, and father of theoretical computer science. Image credit: Wikimedia Commons(3)

Turing at Bletchley Park: Computing Meets War

Theory became urgency during World War II. At Bletchley Park, Turing led the effort to crack the German Enigma cipher. His electromechanical Bombe machine could test possible Enigma settings at superhuman speed, rejecting invalid configurations until only the correct key remained.

Meanwhile, engineer Tommy Flowers built Colossus (1943) — the first programmable electronic digital computer — to break the even more complex Lorenz cipher. Colossus Mark 1 used 1,600 vacuum tubes (later models up to 2,500) and could process 5,000 characters per second. Its existence remained classified until the 1970s.

🔥 The Electronic Era: Vacuum Tubes and the First Computers (1943–1956)

The transition from mechanical to electronic computing happened under the pressure of war, and it changed everything. Where gears could cycle a few times per second, vacuum tubes could switch thousands of times per second. Speed increased by orders of magnitude overnight.

ENIAC: The Room-Sized Calculator

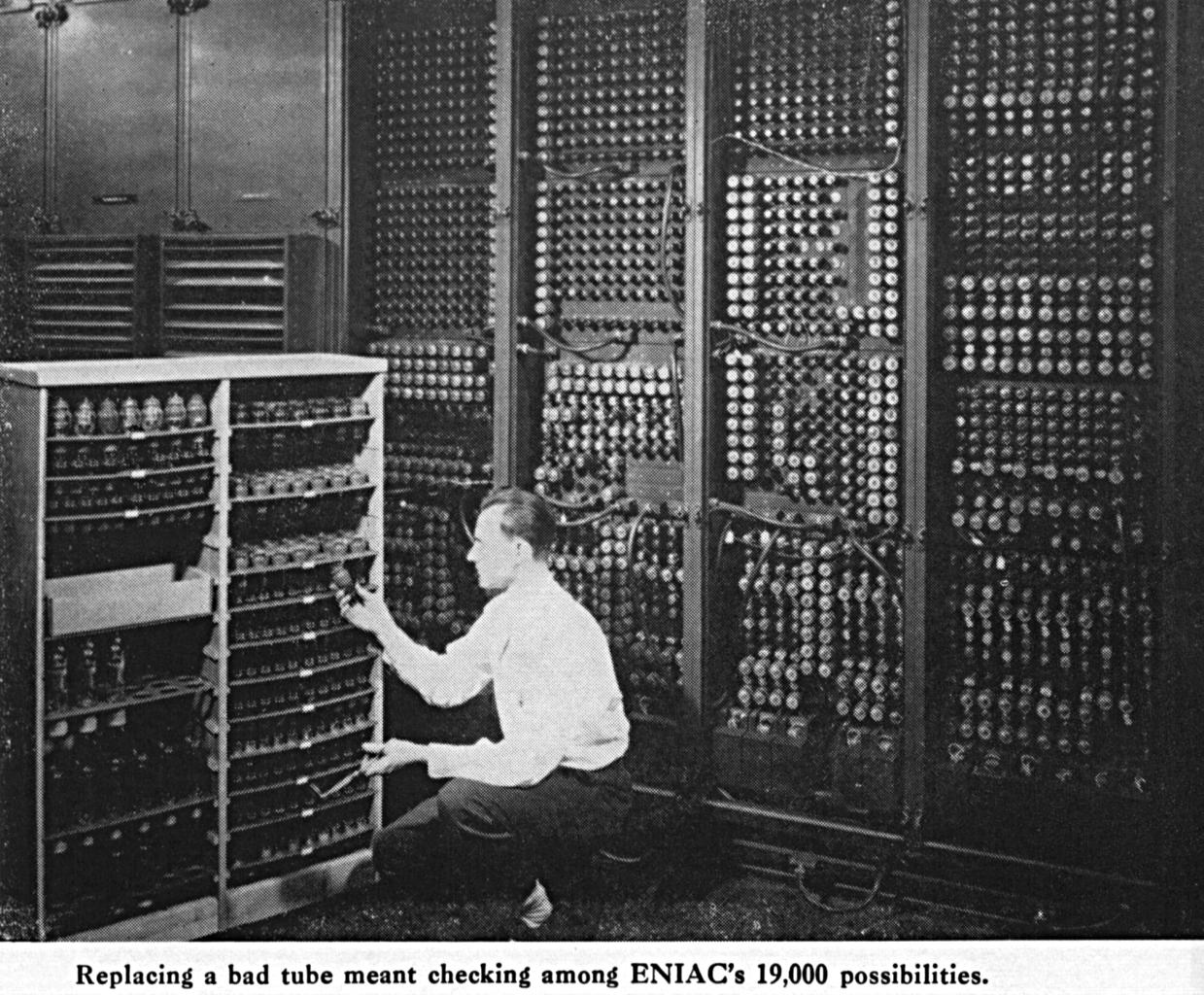

The Electronic Numerical Integrator and Computer (ENIAC), completed in 1945 at the University of Pennsylvania, was the first general-purpose electronic computer.

| Specification | ENIAC (1945) | iPhone 16 (2024) |

|---|---|---|

| Weight | 30 tons | 170 grams |

| Floor space / footprint | 1,500 sq ft | ~16 sq in (5.8 × 2.8 in) |

| Power consumption | 150 kilowatts | ~5 watts |

| Vacuum tubes / transistors | 17,468 tubes | 19 billion transistors |

| Clock speed | 100 kHz | 3.78 GHz |

| Operations/second | 5,000 | ~17 trillion |

| Cost (adjusted) | ~$7.5 million (2026) | $799 |

ENIAC could perform 5,000 additions per second — revolutionary at the time, but less computing power than a modern musical greeting card. It consumed enough electricity to dim the lights in an entire Philadelphia city block when switched on.

The ENIAC computer, 1946. U.S. Army photo. Two programmers (Kay McNulty and Betty Jennings) are shown operating the machine. Image credit: U.S. Army / Wikimedia Commons(4)

Von Neumann Architecture: The Blueprint for Every Modern Computer

ENIAC had a critical limitation: it was programmed by physically rewiring cables and flipping switches. Changing a program took days.

In 1945, mathematician John von Neumann proposed a revolutionary idea in his First Draft of a Report on the EDVAC: store the program in memory alongside the data. This meant a computer could modify its own instructions — enabling loops, conditionals, and self-modifying code without touching hardware.

┌─────────────────────────────────────────────┐

│ VON NEUMANN ARCHITECTURE │

│ │

│ ┌───────────┐ ┌────────────────────┐ │

│ │ │◄────►│ │ │

│ │ CPU │ │ MEMORY │ │

│ │ │◄────►│ │ │

│ │ ┌───────┐ │ │ ┌──────────────┐ │ │

│ │ │ ALU │ │ │ │ Instructions │ │ │

│ │ └───────┘ │ │ ├──────────────┤ │ │

│ │ ┌───────┐ │ │ │ Data │ │ │

│ │ │Control│ │ │ └──────────────┘ │ │

│ │ │ Unit │ │ │ │ │

│ │ └───────┘ │ └────────────────────┘ │

│ └─────┬─────┘ │

│ │ │

│ ┌────┴─────┐ │

│ │ Input / │ │

│ │ Output │ │

│ └──────────┘ │

└─────────────────────────────────────────────┘

The key insight: instructions and data live in the same memory and are accessed through the same bus. This is called the stored-program concept, and it is the architecture of virtually every computer built since 1949.

The first machine to implement it was the Manchester Baby, which ran its first stored program on June 21, 1948: a 52-minute search for the highest proper factor of 2¹⁸. It wasn't useful. It was historic.
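For a sense of what that first program did, here is a loose Python reconstruction, not the Baby's actual 17-instruction code. It assumes the commonly described approach: try candidate divisors from the top down and test each one by repeated subtraction, since the machine had no divide instruction. The function name is hypothetical.

```python
def highest_proper_factor(n: int) -> int:
    """Try divisors from n - 1 downward; test divisibility by repeated subtraction."""
    for candidate in range(n - 1, 0, -1):
        remainder = n
        while remainder > 0:
            remainder -= candidate     # subtraction is the only arithmetic used
        if remainder == 0:             # landed exactly on zero: candidate divides n
            return candidate
    return 1


print(highest_proper_factor(2 ** 18))  # 131072
```

A modern laptop finishes this in a fraction of a second; the point of the 1948 run was that the instructions lived in memory, not that the answer was hard.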

Grace Hopper and the First Compiler

In 1952, Grace Hopper created the A-0 System — the first compiler, which translated human-readable mathematical notation into machine code. When she presented the idea to her colleagues, they told her computers could only do arithmetic, not programming. She built it anyway.

Hopper later led the development of COBOL (1959), one of the first English-like programming languages. Her philosophy: programming languages should be accessible to non-mathematicians. Billions of lines of COBOL still run the world's banking systems today.

"The most dangerous phrase in the language is: 'We've always done it this way.'" — Grace Hopper

⚡ The Transistor Revolution: Smaller, Faster, Cheaper (1947–1971)

Vacuum tubes were miraculous but terrible: fragile, hot, power-hungry, and unreliable. ENIAC's 17,468 tubes failed at a rate of about one every two days. The search for something better led to the most important invention of the 20th century.

The Transistor: Three Physicists Change Everything

On December 23, 1947, John Bardeen, Walter Brattain, and William Shockley at Bell Labs demonstrated the first working transistor. It did exactly what a vacuum tube did — amplify and switch electronic signals — but it was solid-state: no glass envelope, no heated filament, no vacuum required.

A replica of the first point-contact transistor, invented at Bell Labs in December 1947. Bardeen, Brattain, and Shockley shared the 1956 Nobel Prize in Physics. Image credit: Wikimedia Commons(5)

The transistor's advantages were overwhelming:

| Property | Vacuum Tube | Transistor |

|---|---|---|

| Size | Baseball-sized | Grain of sand (1960s) |

| Power | Watts per tube | Milliwatts |

| Heat | Extreme | Minimal |

| Reliability | ~1,000 hours | Decades |

| Switching speed | Microseconds | Nanoseconds |

| Cost (1960) | ~$1 per tube | ~$0.10 |

The Integrated Circuit: Kilby and Noyce

Individual transistors were better than tubes, but wiring thousands of them together by hand was still slow, expensive, and error-prone. Two inventors, working independently, solved this within months of each other.

In September 1958, Jack Kilby at Texas Instruments demonstrated the first integrated circuit (IC): a complete circuit built on a single piece of germanium. Six months later, Robert Noyce at Fairchild Semiconductor created a superior version using silicon and a planar process that was easier to manufacture.

Noyce's approach won commercially. He co-founded Intel in 1968 with Gordon Moore — and the silicon in Silicon Valley got its name.

Moore's Law: The Engine of Exponential Progress

In 1965, Gordon Moore published an observation in Electronics magazine: the number of transistors on a chip was doubling approximately every year (later revised to every two years). This wasn't a law of physics — it was a self-fulfilling prophecy driven by engineering ambition and market economics.

┌────────────────────────────────────────────────────────┐

│ MOORE'S LAW IN ACTION │

│ Transistors per chip (log scale) │

│ │

│ 10¹² │ ▄ M2 Ultra │

│ │ ▄▀ (134B) │

│ 10¹⁰ │ ▄▄▀' │

│ │ ▄▄▀' │

│ 10⁸ │ ▄▄▀' │

│ │ ▄▄▀' Pentium 4 │

│ 10⁶ │ ▄▄▀' (42M) │

│ │ ▄▄▀' │

│ 10⁴ │ ▄▄▀' 8086 │

│ │ ▄▄▀' (29K) │

│ 10² │ ▄▀ 4004 │

│ │▀ (2,300) │

│ ├────────────────────────────────────────────── │

│ 1970 1980 1990 2000 2010 2020 │

└────────────────────────────────────────────────────────┘

| Year | Chip | Transistors | Process Node |

|---|---|---|---|

| 1971 | Intel 4004 | 2,300 | 10 μm |

| 1978 | Intel 8086 | 29,000 | 3 μm |

| 1993 | Pentium | 3.1 million | 0.8 μm |

| 2000 | Pentium 4 | 42 million | 180 nm |

| 2012 | Intel i7-3770 | 1.4 billion | 22 nm |

| 2022 | Apple M2 | 20 billion | 5 nm |

| 2023 | Apple M2 Ultra | 134 billion | 5 nm |

| 2024 | NVIDIA B200 | 208 billion | 4 nm |

Moore's Law isn't just a curiosity. It's the economic engine that made personal computers, smartphones, and AI possible. When compute gets 2× cheaper every two years, what is impossibly expensive today becomes nearly free tomorrow.
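The doubling claim can be checked against the table with a little arithmetic. The sketch below uses a few rows from the table as data; the helper names `doubling_time` and `projected` are invented for this example.

```python
import math

# (year, transistor count) pairs taken from the table above.
CHIPS = {
    "Intel 4004": (1971, 2_300),
    "Intel 8086": (1978, 29_000),
    "Pentium 4": (2000, 42_000_000),
    "NVIDIA B200": (2024, 208_000_000_000),
}


def doubling_time(first, last):
    """Average years per doubling between two (year, count) data points."""
    (y0, n0), (y1, n1) = first, last
    doublings = math.log2(n1 / n0)
    return (y1 - y0) / doublings


def projected(n0, y0, year, period=2.0):
    """Transistor count if n0 doubles every `period` years starting in y0."""
    return n0 * 2 ** ((year - y0) / period)


observed = doubling_time(CHIPS["Intel 4004"], CHIPS["NVIDIA B200"])
print(f"observed doubling time: {observed:.2f} years")
print(f"projected 2024 count:   {projected(2_300, 1971, 2024):.2e}")
```

Across the 53 years from the 4004 to the B200, the observed doubling time comes out very close to two years, and a strict two-year projection from 1971 lands in the same range as the B200's 208 billion.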

🖥️ Building a CPU: The Fetch-Decode-Execute Cycle

Now that we have transistors that can switch billions of times per second, how do we arrange them to actually compute?

Logic Gates: The Atoms of Computation

Transistors are combined into logic gates — circuits that implement Boolean operations. From just three gate types (AND, OR, NOT), you can build any computation:

AND Gate OR Gate NOT Gate

───────── ───────── ─────────

A ─┐ A ─┐ A ─┐

│─── Output │─── Output │─── Output

B ─┘ B ─┘ │

│

1 AND 1 = 1 1 OR 0 = 1 NOT 1 = 0

1 AND 0 = 0 0 OR 0 = 0 NOT 0 = 1

From these primitives, you build:

- Half adder → adds two bits

- Full adder → adds two bits plus carry

- Ripple-carry adder → chains full adders to add multi-bit numbers

- ALU (Arithmetic Logic Unit) → handles addition, subtraction, AND, OR, XOR, comparison

- Registers → small, fast storage built from flip-flops (circuits that remember one bit)

- Multiplexers → route data between components
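The first few rungs of that ladder can be sketched using nothing but the three primitive gates. The function names below are illustrative; bits are plain integers 0 and 1, and the bit lists are written least significant bit first to match the order in which carries ripple.

```python
# The three primitive gates, operating on bits (0 or 1).
def AND(a, b):
    return a & b


def OR(a, b):
    return a | b


def NOT(a):
    return 1 - a


def XOR(a, b):
    """'a or b, but not both', built only from the primitives above."""
    return AND(OR(a, b), NOT(AND(a, b)))


def half_adder(a, b):
    return XOR(a, b), AND(a, b)          # (sum, carry)


def full_adder(a, b, carry_in):
    s1, c1 = half_adder(a, b)
    s2, c2 = half_adder(s1, carry_in)
    return s2, OR(c1, c2)                # (sum, carry_out)


def ripple_carry_add(x_bits, y_bits):
    """Add two equal-length bit lists, least significant bit first."""
    carry, out = 0, []
    for a, b in zip(x_bits, y_bits):
        s, carry = full_adder(a, b, carry)
        out.append(s)
    return out, carry


def mux(select, a, b):
    """2-to-1 multiplexer: output a when select is 0, b when select is 1."""
    return OR(AND(NOT(select), a), AND(select, b))


# 5 + 7 in 4 bits, least significant bit first: 0101 + 0111 = 1100
print(ripple_carry_add([1, 0, 1, 0], [1, 1, 1, 0]))   # ([0, 0, 1, 1], 0)
```

Registers need feedback (a gate's output looped back to its input), which plain functions like these do not capture, so they are left out of the sketch.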

The CPU Cycle: Fetch, Decode, Execute, Store

Every program you've ever run — from Pong to GPT-5 — executes through the same four-step cycle, billions of times per second:

┌─────────────────────────────────────────────────────┐

│ THE CPU CYCLE (simplified) │

│ │

│ ┌──────────┐ ┌──────────┐ │

│ │ FETCH │────►│ DECODE │ │

│ │ │ │ │ │

│ │ Get next │ │ What op? │ │

│ │ instruc- │ │ What │ │

│ │ tion from│ │ operands?│ │

│ │ memory │ │ │ │

│ └──────────┘ └────┬─────┘ │

│ ▲ │ │

│ │ ▼ │

│ ┌────┴─────┐ ┌──────────┐ │

│ │ STORE │◄────│ EXECUTE │ │

│ │ │ │ │ │

│ │ Write │ │ ALU does │ │

│ │ result │ │ the math │ │

│ │ back to │ │ or logic │ │

│ │ memory │ │ │ │

│ └──────────┘ └──────────┘ │

│ │

│ This cycle repeats billions of times per second │

│ on a modern processor (3+ GHz = 3B cycles/sec) │

└─────────────────────────────────────────────────────┘
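The cycle in the diagram can be imitated with a toy accumulator machine. This is a sketch under heavy simplification: the four opcodes (`LOAD`, `ADD`, `STORE`, `HALT`), the tuple instruction format, and the `run` function are all invented for illustration and do not correspond to any real instruction set.

```python
def run(memory):
    """Run a toy accumulator machine until it reaches HALT.

    memory is one list holding both instructions (tuples) and data (ints),
    in the spirit of the stored-program design.
    """
    pc = 0      # program counter: address of the next instruction
    acc = 0     # accumulator: the single working register
    while True:
        opcode, operand = memory[pc]       # FETCH the instruction at pc
        pc += 1
        if opcode == "LOAD":               # DECODE by matching on the opcode
            acc = memory[operand]          # EXECUTE: copy a value into the register
        elif opcode == "ADD":
            acc = acc + memory[operand]    # EXECUTE: the "ALU" does the math
        elif opcode == "STORE":
            memory[operand] = acc          # STORE the result back to memory
        elif opcode == "HALT":
            return memory
        else:
            raise ValueError(f"unknown opcode {opcode!r}")


program = [
    ("LOAD", 4),      # address 0
    ("ADD", 5),       # address 1
    ("STORE", 6),     # address 2
    ("HALT", None),   # address 3
    5,                # address 4: data
    7,                # address 5: data
    0,                # address 6: the result goes here
]

print(run(program)[6])   # 12
```

Real processors overlap these steps across many instructions at once, but the logical order of fetch, decode, execute, and store is the same.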

The Intel 4004: A CPU on a Chip (1971)

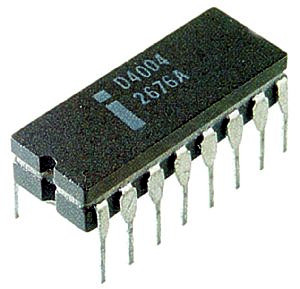

The Intel 4004, released in November 1971, was the first commercially available microprocessor — an entire CPU on a single integrated circuit. Designed by Federico Faggin, Ted Hoff, and Stanley Mazor for a Japanese calculator company (Busicom), it packed 2,300 transistors into a chip the size of a fingernail.

The Intel 4004 (1971) — the first commercial microprocessor. 2,300 transistors, 740 kHz clock speed, 4-bit data bus. It had roughly the computing power of ENIAC but fit on your fingertip. Image credit: Wikimedia Commons(6)

The 4004 could execute 92,000 instructions per second. Your phone's processor executes roughly 17 trillion. But the architecture is fundamentally the same: fetch, decode, execute, store. The principles haven't changed — only the scale.

🖥️ The Personal Computer Revolution (1973–1995)

For the first three decades of electronic computing, computers were institutional — owned by governments, universities, and corporations, operated by specialists in white lab coats. The personal computer revolution put computing power directly into the hands of individuals, and it was driven by people who rejected the establishment's vision of what computers were for.

Xerox PARC: The Future That Xerox Gave Away

In 1973, researchers at Xerox PARC (Palo Alto Research Center) built the Alto — a computer with a graphical user interface, a mouse, Ethernet networking, WYSIWYG text editing, and a bitmap display. It was the future, fully formed, a decade ahead of anything else.

Xerox's management didn't understand what they had. The Alto was never commercialized. Instead, the ideas leaked out through demonstrations — most famously to a 24-year-old named Steve Jobs, who visited PARC in December 1979 and immediately understood what he was seeing.

"They showed me really three things. But I was so blinded by the first one I didn't even really see the other two." — Steve Jobs, on seeing the GUI at Xerox PARC

Apple, IBM, and the Democratization of Computing

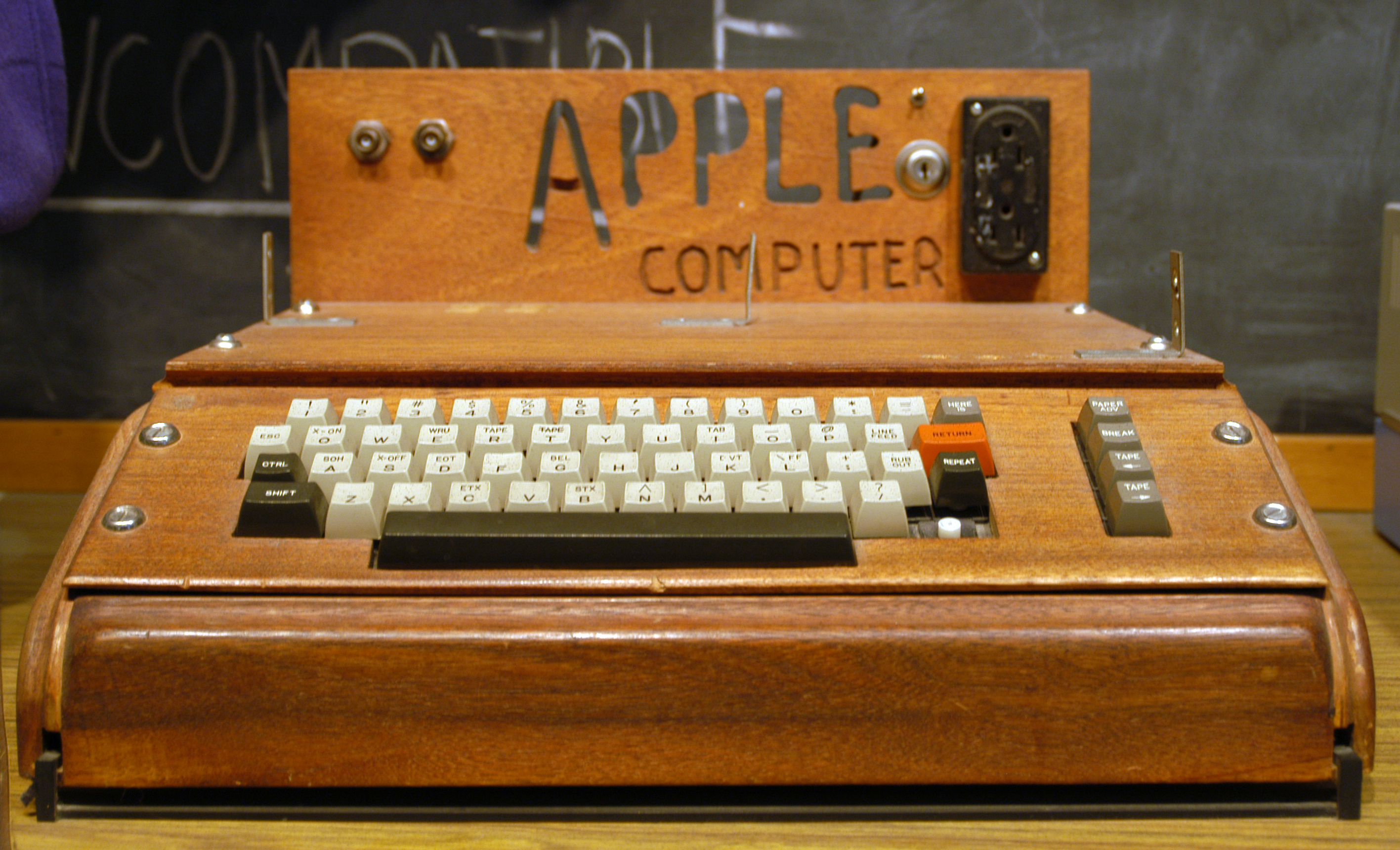

Steve Wozniak and Steve Jobs built the Apple I (1976) in a garage, and the Apple II (1977) became one of the first mass-produced personal computers, selling millions of units. The Apple II's killer app was VisiCalc (1979), the first spreadsheet, which gave business users a reason to buy a personal computer.

IBM entered the PC market in 1981 with the IBM PC, legitimizing personal computing for corporate America. IBM made a fateful decision: they used an open architecture with off-the-shelf components and licensed the operating system from a 25-year-old named Bill Gates. This allowed clones, which created the "IBM-compatible" ecosystem that dominates to this day.

The Apple I (1976), hand-built by Steve Wozniak. Only about 200 units were produced. Today, surviving units sell for over $400,000 at auction. Image credit: Wikimedia Commons(7)

The Macintosh and the GUI Revolution (1984)

The Macintosh (January 24, 1984) brought the graphical user interface to consumers. Behind it was Bill Atkinson, who wrote QuickDraw — the graphics engine that rendered every pixel on the Mac's screen. Atkinson's code was so efficient that it could draw circles, lines, and filled regions faster than dedicated graphics hardware.

Atkinson also created MacPaint and later HyperCard (1987) — one of the first multimedia authoring tools, arguably a precursor to the World Wide Web. HyperCard let non-programmers create interactive applications by linking "cards" together — an idea that anticipated hyperlinks, no-code tools, and even Taskade's workspace-based app builder by decades.

| Milestone | Year | Significance |

|---|---|---|

| Xerox Alto | 1973 | First GUI computer (never commercialized) |

| Apple II | 1977 | One of the first mass-market personal computers |

| VisiCalc | 1979 | First spreadsheet — the original "killer app" |

| IBM PC | 1981 | Made PCs legitimate for business |

| Macintosh | 1984 | Brought GUI to consumers |

| HyperCard | 1987 | First multimedia authoring for non-programmers |

| Windows 3.0 | 1990 | GUI goes mainstream on IBM-compatibles |

| Linux kernel | 1991 | Open-source OS that now runs the world |

| Windows 95 | 1995 | Start menu, taskbar, 1M copies sold in 4 days |

Linux: Linus Torvalds and the Open-Source Revolution (1991)

On August 25, 1991, a 21-year-old Finnish student named Linus Torvalds posted a message to the comp.os.minix newsgroup:

"I'm doing a (free) operating system (just a hobby, won't be big and professional like gnu) for 386(486) AT clones."

That "hobby" became Linux — the operating system kernel that now powers:

- 96.3% of the world's top 1 million web servers

- 100% of the world's top 500 supercomputers

- Every Android phone (3+ billion devices)

- Most cloud infrastructure (AWS, Azure, GCP)

- The majority of IoT devices, routers, and embedded systems

Torvalds's approach — release early, accept contributions from anyone, maintain ruthless quality standards — created the open-source development model that underpins modern software engineering.

🌐 The Internet and the World Wide Web (1969–2000)

The personal computer gave individuals computing power. The internet connected those computers together. The combination changed civilization.

From ARPANET to TCP/IP

┌────────────────────────────────────────────────────┐

│ THE INTERNET TIMELINE │

├──────────┬─────────────────────────────────────────┤

│ 1969 │ ARPANET: first message sent │

│ │ (UCLA → Stanford, "LO" — crashed │

│ │ before completing "LOGIN") │

├──────────┼─────────────────────────────────────────┤

│ 1971 │ First email (Ray Tomlinson, @ symbol) │

├──────────┼─────────────────────────────────────────┤

│ 1973 │ TCP/IP protocol designed │

│ │ (Vint Cerf & Bob Kahn) │

├──────────┼─────────────────────────────────────────┤

│ 1983 │ ARPANET switches to TCP/IP │

│ │ ("Flag Day" — the internet is born) │

├──────────┼─────────────────────────────────────────┤

│ 1989 │ Tim Berners-Lee proposes the Web │

├──────────┼─────────────────────────────────────────┤

│ 1991 │ First website goes live (CERN) │

├──────────┼─────────────────────────────────────────┤

│ 1993 │ Mosaic browser (images + text) │

├──────────┼─────────────────────────────────────────┤

│ 1994 │ Netscape Navigator, Amazon, Yahoo │

├──────────┼─────────────────────────────────────────┤

│ 1995 │ JavaScript created (10 days) │

├──────────┼─────────────────────────────────────────┤

│ 1998 │ Google founded (PageRank algorithm) │

├──────────┼─────────────────────────────────────────┤

│ 2000 │ Dot-com bubble bursts │

└──────────┴─────────────────────────────────────────┘

The first ARPANET message was sent on October 29, 1969, from UCLA to Stanford Research Institute. The intended message was "LOGIN." The system crashed after transmitting "LO." Fitting, perhaps — the first word the internet ever spoke was "Lo," as in "Lo and behold."

Tim Berners-Lee and the World Wide Web

In 1989, Tim Berners-Lee, a physicist at CERN, proposed a system for sharing documents between researchers using hypertext. By 1991, he had built three things that would reshape human communication:

- HTML — a markup language for creating web pages

- HTTP — a protocol for transmitting them

- URLs — a system for addressing them

The first website (info.cern.ch) went live on August 6, 1991. It was a simple page explaining what the World Wide Web was. There was no Google, no CSS, no JavaScript — just blue hyperlinks on a gray background.
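How the three pieces fit together can be shown without touching the network. In the sketch below, the URL path is the commonly cited address of that first page and should be treated as illustrative; the request uses the modern HTTP/1.1 form rather than the simpler 1991 protocol, and the HTML string is a made-up minimal page.

```python
from urllib.parse import urlparse

# URL: says which protocol to use, which host to contact, and which document to ask for.
url = "http://info.cern.ch/hypertext/WWW/TheProject.html"
parts = urlparse(url)
print(parts.scheme)   # http
print(parts.netloc)   # info.cern.ch
print(parts.path)     # /hypertext/WWW/TheProject.html

# HTTP: the plain-text request a client would send to that host.
request = f"GET {parts.path} HTTP/1.1\r\nHost: {parts.netloc}\r\n\r\n"
print(request)

# HTML: the kind of marked-up text the server sends back (hypothetical content).
html = '<h1>World Wide Web</h1>\n<a href="WhatIs.html">hypertext</a>'
print(html)
```

Each layer is plain text with agreed-upon structure, which is a large part of why the web was so easy for others to implement.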

Tim Berners-Lee at CERN, where he invented the World Wide Web in 1989. He chose not to patent it, making the web free for everyone. Image credit: Wikimedia Commons(8)

The Mosaic browser (1993), created by Marc Andreessen at NCSA, added images to web pages and made the web visual. Andreessen co-founded Netscape in 1994 with Jim Clark. Netscape's IPO on August 9, 1995 — stock priced at $28, opened at $71 — ignited the dot-com boom. By 1995, the web was growing at 2,300% per year.

The Platform Era: Google, Amazon, and Cloud Computing

Google (1998) indexed the web and made information findable. Amazon (1994) sold books online, then everything else, then launched Amazon Web Services (2006) — renting computing power by the hour. AWS turned computing into a utility, like electricity. You didn't need a server room; you needed a credit card.

| Platform | Year | Impact |

|---|---|---|

| Amazon | 1994 | E-commerce, then cloud infrastructure |

| Google | 1998 | Search, then ads, then AI |

| AWS | 2006 | Computing becomes a utility |

| iPhone | 2007 | Computing becomes mobile |

| App Store | 2008 | Software distribution democratized |

| GitHub | 2008 | Open-source collaboration at scale |

Cloud computing created a new paradigm: instead of buying hardware, you rented it. Instead of deploying software to desktops, you served it through browsers. This was SaaS (Software as a Service), and it changed how productivity tools work — including how Taskade delivers AI-powered workspaces.

📱 The Mobile and Cloud Era (2007–2019)

On January 9, 2007, Steve Jobs walked onto a stage in San Francisco and said: "Today, Apple is going to reinvent the phone." The iPhone wasn't the first smartphone, but it was the first one that felt like the future.

Within a decade, smartphones had reached billions of people. By 2026, there are over 4.7 billion smartphone owners worldwide, with 7.4 billion mobile connections across the globe.

┌─────────────────────────────────────────────────────┐

│ COMPUTING POWER IN YOUR POCKET │

│ │

│ ┌──────────┐ ┌──────────┐ ┌──────────────────┐ │

│ │ ENIAC │ │ Cray-1 │ │ iPhone 16 │ │

│ │ (1945) │ │ (1976) │ │ (2024) │ │

│ ├──────────┤ ├──────────┤ ├──────────────────┤ │

│ │ 30 tons │ │ 5.5 tons │ │ 170 grams │ │

│ │ 1500 sqft│ │ ~80 sqft │ │ 5.8 inches │ │

│ │ 5K ops/s │ │ 160M │ │ 17T ops/s │ │

│ │ │ │ FLOPS │ │ │ │

│ │ $7.5M │ │ $8.8M │ │ $799 │ │

│ │ (2026$) │ │ (2026$) │ │ │ │

│ └──────────┘ └──────────┘ └──────────────────┘ │

│ │

│ 80 years: 30 tons → 170g, $7.5M → $799 │

│ Performance increase: ~3,400,000,000× │

└─────────────────────────────────────────────────────┘

The mobile era completed the democratization of computing. A farmer in rural India and a Wall Street trader carry essentially the same computer in their pockets. The hardware gap closed. The question shifted from "who has access to computers?" to "who has access to the best software?"

🤖 The AI Era: From Neural Networks to Agents (2012–2026)

Artificial intelligence is not new. The term was coined in 1956. Neural networks were proposed in 1943, and the perceptron, the first learning machine, was built in 1957 by Frank Rosenblatt, a graduate of Bronx High School of Science. Backpropagation was popularized in 1986, building on earlier work. So why did AI suddenly explode in the 2010s?

Three things converged:

- Data: The internet generated unprecedented training data (text, images, video)

- Compute: GPUs (originally for gaming) proved ideal for parallel matrix operations

- Algorithms: Deep learning architectures learned to extract patterns at scale — and the algorithmic complexity that once separated good programmers from great ones became embedded in the platforms themselves

The Deep Learning Breakthrough (2012)

In 2012, Alex Krizhevsky, Ilya Sutskever, and Geoffrey Hinton entered AlexNet into the ImageNet competition, a contest to classify 1.2 million images into 1,000 categories. AlexNet was a deep convolutional neural network trained on GPUs. It won by a landslide, cutting the top-5 error rate by roughly 40% (from about 26% to about 16%).

This result is widely credited with triggering the modern AI boom. Within a few years, every major tech company was investing heavily in deep learning.

Transformers: "Attention Is All You Need" (2017)

The architecture behind today's AI revolution — the transformer — was introduced in a 2017 Google paper titled Attention Is All You Need. The key innovation: self-attention, which lets a model weigh the relevance of every word in a sequence against every other word simultaneously, rather than processing sequentially.
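Self-attention as described above can be sketched in a few lines of plain Python. This is a minimal illustration, not the paper's full architecture: the token embeddings are made up, and the queries, keys, and values are assumed to be the embeddings themselves, whereas a real transformer derives them through learned projection matrices and uses multiple attention heads.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    d_k = len(keys[0])
    all_weights, outputs = [], []
    for q in queries:
        # Score this position against every position in the sequence.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        # The output is the weighted average of all value vectors.
        output = [sum(w * v[i] for w, v in zip(weights, values))
                  for i in range(len(values[0]))]
        all_weights.append(weights)
        outputs.append(output)
    return outputs, all_weights

# Made-up 2-dimensional embeddings for a three-token sequence (illustrative only).
tokens = ["the", "cat", "sat"]
embeddings = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

# Assumption: Q = K = V = embeddings (no learned projection matrices).
outputs, attention = self_attention(embeddings, embeddings, embeddings)

for token, row in zip(tokens, attention):
    print(token, [round(w, 3) for w in row])
```

Each row of `attention` sums to 1 and says how much one token draws on every other token. The rows do not depend on each other, which is why real implementations compute them all at once as matrix multiplications on a GPU instead of looping.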

Figure: The transformer revolution (parameter count, log scale, 2018–2026). Plotted models: GPT-1 (117M), GPT-2 (1.5B), GPT-3 (175B), GPT-4 (~1.8T, est.), GPT-5.

| Model | Year | Parameters | Training Data | Key Capability |
|---|---|---|---|---|
| GPT-1 | 2018 | 117 million | BookCorpus | Basic text generation |
| GPT-2 | 2019 | 1.5 billion | 8M web pages | Coherent paragraphs |
| GPT-3 | 2020 | 175 billion | 45 TB raw Common Crawl text (570 GB after filtering) | Few-shot learning |
| ChatGPT | 2022 | GPT-3.5 based | + RLHF | Conversational AI |
| GPT-4 | 2023 | ~1.8 trillion (est.) | Multimodal | Reasoning, vision |
| Claude 3 | 2024 | Undisclosed | Undisclosed | Long context, safety |
| GPT-5 | 2025 | Undisclosed | Algorithmic efficiency focus | Scientific reasoning |

ChatGPT launched on November 30, 2022, and reached 100 million users in two months, making it the fastest-growing consumer application in history at the time. It showed that natural language could be a mainstream interface to computing.

AI Agents: From Chatbots to Autonomous Workers (2024–2026)

The current frontier isn't AI that answers questions — it's AI that takes action. AI agents can:

- Break complex goals into sub-tasks

- Use tools (search, code execution, APIs)

- Maintain persistent memory across sessions

- Collaborate with other agents

- Build and deploy complete applications from a single prompt
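The first three capabilities in the list above (sub-tasks, tools, memory) can be sketched as a small loop. This is a toy illustration under stated assumptions, not how any particular product works: `plan`, `run_agent`, `TOOLS`, and the `"$prev"` placeholder are hypothetical names, and the planner is hard-coded where a real agent would ask a language model to produce the steps.

```python
from typing import Callable, Dict, List

# Hypothetical tools: plain functions standing in for search, code execution, or API calls.
TOOLS: Dict[str, Callable[[float, float], float]] = {
    "multiply": lambda a, b: a * b,
    "add": lambda a, b: a + b,
}

def plan(goal: str) -> List[dict]:
    """Stand-in planner. A real agent would ask a language model to derive
    these sub-tasks from the goal; here they are hard-coded for one example."""
    return [
        {"tool": "multiply", "args": [3, 4.5]},   # price of 3 items at 4.50 each
        {"tool": "add", "args": ["$prev", 2.0]},  # add 2.00 shipping
    ]

def run_agent(goal: str, memory: List[dict]) -> float:
    """Execute each planned step with a tool and record it in memory."""
    result = 0.0
    for step in plan(goal):
        # "$prev" is a made-up placeholder meaning "the previous step's result".
        args = [result if a == "$prev" else a for a in step["args"]]
        result = TOOLS[step["tool"]](*args)
        memory.append({"goal": goal, "step": step["tool"], "result": result})
    return result

memory: List[dict] = []  # lives outside the call, like memory that persists across sessions
total = run_agent("Total cost of 3 items at 4.50 each plus 2.00 shipping", memory)

print(total)   # 15.5
print(memory)
```

The structure is the point: a goal becomes a list of steps, each step calls a tool, and every result is written to memory that outlives the call. Multi-agent collaboration and deployment are left out of this sketch.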

┌────────────────────────────────────────────────────────────┐

│ THE EVOLUTION OF HUMAN-COMPUTER INTERACTION │

│ │

│ 1940s Rewiring cables │

│ 1950s Punch cards & batch processing │

│ 1960s Command-line terminals │

│ 1970s Text editors & shells │

│ 1984 Graphical user interfaces (mouse + windows) │

│ 1995 Web browsers (hyperlinks + pages) │

│ 2007 Touch screens (gestures + apps) │

│ 2011 Voice assistants (Siri, "Hey Google") │

│ 2022 Chat interfaces (natural language) │

│ 2024 AI agents (autonomous multi-step execution) │

│ 2026 Living software (workspace + agents + auto) │

│ │

│ ──────────────────────────────────────────────────────── │

│ Trend: Each era removes a layer of friction between │

│ human intent and machine execution │

└────────────────────────────────────────────────────────────┘

This is the throughline of the entire history of computing: every generation abstracts away the complexity of the last.

- Babbage abstracted away manual calculation

- Assembly language abstracted away machine code

- Compilers abstracted away assembly

- GUIs abstracted away command lines

- The web abstracted away local software

- Cloud abstracted away hardware

- AI agents abstract away software development itself

🧬 The Complete Timeline: 384 Years of Computing

┌──────────────────────────────────────────────────────────────┐

│ THE COMPLETE COMPUTING TIMELINE │

│ │

│ 1642 ──── Pascaline (mechanical calculator) │

│ 1694 ──── Leibniz Step Reckoner │

│ 1801 ──── Jacquard loom (punched cards) │

│ 1837 ──── Babbage's Analytical Engine │

│ 1843 ──── Ada Lovelace's first algorithm │

│ 1854 ──── Boole's Boolean algebra │

│ 1890 ──── Hollerith tabulator (US Census) │

│ 1936 ──── Turing's "On Computable Numbers" │

│ 1937 ──── Shannon: Boolean logic = circuits │

│ 1943 ──── Colossus (first programmable electronic computer) │

│ 1945 ──── ENIAC (first general-purpose electronic computer) │

│ 1945 ──── Von Neumann architecture │

│ 1947 ──── Transistor invented (Bell Labs) │

│ 1948 ──── Manchester Baby (first stored program) │

│ 1952 ──── Grace Hopper's A-0 compiler │

│ 1958 ──── First integrated circuit (Kilby) │

│ 1965 ──── Moore's Law published │

│ 1969 ──── ARPANET first message / Unix created │

│ 1971 ──── Intel 4004 (first microprocessor) │

│ 1973 ──── Xerox Alto (first GUI computer) │

│ 1976 ──── Apple I │

│ 1981 ──── IBM PC │

│ 1984 ──── Macintosh (GUI for consumers) │

│ 1989 ──── Tim Berners-Lee proposes the Web │

│ 1991 ──── Linux kernel / first website │

│ 1993 ──── Mosaic browser │

│ 1998 ──── Google founded │

│ 2006 ──── Amazon Web Services │

│ 2007 ──── iPhone │

│ 2012 ──── AlexNet (deep learning breakthrough) │

│ 2017 ──── "Attention Is All You Need" (transformers) │

│ 2022 ──── ChatGPT (100M users in 2 months) │

│ 2024 ──── AI agents go mainstream │

│ 2026 ──── Living software: agents + automations + memory │

│ │

└──────────────────────────────────────────────────────────────┘

🔮 From Binary to Living Software: Connecting the Dots

Stand back far enough and the entire history of computing tells one story: the relentless elimination of friction between human intent and machine action.

Pascal wanted to stop making arithmetic errors. Babbage wanted to automate the production of mathematical tables. Turing wanted to define the boundaries of what machines could solve. Shannon wanted to connect logic to physics. Von Neumann wanted to stop rewiring computers between programs. Grace Hopper wanted anyone to be able to program — not just mathematicians.

Each of them removed a layer. And each layer removed made the next removal possible.

Today, we've reached a qualitative threshold. With AI agents and natural language interfaces, the gap between "what you want" and "what the machine does" has collapsed to nearly zero. You don't need to learn binary, assembly, C, Python, or cloud deployment. You describe your intent in plain English, and the system builds, deploys, and maintains the solution.

This is what Taskade Genesis represents — not just another tool in the lineage, but a completion of the arc. The Workspace DNA model — Memory (projects and knowledge), Intelligence (AI agents), Execution (automations) — mirrors the core computing cycle we traced from von Neumann's architecture:

┌────────────────────────────────────────────────────────┐

│ COMPUTING CYCLE → WORKSPACE DNA │

│ │

│ Von Neumann (1945) Taskade Genesis (2026) │

│ ────────────────── ────────────────────── │

│ Memory (RAM/Storage) → Memory (Projects/Knowledge) │

│ CPU (Processing) → Intelligence (AI Agents) │

│ I/O (Input/Output) → Execution (Automations) │

│ │

│ The architecture is the same. │

│ The abstraction level is different. │

│ The user is no longer a programmer. │

│ The user is everyone. │

└────────────────────────────────────────────────────────┘

Babbage would recognize the pattern. Turing would appreciate the universality. Ada Lovelace — who foresaw machines manipulating symbols, composing music, doing "whatever we know how to order it to perform" — would finally see her vision realized. Not as a single engine, but as living software that thinks, remembers, and acts.

📊 The Numbers: Computing's Exponential Journey

| Metric | 1945 (ENIAC) | 1971 (4004) | 1995 (Pentium) | 2007 (iPhone) | 2026 (Today) |
|---|---|---|---|---|---|
| Transistors | 17,468 tubes | 2,300 | 3.1M | 137M | 134B+ (M2 Ultra) |
| Clock speed | 100 kHz | 740 kHz | 75 MHz | 412 MHz | 3.78 GHz |
| Memory | 200 digits | 640 bytes | 8 MB | 128 MB | 192 GB (unified) |
| Storage | None | None | 500 MB HDD | 4 GB flash | 8 TB SSD |
| Power | 150 kW | 1 W | 15 W | 1 W | 5 W (mobile) |
| Cost (2026$) | ~$7.5M | ~$200 | ~$3,000 | ~$600 | $799 |
| Internet users | 0 | 0 | 16M | 1.2B | 5.5B |
| AI capability | None | None | None | Basic ML | Autonomous agents |

The pattern is unmistakable: transistor counts, memory, and storage grow by orders of magnitude from one era to the next, while the cost of a given amount of compute drops to a fraction. This isn't luck. It's the compounding effect of abstraction: each layer makes building the next layer cheaper and faster.

⚡️ What Comes Next: The Post-Computing Era

We are living through the transition from computing (humans instruct machines) to cognition (machines understand intent). The milestones ahead:

- Quantum computing: Solving problems intractable for classical computers (drug discovery, cryptography, optimization)

- Neuromorphic chips: Hardware that mimics brain architecture (event-driven, ultra-low power)

- Embodied AI: Agents that interact with the physical world through robotics

- Artificial general intelligence (AGI): Systems that match human cognitive flexibility — the question is when, not if

- Living software: Applications that evolve, learn, and improve autonomously — not static codebases but dynamic systems with embedded intelligence

The gap between "idea" and "execution" is closing. Half a century ago, turning an idea into software required years of engineering. A decade ago, it required months. Today, with Taskade Genesis, it requires one prompt, and the application comes alive with AI agents, automations, and 100+ integrations built in.

🐑 Before you go... Ready to experience the latest evolution in computing — AI-powered workspaces that build, think, and automate?

- 🤖 AI Agents: Deploy autonomous AI teammates with custom tools, persistent memory, and multi-agent collaboration. 22+ built-in tools, slash commands, and public embedding.

- ⚡ Genesis App Builder: Describe what you need in plain language. Genesis builds a complete, deployed application, with custom domains, password protection, and live data. No code required.

- 🔄 Workflow Automations: Durable execution, branching/looping/filtering, 100+ integrations. From manual tasks to autonomous workflows.

Want to see what 384 years of computing history leads to? Create a free Taskade account and build your first living application. 👈

💬 Frequently Asked Questions About the History of Computing

Who built the first computer?

There is no single "first computer." Charles Babbage designed the first general-purpose mechanical computer (Analytical Engine, 1837) but never completed it. Konrad Zuse built the Z3 (1941), the first working programmable, fully automatic digital computer. The British Colossus (1943) was the first programmable electronic computer. ENIAC (1945) was the first general-purpose electronic computer. The Manchester Baby (1948) was the first to run a stored program.

Why do computers use binary (0s and 1s)?

Computers use binary because electronic circuits are most reliable when distinguishing between just two states: on and off. Distinguishing between 10 different voltage levels (for decimal) is error-prone due to electrical noise, temperature fluctuations, and signal degradation. Binary gives maximum reliability with minimum complexity, and Claude Shannon proved in 1937 that Boolean logic (which uses two values) maps perfectly to electrical switching circuits.

What is the most important invention in computing history?

The transistor (Bell Labs, 1947) is arguably the most important. It replaced vacuum tubes with a solid-state device that was smaller, faster, cheaper, and vastly more reliable — enabling everything from integrated circuits to microprocessors to the smartphones and AI accelerators we use today. Without the transistor, none of the subsequent advances in computing would have been possible at scale.

How did we go from room-sized computers to smartphones?

Through four waves of miniaturization: (1) vacuum tubes to transistors (1947), making computers desk-sized; (2) transistors to integrated circuits (1958), putting entire circuits on a chip; (3) Moore's Law scaling (1965–present), doubling transistor density every 2 years; (4) system-on-chip (SoC) design, combining CPU, GPU, memory, and radios on a single die. The Apple A18 Pro in an iPhone 16 has 19 billion transistors — more than ENIAC had vacuum tubes by a factor of over one million.

What is the difference between hardware and software?

Hardware is the physical circuitry — transistors, wires, chips, screens. Software is the set of instructions that tells the hardware what to do. Von Neumann's key insight (1945) was that software could be stored in memory alongside data, meaning the same hardware could run any program without being rewired. This separation is what makes modern general-purpose computing possible.

Who was the first programmer?

Ada Lovelace is recognized as the first computer programmer. In 1843, she wrote an algorithm for Charles Babbage's Analytical Engine to compute Bernoulli numbers — the first published algorithm designed for machine execution. She also foresaw that computers could manipulate symbols beyond pure mathematics, anticipating general-purpose computing by a century.

How fast is computing advancing compared to other technologies?

Computing has advanced by more than an order of magnitude per decade in performance per dollar, far faster than any other technology. Between 1945 and 2026, raw compute performance increased by a factor of roughly 200 billion (from ENIAC's 5,000 operations/second to modern GPUs performing 10¹⁵ operations/second). For comparison, car engines have improved roughly 3× in efficiency over the same period, and commercial aircraft speeds have actually decreased since the Concorde was retired.

What is the connection between computing and AI agents?

AI agents represent the latest abstraction layer in computing history. Just as compilers abstracted away machine code and GUIs abstracted away command lines, AI agents abstract away software development itself. An agent can plan, use tools, maintain memory, and execute multi-step workflows autonomously. Platforms like Taskade Genesis combine agents, automations, and workspace intelligence into living software — the logical endpoint of the computing abstraction trajectory.

Will quantum computers replace classical computers?

No. Quantum computers can solve certain types of problems (such as quantum simulation and the factoring problems behind much of today's cryptography) far faster than classical computers, in some cases exponentially, but they are not general replacements. Most everyday computing (web browsing, word processing, AI agent workflows) will continue to run on classical hardware. Quantum and classical computing will coexist, each handling the tasks it is best suited for.

🧬 Build on 384 Years of Computing History

The entire history of computing has been building toward this moment: machines that understand what you need and build it for you. Taskade Genesis is living software — describe an application in natural language, and it deploys with embedded AI agents, workflow automations, and 100+ integrations. From binary to vibe coding — explore ready-made AI apps or build your own.