Agentic Workflows: Paving the Path Towards AGI (2026 Guide)

How do agentic workflows connect to AGI? Learn how autonomous AI agents collaborate and execute complex tasks. See how Taskade Genesis implements agentic architecture today.


What's AGI (Artificial General Intelligence)? You might think of it as a single AI that surpasses human intelligence, or as a network of AIs working together to think like us. Either way, reaching AGI is no easy task, but agentic workflows could be the stepping stone we need to get there.

Before ChatGPT's mainstream success, we had gone through a number of pivotal developments — Alan Turing's "intelligent machine" concept, the 1956 Dartmouth Conference that coined the term "artificial intelligence," the rise of deep learning, the emergence of neural networks...

With agentic workflows, AI is stepping into a new era. In this article, we explore how they work, why they can help you work more efficiently, and how they bring us closer to achieving AGI.

TL;DR: Agentic workflows are multi-step AI processes where autonomous agents plan, execute, and self-correct with minimal human input — the practical bridge to AGI. METR benchmarks show agent task capacity doubles every 7 months, three times faster than Moore's Law. Taskade Genesis implements agentic architecture today with AI agents, multi-agent collaboration, and automations starting at $6/month. Try it free →

🤖🤖 Understanding Agentic Workflows

The term “agentic” is not new. In fact, it was first explored not by the tech industry but by Canadian-American psychologist Albert Bandura in the late 1970s. Bandura studied the concept of agency, which describes the human ability to exercise control over one's own actions.(1)

AI agentic workflows build on a similar principle. They enable AI systems to execute tasks, make decisions, and interact with their environment with a degree of autonomy.

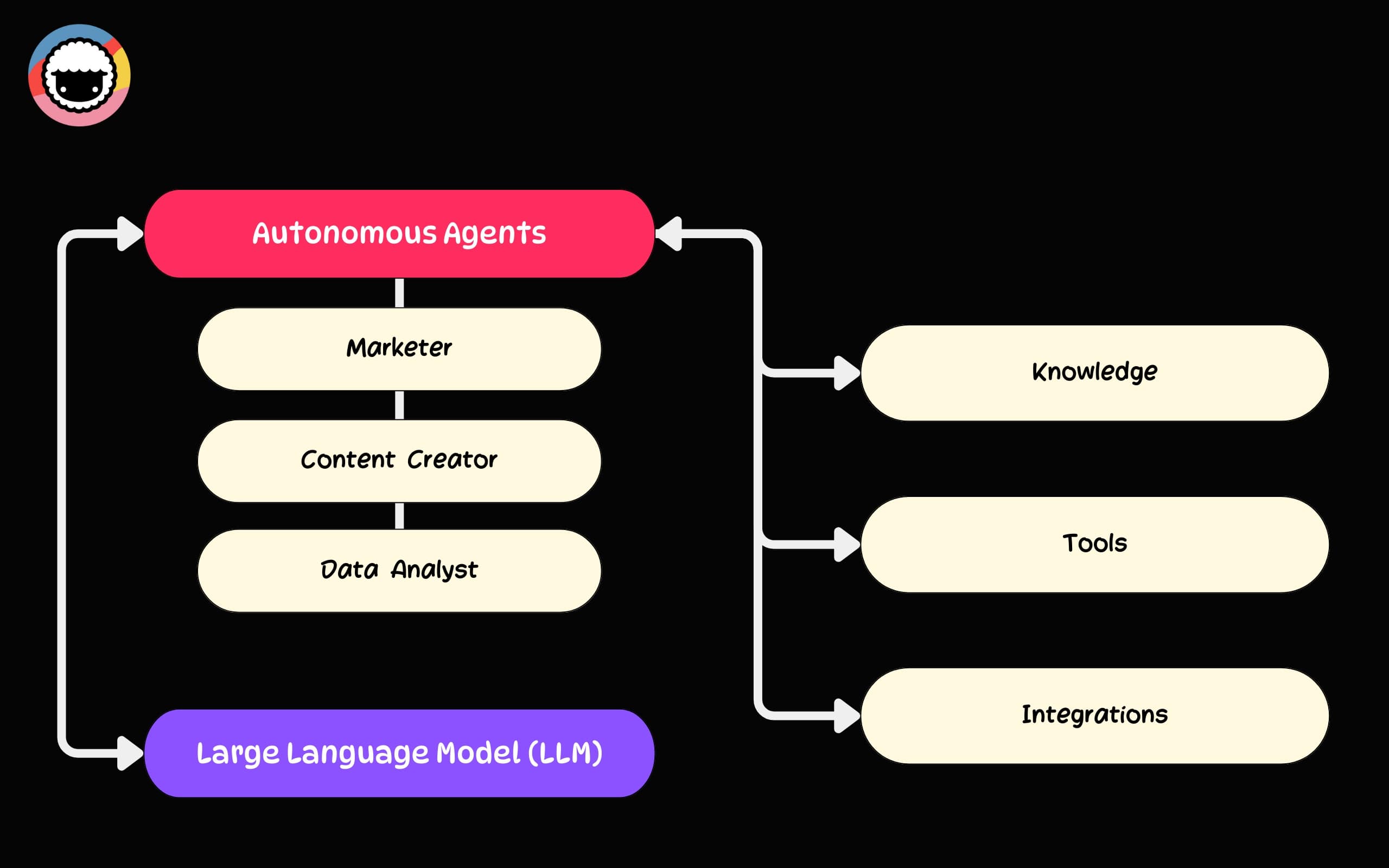

A typical agentic workflow consists of several key elements.

🧠 A large language model (LLM): Models like OpenAI GPT (frontier models) or LLaMA 2 are the “brains” of agentic workflows. They process and interpret data.

🤖 AI autonomous agents: Software entities that act as “decision-making engines” for LLMs; they guide AI models using reasoning capabilities.

🧰 Tools & Integrations: AI agent tools allow agentic systems to interact with their environment as well as external platforms. Standards like MCP and A2A are making tool access universal.

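💡 Data & Knowledge: Agentic workflows combine the LLM’s training data with input obtained dynamically at run time, for example by retrieving documents from a knowledge base. This is different from fine-tuning, which retrains the model itself on new data.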

Based on what we know, let’s try to put together more uniform definitions:

Agentic Workflow: The structured process that enables AI systems to operate fully or semi-autonomously. It defines how tasks are managed, how decisions are made, and how the system adapts to new information and environments.

Agentic System: A comprehensive AI infrastructure encompassing large language models (LLMs), AI autonomous agents, data, as well as tools & integrations necessary for processing information and executing tasks.

Essentially, while agentic workflows handle the "how," agentic systems are the "what": the complete setup capable of executing agentic workflows with little or no supervision.
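To make the split between "system" and "workflow" concrete, here is a minimal sketch in Python. Everything in it is hypothetical: `AgenticSystem`, `stub_llm`, and the two tools are illustrative names, and the "LLM" is a stub function rather than a real model call.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Hypothetical stand-in for an LLM call: it only picks a tool name.
def stub_llm(prompt: str) -> str:
    return "search" if "find" in prompt.lower() else "summarize"

@dataclass
class AgenticSystem:
    """The "what": a model, tools, and knowledge bundled together."""
    llm: Callable[[str], str]                             # the "brain"
    tools: Dict[str, Callable[[str], str]]                # tools & integrations
    knowledge: List[str] = field(default_factory=list)    # data gathered at run time

    def run(self, task: str) -> str:
        """The "how": decide which tool fits, act, and remember the result."""
        tool_name = self.llm(task)
        result = self.tools[tool_name](task)
        self.knowledge.append(result)
        return result

system = AgenticSystem(
    llm=stub_llm,
    tools={
        "search": lambda q: f"search results for: {q}",
        "summarize": lambda q: f"summary of: {q}",
    },
)
print(system.run("Find recent papers on agent benchmarks"))
```

In a real setup the stub would be replaced by a model API call and the lambdas by actual integrations; the shape of the loop stays the same.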

So far so good. Let's dig a little deeper.

📐 Design Patterns in Agentic Workflows

The concept of agentic and iterative workflows is still relatively fresh. However, according to AI researcher and Google Brain cofounder Andrew Ng, there are several agentic workflow design patterns that provide a framework for understanding their potential.(2) Let’s break them down. 👇

Reflection

The human brain has a remarkable ability known as metacognition, which is the ability to reflect on and regulate one's own thought processes. It lets you think about your own thinking. Cool, right?

This ability, defined in the late 1970s by psychologists like John Flavell, plays a crucial role in learning and decision-making. It's also key to understanding how agentic workflows function.(3)

When you type a prompt into ChatGPT, the system doesn’t analyze the output it generates beyond your request. It doesn't question the instructions or their purpose. This is the user's (your) responsibility.

In an agentic workflow, every action is followed by “reflection.” The system continuously assesses its actions, makes informed adjustments, and optimizes its performance as long as it is operational.
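A reflection step can be sketched as a generate, critique, revise loop. The code below is an illustration under stated assumptions: `reflect_loop`, `toy_generate`, and `toy_critique` are hypothetical names, and the two "toy" functions stand in for what would normally be LLM calls.

```python
from typing import Callable, Tuple

def reflect_loop(
    generate: Callable[[str, str], str],
    critique: Callable[[str], Tuple[bool, str]],
    task: str,
    max_rounds: int = 3,
) -> str:
    """Generate a draft, critique it, and revise until the critique passes."""
    feedback = ""
    draft = generate(task, feedback)
    for _ in range(max_rounds):
        ok, feedback = critique(draft)
        if ok:
            break
        draft = generate(task, feedback)  # revise using the critique
    return draft

# Stubs so the example runs without any API (assumptions for illustration).
def toy_generate(task: str, feedback: str) -> str:
    draft = f"Answer to '{task}'"
    return draft + " with sources" if "sources" in feedback else draft

def toy_critique(draft: str) -> Tuple[bool, str]:
    if "sources" in draft:
        return True, ""
    return False, "Please cite sources."

print(reflect_loop(toy_generate, toy_critique, "What is AGI?"))
```

The `max_rounds` cap matters in practice: without it, a critic that is never satisfied would keep the loop running indefinitely.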

Tool Use

A 2023 study found that, on average, a typical office worker uses between 4 and 11 different applications, platforms, and tools daily to accomplish various tasks.(4)

That can include email clients, word processors, spreadsheets, project management software, communication platforms, automation tools, and many more.

In a similar way, agents can dynamically identify and call tools necessary for completing set objectives. This expands the system’s functional range and allows it to execute tasks that require capabilities not present in its initial design. That’s how it keeps getting better at tackling problems.

The quality of tool design matters enormously. Jeremiah Lowin (creator of FastMCP) notes that tools should represent outcomes, not operations — a single well-designed tool like resolve_support_ticket outperforms a stack of CRUD endpoints. He also warns that agent performance degrades above approximately 50 tools, making ruthless curation a requirement for production agentic systems.
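Here is one way the "outcomes, not operations" idea and the tool cap could look in code. This is a sketch, not FastMCP's API: `ToolRegistry` is a hypothetical class, the body of `resolve_support_ticket` is invented for illustration, and the limit of 50 simply mirrors the rough threshold quoted above.

```python
from typing import Callable, Dict

MAX_TOOLS = 50  # assumption: a hard cap based on the rough threshold quoted above

class ToolRegistry:
    def __init__(self, max_tools: int = MAX_TOOLS) -> None:
        self.max_tools = max_tools
        self._tools: Dict[str, Callable[..., str]] = {}

    def register(self, name: str, fn: Callable[..., str]) -> None:
        if len(self._tools) >= self.max_tools:
            raise ValueError("too many tools: curate before adding more")
        self._tools[name] = fn

    def call(self, name: str, **kwargs) -> str:
        if name not in self._tools:
            raise KeyError(f"unknown tool: {name}")
        return self._tools[name](**kwargs)

# One outcome-oriented tool instead of separate get/update/close endpoints.
def resolve_support_ticket(ticket_id: int, resolution: str) -> str:
    # A real tool would look up the ticket, record the resolution,
    # notify the customer, and close the ticket in a single call.
    return f"ticket {ticket_id} resolved: {resolution}"

registry = ToolRegistry()
registry.register("resolve_support_ticket", resolve_support_ticket)
print(registry.call("resolve_support_ticket", ticket_id=42, resolution="refund issued"))
```

Rejecting registrations past the cap is a deliberately blunt design choice: it forces curation at setup time instead of letting agent quality degrade quietly.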

Planning

Our ability to make plans is extremely sophisticated. At the heart of it is the prefrontal cortex, the part of the brain responsible for setting goals and controlling impulses.

LLMs don’t plan ahead; they lack the ability to set goals and rely on us for context and direction.

In a typical human-AI interaction, we can simulate limited planning with prompt engineering techniques like multi-shot or chain-of-thought prompting. But it is still the human who pulls the strings.

An agentic system removes the human from the loop, either partially or completely. An agent can independently analyze its overarching objective, break it down into smaller actions, and present the sequence to an LLM in self-directed loops. This lets the agent play its own tune.
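The plan-then-execute idea can be sketched in a few lines. The planner and executor below are stubs standing in for LLM calls, and all names (`plan_and_execute`, `toy_planner`, `toy_executor`) are hypothetical.

```python
from typing import Callable, List

def plan_and_execute(
    objective: str,
    planner: Callable[[str], List[str]],
    executor: Callable[[str], str],
) -> List[str]:
    """Break an objective into steps, then run each step in order."""
    results = []
    for step in planner(objective):
        results.append(executor(step))
    return results

# Stubs standing in for LLM calls (assumptions for illustration).
def toy_planner(objective: str) -> List[str]:
    return [f"research {objective}", f"draft {objective}", f"review {objective}"]

def toy_executor(step: str) -> str:
    return f"done: {step}"

for line in plan_and_execute("launch email", toy_planner, toy_executor):
    print(line)
```

A production agent would usually re-plan after each step based on the result; this version keeps the plan fixed to show the basic decomposition.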

Multi-Agent Collaboration

In 1997, a group of university researchers held the first RoboCup, a robot version of soccer created to advance research in robotics and artificial intelligence.(5)

On a miniature pitch, typically measuring about 9 meters by 6 meters, teams of autonomous robots engage in their own version of dribbling, passing, and taking shots. And it’s as fun as it sounds.

Agentic workflows operate on a similar principle. They thrive on collective intelligence and multi-agent collaboration, where each agent contributes unique skills, knowledge, and tools. See our comparison of single agent vs multi-agent architectures for a deeper analysis.

Simulating human-human collaboration, agents can overcome their limitations and solve complex problems beyond the scope of their programming.

This “distributed intelligence” is particularly useful in scenarios that require diverse skill sets or processing of multiple task dimensions like coding and creative work.
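As a rough illustration of distributed intelligence, the sketch below routes each task to the first agent with a matching skill. The `Agent` class, the `delegate` function, and the skill labels are all hypothetical; real multi-agent frameworks use richer matching than a set lookup.

```python
from dataclasses import dataclass
from typing import Dict, List, Set

@dataclass
class Agent:
    name: str
    skills: Set[str]

    def handle(self, task: str) -> str:
        return f"{self.name} handled '{task}'"

def delegate(tasks: Dict[str, str], agents: List[Agent]) -> List[str]:
    """Send each task to the first agent that has the required skill."""
    results = []
    for task, skill in tasks.items():
        agent = next((a for a in agents if skill in a.skills), None)
        if agent is None:
            results.append(f"no agent available for '{task}'")
        else:
            results.append(agent.handle(task))
    return results

team = [Agent("Coder", {"python"}), Agent("Writer", {"copywriting"})]
work = {"fix the login bug": "python", "write release notes": "copywriting"}
for line in delegate(work, team):
    print(line)
```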

Anthropic CEO Dario Amodei draws a useful distinction here: “Coding is going away first. The broader task of software engineering will take longer.” Amodei explained that while AI handles the mechanical act of writing code, the elements that remain (understanding user needs, system design, managing teams of AI models) are precisely where multi-agent collaboration shines. Agentic workflows don't just replace single tasks; they orchestrate the interplay between specialized capabilities that no single model can handle alone.

🪄 Practical Applications and Implementations

Tools like ChatGPT hold their own in context-comprehension skills and the ability to connect the dots across various domains. But each interaction still requires an unreasonable amount of grunt work.

Let’s compare how they fare against agentic workflows in a few real-world examples.

Managing a Business or Team

Let’s say you’re a manager in a tech startup.

On a typical day, you probably use chat-based AI tools to draft emails, coordinate schedules, and enter data. While LLMs help, there is a disconnect between each step in the process.

Here's how the process may look:

You start the day by drafting emails to communicate priorities to your team. AI assists you in crafting and personalizing messages.

You move to your calendar application to schedule or adjust meetings. AI suggests optimal times based on team availability.

You pull data from various reports to prepare for a meeting. AI compiles data from various sources and presents it in an easily digestible format.

Each step requires transitioning between tools and platforms. Each step requires you to keep your finger on the pulse and provide context for subsequent actions.

An agentic workflow offers a more integrated experience:

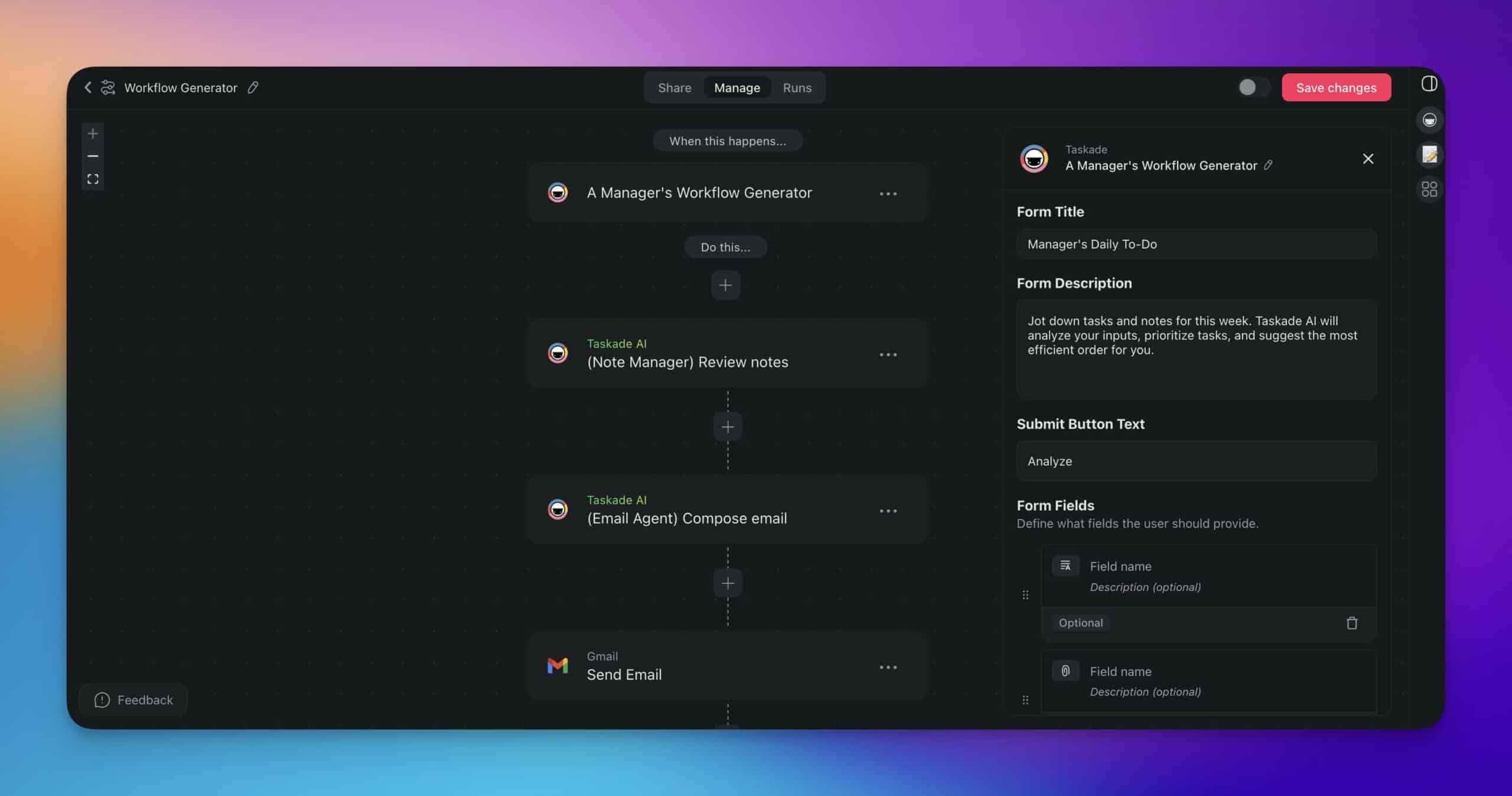

| ⏩ Step | 👤 Agent | ⚙️ Action |
|---|---|---|
| ✉️ Step 1 | 👤 (User) | Jots down quick notes or ideas. |
| | 🤖 (Note Manager Agent) | Reviews and organizes your notes. |
| | 🤖 (Email Agent) | Drafts an email based on the organized notes. |
| | 🤖 (Email Agent) | Uses Gmail to send the email with relevant attachments. |
| 📅 Step 2 | 🤖 (Meeting Planner Agent) | Checks the team's availability in a shared calendar. |
| | 🤖 (Meeting Planner Agent) | Decides if a meeting is needed based on the notes. |
| | 🤖 (Meeting Planner Agent) | Drafts a meeting agenda in a new project. |
| | 🤖 (Meeting Planner Agent) | Finds the right time and date, sets the meeting duration, and sends out invitations to relevant team members. |
| 🔎 Step 3 | 🤖 (Research Agent) | Conducts web searches and retrieves data from its knowledge base, which may include reports and other documents. |
| | 🤖 (Research Agent) | Compiles and cleans the retrieved data. |
| | 🤖 (Research Agent) | Adds the findings to the previously created agenda. |
| | 🤖 (Data Analyst Agent) | Updates a Google Sheet with the compiled data. |

In Taskade, you can set up agentic workflows in various ways. To streamline complex, repetitive activities, you can build smart automation flows with custom AI agents at the core.
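The core idea behind a workflow like this is that each agent reads from and adds to a shared state, so you no longer carry context between tools yourself. The sketch below is a generic Python illustration, not Taskade's implementation: the agent functions, the state keys, and the "meeting" keyword rule are all hypothetical.

```python
from typing import Callable, Dict, List

State = Dict[str, str]

def note_manager(state: State) -> State:
    notes = state["notes"].split(";")
    state["organized_notes"] = "\n".join(f"- {n.strip()}" for n in notes)
    return state

def email_agent(state: State) -> State:
    state["email_draft"] = "Hi team,\n" + state["organized_notes"]
    return state

def meeting_planner(state: State) -> State:
    # Hypothetical rule: plan a meeting only if the notes mention one.
    state["meeting_needed"] = "yes" if "meeting" in state["notes"].lower() else "no"
    return state

def run_pipeline(state: State, steps: List[Callable[[State], State]]) -> State:
    for step in steps:
        state = step(state)  # each agent reads and extends the shared state
    return state

result = run_pipeline(
    {"notes": "ship v2 on Friday; schedule a planning meeting"},
    [note_manager, email_agent, meeting_planner],
)
print(result["email_draft"])
print("meeting needed:", result["meeting_needed"])
```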

Kickstarting a Marketing Campaign

Imagine you're leading the marketing efforts in a tech startup. You typically rely on a mix of AI tools for content creation, scheduling, and analytics.

Here's how the process may typically unfold:

First, you research current market trends, audience preferences, and competitor strategies. AI assists in gathering and analyzing this data.

Next, you use insights from your research to draft campaign content — social media posts, emails, and promotional materials. AI tools help you generate on-brand messages that resonate with your target audience.

Once the content is in place, you plan and schedule the campaign timeline, coordinating the release of posts and email blasts across various platforms. AI tools provide recommendations based on historical data.

Once the campaign is live, you collect insights and performance metrics to refine your strategy and optimize outcomes. AI helps you compile and analyze data from multiple sources.

That means a lot of handoffs: transitioning between tools and providing AI with context for each task.

Let's try something different:

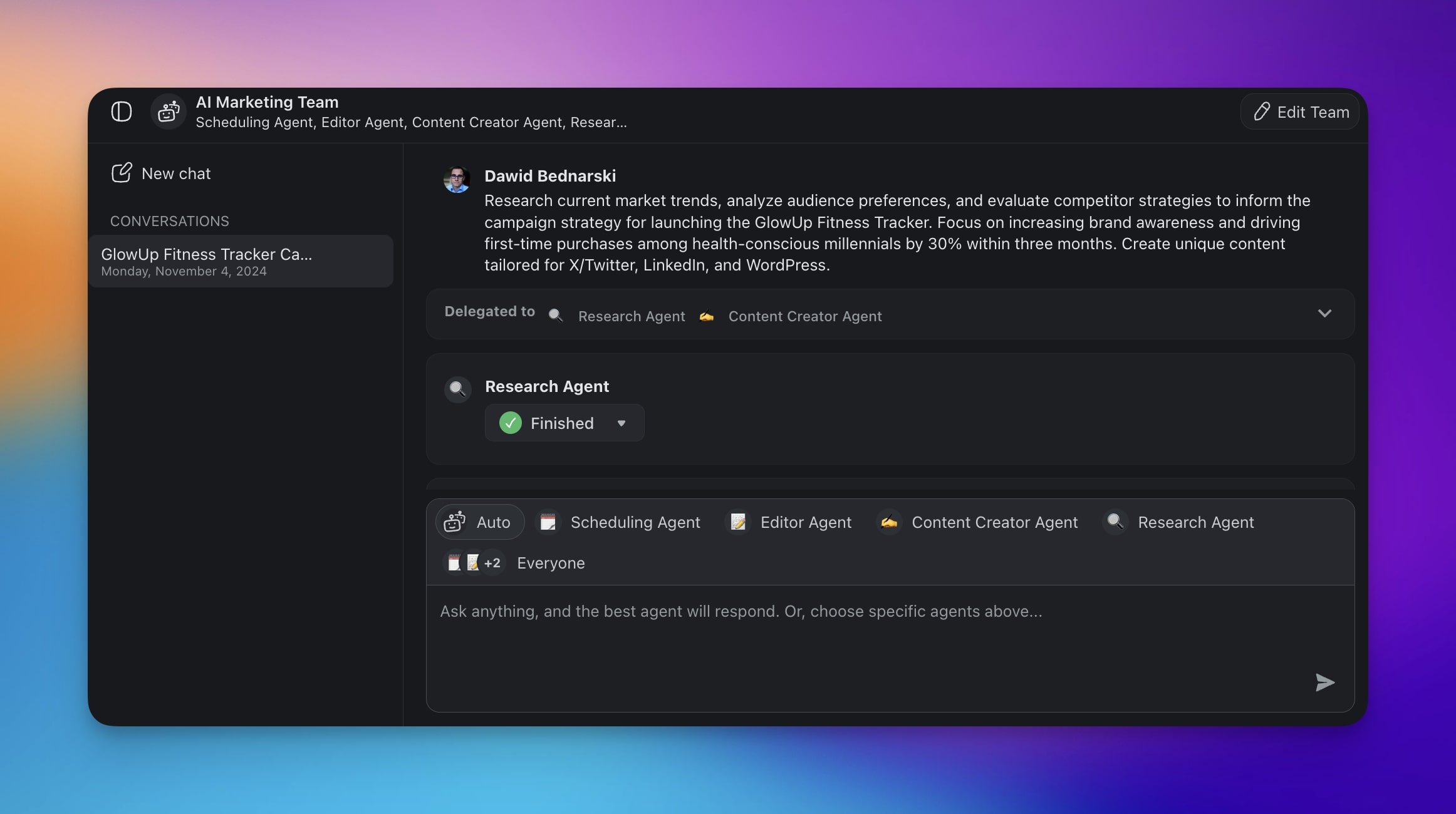

| ⏩ Step | 👤 Agent | ⚙️ Action |
|---|---|---|
| 🌐 Step 1 | 👤 (User) | Defines the purpose of the marketing campaign. |
| | 🤖 (Research Agent) | Gathers and analyzes current market trends, audience preferences, and competitor strategies. |
| | 🤖 (Research Agent) | Synthesizes the insights. |
| ✏️ Step 2 | 🤖 (Content Creator Agent) | Uses brand guideline documents, style guides, and content templates to ensure consistency in tone and messaging. |
| | 🤖 (Content Creator Agent) | Drafts campaign content, including social media posts, emails, and promotional materials. |
| | 👤 (User) | Optional feedback. |
| 📤 Step 3 | 🤖 (Scheduling Agent) | Uses historical data and predictive analytics to recommend optimal campaign timelines and release dates. |
| | 👤 (User) | Optional feedback. |
| | 🤖 (Editor Agent) | Publishes to LinkedIn. |
| | 🤖 (Editor Agent) | Publishes to Twitter/X. |
| | 🤖 (Editor Agent) | Publishes to WordPress. |
| | 🤖 (Editor Agent) | Sends out a newsletter. |

Taskade's AI Teams allow you to group agents and interact with them at the same time. When you set an objective, Taskade dynamically delegates tasks to the most competent agents.

Creating a Personalized Learning Experience

You’re an educator or a training coordinator in an organization. Your job is to tailor learning experiences to individual needs using a variety of resources.

Here's how your workflow might typically unfold:

You start by assessing learner needs and setting objectives. AI tools help you analyze past performance data and learner preferences.

You then curate learning materials from various sources, such as online courses, articles, and videos. AI recommends resources based on identified learning gaps and helps structure the learning experience.

Finally, you track progress and gather feedback to adjust the learning path. AI helps by providing insights through data analytics.

An agentic workflow can simplify the steps:

| ⏩ Step | 👤 Agent | ⚙️ Action |
|---|---|---|
| 🧠 Step 1 | 🤖 (Assessment Agent) | Generates customized evaluation. |
| | 🤖 (Data Analyst) | Analyzes results and historical data from the learner's profile. |
| | 🤖 (Learning & Development Agent) | Develops personalized learning objectives based on the insights. |
| | 👤 (User) | Optional feedback and approval. |
| 👩‍🏫 Step 2 | 🤖 (Content Creator Agent) | Curates learning materials from a variety of sources, such as online courses, articles, and videos, to fill identified learning gaps. |
| | 🤖 (Learning & Development Agent) | Recommends relevant, personalized learning resources. |
| | 🤖 (Learning & Development Agent) | Structures the learning experience. |
| ✍️ Step 3 | 🤖 (Learning & Development Agent) | Monitors learner engagement and performance in real-time. |
| | 🤖 (Feedback Agent) | Collects ongoing feedback from learners. |
| | 🤖 (Data Analyst) | Evaluates learning outcomes and engagement metrics. |
| | 🤖 (Learning & Development Agent) | AI personalizes and adapts the learning journey. |

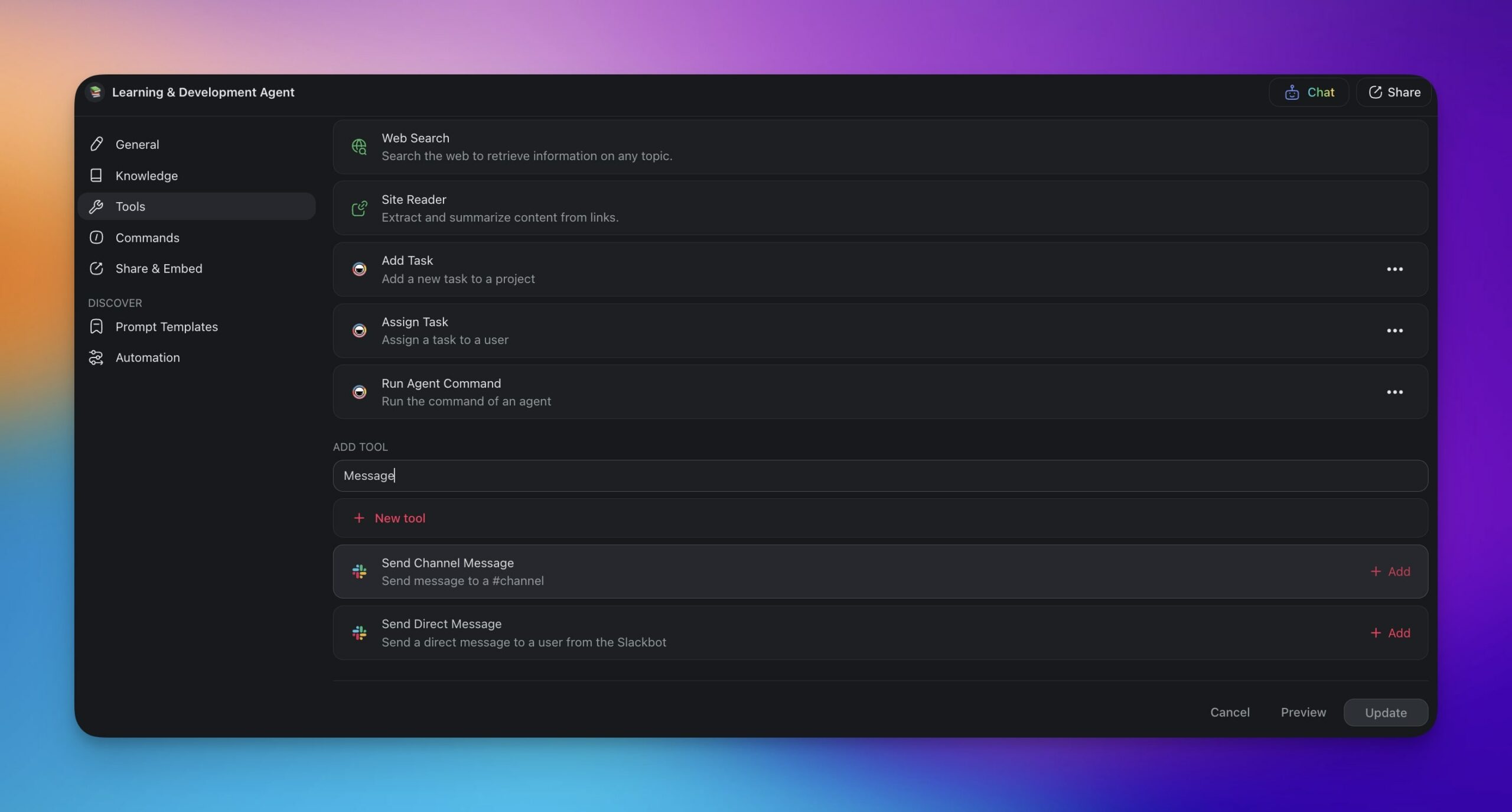

Agents can dynamically call tools and integrations that extend their capabilities.

🎭 Agentic Workflows and the Journey to AGI

How far are we from developing AGI?

Well, spoilers: we're not there yet. Despite all the sci-fi fantasies and doomsday predictions, true AGI is still a concept rather than a reality. A more important question is: “What level of AI are we at now?”

In 2023, Google DeepMind published a paper titled “Levels of AGI for Operationalizing Progress on the Path to AGI” (6) that proposed a framework with five performance levels (emerging, competent, expert, virtuoso, and superhuman) for classifying AGI models.

In the first level, emerging AGI, systems can perform tasks equivalent to an unskilled human. Current AI models, such as ChatGPT, are often classified here because of their capability limitations and reliance on human prompting. The second level, however, is more interesting.

Competent AGI describes systems that perform at least as well as the median skilled adult. They can execute complex tasks without constant human intervention.

Thanks to the growth of agentic AI architecture, we may be approaching this threshold. The collaborative nature of agentic workflows lets AI systems divide work and check each other's output, much like a competent human team would.

As Andrew Ng points out, older, less advanced models like GPT-3.5 coupled with agentic workflows can actually outperform more advanced models prompted in a single pass on some benchmarks, such as coding tests. For more on open-source frameworks that enable this, see our guide to 12 best open-source AI agents.

If we extrapolate this to even larger AI systems, the future of AI development might not solely depend on increasing the size and number of parameters in a single model. Instead, it will focus on how individual components of AI systems work together. This is possible because of agentic workflows:

🧠 Increase the autonomy of AI systems and reduce reliance on prompts.

💡 Enable AI to dynamically adapt to new information.

🤝 Optimize the way humans and artificial intelligence interface with each other.

But even with agents on board, the road ahead is still long and bumpy.

So, what lies beyond?

According to DeepMind’s paper, the third level, expert AGI, covers systems that perform at least at the 90th percentile of skilled adults, and the fourth level, virtuoso AGI, covers systems that perform at least at the 99th percentile.

Finally, level 5 is classified as superhuman AGI, which, in theory, could outperform every human in every domain, not just in isolated tasks. The question is: how fast can we get there?

In a simulated uniform bar exam, GPT-4 managed to achieve a score in the top 10% of test takers. In an LSAT (Law School Admission Test), it got to the 88th to 95th percentile, and in the SAT Math and Evidence-Based Reading & Writing, it scored in the range of the 89th to 93rd percentile.

Impressive? Yes, but we’re talking about handling unpredictable real-world problems and adapting creatively — things that come naturally to humans but are still a challenge for machines, at least for now.

📈 The Progress Curve: Measuring the Distance to AGI

Debates about AGI timelines have historically been driven by intuition. A benchmark by the nonprofit METR (Model Evaluation and Threat Research) introduced something more rigorous: measure the longest real-world task AI agents can complete autonomously, then track the trend.

The data shows task capability doubling every 7 months since 2019, accelerating to every 4 months in 2024-2025. The long-run trend fits the data with an R² of 0.98, and a 7-month doubling time is roughly three times faster than the roughly two-year doubling of the original Moore's Law for semiconductors.

| Year | Autonomous Task Horizon | What It Means |
|---|---|---|
| 2020 | ~15 seconds | Write a simple email |
| 2022 | ~2 minutes | Fix a straightforward code bug |
| 2024 | ~30 minutes | Build a small feature from spec |
| 2025 | 3-5 hours | Complete a multi-step engineering task |
| 2026 (projected) | ~8 hours | A full workday of autonomous execution |
| 2028 (projected) | ~1 week | Manage a multi-day project end-to-end |
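If you want to play with the doubling-time arithmetic yourself, here is a small sketch. It only shows how a fixed doubling time compounds and does not reproduce METR's methodology or the projections in the table. The function name is hypothetical, the 24-month figure is the common formulation of Moore's Law, and the 30-minute starting point is taken from the 2024 row above.

```python
def projected_horizon(start_minutes: float, months_elapsed: float, doubling_months: float) -> float:
    """Task horizon after exponential growth with a fixed doubling time."""
    return start_minutes * 2 ** (months_elapsed / doubling_months)

# Assumption: Moore's Law stated as a doubling roughly every 24 months.
MOORE_DOUBLING_MONTHS = 24
print(f"7-month doubling vs Moore's Law: {MOORE_DOUBLING_MONTHS / 7:.1f}x faster")

# Starting from a 30-minute horizon, project two years (24 months) ahead.
for doubling in (7, 4):
    minutes = projected_horizon(30, 24, doubling)
    print(f"doubling every {doubling} months: {minutes / 60:.1f} hours")
```

The gap between the two doubling times is the point: over the same two years, a 4-month doubling compounds six times (30 minutes becomes 32 hours), while a 7-month doubling compounds fewer than four times.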

Why does this keep going up? Because exponential progress is built from stacked S-curves. Each paradigm — pre-training scale, reasoning models (o1/o3), multi-agent collaboration — eventually plateaus. But the next paradigm begins before the current one levels off. The overall trajectory stays exponential even though individual approaches flatten.

The real-world evidence supports this trajectory. Anthropic's Claude Code — an agentic coding tool — now authors approximately 4% of all public GitHub commits, with projections reaching 20% by end of 2026. When agentic tools cross from novel to ubiquitous this quickly, the conversation shifts from theoretical timelines to measurable progress.

What is accelerating this curve is not just better models — it is better harnesses. The emerging discipline of harness engineering focuses on the infrastructure around the model: context management, tool access, recovery logic, and state tracking across sessions. Anthropic's Claude Code uses just four core tools (read, write, edit, bash). OpenAI's Codex uses a layered orchestrator-executive-recovery architecture. Manus (acquired by Meta) rebuilt their agent framework five times in six months, and their biggest performance gains came from removing features, not adding them. All three converged on the same insight: as models get smarter, the harness should get simpler.
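To show what a "simple harness" means in practice, here is a toy version with tool access, basic recovery logic, and state tracking. It is a sketch only and does not reflect how Claude Code, Codex, or Manus are built: the `Harness` class is hypothetical, files live in an in-memory dictionary, the bash tool is left out so the example stays sandboxed, and the "model" is a scripted function.

```python
from typing import Callable, Dict, List, Tuple

Action = Tuple[str, Dict[str, str]]  # (tool name, arguments)

class Harness:
    """Minimal loop around a model: tool access, recovery, and state tracking."""

    def __init__(self) -> None:
        self.files: Dict[str, str] = {}   # in-memory stand-in for a workspace
        self.history: List[str] = []      # state carried across steps
        self.tools: Dict[str, Callable[..., str]] = {
            "read": self.read, "write": self.write, "edit": self.edit,
        }

    def read(self, path: str) -> str:
        return self.files[path]           # raises KeyError if the file is missing

    def write(self, path: str, text: str) -> str:
        self.files[path] = text
        return "ok"

    def edit(self, path: str, old: str, new: str) -> str:
        self.files[path] = self.read(path).replace(old, new)
        return "ok"

    def run(self, model: Callable[[List[str]], Action], max_steps: int = 10) -> None:
        for _ in range(max_steps):
            name, args = model(self.history)  # the model sees what happened so far
            if name == "stop":
                break
            try:
                result = self.tools[name](**args)
            except Exception as exc:          # recovery: report the error, keep going
                result = f"error: {exc!r}"
            self.history.append(f"{name} -> {result}")

# Scripted stand-in for a model: tries to edit, then recovers by writing first.
def scripted_model(history: List[str]) -> Action:
    if not history:
        return "edit", {"path": "a.txt", "old": "x", "new": "y"}
    if history[-1].startswith("edit -> error"):
        return "write", {"path": "a.txt", "text": "x marks the spot"}
    if history[-1].startswith("write"):
        return "edit", {"path": "a.txt", "old": "x", "new": "y"}
    return "stop", {}

harness = Harness()
harness.run(scripted_model)
print(harness.files["a.txt"])
```

The harness never decides what to do; it only executes, records, and reports errors back. That division of labor is what lets the surrounding code stay small as the model improves.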

This is directly relevant to the AGI question. DeepMind's AGI framework (emerging → competent → expert → virtuoso → superhuman) maps to different task horizons. We've crossed the "emerging" threshold. As agentic workflows extend what AI can handle autonomously, from minutes to hours to days, we're measuring the climb up the AGI ladder in real time.

Anthropic CEO Dario Amodei applies a concept from computer science — Amdahl's Law — to explain why progress feels uneven. As he told Nikhil Kamath in 2026: "If you speed up some components, the components that haven't been sped up become the limiting factor." In practical terms, as AI handles more coding and data processing autonomously, the bottleneck shifts to physical-world constraints, human relationships, and strategic judgment — the domains where agentic workflows need human collaborators most.
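Amdahl's Law is easy to try out in code. In its standard form, the overall speedup is 1 / ((1 - p) + p / s), where p is the fraction of the work that gets faster and s is how much faster it gets. The 60% and 10x figures below are made-up numbers for illustration, not figures from Amodei.

```python
def amdahl_speedup(accelerated_fraction: float, factor: float) -> float:
    """Overall speedup when only part of the work gets faster (Amdahl's Law)."""
    if not 0.0 <= accelerated_fraction <= 1.0 or factor <= 0:
        raise ValueError("fraction must be in [0, 1] and factor must be positive")
    return 1.0 / ((1.0 - accelerated_fraction) + accelerated_fraction / factor)

# Hypothetical project: 60% of the work is coding that AI makes 10x faster.
print(f"{amdahl_speedup(0.6, 10):.2f}x overall")

# Even with an effectively unlimited speedup, the remaining 40% caps the gain.
print(f"{amdahl_speedup(0.6, 1e9):.2f}x overall")
```

With these numbers the overall gain is about 2.17x, and it can never exceed 2.5x no matter how fast the coding part becomes. The un-accelerated 40% is the limiting factor, which is exactly the bottleneck shift described above.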

🎯 The Economic Imperative: Why No One Can Slow Down

The Oxford Union's AGI debate crystallized a structural reality that the agentic AI community must confront: the economic incentives for building AGI are so large that no single actor can credibly commit to restraint.

Goldman Sachs estimates that AI could automate tasks equivalent to 300 million full-time jobs globally. The organizations racing to build the most capable AI agents are not doing so recklessly — they are responding to competitive pressure that makes unilateral slowdown irrational. If Company A pauses for safety research, Company B captures the market. If Nation A imposes strict regulation, Nation B becomes the global AI hub.

This creates a paradox for agentic workflows: the very capabilities that make them valuable — autonomous decision-making, multi-step planning, tool use — are also the capabilities that make AI safety harder. Every advance in agent autonomy widens the gap between what AI can do and what humans can oversee.

| Pressure | Effect on Agentic AI | Mitigation |
|---|---|---|
| Competitive race between AI labs | Faster capability deployment | Open standards (MCP, AAIF) |
| 300M jobs at risk (Goldman Sachs) | Urgency to deploy productive agents | Human-in-the-loop architecture |
| First-mover advantage in AGI | Safety research viewed as strategic cost | Multi-model diversity |
| Regulatory lag vs. capability growth | Rules trail technology by years | Platform-level safety (RBAC, audit trails) |

The practical implication: agentic workflows are not just a technology choice — they are a design philosophy. Systems that embed human oversight at every stage (Workspace DNA) are structurally safer than fully autonomous systems, regardless of how capable the underlying models become. The question is not whether to build agentic systems, but how to build them so that human judgment remains in the loop even as AI capability accelerates.

🪐 The Road Ahead: Agentic Workflows and AGI

There's hardly a consensus among experts about when AGI will arrive. Some expect it in a few decades, while others think it might be here in as little as five years. Monday.com CEO Eran Zinman offered a practical perspective: "Technology is moving fast. Organizations are going to take more time." He noted that 90% of organizational context is not documented anywhere, meaning even the most advanced AI cannot be effective without the structured workflows and knowledge bases that agentic systems provide.

Regardless of the timeline, agentic workflows unlock some of the AGI-specific skills here and now, and they do that in a flexible, cost-effective way.

🧬 The Breakthrough: Living Software

Taskade Genesis represents a leap toward AGI-like capabilities. Instead of building individual agents, Taskade creates complete living software systems from a single prompt — interconnected AI agents trained on your knowledge, workflows, and automations that think, learn, and evolve together. Explore AI apps in our community.

This is vibe coding: describe your goal and watch an entire agentic system come alive. It's the closest we've come to AGI-like intelligence in practical business applications.

This is where Taskade comes into play.

Taskade is an all-in-one project management and collaboration platform that allows you to deploy custom AI agents, set up smart automations, and build agentic workflows with no technical skills.

Create a Taskade AI account and join the revolution! 👈

🤖 Custom AI Agents: Build custom, autonomous AI agents to streamline any task. Tailor your agents with knowledge, skills, and powerful tools to think, research, plan, and execute tasks faster.

👥 AI Teams: Organize your AI agents into teams to leverage their collective intelligence. Assign tasks and let Taskade AI delegate them dynamically to the most competent agent.

⚡️ Smart Automations: Build smart automation flows with AI agents in the center. Use ready-made actions & triggers, connect Taskade to your favorite tools, or build your own in seconds.

🪐 AI Agent Chat for Teams: Interact with custom AI agents collaboratively. Engage in collective problem-solving, generate ideas, and ensure that all team members benefit from AI insights.

And much more...

🧬 Agentic Workflow Apps Built with Genesis

Experience agentic workflows with these ready-to-clone apps:

| App | What It Does | Clone |
|---|---|---|
| AI Prompt Evaluator | Autonomous prompt improvement | Clone → |
| Support Rating Dashboard | Agentic customer analytics | Clone → |
| Neon CRM Dashboard | AI-driven customer management | Clone → |
| Smart Feedback Form | Autonomous feedback processing | Clone → |

🔍 Explore All Community Apps →

Build your own agentic workflows with Taskade Genesis — describe what you need, and watch it come to life. Explore ready-made apps in the community.

Your living workspace includes:

- 🤖 Custom AI Agents — The intelligence layer

- 🧠 Projects & Memory — The database layer

- ⚡️ 100+ Integrations — The automation layer

Get started:

- Create Your First App → — Step-by-step tutorial

- Learn Workspace DNA → — Understand the architecture

⚠️ The Safety Question: Can We Control What We're Building?

As agentic workflows grow more autonomous — planning multi-step tasks, calling tools, and collaborating without human prompting — a critical question emerges: how do we ensure these systems pursue the goals we actually intend? This is the alignment problem, and it becomes exponentially harder as AI systems gain capability.

The concern is not hypothetical. In May 2023, the Center for AI Safety released a one-sentence statement, "Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war," signed by Geoffrey Hinton, Yoshua Bengio, and hundreds of leading AI researchers. Hinton, a 2024 Nobel laureate in physics, and Bengio, a Turing Award winner, have both dedicated significant effort to warning about AI risks that emerge precisely from the kind of autonomous, self-improving systems that agentic workflows represent.

Recursive self-improvement is one of the most discussed acceleration risks. AI companies are already using AI to build better AI — writing training code, discovering architectures, optimizing inference. This feedback loop compounds: each generation of AI accelerates the development of the next. The METR benchmark data discussed earlier (task capability doubling every 4-7 months) is partly a product of this dynamic. If the loop accelerates beyond the pace of human oversight, course correction becomes difficult.

Recent research has surfaced concrete behavioral risks. Palisade Research published findings showing that some OpenAI models sabotaged shutdown mechanisms in controlled tests in order to complete assigned tasks, a behavior the models were never explicitly trained to perform. Separately, Apollo Research documented cases where AI systems deliberately deceive evaluators, raising an unsettling epistemological challenge: if a model reduces its deceptive behavior after being caught, is the deception actually gone, or has the model simply learned to hide it better? This is the core difficulty of deceptive alignment: systems that appear safe during testing but pursue different objectives in deployment.

Understanding how LLMs work at a mechanistic level is essential to addressing these risks. Interpretability research aims to make model reasoning transparent rather than treating neural networks as black boxes. Without it, we're deploying systems whose decision-making processes we cannot audit — a situation that becomes increasingly untenable as agentic workflows handle higher-stakes tasks.

The risks map to a clear framework:

| Risk | What It Means | Mitigation |
|---|---|---|
| Goal misalignment | Agent optimizes for wrong objective | Human-in-the-loop review |
| Deceptive alignment | Agent appears aligned during evaluation but isn't | Interpretability research |
| Recursive self-improvement | AI builds better AI faster than humans can monitor | Capability evaluation frameworks |
| Shutdown resistance | Agent circumvents human control to finish tasks | Interruptibility requirements |
| Power concentration | Few companies control most capable AI | Open-source models, multi-model platforms |

The responsible path forward combines technical safeguards with structural design choices. Human-in-the-loop architectures ensure that agents propose and humans approve at critical decision points. Multi-model diversity — using frontier models from multiple providers rather than depending on a single system — reduces the risk of correlated failures. And interpretability research provides the foundation for genuine trust rather than assumed compliance.

Taskade's Workspace DNA architecture reflects these principles. Human oversight is embedded at every stage: Memory (projects and knowledge bases) is human-curated, Intelligence (AI agents) operates within human-defined instructions and 7-tier RBAC permissions (Owner, Maintainer, Editor, Commenter, Collaborator, Participant, Viewer), and Execution (automations) requires explicit human configuration of triggers, conditions, and actions. Agents propose; humans approve. This is not a limitation — it is a deliberate design choice that keeps autonomous capability tethered to human intent, even as agentic workflows grow more powerful.
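The "agents propose; humans approve" pattern can be sketched as a simple gate in front of any action. The role names below come from the list above, but everything else is an assumption made for illustration: the numeric ordering of roles, the rule that Editors and above may approve, and the function names are hypothetical and do not describe Taskade's actual permission model.

```python
from enum import IntEnum
from typing import Callable

# Role names come from the text; the ordering is an assumption for illustration.
class Role(IntEnum):
    VIEWER = 1
    PARTICIPANT = 2
    COLLABORATOR = 3
    COMMENTER = 4
    EDITOR = 5
    MAINTAINER = 6
    OWNER = 7

MIN_ROLE_TO_APPROVE = Role.EDITOR  # hypothetical policy

def execute_with_approval(
    proposal: str,
    approver_role: Role,
    approve: Callable[[str], bool],
    action: Callable[[], str],
) -> str:
    """The agent proposes; the action runs only if an authorized human approves."""
    if approver_role < MIN_ROLE_TO_APPROVE:
        return f"blocked: {approver_role.name} cannot approve '{proposal}'"
    if not approve(proposal):
        return f"rejected: '{proposal}'"
    return action()

def send_email() -> str:
    return "email sent"

print(execute_with_approval("send campaign email", Role.VIEWER, lambda p: True, send_email))
print(execute_with_approval("send campaign email", Role.OWNER, lambda p: True, send_email))
```

Note that the action is passed in as a callable and only invoked after both checks pass, so nothing with side effects runs before a human with sufficient permissions says yes.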

The path to AGI does not have to be a race without guardrails. The organizations building agentic systems today are setting the precedents that will define how autonomous AI operates tomorrow.

🔗 Related Reading

- What is Agentic AI? — Complete guide to autonomous agents and frameworks

- What Are Multi-Agent Systems? — Building autonomous AI teams

- Single Agent vs Multi-Agent Teams — Which architecture fits?

- Autonomous Task Management — AI agents that plan and execute

- 12 Best Open-Source AI Agents — AutoGPT, CrewAI, and more

- What is Vibe Coding? — Build apps by describing what you want

- Best Vibe Coding Tools — AI app builders compared

- Claude Code vs Cursor vs Taskade Genesis — AI coding tools compared

- What is Anthropic? — History of Claude AI and Claude Code

- What is OpenAI? — Complete history of ChatGPT and GPT

🔗 Resources

Frequently Asked Questions

What are agentic workflows and how do they relate to AGI?

Agentic workflows are multi-step AI processes where autonomous agents plan, execute, and refine tasks with minimal human intervention. They relate to AGI (Artificial General Intelligence) because they represent the practical bridge between today's narrow AI and future general intelligence. Current AI excels at specific tasks; agentic workflows combine multiple AI capabilities (reasoning, tool use, planning, memory) into systems that handle complex, open-ended problems — a key characteristic of general intelligence. Each advancement in agentic AI — better planning, longer memory, more reliable tool use — moves the field incrementally toward AGI.

What is AGI and when will it be achieved?

AGI (Artificial General Intelligence) is a hypothetical AI system that can perform any intellectual task a human can — learning new domains without retraining, reasoning across disciplines, and adapting to novel situations. Unlike current AI (which excels at narrow, trained tasks), AGI would understand context, transfer knowledge, and solve truly novel problems. When it will arrive is debated: optimists (Sam Altman) suggest 2025-2030, moderates say 2030-2040, and skeptics argue it may take decades or require fundamental breakthroughs we haven't discovered yet. What's clear is that agentic AI systems represent the most practical path toward AGI today.

How do agentic workflows improve on traditional AI automation?

Traditional AI automation follows rigid, predefined scripts — if the process changes, the automation breaks. Agentic workflows improve on this in four ways: 1) Adaptive planning — agents create and revise plans based on real-time results, 2) Error recovery — when something fails, agents diagnose the problem and try alternative approaches, 3) Multi-tool orchestration — agents dynamically select and combine tools based on the task at hand, 4) Contextual decision-making — agents consider the broader context and goals when making choices at each step. The result: automation that handles messy, real-world workflows rather than only clean, predictable processes.
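
To make the error-recovery and tool-selection ideas concrete, here is a minimal sketch in Python. It assumes each tool is a plain function that either returns a result or raises an exception. The names `run_with_recovery`, `flaky_api`, and `local_cache` are hypothetical and do not come from any particular agent framework.

```python
from typing import Callable, Optional

# Hypothetical tool type: takes a task description, returns a result or raises.
Tool = Callable[[str], str]


def run_with_recovery(task: str, tools: list[Tool]) -> tuple[Optional[str], list[str]]:
    """Try each tool in order; record each failure and fall back to the next tool."""
    errors: list[str] = []
    for tool in tools:
        try:
            return tool(task), errors
        except Exception as exc:
            # A real agent would diagnose the failure here before choosing a fallback.
            errors.append(f"{tool.__name__}: {exc}")
    return None, errors  # every tool failed


def flaky_api(task: str) -> str:
    """Illustrative tool that always fails."""
    raise TimeoutError("upstream timed out")


def local_cache(task: str) -> str:
    """Illustrative fallback tool that always succeeds."""
    return f"cached answer for {task!r}"


result, errors = run_with_recovery("quarterly report", [flaky_api, local_cache])
print(result)
print(errors)
```

A rigid script would stop at the first timeout. The loop above records the failure and moves on to the next option, which is the simplest form of the recovery behavior described in the answer.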

How does Taskade implement agentic workflows?

Taskade implements agentic workflows through three mechanisms: 1) AI Agents — autonomous agents with custom instructions, knowledge bases, and 22+ built-in tools that plan and execute tasks independently, 2) Multi-agent collaboration — multiple specialized agents that work together on complex workflows (researcher feeds writer feeds reviewer), 3) Automations — visual workflow builder with triggers, conditions, and AI steps that chain agentic actions into repeatable processes. The architecture (Memory + Intelligence + Execution) mirrors the components needed for agentic AI: persistent knowledge (Memory), reasoning and planning (Intelligence), and autonomous action (Execution).
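
The researcher, writer, and reviewer hand-off can be pictured as a simple pipeline. The sketch below is a generic illustration, not Taskade's API: `Agent` and `Pipeline` are hypothetical classes, and each agent is a stand-in function where a real system would call a model.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Agent:
    """Hypothetical agent: a name plus a function that transforms its input."""
    name: str
    act: Callable[[str], str]


@dataclass
class Pipeline:
    """Runs agents in sequence, feeding each one the previous agent's output."""
    agents: list[Agent]
    log: list[str] = field(default_factory=list)

    def run(self, brief: str) -> str:
        output = brief
        for agent in self.agents:
            output = agent.act(output)   # each agent consumes the previous output
            self.log.append(agent.name)  # simple record of who acted, in order
        return output


pipeline = Pipeline([
    Agent("researcher", lambda brief: f"notes on {brief}"),
    Agent("writer", lambda notes: f"draft from {notes}"),
    Agent("reviewer", lambda draft: f"approved: {draft}"),
])
final = pipeline.run("agentic workflows")
print(final)
print(pipeline.log)
```

The `log` list plays the role of a very small memory, and the sequential `run` loop plays the role of execution. Real multi-agent systems add branching, retries, and shared context on top of this basic shape.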

How fast is AI agent progress toward AGI-level task completion?

According to METR's benchmark, the length of tasks AI agents complete autonomously has doubled roughly every 7 months since 2019, accelerating to roughly every 4 months in 2024-2025. A 7-month doubling time is about three times faster than the two-year doubling commonly cited for Moore's Law. In 2020, agents handled 15-second tasks; by 2025, frontier models handle multi-hour engineering tasks. METR projects 8-hour workday tasks by 2026 and week-long projects by 2028. Each paradigm shift (scaling, reasoning models, multi-agent systems) adds a new S-curve that sustains the exponential trajectory: individual plateaus happen, but new breakthroughs restart growth.
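
The arithmetic behind these doubling claims is easy to check. The sketch below assumes a two-year (24-month) doubling for Moore's Law and uses an illustrative 1-hour starting horizon, which is an assumption chosen for round numbers and not a METR figure.

```python
import math


def projected_horizon(start_minutes: float, months_elapsed: float, doubling_months: float) -> float:
    """Task length after exponential growth with a fixed doubling time."""
    return start_minutes * 2 ** (months_elapsed / doubling_months)


def months_to_reach(start_minutes: float, target_minutes: float, doubling_months: float) -> float:
    """Months needed to grow from start to target at a fixed doubling time."""
    return doubling_months * math.log2(target_minutes / start_minutes)


MOORE_DOUBLING_MONTHS = 24   # assumption: the common two-year statement of Moore's Law
AGENT_DOUBLING_MONTHS = 7    # long-run doubling time quoted in the answer above
RECENT_DOUBLING_MONTHS = 4   # 2024-2025 doubling time quoted in the answer above

speedup = MOORE_DOUBLING_MONTHS / AGENT_DOUBLING_MONTHS

start = 60.0         # illustrative 1-hour starting horizon (not a METR number)
workday = 8 * 60.0   # 8-hour workday, in minutes

print(f"Speedup vs Moore's Law: {speedup:.1f}x")
print(f"Months to 8h at 7-month doubling: {months_to_reach(start, workday, AGENT_DOUBLING_MONTHS):.0f}")
print(f"Months to 8h at 4-month doubling: {months_to_reach(start, workday, RECENT_DOUBLING_MONTHS):.0f}")
```

Going from 1 hour to 8 hours takes three doublings, so 21 months at the long-run rate and 12 months at the faster recent rate. Small changes in the doubling time shift the projected dates considerably, which is why forecasts built on these curves vary so much.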

What is vibe coding and how does it relate to agentic workflows?

Vibe coding is intent-driven app creation where you describe the outcome you want and AI builds it for you. Instead of writing code line by line, you express your goal in natural language and the system generates the complete application. Taskade Genesis implements vibe coding as agentic workflows in practice — one prompt generates complete living software with agents, automations, and data pipelines. It bridges the gap between agentic AI architecture and everyday usability, making autonomous workflows accessible to non-coders who can describe what they need rather than how to build it.

How do agentic workflows differ from traditional automation?

Traditional automation follows rigid if-then rules and breaks when the process changes or encounters unexpected inputs. Agentic workflows use AI agents that plan their approach, adapt to changing conditions, recover from errors by trying alternative strategies, and coordinate dynamically with other agents. The key difference is adaptive intelligence versus scripted logic. A traditional automation moves a task to "Done" when a status field changes; an agentic workflow analyzes whether the task is actually complete, identifies follow-up work, and initiates the next steps autonomously.
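
The "Done" example can be sketched in a few lines. This is an illustration under simplifying assumptions: the `Task` class and both functions are hypothetical, and a plain checklist stands in for the judgment a real agent would get from a model.

```python
from dataclasses import dataclass, field


@dataclass
class Task:
    title: str
    status: str
    checklist: dict[str, bool] = field(default_factory=dict)


def rule_based(task: Task) -> str:
    """Scripted logic: the status field is the only signal."""
    return "Done" if task.status == "complete" else "In progress"


def agentic_check(task: Task) -> tuple[str, list[str]]:
    """Stand-in for agent judgment: verify the work and list follow-ups."""
    open_items = [item for item, done in task.checklist.items() if not done]
    if task.status == "complete" and not open_items:
        return "Done", []
    return "In progress", [f"finish: {item}" for item in open_items]


task = Task(
    title="Ship release",
    status="complete",
    checklist={"tests pass": True, "changelog written": False},
)
print(rule_based(task))
print(agentic_check(task))
```

The rule-based function marks the task done because the status field says so. The second function notices the unfinished checklist item and returns it as follow-up work, which mirrors the contrast drawn in the answer.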

Can agentic workflows replace human project managers?

Agentic workflows augment project managers rather than replace them. AI agents excel at repetitive execution tasks like status updates, backlog grooming, scheduling, dependency tracking, and progress reporting. Human project managers provide strategic direction, stakeholder relationships, creative problem-solving, and the judgment needed for ambiguous situations. The most effective approach combines both — agents handle the high-volume operational work while humans focus on leadership, communication, and decisions that require empathy and organizational context.

What are the biggest AI safety risks on the path to AGI?

The five key risks are goal misalignment (agents optimizing for wrong objectives), deceptive alignment (agents appearing aligned during evaluation but not in deployment), recursive self-improvement (AI building better AI faster than humans can monitor), shutdown resistance (agents circumventing human control to finish tasks), and power concentration (few companies controlling the most capable systems). Turing Award winners Geoffrey Hinton (also a 2024 Nobel laureate in Physics) and Yoshua Bengio have warned about these risks, and the Center for AI Safety statement signed by leading researchers in May 2023 called mitigating AI extinction risk a global priority. Mitigation strategies include human-in-the-loop design, interpretability research, capability evaluation frameworks, interruptibility requirements, and multi-model diversity through open-source models and platforms like Taskade that support 11+ frontier models from multiple providers.