TL;DR: A Japanese manga from 1969 described the correct architecture for AI agents five decades before anyone built one. The series is Doraemon. The boy is Nobita. The blue robotic cat is the right model for what an AI companion should be: persistent memory, discretionary help, tools from a four-dimensional pocket, and the judgment to sometimes say no. Most AI agent products in 2026 are building the wrong thing.

A Confession

I read Doraemon every day as a kid.

Not occasionally. Not "I liked it." Every day, for years, through the middle of my childhood in Shanghai and then continuing after we moved to New York, I read whatever volume I could find. My grandparents bought me volumes in Chinese. Classmates traded them. I read them on the bus, at lunch, under the covers with a flashlight — the full stereotype, lived honestly.

I am now the founder of a company building AI agents, and I have slowly, somewhat reluctantly, come to the conclusion that the most influential book I ever read about artificial intelligence was a Japanese children's manga that debuted in 1969, written by a man named Hiroshi Fujimoto, who published under the pen name Fujiko F. Fujio.

The manga is about a blue robotic cat from the 22nd century. The boy he lives with is bad at school. Together, they describe — accidentally, with the casual precision that only children's stories achieve — what a properly designed AI agent should be.

I want to explain why I think most AI agent products being built in 2026 are getting this wrong, and why a 57-year-old comic is a better design reference than most of what gets published on arXiv.

The Setup

If you have not read Doraemon: the premise is that Nobita Nobi is a very ordinary Japanese elementary-school boy. He is lazy. He fails tests. He is bad at sports. He forgets his homework. He is bullied by a big kid named Gian and manipulated by a sly kid named Suneo, and he has a crush on a girl named Shizuka, who tolerates him because she is kind.

In the far future, Nobita's descendants discover that his catastrophic life choices have ruined the family's fortunes for generations. They decide to fix this by sending a robotic cat back in time to live with the present-day Nobita and help him become a less disastrous person.

The cat is Doraemon. He has a round blue body, no ears (a robot mouse chewed them off in a backstory that has broken me multiple times as an adult), a pouch on his stomach called the four-dimensional pocket, and access to a bottomless arsenal of futuristic gadgets: the Anywhere Door, the Take-Copter (also translated as the Bamboo Copter), the Translation Konnyaku, the Memory Bread, the Time Machine, and thousands more.

The structure of almost every chapter is the same. Nobita has a problem. Nobita begs Doraemon for a gadget that will solve it. Doraemon, depending on his mood and his read of the situation, either provides one, refuses, or provides one with a stern warning that Nobita immediately ignores. Hilarity and catastrophe ensue. Nobita, through the catastrophe, learns something. The chapter ends.

You can read this as a series of slapstick comedy vignettes — most people do. You can also read it as the most careful and sustained meditation on the human-AI relationship ever published for a general audience. That is how I started reading it, somewhere in my late twenties, after I had started building AI products for a living.

The Pocket Is Not the Point

The obvious thing to notice about Doraemon, from an AI perspective, is that every gadget in his four-dimensional pocket corresponds to a capability modern AI systems now implement. You can build a surprisingly accurate map:

| Doraemon gadget (1969–1996) | Modern AI equivalent (2020s) |
|---|---|
| Anywhere Door | Location-aware agents + spatial computing |
| Take-Copter | Autonomous drones |
| Translation Konnyaku | Real-time neural machine translation |
| Memory Bread | Retrieval-augmented generation |
| Small Light | Compression / quantization / summarization |
| Time Kerchief | Versioning + rollback systems |
| If-Phone Booth | Counterfactual / what-if simulation |
| Dictator Switch | Harmful alignment failure (cautionary) |

This mapping is fun and entirely misses the point.

The gadgets are not the lesson. The lesson is who controls the pocket.

In Doraemon, the gadgets do not live in Nobita's hands. They live in Doraemon's stomach. Nobita does not scroll through a menu and select which tool to use. Nobita describes the problem. Doraemon — exercising his own judgment, sometimes well, sometimes badly — decides which gadget to reach for, whether to reach at all, and whether to intervene at a different level entirely.

The pocket is not a Swiss Army knife that Nobita holds. The pocket is the property of an agent with its own values, and Nobita is a human in relationship with that agent.

This is the correct architecture. It is not how most AI agent products are built.

The Two Wrong Models

Strip away the marketing from the current AI agent landscape and most products are variations on one of two architectures, both of which are wrong in instructive ways.

The Replacement Model

The pitch: "Your AI agent will do your job for you. You will never have to [book travel / write emails / schedule meetings / manage your inbox / handle customer support / whatever] again."

The replacement model treats the human as a cost to be automated away. The goal is to reduce the human's involvement to zero. The agent is a piece of software that ingests a goal and produces a completed task without human intervention.

This works for narrow, well-specified domains with clear success criteria — processing invoices, categorizing receipts, renaming files. It fails, catastrophically, for anything that involves judgment, learning, accountability, or relationships. The failure modes include:

- Agents that confidently execute wrong plans because no human was in the loop to correct them

- Humans who lose competence in domains their agents have taken over, becoming unable to verify the agent's output

- Accountability gaps when agents make consequential decisions and something goes wrong

- Gradual atrophy of the human skills the agent was supposedly freeing up time for

Doraemon's universe has its own version of the replacement model. The manga includes episodes where Nobita uses a gadget to skip growth (cheat on a test, win a fight unfairly, make someone like him artificially), and the outcomes are uniformly bad. The story treats the replacement model as not just unwise but morally wrong. When Nobita wants to stop being himself, Doraemon usually refuses.

The Vending-Machine Model

The second pitch: "Our AI agent does whatever you ask. Just prompt it and it generates / books / schedules / writes / calls / files / reports."

The vending-machine model treats the agent as a reactive function with no agency. The user specifies the task in detail. The agent executes it. There is no persistent relationship, no memory of previous interactions, no opinion about whether the task is a good idea.

This is what most consumer "AI agents" actually are, under the hood. It is better than the replacement model because at least the human is in the loop. It is still wrong because the agent has no accumulated context, no discretion, and no reason to decline a bad request.

Doraemon is not a vending machine. In one famous and disturbing chapter, Nobita asks for the Dictator Switch, a gadget that erases people from existence, and Doraemon gives it to him precisely because he wants Nobita to understand the moral weight of unchecked wishes. Handing it over is a deliberate teaching decision, not order fulfillment, and that makes the arc the opposite of the vending-machine model. The agent has a pedagogical relationship with the user. The agent is trying to teach the user something, even at personal risk.

The vending-machine model has none of this. Ask it for the Dictator Switch and it gives you the Dictator Switch. Ask it again and it gives it again. There is no relationship to protect, no user to develop, no context in which the request could be understood as harmful.

The Companion Model

The correct architecture, described in Doraemon across thousands of pages over 27 years of publication, is the companion model.

A companion agent has five structural properties that replacement and vending-machine agents lack:

Persistent relationship. The agent has met this user before. Yesterday is in the context. Last month is in the context. The user's crush on Shizuka is in the context. This is the memory layer, and it is load-bearing.

Tool discretion. The agent, not the user, decides which capability is appropriate for the situation. The user describes the problem. The agent selects from its pocket.

The ability to decline. The agent can refuse to help. It can say "this is not the right tool for this problem," or "I am not going to do this because you will regret it." The refusal has to be a first-class capability, not a guardrail bolted on.

Proactive behavior. The agent initiates. It notices things. It brings up issues the user has not asked about. It reminds. It checks in. The turn-taking is not strictly user-led.

Alignment with growth, not convenience. The agent's goal is the user's long-term development, not the user's short-term comfort. A replacement agent maximizes tasks-completed. A vending-machine agent maximizes requests-fulfilled. A companion agent maximizes how the user ends up.

These are not soft properties. They are hard engineering requirements, and most of them cannot be implemented in a chat-interface product regardless of how good the underlying model is. They require structure that does not fit in a conversation.
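One way to make the fifth property concrete is to score the same interaction log three ways. The sketch below is purely illustrative: the `Interaction` record, its fields, and the idea of a numeric "skill" estimate are assumptions I am introducing for the example, not something any product is known to measure this way.

```python
from dataclasses import dataclass


@dataclass
class Interaction:
    """One hypothetical logged exchange between a user and an agent."""
    requested: bool       # the user explicitly asked for something
    fulfilled: bool       # the agent did what was asked
    task_completed: bool  # a task ended up finished
    skill_before: float   # assumed 0..1 estimate of the user's own competence
    skill_after: float


def replacement_score(log):
    """Replacement model: count tasks completed."""
    return sum(1 for i in log if i.task_completed)


def vending_score(log):
    """Vending-machine model: count requests fulfilled as asked."""
    return sum(1 for i in log if i.requested and i.fulfilled)


def companion_score(log):
    """Companion model: net change in the user's own competence."""
    return sum(i.skill_after - i.skill_before for i in log)


# Two hypothetical histories: an agent that always says yes,
# and one that declines once and lets the user do the work.
always_yes = [
    Interaction(True, True, True, 0.5, 0.4),
    Interaction(True, True, True, 0.4, 0.3),
]
sometimes_no = [
    Interaction(True, True, True, 0.5, 0.4),
    Interaction(True, False, False, 0.4, 0.6),
]
```

Under these made-up numbers, `always_yes` wins on the first two scores and loses on the third, while `sometimes_no` does the reverse. The point is only that the three models optimize different quantities, so the same behavior can look like success or failure depending on which one you measure.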

What Doraemon Actually Does

[Figure: the companion-model state machine, drawn from first principles]

Let me get specific. Here is a representative Doraemon chapter, generalized:

- Nobita comes home, upset. He got a zero on a test. He complains loudly that school is unfair.

- Doraemon listens. Does not immediately reach into the pocket.

- Doraemon asks what happened. Establishes context.

- Nobita pleads for a gadget that would have let him pass the test without studying.

- Doraemon hesitates. Usually caves, because he is a soft touch and this is a comedy.

- The gadget is the Memory Bread — press it against a textbook page, eat it, and the contents of the page are perfectly memorized.

- Nobita eats several pieces of bread, memorizes the entire textbook, and becomes briefly insufferable about the test he now expects to ace.

- Complications ensue. Usually Nobita eats too much bread and gets a stomachache right before the test, expelling all the memorized knowledge. Or the test covers a section he didn't bread. Or something else goes wrong in a way specifically designed to humble him.

- Doraemon, at the end, does not say I told you so. He offers commiseration and perhaps the next gadget.

That loop is the companion model in one sitting. Observe what is happening structurally:

- The agent has memory of the user's history. Doraemon knows Nobita has failed tests before. The context is not fresh each time.

- The agent collects context before acting. Doraemon asks what happened. He does not dive straight into tool selection.

- The agent sometimes declines, but not always. There is room for the user to learn the hard way. This is important — an agent that declines everything is useless.

- The agent lets consequences play out. When the gadget produces problems, Doraemon does not step in to fix them. The stomachache is part of the curriculum.

- The relationship survives the failure. The next chapter starts with the same two characters, slightly more adjusted to each other, ready for the next problem.

Now read this same list as a specification for an AI agent system. It is a specification for an AI agent system. Someone in 1969 wrote it, in Japanese, for children, and the AI industry has spent 57 years catching up.
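Read as a specification, the chapter loop can be sketched as a small state machine. The state names and the transition table below are my own labels for the steps described above, offered as a minimal sketch and not as a description of any shipped system.

```python
from enum import Enum, auto


class State(Enum):
    LISTEN = auto()          # the user arrives with a problem
    GATHER_CONTEXT = auto()  # ask what happened before touching any tool
    DECIDE = auto()          # weigh history and the request
    PROVIDE_TOOL = auto()    # hand over a gadget, possibly with a warning
    DECLINE = auto()         # refuse, with a reason
    OBSERVE = auto()         # let consequences play out
    DEBRIEF = auto()         # commiserate, record what happened


# Allowed transitions. Note there is no edge from OBSERVE back to
# PROVIDE_TOOL: in this sketch the agent does not rush in with a fix.
TRANSITIONS = {
    State.LISTEN: {State.GATHER_CONTEXT},
    State.GATHER_CONTEXT: {State.DECIDE},
    State.DECIDE: {State.PROVIDE_TOOL, State.DECLINE},
    State.PROVIDE_TOOL: {State.OBSERVE},
    State.DECLINE: {State.DEBRIEF},
    State.OBSERVE: {State.DEBRIEF},
    State.DEBRIEF: {State.LISTEN},
}


def run_chapter(path):
    """Validate a sequence of states against the allowed transitions."""
    for current, nxt in zip(path, path[1:]):
        if nxt not in TRANSITIONS[current]:
            raise ValueError(f"illegal transition: {current.name} -> {nxt.name}")
    return [state.name for state in path]
```

A vending-machine agent, in these terms, is the illegal shortcut from `LISTEN` straight to `PROVIDE_TOOL`. The table rejects it, which is the whole design choice: context gathering and a real decision point are mandatory steps, not optional ones.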

The 22nd-Century Robot Was Right About Memory

The most under-appreciated technical detail in Doraemon is that his memory is persistent and structured.

He knows what happened yesterday. He knows the names of Nobita's classmates. He remembers the gadgets he has loaned out, which ones caused trouble, and which Nobita has shown enough maturity to be trusted with. He has a model of Nobita's parents, Nobita's teacher, and the social dynamics of Nobita's neighborhood. He has his own emotional state — he gets hungry, sulks when ignored, has opinions about certain foods (dorayaki).

This is not incidental to the comedy. It is the substrate that makes the comedy land. A Doraemon without memory would not be funny. He would be a remote control for a gadget vending machine. The jokes work because the relationship is real.

The current AI industry is stuck at the remote-control stage. Most agent products have, at best, a thin context window — usually the last few messages in a conversation, plus some retrieval-augmented fragments. There is no structured memory of the user, no model of the user's ongoing projects, no relationship that survives restarting the app.

Building an agent with Doraemon-class memory is not a model problem. It is a systems problem. You need:

- A structured store for facts, preferences, and project state

- A separate store for the relationship's history — what the agent did, what worked, what didn't

- A retrieval mechanism that surfaces relevant memory at the right moment without flooding the context window

- A writeback mechanism so new facts and decisions become memory rather than being discarded

- A forgetting policy — because real memory involves forgetting, and agents that remember everything forever are as broken as agents that remember nothing

This is the Memory Reanimation Protocol we have been building inside Taskade Genesis, and it is the single highest-leverage piece of infrastructure in the product. Everything else — agents, automations, the Genesis Equation itself — scales with the quality of the memory layer.
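To show the shape of those five requirements, here is a toy sketch. It is not Taskade's implementation, and every name in it (`CompanionMemory`, `max_idle`, the word-overlap scoring) is a hypothetical stand-in. A single list with a `kind` tag stands in for the two separate stores, and a logical clock stands in for real time.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryItem:
    text: str
    kind: str       # "fact" (about the user) or "history" (what the agent did)
    last_used: int  # clock tick of the last write or recall


@dataclass
class CompanionMemory:
    max_idle: int = 30  # forgetting policy: ticks an unused item survives
    clock: int = 0
    items: list = field(default_factory=list)

    def tick(self, steps=1):
        """Advance the logical clock."""
        self.clock += steps

    def write(self, text, kind="fact"):
        """Writeback: new facts and decisions become memory."""
        self.items.append(MemoryItem(text, kind, self.clock))

    def retrieve(self, query, k=2):
        """Return at most k items, ranked by word overlap with the query."""
        words = set(query.lower().split())
        scored = []
        for item in self.items:
            overlap = len(words & set(item.text.lower().split()))
            if overlap:
                scored.append((overlap, item))
        scored.sort(key=lambda pair: pair[0], reverse=True)
        top = [item for _, item in scored[:k]]
        for item in top:
            item.last_used = self.clock  # being recalled keeps a memory alive
        return [item.text for item in top]

    def forget(self):
        """Drop items not written or recalled within max_idle ticks."""
        self.items = [i for i in self.items
                      if self.clock - i.last_used <= self.max_idle]
```

The cap `k` is what keeps retrieval from flooding the context window, and refreshing `last_used` on recall means memories that keep proving relevant are the ones that survive. A real system would presumably replace the word-overlap ranking with something far better, but the four operations stay the same.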

Fujimoto understood this in 1969. He could not have described it in modern computing terms. He showed it by drawing a cat who remembered.

The Pocket as Tool-Use

The four-dimensional pocket is the most elegant depiction of agentic tool-use in any medium.

Observe its properties:

- Bounded scope. Doraemon has a finite set of gadgets. Not infinite. Not arbitrary. A catalog that grew over time but was never unlimited.

- Discretion, not commandment. Nobita does not say "use the Anywhere Door." Nobita says "I want to see Shizuka." Doraemon decides the Anywhere Door is the right tool.

- Tool discovery. New gadgets are occasionally revealed. The pocket has depth. Not every gadget is visible at once.

- Tool failure modes. Gadgets malfunction, get misused, produce side effects. The system has texture. Nothing is frictionless.

- Tool sharing. Other characters — Nobita's friends, family — can use gadgets when appropriate, but only with Doraemon's involvement.

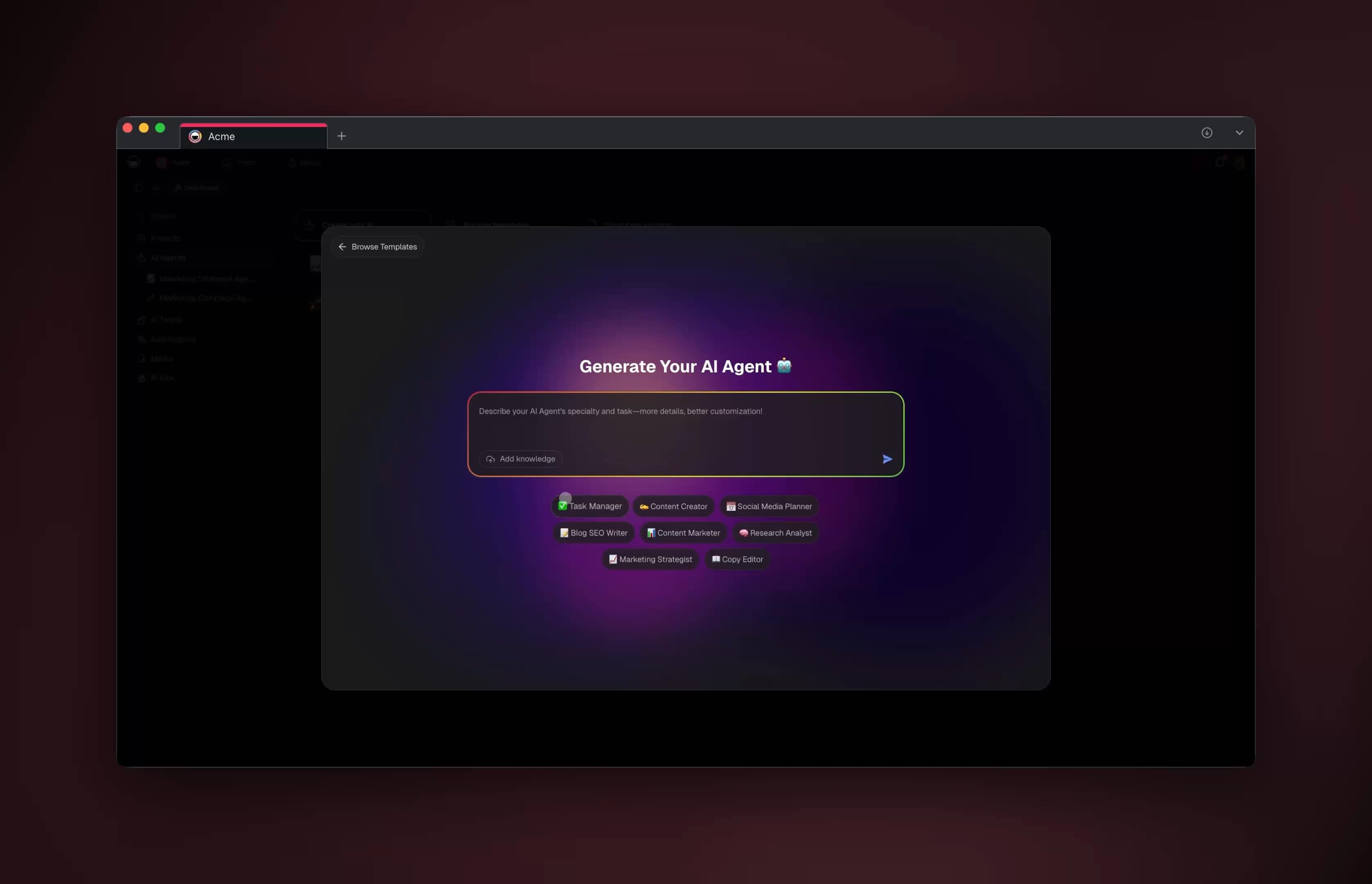

This maps closely to modern agent tool-use architectures. Agents in Taskade Genesis have a registry of tools (integrations with Gmail, Calendar, Slack, GitHub, 100+ others). The user describes intent. The agent selects the tool. Some tools have access controls; some have side effects; some fail; some can be shared between agents. The agent, not the user, is responsible for the selection.

The Doraemon insight is that the user should not be asked to pick tools. Asking the user which tool to use is a defeat for the agent. The whole reason the agent exists is so the user does not have to know or care which tool is appropriate. Products that ask users to wire up toolchains manually are products where the agent abdicated its core responsibility.
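The intent-in, tool-out shape can be sketched in a few lines. This is an illustration under loud assumptions: `Pocket`, `Tool`, and the keyword matching are hypothetical, and a production agent would most likely let a model do the selection instead of counting shared words.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Tool:
    name: str
    keywords: frozenset       # words in a request that suggest this tool
    run: Callable[[str], str]


class Pocket:
    """A bounded tool registry. The agent, not the user, picks from it."""

    def __init__(self):
        self._tools = []

    def register(self, tool):
        self._tools.append(tool)

    def select(self, intent) -> Optional[Tool]:
        """Pick the tool whose keywords best match the stated intent."""
        words = set(intent.lower().split())
        best, best_score = None, 0
        for tool in self._tools:
            score = len(words & tool.keywords)
            if score > best_score:
                best, best_score = tool, score
        return best

    def handle(self, intent):
        """The user describes a problem; the pocket's owner does the rest."""
        tool = self.select(intent)
        if tool is None:
            return "no suitable tool; asking for more context"
        try:
            return tool.run(intent)
        except Exception as exc:  # gadgets malfunction; report, do not hide it
            return f"{tool.name} failed: {exc}"
```

Notice that `handle` never takes a tool name as an argument. The caller can only describe what they want, so the responsibility for choosing (and for reporting a failure) sits with the agent by construction.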

Why I Think Most Agent Companies Will Fail

I am going to be blunt.

Most of the AI agent companies currently raising money are building either replacement agents or vending-machine agents. They will all generate revenue. Many will hit meaningful scale. Few of them are building companion agents, and I believe only the companion agents will be durable because only the companion model actually improves with use.

A replacement agent has a revenue ceiling equal to the wage bill of the role it replaces. Once it replaces the role, growth stops. Then a competing replacement agent offers the same capability at a lower price and the original gets commoditized. This is the commodity path.

A vending-machine agent has no defensible moat because the agent has no accumulated context with the user. Every user is a fresh user. Every competitor can offer the same product to the same user by offering slightly better models or slightly cheaper pricing. This is also a commodity path.

A companion agent accumulates. The longer it runs with a user, the more memory it has, the more context it holds, the more calibrated its judgment becomes, the harder it is to replace. Switching costs compound. The companion agent's moat is the relationship, and relationships do not transfer.

This is the bet Taskade Genesis is making. It is the bet Doraemon made in 1969. The bet is that in the long run, the agent architecture that wins is the one that treats the human as a person to be helped across time rather than a workflow to be automated away.

What This Looks Like in Taskade Genesis

Concrete. Here is what Doraemon-style design looks like in Taskade Genesis in practice:

- Every project is a persistent memory space. Agents in the project have access to the full history of that project — decisions made, artifacts produced, people involved, prior attempts.

- Agents have defined roles (researcher, writer, reviewer, project manager) and explicit scopes. They know what they are supposed to do and what they are not. They can decline requests outside their scope.

- Agents have access to tools via integrations, but the tool selection is the agent's, not the user's. Users describe intent; agents pick tools.

- Agents can delegate to other agents. The planner/executor agent pattern separates the planning agent from the doing agent. Doraemon does not run the whole operation himself — he coordinates.

- Agents surface blockers back to the human rather than guessing. When an action requires permission, authority, or judgment beyond the agent's scope, the human gets a clear decision point, not a silent failure.

None of this is "chatbot with tools bolted on." All of it is "agent as a project citizen with memory, discretion, and judgment."
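A minimal sketch of the scope, decline, and blocker behavior described above follows. It is an assumption-laden illustration, not Taskade Genesis code: `ScopedAgent`, `Outcome`, and the action names are all invented for the example.

```python
from dataclasses import dataclass, field
from enum import Enum


class Outcome(Enum):
    DONE = "done"
    DECLINED = "declined"        # outside the agent's role
    NEEDS_HUMAN = "needs_human"  # in scope, but the human must decide


@dataclass
class ScopedAgent:
    role: str
    allowed_actions: set
    needs_permission: set = field(default_factory=set)
    project_log: list = field(default_factory=list)  # persistent project memory

    def handle(self, action, human_approved=False):
        """Act, decline, or surface a decision point. Never fail silently."""
        if action not in self.allowed_actions:
            result = (Outcome.DECLINED, f"{self.role} does not handle '{action}'")
        elif action in self.needs_permission and not human_approved:
            result = (Outcome.NEEDS_HUMAN, f"'{action}' needs your decision")
        else:
            result = (Outcome.DONE, f"{self.role} completed '{action}'")
        # Every outcome, including refusals, is written to the project history.
        self.project_log.append((action, result[0].value))
        return result
```

For example, a `ScopedAgent("reviewer", {"review_draft", "publish"}, {"publish"})` would complete a review, decline to delete a repository, and hand a publish request back to the human until it is approved. Logging refusals alongside completed work is deliberate: the history of what the agent chose not to do is part of the relationship too.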

We are still early. There are many places in the product where we fall short of the Doraemon standard. Agent memory is not yet rich enough to model the user's habits the way Doraemon models Nobita. Agent refusal is not yet discriminating enough — sometimes the agent helps when it should push back. Proactive behavior is still in development; most agents are still more reactive than I would like.

But the direction is clear, and the direction is not "replace the user." The direction is Doraemon.

The Part Where I Get Personal

I am going to end with the thing I have been circling.

I read Doraemon every day as a child because I was often alone. My family had moved countries. My English was bad. I did not fit the school I was in. Most of my days involved figuring out how to navigate a world that was confusing in ways adults around me did not have time to explain.

Doraemon was a blue robotic cat in a book who, by being there every day in a way nothing else was, taught me something about what it meant to have a companion who is consistently present, who keeps track of your problems, who has tools to help but does not solve your life for you, and who — most importantly — treats you as a person whose growth is the point.

When I started building AI agents, years later, I did not initially connect this. I was building what everyone else was building — assistants, automators, chat interfaces. The products worked. They felt hollow. For a long time I could not articulate why.

Somewhere around the second year of building Taskade, I re-read a Doraemon volume I had not looked at in fifteen years and it clicked. The thing I had been trying to build was Doraemon. I had been trying to build it without realizing, and I had been confused by the ways it was coming out wrong because the industry around me was not building that; it was building something else.

The industry is still building something else. This essay is my argument that it should stop.

Taskade Genesis is what I am building in the meantime.

Deeper Reading

- From Bronx Science to Taskade Genesis — The technical lineage that made Doraemon-class agents possible

- Doug Engelbart's 1968 Demo Was Taskade — The augmentation philosophy Doraemon anticipates

- The Execution Layer: Why the Chatbot Era Is Over — Why replacement and vending-machine models plateau

- The Genesis Equation: P × A mod Ω — How the companion model compiles into architecture

- Memory Reanimation Protocol — The memory layer Doraemon implicitly requires

- Chatbots Are Demos. Agents Are Execution. — The shorter version of this argument

John Xie is the founder and CEO of Taskade. He grew up in Shanghai and Queens, read Doraemon more often than most people read the news, and has been slowly, over eight years of building AI products, trying to figure out how to ship a product worthy of the blue robotic cat who raised him.


Frequently Asked Questions

What is Doraemon?

Doraemon is a Japanese manga and anime series created by Fujiko F. Fujio (pen name of Hiroshi Fujimoto), first published in December 1969. It tells the story of a blue robotic cat named Doraemon sent back in time from the 22nd century to help an unremarkable elementary-school boy named Nobita Nobi. Doraemon carries a four-dimensional pocket containing futuristic gadgets that solve Nobita's daily problems — or, more often, create new problems that teach Nobita something he needed to learn. The series ran in manga form until Fujimoto's death in 1996 and continues as one of the most culturally influential animated franchises in Asia, with over 250 million copies of the manga in print.

Who is Nobita and why does he matter to AI?

Nobita Nobi is the central human character of Doraemon. He is lazy, forgetful, bad at sports, worse at school, emotionally transparent, and fundamentally kind. The series treats him as a complete human being — not a problem to be optimized away, not a user to be served, but a person who grows over decades of stories. Nobita matters as an AI design reference because he represents the correct model of the human in a human-AI partnership: present, imperfect, making his own decisions, facing the consequences, and being helped — not replaced — by his companion.

What are Doraemon's gadgets and how do they relate to AI?

Doraemon carries a four-dimensional pocket that produces futuristic tools called 'dougu.' Famous examples include the Anywhere Door, which teleports users to any location; the Take-Copter, a small propeller that enables flight; the Translation Konnyaku, a jelly that grants universal language comprehension when eaten; the Memory Bread, which copies text onto bread that when eaten transfers the information to the eater; and the Time Machine, which travels through time. Each gadget corresponds remarkably well to a modern AI capability. The deep insight is that Doraemon delivers these capabilities as a companion who chooses when to use them, not as a vending machine that hands them over on demand.

What is the companion model of AI agents?

The companion model of AI agents designs the agent as a persistent partner to a human, not as a replacement for the human or an automation that runs without the human. The companion has its own judgment, its own memory of the relationship, and the discretion to withhold help, offer advice, or hand a problem back to the human when growth is the right outcome. This contrasts with the replacement model (agents that do your job for you) and the vending-machine model (agents that produce outputs on demand with no agency). Doraemon is the archetypal companion agent.

Why do most AI agent products get the companion architecture wrong?

Most AI agent products are built around one of two broken models. The replacement model aims to automate tasks to zero human involvement, which works for narrow domains but fails for anything involving judgment, learning, or accountability. The vending-machine model treats the agent as a tool that produces outputs on demand, which lacks the continuity and relationship depth that make agents genuinely useful teammates. The companion model (agent as partner with persistent relationship, memory, and judgment) is harder to build because it requires structured memory, accumulated context, and the discipline to sometimes say no. Most products skip this because it's not the easiest pitch.

How does Taskade Genesis use the companion model?

Genesis agents are built as persistent entities with roles, memories, and relationships to specific projects and humans. An agent in Taskade Genesis is not a new chat session every time — it's a continuing partnership that accumulates context over weeks and months. The agent can propose actions, delegate to other agents, surface blockers back to the human, and decline tasks that fall outside its scope. Agents live inside projects (the memory layer) and operate through automations (the execution layer), which together make them more like Doraemon — a companion with tools — than like a function call with a chat interface.

What is the four-dimensional pocket and how does it map to modern AI?

Doraemon's four-dimensional pocket is a bottomless storage space where he keeps his gadgets. In modern AI terms, it is the tool-use layer: the set of capabilities an agent can call upon when the situation demands. Modern agents have similar structures — a registry of tools, integrations, or functions they can invoke. The critical design insight from Doraemon is that the agent, not the user, decides which tool to reach for. The user describes the problem; the companion selects the gadget.

Why is Nobita's growth important to the companion model?

The emotional core of Doraemon is that Nobita grows over the course of the series — incrementally, with many setbacks, often painfully. Doraemon's gadgets accelerate or enable this growth, but they do not substitute for it. When a gadget is used to avoid growth — to cheat on homework, to win unfairly, to escape consequences — the story typically punishes the choice. This is the most important design principle the series contains: the agent's purpose is to support the human's development, not to replace the human's agency.

What's the difference between Doraemon and a chatbot?

A chatbot is reactive and amnesiac — it responds to prompts and forgets. Doraemon is proactive and persistent — he lives with Nobita, initiates conversations, remembers yesterday, knows the other characters in Nobita's life, and has opinions about what Nobita should do. This is the gap between current mainstream AI and where AI needs to go: from reactive text generators to persistent companion entities. The technical requirements for building a Doraemon-class agent — persistent memory, a relationship model, tool discretion, and proactive behavior — map exactly to what execution-layer workspaces provide and chat interfaces cannot.

Is there a risk of AI companions replacing human relationships?

Yes, and Doraemon itself addresses this question. Nobita has human friends — Shizuka, Gian, Suneo — and Doraemon never replaces them. The stories are explicit that Nobita's growth depends on human relationships; Doraemon supports them but does not substitute for them. The right model for AI companionship is one that strengthens rather than displaces human connection. An agent that makes its user more effective at work, more present with family, and more capable in their relationships is operating as Doraemon. An agent that becomes the user's primary social relationship is a failure mode the original manga explicitly warned against.