TL;DR: AI agents forget because the industry confused context windows with memory. Context windows are working memory. RAG is retrieval. Neither is long-term memory. Real memory requires five components: persistence, structure, retrieval, writeback, and forgetting. The Memory Reanimation Protocol is the architecture we built inside Taskade Genesis to implement all five, and memory quality is the single highest-leverage variable in agent system design.

Why Do AI Agents Forget? (The Complaint You've Heard a Hundred Times)

Every knowledge worker I have talked to in 2026 has made the same complaint, usually within the first ten minutes of describing how they use AI.

"It doesn't remember anything. Every conversation starts from scratch. I have to explain the project every time. I paste the same context into the prompt every day."

The complaint is technically correct. It is also revealing, because the users have independently arrived at the right diagnosis — the AI is missing memory — but the industry has responded with larger context windows, more sophisticated retrieval, and better prompt engineering, none of which are actually memory.

This piece is about the gap between what users mean by memory and what the industry has shipped. It is about why the gap exists, why it matters more than almost any other architectural decision in an agent system, and how we have tried to close it inside Taskade Genesis with an architecture we call the Memory Reanimation Protocol.

It is a more technical piece than most of what I write on this blog. If you are a builder, you are the audience. If you are a user wondering why your agent keeps forgetting, stick around — by the end you will have a much better vocabulary for what is broken and what needs to be fixed.

The Three Things That Get Called "Memory"

Almost every argument about AI memory in 2026 is actually an argument between three different things using the same word. Separating them clears a lot of fog.

1. The Context Window

The context window is the set of tokens a language model can attend to in a single forward pass. It is a property of the model architecture. In 2020 it was a few thousand tokens. In 2026, frontier models reach a million tokens or more. It is the model's working memory: what the model is aware of right now, this turn, this completion.

Context windows have two properties that disqualify them from being memory in the full sense:

- They evaporate. When the session ends, the context window is cleared. The next session starts empty.

- They fill up. Even the largest context window has a finite token budget. Long conversations push earlier content out. Important early context gets lost beneath recent noise.

Making the context window bigger is an arms race the frontier labs are running, and it is useful — a 2M-token window is qualitatively better than a 32K window. But it is not memory. It is a bigger whiteboard. A bigger whiteboard that you erase at the end of every shift is not a filing cabinet.
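To make the "fills up" property concrete, here is a minimal sketch of how a fixed token budget pushes early content out. The function name `fit_to_budget` and the whitespace word count standing in for a real tokenizer are assumptions for illustration, not how any particular model or product trims its window.

```python
def fit_to_budget(messages, budget_tokens, count_tokens=lambda m: len(m.split())):
    """Keep the most recent messages that fit in the budget; drop the oldest."""
    kept, used = [], 0
    for msg in reversed(messages):          # walk from newest to oldest
        cost = count_tokens(msg)            # assumption: one word is one token
        if used + cost > budget_tokens:
            break                           # everything older is pushed out
        kept.append(msg)
        used += cost
    return list(reversed(kept))


history = [
    "launch date is May 3",                 # important early context
    "ok sounds good",
    "can you draft the email",
    "make it shorter please",
]
window = fit_to_budget(history, budget_tokens=10)
print(window)
```

With a budget of 10, only the last two messages survive, and the launch date is the first thing to go. A bigger budget delays that moment but does not remove it.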

2. Retrieval-Augmented Generation (RAG)

RAG is a pattern where external documents are indexed (usually as embeddings in a vector database), and at query time the most relevant chunks are retrieved and injected into the context window. This lets a model "know" things that are not in its training data or its current context.

RAG is genuinely useful and solves real problems. It is the best way to ground a model's outputs in specific source material, to keep responses current with data that changed after training, and to scale knowledge beyond what fits in a context window.

But RAG is not memory. Three reasons:

- RAG is read-only from the agent's perspective. The agent usually cannot update the document store as it learns. New facts from today's conversation do not automatically flow back into tomorrow's retrievable knowledge.

- RAG retrieves by similarity, not by relevance-over-time. Semantic search finds chunks that look textually similar to the query. It does not model the evolution of a project, the history of decisions, or the reasons some information supersedes other information.

- RAG retrieves documents, not structured knowledge. The retrieved chunks are raw text. The agent has to reconstruct structure from them every time. There is no typed knowledge base of entities, relationships, and decisions.

RAG is a retrieval layer. Memory uses retrieval, but retrieval alone is not memory.
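The similarity point is easy to see in a toy retriever. The sketch below uses a bag-of-words vector in place of a learned embedding (an assumption made to keep the example self-contained), and the names `embed`, `cosine`, and `retrieve` are hypothetical.

```python
import math
from collections import Counter


def embed(text):
    """Toy bag-of-words vector; a real system would use a learned embedding model."""
    return Counter(text.lower().split())


def cosine(a, b):
    dot = sum(a[t] * b[t] for t in a)       # Counter returns 0 for missing tokens
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query, chunks, k=1):
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


chunks = [
    "the launch date is April 12",          # older statement, since superseded
    "the launch date moved to May 3",       # newer decision
]
top = retrieve("what is the launch date", chunks)
print(top)
```

The retriever returns the April 12 chunk because it shares more words with the query. Nothing in the similarity score knows that the second chunk supersedes the first, which is the gap a memory layer has to fill.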

3. True Long-Term Memory

What users actually want when they complain that the AI forgets is something closer to human long-term memory: a persistent store of facts, decisions, relationships, and context that:

- Survives across sessions, restarts, and app upgrades

- Is updated by the agent as new information comes in

- Can be selectively recalled based on relevance to the current task

- Includes both explicit facts ("the launch date is May 3") and implicit patterns ("the user prefers short messages in the morning")

- Forgets things that become irrelevant, stale, or contradicted

- Is shareable across agents collaborating on the same work

This is a different thing from context windows and RAG. It is a separate architectural layer that uses context and retrieval but is not either of them.

The industry has shipped context and retrieval. Few products have shipped actual memory. This is the gap.

The Five Components of a Real Memory System

If you are going to build memory properly, you need all five of these. Skip any one and you have a degraded system.

Persistence

Memory has to survive across sessions. Obvious, frequently violated. If your "memory" lives in session state, process RAM, or a chat-history array that gets truncated when it hits a size limit, you don't have memory — you have a cache with a short TTL.

Persistence means durable storage: a database, a file, an object store. Something that survives restarts, app upgrades, and the specific chat session that generated the memory. In Taskade Genesis, we store memory in two places for each project: human-readable Markdown files (MEMORY.md) and machine-optimized index files (.tdx).
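As a minimal sketch of the persistence requirement, the example below uses Python's built-in `sqlite3` as the durable store. This is an assumption chosen for brevity; it is not the MEMORY.md and .tdx format described above, and the helper names are hypothetical.

```python
import os
import sqlite3
import tempfile


def open_store(path):
    conn = sqlite3.connect(path)
    conn.execute("CREATE TABLE IF NOT EXISTS memory (key TEXT PRIMARY KEY, value TEXT)")
    return conn


def remember(conn, key, value):
    conn.execute("INSERT OR REPLACE INTO memory VALUES (?, ?)", (key, value))
    conn.commit()                            # durable once committed


def recall(conn, key):
    row = conn.execute("SELECT value FROM memory WHERE key = ?", (key,)).fetchone()
    return row[0] if row else None


path = os.path.join(tempfile.mkdtemp(), "memory.db")

session_one = open_store(path)
remember(session_one, "launch_date", "May 3")
session_one.close()                          # the session ends

session_two = open_store(path)               # a later session, fresh connection
recalled = recall(session_two, "launch_date")
session_two.close()
print(recalled)
```

The test of persistence is the second session: it holds no in-process state from the first, yet the fact is still there.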

Structure

Memory has to be organized. The difference between an unstructured text log and a structured memory store is the difference between "somewhere in the last 10,000 messages you said something about the pricing model" and "the pricing model is X, decided on Y, by Z."

Structure includes:

- Entities. The people, projects, tools, and concepts that recur across work.

- Facts. Discrete assertions the agent can rely on ("the launch date is May 3," "the user prefers async updates").

- Decisions. Choices that were made, with reasons and alternatives considered.

- Relationships. How entities relate to each other (who reports to whom, which project depends on which).

- Temporal context. When each fact was recorded, when it was last relevant, whether it has been superseded.

Flat unstructured text is recoverable via search but is not queryable. Structured memory can be queried by type, by entity, by date, by source. This is the difference between a journal and a database.
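A short sketch of what "queryable" means in practice, assuming a hypothetical `MemoryRecord` type and `query` helper. The field names are illustrative, not a schema taken from any product.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class MemoryRecord:
    kind: str                               # "fact", "decision", "entity", "relationship"
    entity: str                             # what the record is about
    text: str
    recorded_on: date
    superseded_by: Optional[int] = None     # index of the record that replaced this one


def query(records, kind=None, entity=None, since=None, include_superseded=False):
    """Filter by type, entity, and date; hide superseded records by default."""
    results = []
    for record in records:
        if kind is not None and record.kind != kind:
            continue
        if entity is not None and record.entity != entity:
            continue
        if since is not None and record.recorded_on < since:
            continue
        if record.superseded_by is not None and not include_superseded:
            continue
        results.append(record)
    return results


records = [
    MemoryRecord("decision", "pricing", "Pricing model is per-seat", date(2026, 1, 10), superseded_by=1),
    MemoryRecord("decision", "pricing", "Pricing model is usage-based", date(2026, 3, 2)),
    MemoryRecord("fact", "launch", "Launch date is May 3", date(2026, 2, 20)),
]
current = query(records, kind="decision", entity="pricing")
print([r.text for r in current])
```

The query returns only the current pricing decision. The superseded one is still stored and can be pulled back with `include_superseded=True`, which is what temporal context buys you over a flat log.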

Retrieval

Structured, persistent memory is only useful if the right memory gets surfaced at the right moment. This is a retrieval problem, and it is where RAG techniques are genuinely load-bearing.

But memory retrieval is not just semantic similarity search. It is a more nuanced operation:

- Recency bias — more recent memories are usually more relevant, all else equal

- Frequency weighting — memories referenced often are likely important

- Entity alignment — memory about the current entity (person, project, task) gets priority

- Explicit pinning — some memories are marked as "always relevant"

- Recency-of-reference, not just recency-of-creation — a memory touched yesterday matters more than one sitting unused since last year

The retrieval layer is the second-hardest engineering problem in a memory system (after the forgetting layer). Getting it right is the difference between an agent that feels present and one that keeps surfacing irrelevant old context.
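One way to combine the signals above is a weighted score. The sketch below is illustrative only: the weights, the 30-day half-life, the cap on reference count, and the `Candidate` type are all assumptions, not tuned values from a production system.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    text: str
    similarity: float           # semantic similarity to the query, 0..1
    days_since_reference: int   # recency of reference, not of creation
    reference_count: int
    entity: str
    pinned: bool = False


def score(c, current_entity, half_life_days=30.0):
    if c.pinned:
        return float("inf")                              # explicit pinning always surfaces
    recency = 0.5 ** (c.days_since_reference / half_life_days)
    frequency = min(c.reference_count, 10) / 10          # capped so one hot item cannot dominate
    entity_bonus = 1.0 if c.entity == current_entity else 0.0
    # Hypothetical weights; a real system would tune these against an eval set.
    return 0.4 * c.similarity + 0.3 * recency + 0.2 * frequency + 0.1 * entity_bonus


candidates = [
    Candidate("Old kickoff notes", 0.9, 365, 1, "launch"),
    Candidate("Launch moved to May 3", 0.7, 1, 6, "launch"),
    Candidate("User prefers async updates", 0.1, 200, 2, "user", pinned=True),
]
ranked = sorted(candidates, key=lambda c: score(c, "launch"), reverse=True)
print([c.text for c in ranked])
```

The kickoff notes have the highest raw similarity but rank last, because nobody has touched them in a year. Pure similarity search would have put them first.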

Writeback

The agent has to be able to write to memory. Not just the human. The agent.

This is a subtle but critical property. If only the user can update memory — through manually edited system prompts, pinned facts, or user-level memory features — then memory captures only what the user remembers to record. Agents observe far more than users explicitly tell them. A properly instrumented agent will notice that the user consistently chooses option A when offered A and B, will observe that this project's meetings are Tuesdays, will record the names of new collaborators, will update its model of preferred communication style.

Writeback creates a flywheel. The agent's memory improves with every session. More memory leads to better retrieval, which leads to more contextual responses, better interactions, and more valuable memories, and then the cycle repeats.

This is the single biggest architectural difference between memory and RAG. RAG is read-only. Memory is read-write.
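A minimal sketch of the read-write property, using the "user consistently chooses option A" example from above. `ProjectMemory`, `observe_choices`, and the three-observation threshold are hypothetical names and values chosen for illustration.

```python
from collections import Counter


class ProjectMemory:
    """In-memory stand-in for a store that the agent can both read and write."""

    def __init__(self):
        self.facts = {}

    def read(self, key):
        return self.facts.get(key)

    def write(self, key, value, source):
        self.facts[key] = {"value": value, "source": source}


def observe_choices(memory, choices, min_observations=3):
    """Write back a preference once the user has picked the same option often enough."""
    if not choices:
        return
    option, count = Counter(choices).most_common(1)[0]
    if count >= min_observations:
        memory.write("preferred_option", option, source="agent_observation")


memory = ProjectMemory()
observe_choices(memory, ["A", "A", "B", "A"])
print(memory.read("preferred_option"))
```

The user never stated the preference. The agent inferred it and recorded where the inference came from, so the entry can be audited or corrected later.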

Forgetting

Memory without forgetting is not memory. It is a noise accumulator.

Biological memory is brilliant at forgetting. You do not remember what you had for breakfast on April 14, 2019, unless something remarkable happened. You do remember your phone number, your mother's name, and the major decisions of your life. The selective retention is not a bug; it is the feature that makes memory usable.

AI memory systems need analogous mechanisms:

- Temporal decay. Old, unreferenced memories drop in retrieval priority. Not deleted — just less likely to surface.

- Importance weighting. Memories marked as decisions, commitments, or corrections are preserved preferentially.

- Summarization. Detailed day-by-day interactions get compressed into higher-level patterns over time. The agent remembers that the user always wants concise emails rather than remembering each individual email exchange.

- Conflict resolution. When new memories contradict old ones, the old ones are marked superseded rather than both being kept in tension.

- Active pruning. Long-unused, low-importance memories are eventually removed to keep retrieval efficient.

A memory system without forgetting works for the first month, degrades for the next six, and becomes unusable after a year. The forgetting layer is invisible when it works and catastrophic when it does not.
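Three of these mechanisms (temporal decay, conflict resolution, and active pruning) fit in a few lines. This is a sketch under stated assumptions: the 60-day half-life, the 0.05 pruning floor, and the `Memory` type are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Memory:
    text: str
    importance: float           # 0..1; decisions and corrections score high
    days_unreferenced: int
    superseded: bool = False


def strength(m, half_life_days=60.0):
    """Temporal decay: strength halves for every half-life without a reference."""
    return m.importance * 0.5 ** (m.days_unreferenced / half_life_days)


def retrieval_priority(m):
    """Conflict resolution: superseded memories are kept but never surfaced."""
    return 0.0 if m.superseded else strength(m)


def prune(memories, floor=0.05):
    """Active pruning: remove long-unused, low-importance memories."""
    return [m for m in memories if strength(m) >= floor]


memories = [
    Memory("Launch date is April 12", 0.9, 10, superseded=True),
    Memory("Launch date is May 3", 0.9, 10),
    Memory("User said thanks on Jan 4", 0.1, 300),
    Memory("Weekly sync is on Tuesdays", 0.5, 120),
]
kept = prune(memories)
surfaced = sorted((m for m in kept if retrieval_priority(m) > 0), key=retrieval_priority, reverse=True)
print([m.text for m in surfaced])
```

The stale pleasantry is deleted outright, the April 12 date stays in storage but is never surfaced, and the two live memories are ranked by decayed strength.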

A Map of the Five Components

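Before getting into the architecture, here are the five components arranged by what each one solves and what goes wrong when it's missing:

| Component | What it solves | What goes wrong when it is missing |
|---|---|---|
| Persistence | Memory survives sessions, restarts, and app upgrades | You have a cache with a short TTL, not memory |
| Structure | Memory can be queried by type, entity, date, and source | Facts are buried somewhere in thousands of messages |
| Retrieval | The right memory surfaces at the right moment | The agent keeps surfacing irrelevant old context |
| Writeback | The agent records what it observes | Memory captures only what the user remembers to record |
| Forgetting | Stale, superseded, and low-value memories fade | Memory becomes a noise accumulator and degrades over time |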

Skip any of the five and you have a degraded system with a predictable failure mode. That is the whole argument for why memory is infrastructure, not a feature.

The Memory Reanimation Protocol

Inside Taskade Genesis we call our implementation of all five components the Memory Reanimation Protocol. The name is a little grandiose on purpose — it reflects something important about how memory actually works in an agent system.

Memory is not a passive data store. It is not a file you open. When an agent activates inside a project, the memory has to come alive — be loaded, resolved, indexed, and made available to the agent's reasoning. This is an active process, and getting it right is what makes the difference between an agent that feels present and one that feels like a stranger.

Here is the architecture at a high level.

The two-tier store

We store memory in two layers per project:

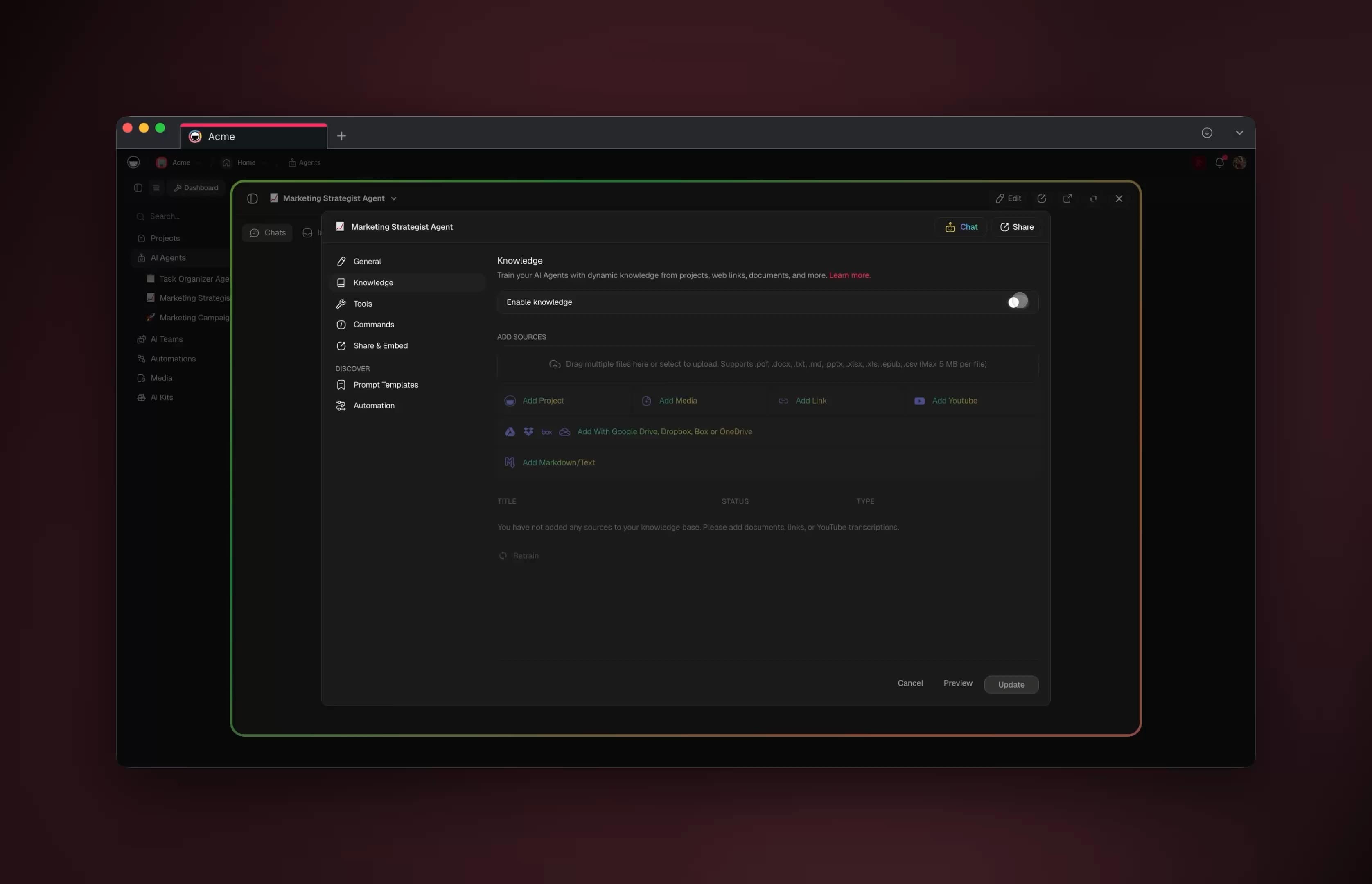

- MEMORY.md: a human-readable Markdown file with structured sections for facts, decisions, entities, relationships, and open questions. Users can read and edit this directly. Agents append to it through controlled writeback operations.

- .tdx: a machine-optimized retrieval index. Embeddings, structured indices, temporal metadata, reference counts. Not human-readable. Regenerated from MEMORY.md when needed.

The two-tier design keeps the memory layer inspectable. A user can open MEMORY.md and see exactly what the agent "knows" about the project. There are no opaque vector blobs the user cannot audit. This is important for trust and for debugging. When an agent produces a surprising response, the user can look at the memory and understand why.
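The key property of the two-tier design is that the machine tier can be rebuilt from the human tier. The sketch below shows that idea only. The `## Section` and `- item` layout is an assumed Markdown shape, and the plain keyword dictionary is a stand-in for the index; neither is the actual MEMORY.md schema or .tdx format.

```python
def parse_memory_md(text):
    """Parse '## Section' headings and '- item' bullets (assumed layout)."""
    sections, current = {}, None
    for line in text.splitlines():
        line = line.strip()
        if line.startswith("## "):
            current = line[3:].lower()
            sections[current] = []
        elif line.startswith("- ") and current is not None:
            sections[current].append(line[2:])
    return sections


def build_index(sections):
    """Stand-in for the machine tier: token -> list of (section, item position)."""
    index = {}
    for name, items in sections.items():
        for i, item in enumerate(items):
            for token in set(item.lower().split()):
                index.setdefault(token, []).append((name, i))
    return index


MEMORY_MD = """\
## Facts
- Launch date is May 3
## Decisions
- Pricing is usage-based
"""
sections = parse_memory_md(MEMORY_MD)
index = build_index(sections)          # can be thrown away and regenerated at any time
print(index["launch"])
```

Because the index is derived, a user edit to the Markdown file is the source of truth: regenerate the index and the agent's view follows.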

The reanimation step

When an agent is activated in a project — whether by the user opening the workspace or by an automation firing — the reanimation step runs:

- Load MEMORY.md and parse its structured sections

- Load the .tdx retrieval index

- Resolve cross-project references (agents can read memory from linked projects if permissioned)

- Build the initial working set: pinned facts, recent decisions, current open questions, entities likely to be relevant

- Inject this working set into the agent's context window along with the user's current request

The agent then operates with a primed memory. It does not need to be told, in the user's message, what the project is about. It already knows.
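The last two steps, building the working set and injecting it, can be sketched as follows. The dictionary layout, the "two most recent decisions" rule, and the function names are assumptions made for illustration; loading and permission checks are taken as already done.

```python
def build_working_set(memory, linked=(), max_items=5):
    """Pinned facts first, then the most recent decisions, then open questions."""
    pinned = list(memory.get("pinned", []))
    for other in linked:                      # linked projects, assumed already permissioned
        pinned += other.get("pinned", [])
    recent_decisions = memory.get("decisions", [])[-2:]
    working = pinned + recent_decisions + memory.get("open_questions", [])
    return working[:max_items]


def prime_context(working_set, user_request):
    """Place the working set ahead of the user's request in the prompt."""
    lines = ["Project memory:"] + [f"- {item}" for item in working_set]
    return "\n".join(lines + ["", f"User request: {user_request}"])


project = {
    "pinned": ["Launch date is May 3"],
    "decisions": ["Use per-seat pricing", "Switch to usage-based pricing", "Ship the beta to 50 users"],
    "open_questions": ["Who owns the launch email?"],
}
linked = [{"pinned": ["Brand voice: concise"]}]

working = build_working_set(project, linked)
prompt = prime_context(working, "Draft the launch email")
print(prompt)
```

The user's message is five words long, but the prompt the model sees already carries the launch date, the brand voice, the latest pricing decision, and the open question about ownership.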

The writeback loop

During the session, the agent continuously observes and records:

- Facts stated by the user: appended to the facts section of MEMORY.md, indexed in .tdx

- Decisions made: recorded with alternatives and reasoning

- New entities mentioned: added to the entities graph

- Observed patterns: summarized and stored (e.g., "user prefers short morning messages")

- Corrections: old memories marked superseded, new ones take priority

The writeback is rate-limited and passes through a small amount of filtering to avoid memory spam. We do not want the agent recording "user said hello" as a fact. There is a lightweight classifier that decides whether an observation is memory-worthy. This is an imperfect system and we keep iterating on it.
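A rough sketch of a rate-limited, filtered write path. The keyword heuristic below is a deliberately crude stand-in for the classifier, and `WritebackGate` and its per-session limit are hypothetical; the real filter is not described in enough detail here to reproduce.

```python
class WritebackGate:
    """Rate-limits writes and filters out observations that are not memory-worthy."""

    def __init__(self, max_writes_per_session=20):
        self.max_writes = max_writes_per_session
        self.writes = 0

    def is_memory_worthy(self, observation):
        # Hypothetical heuristic: at least three words and not pure small talk.
        small_talk = {"hello", "hi", "thanks", "ok", "bye"}
        words = observation.lower().strip(".!?").split()
        return len(words) >= 3 and not set(words) <= small_talk

    def submit(self, observation, store):
        if self.writes >= self.max_writes or not self.is_memory_worthy(observation):
            return False                      # dropped: over the limit or not worth keeping
        store.append(observation)
        self.writes += 1
        return True


gate = WritebackGate(max_writes_per_session=2)
store = []
observations = ["hello", "The launch date is May 3", "Meetings are on Tuesdays", "Sarah joined the project"]
results = [gate.submit(o, store) for o in observations]
print(results, store)
```

"hello" is filtered as small talk, the next two observations are written, and the fourth is dropped by the rate limit even though it is memory-worthy. That last case shows the trade-off: a tight limit protects against spam at the cost of occasionally losing a real fact.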

The forgetting policy

Memories decay along several dimensions:

- Age — older memories get lower retrieval priority unless refreshed by reference

- Reference count — frequently referenced memories stay active

- Explicit pinning — the user can mark memories as permanent

- Supersedence — when a new memory contradicts an old one, the old one is marked superseded but preserved for audit

- Summarization — when the memory store grows too large, detailed individual records are summarized into higher-level patterns and the details are archived

The policy is tuned per project type. A project with stable long-term context (a personal knowledge base, for example) has slower decay. A project with fast-moving day-to-day operations (a launch plan) has faster decay.
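Per-project tuning can be as simple as a half-life lookup. The project type names and the half-life values below are illustrative assumptions, not the settings Taskade Genesis uses.

```python
# Hypothetical half-lives: how long until an unreferenced memory loses half its weight.
DECAY_HALF_LIFE_DAYS = {
    "knowledge_base": 365.0,    # stable long-term context: slow decay
    "launch_plan": 14.0,        # fast-moving operations: fast decay
}


def retrieval_weight(project_type, days_since_reference, pinned=False):
    """Weight in (0, 1]; pinned memories do not decay."""
    if pinned:
        return 1.0
    half_life = DECAY_HALF_LIFE_DAYS[project_type]
    return 0.5 ** (days_since_reference / half_life)


for project_type in DECAY_HALF_LIFE_DAYS:
    print(project_type, round(retrieval_weight(project_type, 14), 3))
```

After two weeks without a reference, a memory in the launch plan has lost half its weight, while the same memory in a knowledge base has barely moved.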

How Taskade Genesis Compares to Other Memory Systems

There are several adjacent products that have shipped partial memory systems. It is worth mapping them against the five components.

| Product | Persistence | Structure | Retrieval | Writeback by agent | Forgetting | Five-of-five? |

|---|---|---|---|---|---|---|

| ChatGPT Memory (user-level, Feb 2026) | ✓ | Unstructured | Implicit | ⚠︎ (model updates user-level facts, not project) | Limited (user delete) | No |

| Claude Projects + Memory (Mar 2026) | ✓ | Partial | Implicit | ⚠︎ (memory writes are model-initiated) | Limited | No |

| Cursor rules (.cursorrules) | ✓ | User-defined | No | No | Manual | No |

| Notion AI + Custom Agents | ✓ (workspace) | Partial (DB rows) | ✓ (across workspace) | ⚠︎ (agent can edit pages) | No | No |

| LangChain Memory classes | Depends on store | Minimal | ✓ | ✓ | Manual | No |

| Mem0 (open-source memory layer) | ✓ | ✓ | ✓ | ✓ | ⚠︎ (decay policies in beta) | ~Four of five |

| Taskade Genesis (MRP) | ✓ | ✓ (facts, decisions, entities, relationships, temporal) | ✓ (semantic + structured) | ✓ (agents write back through a controlled classifier) | ✓ (age, reference count, pinning, supersedence, summarization) | Yes — with forgetting still improving |

Every product in this list is useful. None of them, including ours, has solved memory completely. The gap between where Taskade Genesis is today and the ideal memory system is still significant. But we are among the few products that ship all five components — persistence, structure, retrieval, writeback, forgetting — rather than shipping two or three and calling it memory.

Memory as Product, Not Plumbing

If you Google "AI agent memory" in April 2026 the first page is almost entirely framework literature — Mem0 vs Letta, Zep vs LangMem, HNSW indexes, vector-DB choice, decay policies, cosine similarity. The implicit audience is an engineer building a memory layer. That is a real audience; it is not the only one.

The audience nobody is writing for is the operator who just wants memory that they can see. Not a vector DB they have to trust. Not an opaque "the model remembers you" checkbox. An actual page they can open, read, edit, pin, delete. Memory as a UI.

| | Framework memory | Taskade Genesis memory |
|---|---|---|
| Where is it? | A vector DB | A Taskade Project |
| What does it look like to a human? | An embedding row in Postgres | A document you open at /p/{projectId} |
| Can the user edit a specific fact? | Usually no; writes are model-initiated | Yes; memory is a first-class doc |
| What happens when an employee leaves? | Custom API, if the framework supports it | Reassign ownership, archive the Project |
| Can an agent cite which memory powered a reply? | Only via raw text provenance if hacked in | Yes; agents link to the Project URL the fact came from |

The EVE meta-agent's own memory ships as Taskade Projects under a projects/memories folder. The workspace eats its own dogfood: our agent uses the exact memory primitive we sell.

Almost every "agent memory" framework is optimized for engineers wiring a layer under their own agent. Taskade Genesis optimizes for the human who has to live with the memory afterward. Both are legitimate; they are not the same product. Taskade owns the second frame because Projects already existed as a readable, editable, permissioned surface — we just taught the agent to treat them as memory.

Convergent Validation: Four Independent Voices, One Memory Shape

The most interesting signal that this framing is right is that four independent camps converged on nearly identical architectural primitives in the first four months of 2026, without coordination:

| Camp | Public artifact | The primitive they shipped |

|---|---|---|

| Andrej Karpathy | autoresearch (open source, Mar 2026) | program.md, a human-editable instruction file, as the anchor for an edit / train / evaluate loop |

| HKUDS research | AutoAgent | Self-managed memory + Agentic-RAG that lets editor/workflow agents update their own context |

| Anthropic | Claude Code MEMORY.md + Skills | Markdown memory file per project + reusable skill bundles |

| LangChain (Harrison Chase) | Deep Agents framework | File-system access as a first-class agent primitive — "giving the LLM more control over its environment turns out to be really good" |

| LTM "columnar cortex" paper | Academic research, early 2026 | Graph-shaped retrieval over structured memory |

Strip the branding and every one of these is describing the same three things: a small human-editable instruction artifact, a persistent structured memory layer, and a graph-or-file view of that memory that both humans and agents can read.

The shape is the same whether you call it Workspace DNA (▲ Memory ↔ ■ Intelligence ↔ ● Execution), the Genesis Equation, the autoresearch triplet, or Deep Agents. Four independent camps, converging on it, without coordination, in the same four months. That is how you know the architecture is load-bearing — and why building against this shape today, on a substrate where all four primitives are already wired, compounds faster than building the substrate yourself.

Candor: our forgetting policy is the weakest part of the system. Summarization quality is inconsistent. We sometimes over-prune. We sometimes under-prune. We are iterating, and I expect the forgetting layer to improve substantially over the next six months. This is the hardest part of memory by a wide margin.

Why Memory Is Load-Bearing

The Genesis Equation can be read as a claim about memory. The equation is P × A mod Ω — Projects times Agents, modulo Organizational context. P is memory. Every term in the equation multiplies against it.

This means that improving memory quality has a multiplicative effect on the whole system. Double memory quality and you double agent effectiveness. Halve memory quality and you halve it. The agent's intelligence is bottlenecked by what it can remember about the context it is operating in.

This is why we invest disproportionately in memory. It is not the flashiest work. Nobody demos their memory architecture at a product launch. But the activation gap, the retention curve, and the word-of-mouth growth rate of Taskade Genesis all correlate more tightly with memory improvements than with any other category of change we make. When users say "this feels like a real assistant" — and some do, which is gratifying — the underlying reason is almost always that the memory layer is doing its job. The model is the same frontier model everybody else has. The memory is what makes the experience different.

What Good Memory Feels Like

Since this is a technical piece in a generally technical voice, let me close with a user-facing observation.

When memory is working well, the user stops noticing it. That is the goal. The experience is not "wow, the AI remembered that" — the experience is the absence of the friction that used to exist, the absence of re-explaining context, the absence of pasting the same background into every session. You open the workspace and the agent just knows what you are working on. You mention Sarah and the agent knows who Sarah is. You reference last week's decision and the agent knows which decision. You change your mind on something and the agent updates its understanding without drama.

This is what our best users experience, in our best projects, when the memory layer is at its best. It is not the majority of sessions yet. It is enough of them that we know the thing we are building is real.

When memory is broken, you get what everybody complains about: every session is a stranger. You spend the first three minutes of every interaction explaining things the agent should already know. You become the integration layer between sessions, holding the context in your own head and pasting it back in each time you start over.

The difference between these two experiences is entirely the memory layer. The models are the same. The UI is the same. What changes is whether the agent's memory is alive or dead.

The protocol's job is to keep it alive.

What's Next

A short roadmap, for builders who are thinking about this in their own products.

- Better forgetting. As noted, this is our weakest component and the biggest planned investment.

- Cross-project memory federation. Some facts are person-level (preferences, communication style) rather than project-level. Our current implementation is project-scoped; cross-project federation with proper permissioning is in development.

- Memory-aware retrieval evaluation. Measuring retrieval quality empirically rather than by vibes. This involves building eval sets specific to memory operations, which is itself a research problem.

- Multi-agent shared memory. When multiple agents collaborate on a project, they need shared memory they can all read and selectively write to. The permission model here is subtle and we are iterating.

- User-visible memory debugging. When an agent produces a surprising response, users should be able to ask "why did you think that?" and get a trace back to the memory that informed it. This is partly an interpretability problem and partly a UI problem.

Each of these is a months-long project. Memory is not a feature you ship in a sprint. It is infrastructure you build and refine for years.

Closing

The industry has confused context windows and RAG for memory for long enough. Users have told us, through their complaints and their usage patterns, what they actually need. The gap between what has been shipped and what has been needed is the most important unbuilt piece of AI infrastructure in 2026.

At Taskade we have built some of it. Others have built some. There is a lot left.

If you are building an agent product, spend disproportionately on memory. If you are a user evaluating agent products, ask what the memory architecture is and listen for the five components. If the answer is "context windows" or "RAG," keep shopping.

- Find the substrate.

- Make it persist.

- Make it forget.

- Then everything else multiplies.

Deeper Reading

- The Genesis Equation: P × A mod Ω — The architectural context for why memory is the P factor

- The Execution Layer: Why the Chatbot Era Is Over — Why chat interfaces structurally cannot have memory

- Doug Engelbart's 1968 Demo Was Taskade — The original vision of persistent, shared context

- What Doraemon Taught Me About Building AI Agents — Why companion agents need memory more than any other architecture

- The 27-Year Accident — The pattern of "one missing substitution" applied to memory

- Software That Runs Itself — The product thesis memory makes possible

John Xie is the founder and CEO of Taskade. He has spent more of the last two years thinking about memory architecture than any reasonable person should. He is unrepentant.


Frequently Asked Questions

Why do AI agents forget?

AI agents forget because most implementations confuse three different things: context windows, retrieval-augmented generation (RAG), and actual memory. A context window is the attention span of a single model call — everything the model is aware of in this specific completion, limited by token budget and thrown away when the session ends. RAG is a retrieval layer that searches external documents and injects relevant chunks into the context window at query time. Neither is memory in the sense that humans mean the word. Real memory requires persistent, structured storage that accumulates over time, can be selectively recalled, and is updated by the agent as new information arrives.

What's the difference between context windows and memory?

A context window is the set of tokens a language model can attend to in a single forward pass. It typically ranges from a few thousand tokens in older models to a million or more in modern frontier models. It is temporary — when the session ends or the window fills up, earlier content is lost. Memory, by contrast, is persistent storage that survives sessions and accumulates over weeks or months. Context windows are working memory; true memory is long-term memory. The industry has largely been building bigger context windows and calling it memory, but a million-token window that empties at the end of every session is not memory — it's a bigger whiteboard.

What is RAG and why is it not a full memory solution?

RAG — Retrieval-Augmented Generation — is a technique where an external document store is searched at query time and relevant excerpts are injected into the model's context window. It's a powerful pattern for grounding model outputs in specific source material, but it isn't memory for several reasons. RAG is read-only from the agent's perspective; the agent usually can't write new information back. RAG retrieves based on semantic similarity, which is not how human memory works. RAG doesn't model the evolution of ideas or decisions over time. And RAG retrieves documents, not structured facts or relationships. A full memory system uses RAG as one component but surrounds it with writeback, structure, and temporal awareness.

What are the five components of a real memory system?

A real AI memory system has five components: Persistence (memory survives across sessions and restarts), Structure (memory is organized into queryable categories like facts, decisions, entities, and relationships, not just undifferentiated text), Retrieval (the right memory can be surfaced at the right moment without flooding the context window), Writeback (the agent can update memory as new information arrives), and Forgetting (memory has a decay or pruning policy because unlimited accumulation becomes unusable).

What is the Memory Reanimation Protocol?

The Memory Reanimation Protocol is the architecture we built inside Taskade Genesis to give agents persistent, structured memory that survives across sessions. It uses a two-tier design: MEMORY.md files store human-readable structured facts and decisions per project, while .tdx files store machine-optimized retrieval indices. When an agent activates inside a project, the protocol reanimates the relevant memory — loading context from persistence, resolving references, and preparing the working set before the agent takes its first action. The name reflects that memory is not a passive data store but an active process.

How do Claude Projects, Cursor rules, and ChatGPT memory compare?

Each addresses a different slice of the memory problem. Claude's Projects feature provides a persistent context pool shared across chats within a project. Cursor's .cursorrules files provide persistent instructions for a codebase. ChatGPT's memory feature stores user-level facts across conversations. These are all useful for their specific use cases, but none of them provide project-level structured memory shared between multiple agents working together. The Memory Reanimation Protocol in Taskade Genesis is closer to a full memory system — it's project-scoped, multi-agent, structured, and updatable by agents themselves rather than only by the user.

Why is memory the most load-bearing component of an AI agent system?

Because every other component's effectiveness scales with memory quality. An agent with no memory is a stranger you re-explain your work to every session. An agent with bad memory is actively harmful: it remembers things wrong, surfaces irrelevant context, or misses critical decisions. An agent with excellent memory becomes a teammate who knows what you decided last month, why, and what changed since. The Genesis Equation (P × A mod Ω) shows this mathematically: P (memory) multiplies A (intelligence), so doubling memory quality doubles agent effectiveness. Memory is not a feature; it is the substrate everything else sits on.

What is the difference between short-term and long-term memory in AI agents?

Short-term memory in an AI agent corresponds to the context window — what the agent is aware of in the current turn or session. Long-term memory corresponds to persistent, structured storage that accumulates across sessions. In biological terms, short-term memory is what you're holding in mind right now; long-term memory is what you know about your life, your work, and your relationships. Current AI systems are overwhelmingly weighted toward short-term memory (huge context windows) and weak on long-term memory (persistent structured storage). The Memory Reanimation Protocol is designed to correct this imbalance.

Why does an AI agent need to forget?

An AI agent needs to forget because unlimited accumulation of memory becomes unusable. Without a forgetting policy, memory grows indefinitely, retrieval latency increases, noise crowds out signal, and the agent becomes slower and less accurate over time. Biological memory solves this with decay and consolidation — unimportant details fade, while important patterns are reinforced. AI memory systems need analogous mechanisms: temporal decay, importance weighting, summarization, and active pruning. A memory system without forgetting is a memory system that will eventually strangle itself.

How does the Memory Reanimation Protocol work in practice?

When a user or agent activates inside a Genesis project, the protocol runs in the background to reanimate the relevant memory layer. It reads the project's MEMORY.md file for human-readable structured facts, loads the .tdx retrieval index for fast semantic search, resolves any cross-project references, and prepares a working set of the most relevant memories for the current task. The agent then operates with this reanimated memory as its ongoing context. As the agent works, any decisions, new facts, or important observations are written back to MEMORY.md and indexed in .tdx, so the memory grows over time.