The Hype and the Letdown

Every era of technology has its shiny demo.

Today, it is the chatbot.

Type a question, watch AI type back. Screenshot it, share it, call it the future.

But here is the truth: chatbots are demos. They are clever, they are flashy, and they collapse under the weight of real work. Chat is not execution. Conversation is not collaboration.

TL;DR: Chatbots respond in conversation windows. AI agents execute in workspaces. The EPICS Agent benchmark shows frontier models complete real professional tasks only 24% of the time because they lack execution infrastructure. Taskade Genesis provides that infrastructure: Memory (projects), Intelligence (22+ agent tools), and Execution (100+ automations). Companies like Monday.com have already replaced entire teams with agents. Start building with agents ->

Projects do not move forward because of a clever conversation.

Businesses do not scale because of screenshots. Execution is what matters.

And that is where agents come in, as teammates that actually ship.

The 8-Dimension Comparison: Chatbots vs. AI Agents

Before diving into why chatbots fail, here is the complete comparison across every dimension that matters for real work.

| Dimension | Chatbot | AI Agent (Taskade Genesis) |
|---|---|---|
| Memory | Stateless (forgets between sessions) | Persistent (remembers across sessions, projects, and conversations) |
| Scope | Single conversation window | Entire workspace with projects, databases, and files |
| Execution | Text output only | Creates tasks, sends emails, updates databases, triggers workflows |
| Integration | None (copy-paste to other tools) | 100+ native integrations (Slack, Gmail, HubSpot, Stripe, and more) |
| Autonomy | Waits for each prompt | Runs on triggers, schedules, and events without human input |
| Collaboration | One user, one thread | Multi-agent teams working on shared workspace data |
| Learning | No context between sessions | Trained on workspace knowledge, synced anywhere from daily to real time |
| Reliability | Hallucinates freely, no guardrails | Grounded in workspace data with tool-based verification |

The gap is not about intelligence. Both chatbots and agents use the same frontier models from OpenAI, Anthropic, and Google. The gap is about infrastructure: what surrounds the model and determines whether its output becomes action.

Why Chatbots Fail at Real Work

Chatbots break down the moment you move from conversation to execution:

- Unreliable: They hallucinate, contradict themselves, and lose context.

- Isolated: Stuck in a window, disconnected from tools, projects, and workflows.

- Passive: They wait for prompts. No initiative, no monitoring, no loops.

- Output-Only: Text instead of systems. Suggestions instead of solutions.

The result? Endless conversations, zero execution.

The EPICS Agent benchmark quantified this gap: frontier models score above 90% on standard benchmarks but complete real professional tasks only 24% of the time. The failures are not about intelligence. They are about execution and orchestration. Agents get lost after too many steps, loop on approaches that already failed, and lose track of what they were supposed to be doing. The fix is not a better model. It is a better harness: the infrastructure around the model that manages context, tools, recovery, and state.
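
To make "harness" concrete, here is a minimal sketch in Python. It is not any vendor's implementation, and every name in it (`HarnessState`, `run_with_harness`, `propose`, `execute`, `max_steps`) is hypothetical. It only illustrates three of the failure modes described above: a step budget so the agent cannot wander forever, a record of failed approaches so they are not retried, and the goal held in explicit state so it is never lost.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class HarnessState:
    """Explicit state kept outside the model (hypothetical structure)."""
    goal: str
    steps_taken: int = 0
    failed_approaches: set = field(default_factory=set)
    result: Optional[str] = None


def run_with_harness(
    goal: str,
    propose: Callable[[HarnessState], str],      # stand-in for the model
    execute: Callable[[str], Optional[str]],     # stand-in for a tool call
    max_steps: int = 5,                          # assumed step budget
) -> HarnessState:
    state = HarnessState(goal=goal)
    while state.steps_taken < max_steps and state.result is None:
        approach = propose(state)
        state.steps_taken += 1
        if approach in state.failed_approaches:
            # Recovery rule: never re-run an approach that already failed.
            continue
        outcome = execute(approach)
        if outcome is None:
            state.failed_approaches.add(approach)
        else:
            state.result = outcome
    return state


# Toy stand-ins so the sketch runs without a model or real tools.
def toy_propose(state: HarnessState) -> str:
    candidates = ["guess", "search_docs", "ask_owner"]
    for candidate in candidates:
        if candidate not in state.failed_approaches:
            return candidate
    return candidates[-1]


def toy_execute(approach: str) -> Optional[str]:
    return "done via search_docs" if approach == "search_docs" else None


final = run_with_harness("close ticket", toy_propose, toy_execute)
print(final.result, final.steps_taken, final.failed_approaches)
```

In this toy run the first approach fails, is recorded, and the second succeeds. If every approach fails, the loop still stops at `max_steps` instead of spinning indefinitely.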

The companies that understand this are already acting on it. Monday.com CEO Eran Zinman revealed on the 20VC podcast (2026) that Monday.com replaced its entire 100-person SDR team with AI agents, cutting response times from 24 hours to 3 minutes and improving conversion rates across every metric. That is what the shift from chatbots to agents looks like at enterprise scale: not better conversations, but better outcomes.

What Real Execution Requires

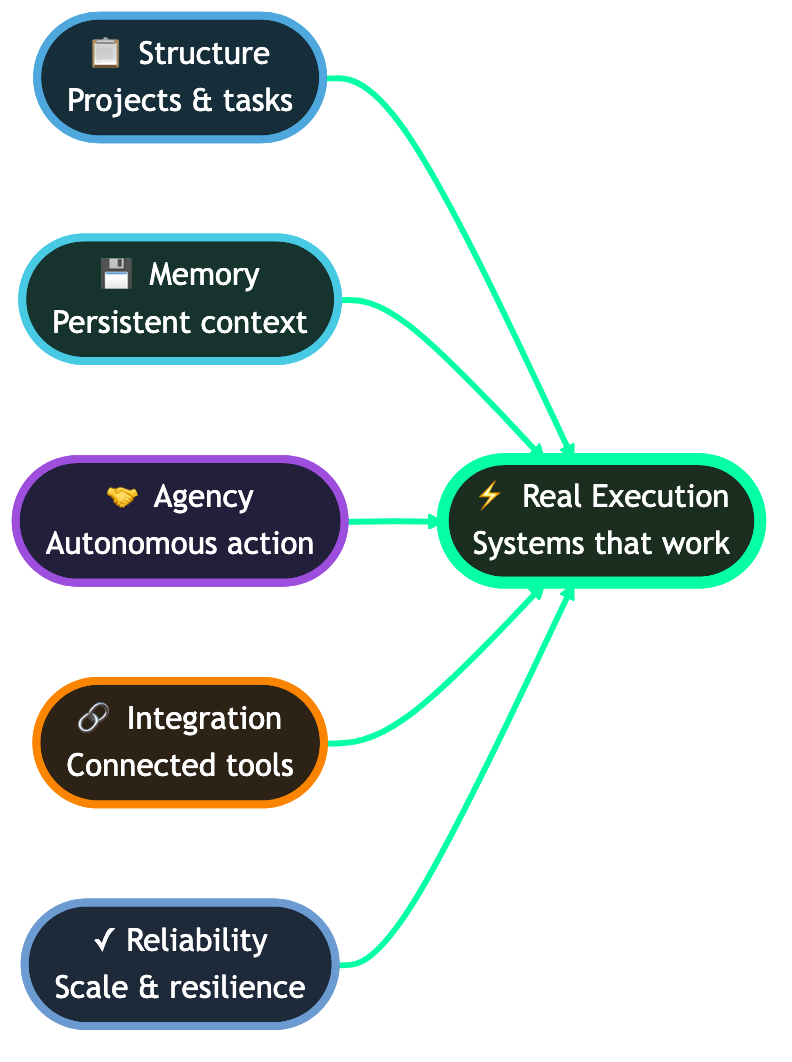

Execution is a system, not a chat. It requires:

- Structure -- projects, tasks, dependencies, deadlines

- Memory -- persistence across time and context

- Agency -- delegation, coordination, autonomous action

- Integration -- direct connection to your workflows and tools

- Reliability -- resilience at scale and under failure

That is not a chatbot. That is a workspace that thinks, remembers, and acts.

| Requirement | What It Means | How Taskade Delivers |
|---|---|---|
| Structure | Tasks, dependencies, deadlines | 7 project views: List, Board, Calendar, Table, Mind Map, Gantt, Org Chart |
| Memory | Persistence across sessions | Workspace DNA Memory layer with auto-syncing knowledge |
| Agency | Autonomous delegation | AI agents with 22+ built-in tools and slash commands |
| Integration | Connection to your stack | 100+ integrations across 10 categories |
| Reliability | Resilient under failure | Background agents (Pro+) with persistent execution |

Agents: The Leap from Demo to Execution

An AI agent is much more than a chatbot. It is a teammate.

- It remembers context across every interaction.

- It plans multi-step workflows and decomposes complex goals.

- It acts continuously, not reactively.

- It collaborates with humans and other agents.

- It builds systems that persist and scale.

This is the foundation of Taskade Genesis.

The execution layer for human + AI collaboration.

The Economic Case for Agents

The shift from chatbots to agents is not just a technology story. It is an economics story. Companies are already replacing entire functions with agent-based systems and measuring the results.

| Company | Action | Result | Source |
|---|---|---|---|
| Monday.com | Replaced 100-person SDR team with AI agents | Response time: 24 hours to 3 minutes. Conversion rates improved across all metrics. | 20VC podcast (2026) |
| Block (Square) | 40% workforce reduction, replaced support and SDR teams with agents | Cost reduction + service quality maintained | 20VC analysis (2026) |
| Anthropic (internal) | Engineers run 10+ agent threads in parallel | Engineers direct agents and review output instead of writing code | Claude Code team reveal |
| OpenAI (internal) | 95% of engineers use Codex daily, 100% of PRs reviewed by AI | Agent-assisted development is the default operating model | Sherwin Wu presentation |

The pattern is clear: companies are not buying chatbot subscriptions. They are deploying agent infrastructure that replaces headcount with execution capacity.

The cost comparison for a 10-person team:

| Function | Human Cost (Annual) | Agent Cost (Taskade Pro) |
|---|---|---|
| SDR team (5 people) | $250,000-500,000 | $16/user/month (agents included) |
| Customer support (3 people) | $120,000-240,000 | Agents handle tier-1 autonomously |
| Report generation (weekly) | 20+ hours/week manual work | Automated with dashboard agents |
| Lead qualification | 4-8 hours/day manual scoring | AI agent scores instantly on submission |
| Follow-up sequences | Manual email chains | Triggered automations with personalization |
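
For readers who want to check the arithmetic, here is a rough sketch in Python using only the figures in the table. It assumes 10 seats (to match the 10-person team above) billed at the listed $16/user/month for 12 months. It compares list price against salary ranges only, and leaves out setup time, human review of agent output, and any usage-based charges, so treat it as an order-of-magnitude illustration rather than a budget.

```python
def annual_seat_cost(price_per_user_month: int, seats: int) -> int:
    """List-price seat cost for one year, in USD."""
    return price_per_user_month * seats * 12


PRO_PRICE = 16   # USD per user per month, from the table
SEATS = 10       # assumption: one seat per person on a 10-person team

# Annual human cost ranges (low, high) in USD, from the table.
HUMAN_COST_RANGES = {
    "sdr_team": (250_000, 500_000),
    "customer_support": (120_000, 240_000),
}

seat_cost = annual_seat_cost(PRO_PRICE, SEATS)
human_low = sum(low for low, _ in HUMAN_COST_RANGES.values())
human_high = sum(high for _, high in HUMAN_COST_RANGES.values())

print(f"Seat cost: ${seat_cost:,}/year")
print(f"Human cost for the two salaried rows: ${human_low:,}-${human_high:,}/year")
```

Under these assumptions the seat cost comes to $1,920 per year, against $370,000-740,000 for the two salaried rows.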

The Scale of Genesis Power

Genesis is not one clever agent. It is an execution platform.

| Genesis Capabilities | How It Works |
|---|---|
| 500+ Expert-Crafted Prompts | Unlock templates across 15+ functions: sales, marketing, engineering, ops, legal, and more. |
| Smart File and PDF Intelligence | Instantly extract, summarize, and integrate file data into your workflows. |
| Automated AI Reporting Pipelines | Go from spreadsheet to dashboard to scheduled report, fully automated. |
| 100+ App Integrations | Automate tasks across Google Sheets, Slack, Gmail, Notion, Figma, Salesforce, and more. |
| Modular Workspaces by Design | Each space runs as its own Genesis app, focused, scalable, and purpose-built. |
| 7-Tier Access Control | Owner, Maintainer, Editor, Commenter, Collaborator, Participant, Viewer for precise permissions. |

This is what execution at scale looks like.

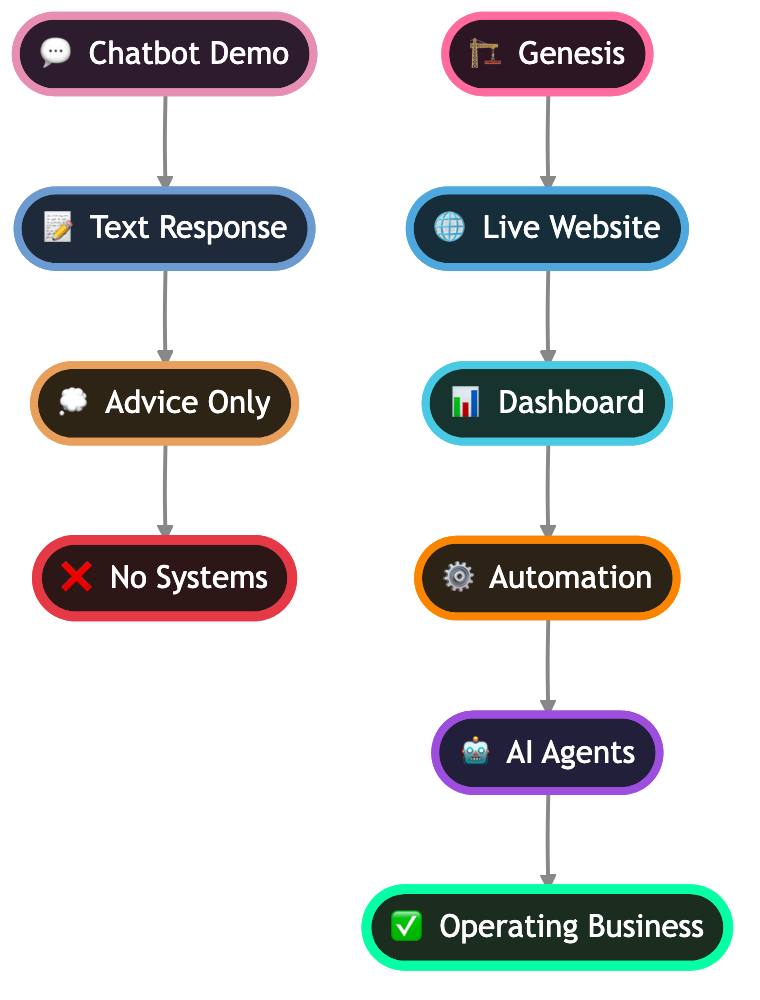

The Execution Walkthrough: From Prompt to Running Business

Here is exactly how an agent-based system executes a complete business workflow, step by step. This is not a demo. This is how real teams use Taskade Genesis today.

Scenario: Automated Lead-to-Client Pipeline

Step 1: Lead submits form. The form lives on a published Genesis app with a custom domain. No third-party form builder needed.

Step 2: Scoring agent evaluates. The agent reads the submission, checks against your ideal customer profile stored in workspace memory, and assigns a score (1-100). This happens in seconds, not hours.

Step 3: Qualification agent routes. Based on the score, the lead enters the correct pipeline stage. Hot leads get fast-tracked. Cold leads enter a nurture sequence.

Step 4: Execution triggers fire. Simultaneously: a personalized follow-up email sends, the CRM pipeline updates, a booking link delivers, and your team gets a Slack notification. All through 100+ native integrations.

Step 5: Memory updates. Every interaction, email open, and response is stored in the workspace. The agent has full context for the next interaction.

Step 6: Re-engagement loop. Cold leads are not forgotten. The re-engagement agent checks back at configured intervals, sending new value propositions based on updated workspace intelligence.

Total human time required: zero after initial setup.
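
Steps 2 through 4 can be sketched in a few lines of Python. This is an illustration of the control flow only, not how Genesis implements it: a simple rule-based scorer stands in for the scoring agent, and the ideal customer profile (`ICP`), the weights, the `HOT_THRESHOLD` of 70, the action names, and the choice to fire the Step 4 actions only for fast-tracked leads are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Lead:
    email: str
    company_size: int
    industry: str


# Hypothetical ideal customer profile; in the walkthrough this lives in workspace memory.
ICP = {"industries": {"software", "ecommerce"}, "min_company_size": 20}
HOT_THRESHOLD = 70  # assumed cutoff between hot and cold leads


def score_lead(lead: Lead) -> int:
    """Step 2: score a lead from 1 to 100 against the ICP (rule-based stand-in)."""
    score = 1
    if lead.industry in ICP["industries"]:
        score += 50
    if lead.company_size >= ICP["min_company_size"]:
        score += 40
    return min(score, 100)


def route_lead(score: int) -> str:
    """Step 3: hot leads are fast-tracked, cold leads enter nurture."""
    return "fast_track" if score >= HOT_THRESHOLD else "nurture"


def actions_for(stage: str) -> list:
    """Step 4: names of the actions to trigger (placeholders, not real integrations)."""
    if stage == "fast_track":
        return ["send_follow_up_email", "update_crm", "send_booking_link", "notify_slack"]
    return ["enroll_nurture_sequence", "schedule_reengagement"]


def process(lead: Lead) -> dict:
    score = score_lead(lead)
    stage = route_lead(score)
    return {"email": lead.email, "score": score, "stage": stage, "actions": actions_for(stage)}


hot = process(Lead("cto@example.com", company_size=50, industry="software"))
cold = process(Lead("owner@example.com", company_size=5, industry="retail"))
print(hot)
print(cold)
```

Keeping scoring, routing, and action selection as separate functions mirrors the walkthrough: each step can be swapped for an agent or an integration without touching the others.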

Demo vs Execution: Real Scenarios

Software Consultancy

Chatbot World: tips on websites, CRMs, and client acquisition.

Genesis World:

- Website with proposals + AI sales assistant

- Dashboard tracking leads and profitability

- Workflows automating follow-ups and onboarding

- Agents for sales, delivery, and client success

Outcome: a consultancy system, not a plan.

E-Commerce Store

Chatbot World: advice on ads and SEO.

Genesis World:

- Website storefront with AI customer support

- Dashboard for sales funnels and inventory

- Workflows for abandoned carts, shipping, notifications

- Agents monitoring reviews, ads, and suppliers

Outcome: scale without headcount.

Startup Fundraise

Chatbot World: a checklist of fundraising tips.

Genesis World:

- Investor portal with AI assistant

- Dashboard tracking outreach and milestones

- Workflows for follow-ups and scheduling

- Agents for research, decks, and financial models

Outcome: a fundraising machine, not advice.

The Power of Integrated Intelligence

The difference is integration.

- Websites feed leads directly into dashboards.

- Workflows trigger off real project data.

- Agents operate with full business context, not just a prompt.

This is unified execution intelligence: systems that remember, connect, and act.

Chatbots cannot do that. Genesis does.

| Capability | Standalone Chatbot | Genesis Integrated Agent |
|---|---|---|
| Read project data | No access | Full workspace context |
| Create tasks | Cannot | Creates, assigns, sets deadlines |
| Send emails | Cannot | Gmail, Outlook via automations |
| Update CRM | Cannot | HubSpot, Salesforce integration |
| Schedule meetings | Cannot | Calendar integration |
| Generate reports | Text-only summary | Live dashboard with charts |
| Run while you sleep | Stops when chat closes | Background execution (Pro+) |

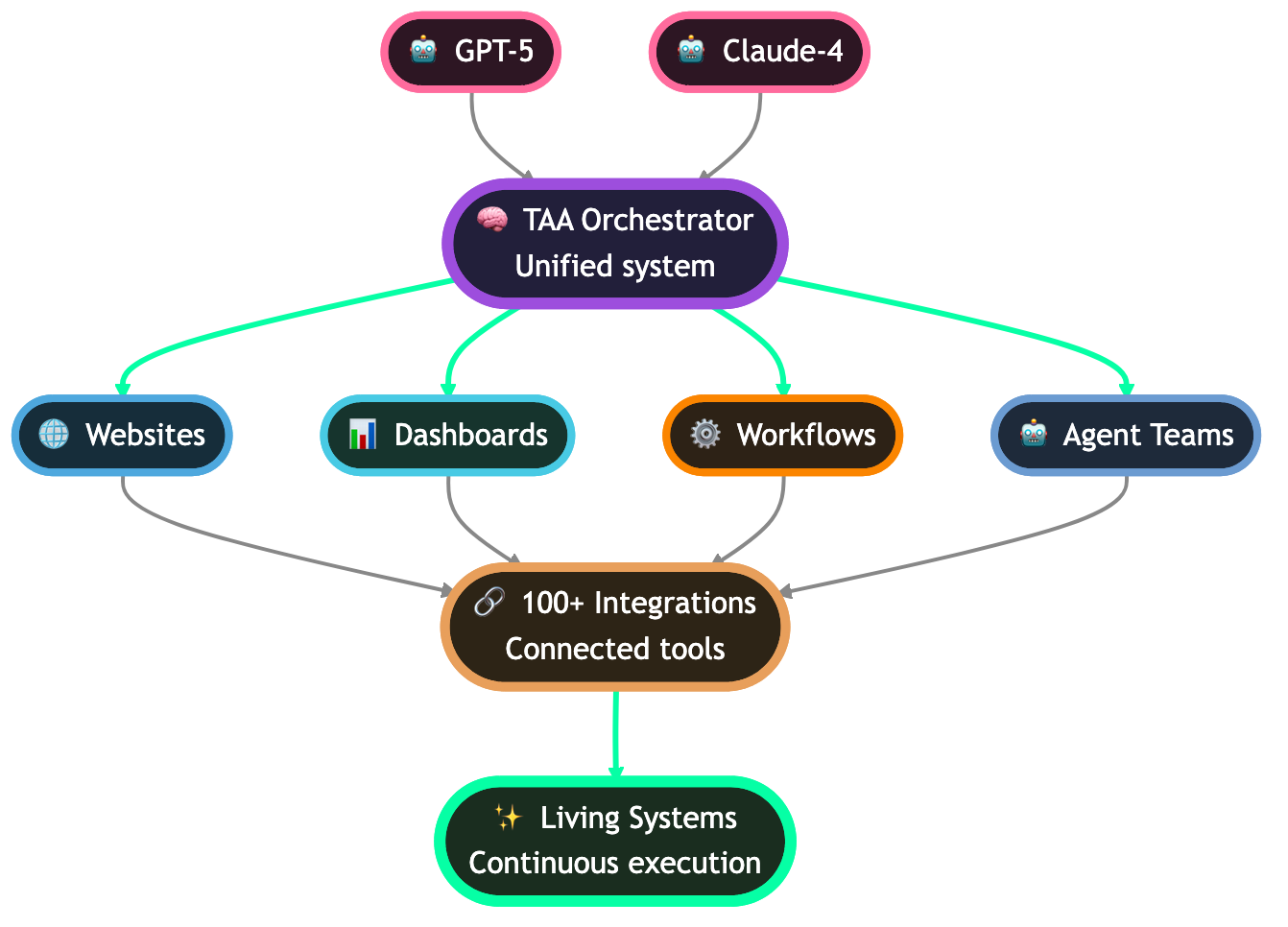

The Technical Revolution Behind Genesis

At the core of Genesis is a unified system that coordinates multiple LLMs (11+ frontier models from OpenAI, Anthropic, and Google), specialized tools, and workspace data.

The system allows you to create, edit, and manage every aspect of your work with:

- Persistent Context across sessions and projects

- Direct Tool Integration into your stack via standards like MCP

- Multi-Agent Collaboration like a true team with 22+ built-in tools

- Continuous Learning from workflows in use

Tool integration quality is what separates real execution platforms from chatbot wrappers. Jeremiah Lowin (creator of FastMCP) emphasizes that agent tools should represent outcomes, like resolve_support_ticket, not low-level operations like get_ticket + update_ticket + close_ticket. Fewer, smarter tools beat a sprawl of endpoints.
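
The difference between the two tool styles is easy to see in code. The sketch below is plain Python with an in-memory ticket store; it does not use FastMCP or any real ticketing API, and the `TICKETS` dictionary and function bodies are made up for illustration. Only the tool names come from the example above.

```python
from typing import Dict

# Hypothetical in-memory store standing in for a real ticketing system.
TICKETS: Dict[str, dict] = {"T-1": {"status": "open", "resolution": None}}


# Low-level operations: the agent would have to call all three, in the right order.
def get_ticket(ticket_id: str) -> dict:
    return TICKETS[ticket_id]


def update_ticket(ticket_id: str, resolution: str) -> None:
    TICKETS[ticket_id]["resolution"] = resolution


def close_ticket(ticket_id: str) -> None:
    TICKETS[ticket_id]["status"] = "closed"


# Outcome-level tool: one call that represents the result the agent wants.
def resolve_support_ticket(ticket_id: str, resolution: str) -> dict:
    ticket = get_ticket(ticket_id)
    if ticket["status"] == "closed":
        # Already resolved: return as-is so a repeated call is harmless.
        return ticket
    update_ticket(ticket_id, resolution)
    close_ticket(ticket_id)
    return get_ticket(ticket_id)


result = resolve_support_ticket("T-1", "Reset the customer's password")
print(result)
```

With the outcome-level tool, ordering and the already-closed case are handled once inside the tool instead of being re-derived by the model on every run.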

The Future of Work Is Agentic

Rosenblatt's perceptron (1957) is the ancestor of today's transformers.

Modern LLMs scale that idea to billions of learned parameters.

But intelligence alone is not enough. The missing layer has always been execution.

Block's 40% workforce reduction in 2026, with Jack Dorsey replacing support teams and SDRs with AI agents, shows where this is heading. As the 20VC podcast (2026) framed it, companies now face a binary choice: "either reaccelerate growth or cut costs dramatically." Agents are the mechanism for both.

Anthropic CEO Dario Amodei described the broader trajectory in his interview with Nikhil Kamath (2026) as "an expanding sphere of what is possible." AI is not replacing a fixed set of tasks, but continuously enlarging what autonomous systems can do. Every month, the boundary between "requires a human" and "an agent handles this" shifts further.

Genesis closes that gap. Where AI stops performing and starts collaborating. Where businesses stop prompting and start building.

This is what agentic engineering looks like in practice: building systems where AI agents own execution, not just conversation. The evidence is already here. At Anthropic, the Claude Code team revealed that its engineers run 10+ agent threads in parallel; instead of writing code, they direct agents and review the output. At OpenAI, Sherwin Wu shared that 95% of engineers use Codex daily and 100% of PRs are reviewed by AI agents. The shift from chatbot to execution agent is not theoretical. It is the operating model at the companies building the models.

Not another chatbot. Not another graveyard of abandoned projects.

The execution layer for the future of work.

Real Users, Real Results

Genesis is already powering execution across industries:

- Agencies running campaigns end-to-end with multi-agent workflows

- Consultants scaling with automation instead of headcount

- Startups fundraising and automating growth pipelines

- Enterprises deploying systems in weeks, not quarters

- Solopreneurs building client portals, booking systems, and dashboards in minutes

The common thread? They stopped chatting with AI and started building with it.

Browse real examples in the Taskade Community Gallery with 150,000+ apps built by users.

Your Move

Chatbots impress. But demos do not build companies. Demos do not scale. Demos do not ship.

Agents do.

That is why we built Genesis: the execution layer where humans and AI collaborate to get real work done. So stop playing with demos. Start building with agents.

The future is not conversations with AI. It is collaboration. It is systems that remember, connect, and execute your vision while you sleep.

Start building with Genesis ->

Frequently Asked Questions

What is the main difference between a chatbot and an AI agent?

A chatbot responds to prompts in a conversation window. An AI agent executes tasks autonomously within a workspace where it has access to memory (project data), 22+ built-in tools, and execution capabilities (100+ automations). Chatbots talk. Agents act.

When should I use a chatbot versus an AI agent?

Use a chatbot for simple Q&A and conversational interfaces where the output is text. Use an AI agent when you need autonomous execution: updating project status, processing form submissions, triggering workflows, or coordinating multi-step processes. If the task dies when the chat window closes, you need an agent.

Can AI agents perform tasks autonomously?

Yes. Agents can monitor triggers (form submissions, schedules, webhooks), process data, make decisions, and execute actions without human intervention. Taskade agents run in the background on Pro plans ($16/month) and above, continuing work after you close the tab.

Why do chatbots fail at real business workflows?

Conversations are stateless. Each session starts fresh with no memory, no access to live data, and no ability to trigger actions. Real workflows require persistent state, system integration, and autonomous execution.

What is the economic case for switching to agents?

Monday.com replaced 100 SDRs with agents (24-hour response time to 3 minutes). Block cut 40% of workforce with agent replacements. The savings come from eliminating human bottlenecks in repetitive execution, not from cheaper conversations.

How do agents integrate with existing tools?

Taskade agents connect to 100+ integrations across 10 categories: communication (Slack, Teams), email (Gmail, Outlook), CRM (HubSpot, Salesforce), development (GitHub), payments (Stripe), and more.

What does agentic engineering mean?

Building systems where AI agents own execution, not just conversation. The human role shifts from doing the work to directing and reviewing agent output. See our agentic engineering guide for details.

What is the EPICS Agent benchmark?

A benchmark measuring real professional task completion. Frontier models score 90%+ on standard tests but complete real tasks only 24% of the time. The gap is execution infrastructure, not intelligence.
