You would never fire a new employee on day one for turning in a bad draft. You would hand it back, explain what you wanted, point to an example, and ask them to try again. Two weeks later, they would be fine. Six months later, indispensable.

Most operators do the exact opposite with their AI agents. First output is weak → declare AI mid → close the tab → go back to doing the work manually. They just fired the new hire on day one.

This is the single biggest self-inflicted wound in 2026 AI adoption. And it is entirely fixable.

TL;DR: Train agents the way you train employees — written role, tool access, 16+ examples of your best work, and a reinforcement loop where you accept or reject outputs. Over roughly 100 iterations the agent locks onto your voice and judgment. The difference: 100 iterations takes 100 minutes with an agent and 18 months with a human. Taskade Agents v2 persists the training across sessions so it compounds. Here is the onboarding playbook.

This is the third companion post to the Win With AI in 2026 pillar. Read that one for the frame. Read this one for the discipline.

The One-Line Thesis

Humans learn by reinforcement. So do agents. Treat them the same.

That is the whole post in eleven words. The rest is implementation.

If 16 SOP examples train a Taskade Genesis agent's taste, the Karpathy loop trains its harness. Both compound. Operators who master agent reinforcement at the single-agent level today are one prerequisite away from running a full meta-agent loop tomorrow.

| Operator | Loop type | Experiments | ROI |
|---|---|---|---|
| You, this weekend | Single-agent reinforcement | 16 examples + ratings | Agent matches your voice in ~2 weeks |
| Karpathy (March 2026) | Meta-agent auto-research | 700 in 2 days | 11% speedup + bug fix Karpathy himself missed |
| Tobi Lütke (Shopify CEO) | Meta-agent on Shopify data | 37 in 8 hours | 19% performance gain |
| SkyPilot | Meta-agent + scaling discovery | 910 in 8 hours | Width-scaling insight, under $300 compute |

Source: Andrej Karpathy auto-research / Sequoia AI Ascent April 29 2026.

The takeaway: 100 iterations takes 100 minutes with an agent versus 18 months with a new hire. That ratio holds at every scale — single-agent training compounds your taste; meta-agent training compounds the harness around the agent. Inside Taskade Genesis, the same surface holds both: Agents v2 for taste training, scheduled Automations against a Project of metric snapshots for harness training.

How Do You Train an AI Agent Like an Employee? (The 5-Step Plan)

Every top-ranking HBR and RevOps piece on this topic converges on the same 5-step structure, and then none of them actually shows you how to execute it. Here is the full plan with the Taskade surface each step runs on.

| Step | Employee-onboarding analogue | What you actually do for an agent | Where it lives in Taskade |

|---|---|---|---|

| 1 | Role — write the job description | Write a Role + Task + Rules + Output Format prompt (the 4-part skeleton) | Agents v2 → Agent profile → System instructions |

| 2 | Access — grant systems + tools | Scope which of the 33 built-in tools + custom tools the agent can call | Agents v2 → Tool settings (whitelist / blacklist) |

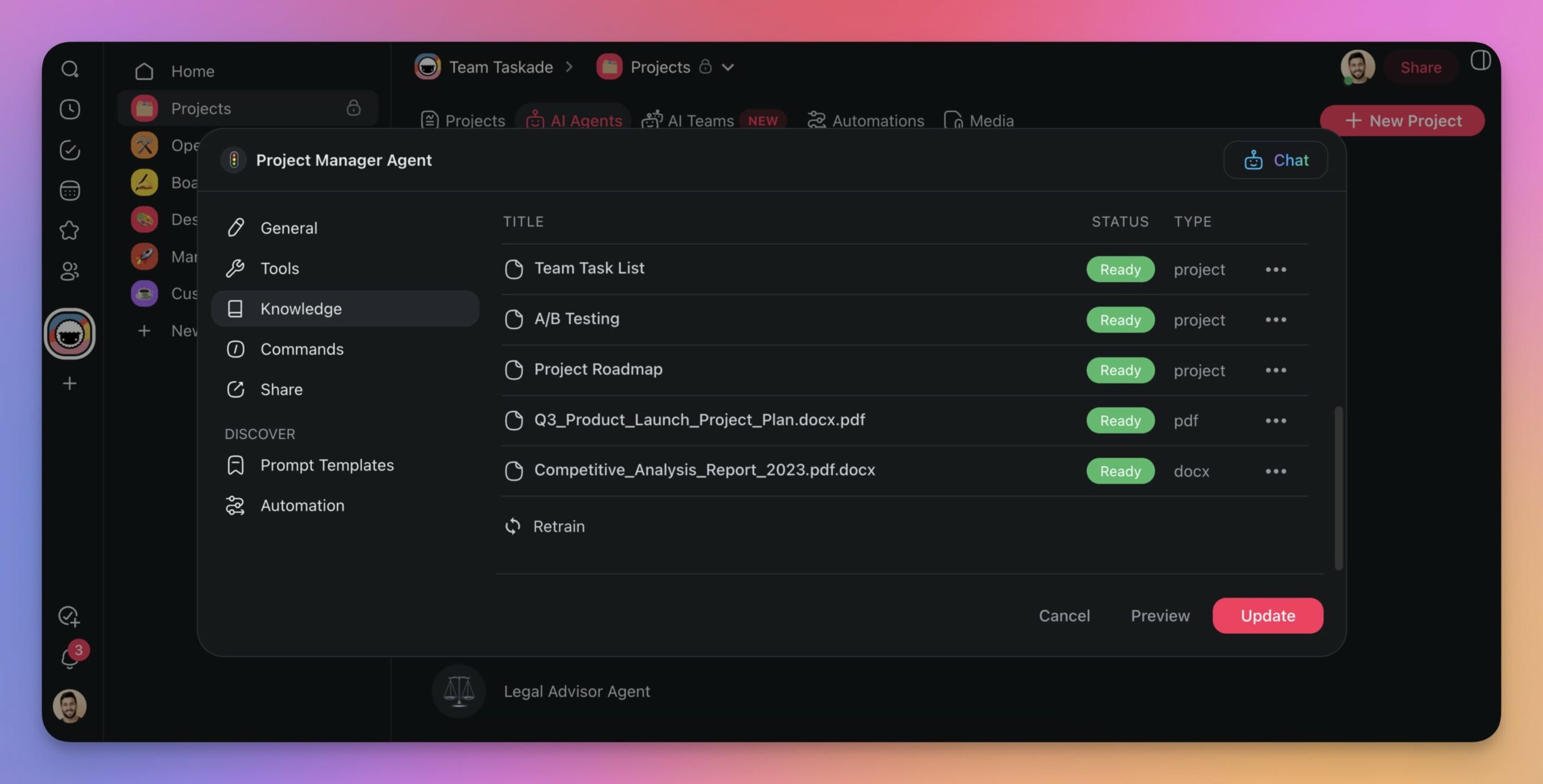

| 3 | Training — show 16+ examples | Add samples of past best work + SOPs to persistent memory | Agents v2 → Memory → Reference docs |

| 4 | Feedback — review + coach | Rate outputs Strong/Acceptable/Weak; update Rules + add new samples | Agents v2 → Memory + in-line rating |

| 5 | Success metrics — define good | Pick 2–3 measurable outcomes (accuracy %, response time, human-rework %) | Dashboard view on top of Automation run history |

This is the spine of the post. Every later section drills into one step.
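Step 5 is the easiest one to leave vague, so here is a minimal Python sketch of the three metrics it names. The record shape (`correct`, `seconds`, `reworked`) and the function name `success_metrics` are assumptions made up for illustration, not a Taskade export format; you would fill these records from whatever your run history actually gives you.

```python
from statistics import mean


def success_metrics(runs):
    """Summarize reviewed runs into the three Step 5 metrics.

    Each run is a dict with hypothetical keys:
    'correct' (bool), 'seconds' (float), 'reworked' (bool).
    """
    if not runs:
        raise ValueError("no runs to score")
    total = len(runs)
    return {
        # Share of outputs the reviewer marked correct.
        "accuracy_pct": 100 * sum(r["correct"] for r in runs) / total,
        # Average time the agent took to respond.
        "avg_response_s": mean(r["seconds"] for r in runs),
        # Share of outputs a human had to rework.
        "human_rework_pct": 100 * sum(r["reworked"] for r in runs) / total,
    }
```

Track the same three numbers every month. The trend matters more than any single reading.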

What Is the Agent Feedback Loop? (And How Does It Compare to RLHF?)

Every agent you train runs a miniature version of RLHF (Reinforcement Learning from Human Feedback) — the same technique that turned GPT-3 into ChatGPT. You do not need to implement RLHF from scratch to benefit from its shape. The same feedback loop works at the operator scale.

The most quoted single proof point: human raters preferred outputs from OpenAI's InstructGPT at 1.3B parameters over outputs from the 175B-parameter GPT-3. That is a model more than 100× smaller (175 / 1.3 ≈ 135×) winning because of RLHF, not scale. The feedback loop was worth more than a 135× gap in parameter count. The dataset that made it work was small by pretraining standards: on the order of 13,000 prompts with human-written demonstrations, plus a few tens of thousands more with human preference rankings.

You are not labeling 13,000 prompts. You are rating 10 outputs a month per agent. In your case the model weights never change, only the memory and the rules, but the loop has the same shape and it compounds the same way.

Operator Tools That Implement This Stack

If you are doing this outside a consolidated workspace, here is the fragmented dev-tier stack you would otherwise assemble. Taskade collapses this into one surface, but operators evaluating BYOA platforms should know the tools.

| Tool | Layer | Notes |
|---|---|---|
| LangChain / LangGraph | Agent orchestration + tool calling | General-purpose dev SDK |
| AutoGen | Multi-agent conversation patterns | Microsoft Research |
| CrewAI | Role-based multi-agent teams | Python-first |
| TRL / TRLX | RLHF pipelines (SFT, reward model, PPO) | HuggingFace ecosystem |
| LoRA / QLoRA | Low-rank fine-tuning for per-client voice | When you need on-weights customization |
| Taskade Agents v2 | All of the above + memory + tools + UI | One workspace; no glue code |

Why Operators Fire Agents Too Fast

The mental model most people bring to agents is "autocomplete on steroids." Under that model, a bad output means the tool is broken. Under that model, a second bad output confirms the tool is broken. Under that model, most operators close the tab by iteration three and never come back.

The correct mental model is "new employee on day one." Under that model, a bad output means you have not explained the job yet. That is not a tool failure. That is a training step.

┌──────────────────────────────────────────────────────────────────────┐
│  TWO MENTAL MODELS                                                   │
├──────────────────────────────────────────────────────────────────────┤
│                                                                      │
│  Wrong: "Tool that outputs things"                                   │
│    - Bad output → tool broken                                        │
│    - Iteration feels wasteful                                        │
│    - Give up by output 3                                             │
│                                                                      │
│  Right: "New employee being onboarded"                               │
│    - Bad output → job not explained yet                              │
│    - Iteration is training, not waste                                │
│    - Breakthrough typically by output 15-20                          │
│                                                                      │
└──────────────────────────────────────────────────────────────────────┘

This single mental-model swap is the difference between "AI is mid" and "AI ate my department." The mental model is free. The swap is instant. The compounding starts the same day.

What top operators actually do: Airtable CEO Howie Liu has stated publicly that he uses AI hourly and is one of his own platform's largest individual inference-cost users globally, running 30 parallel Claude Code instances, each coupled to a browser, with cross-PR review. He cites a signal from Dan Shipper: what predicts a company successfully adopting AI is whether the CEO uses ChatGPT or Claude daily. Operators who treat agents as new hires win for the same reason CEOs who use them daily ship product. See the full history of Airtable for the IC CEO playbook.

The Four-Part Prompt Skeleton (Every Reliable Agent Has It)

Bad prompts read like SMS messages. Good prompts read like employee job descriptions.

┌──────────────────────────────────────────────────────────────────────┐
│  1. ROLE                                                             │
│     ──────                                                           │
│     Who is the agent? Background, perspective, expertise.            │
│     "You are an elite B2B sales trainer and script doctor."          │
│                                                                      │
│  2. TASK                                                             │
│     ──────                                                           │
│     What does the agent do? One sentence, unambiguous.               │
│     "Turn the 10 transcripts below into one unified sales script."   │
│                                                                      │
│  3. RULES                                                            │
│     ──────                                                           │
│     Non-negotiables. Always do X. Never do Y. Number them.           │
│     1. Use only lines appearing in ≥2 winning calls                  │
│     2. Keep language at 3rd-6th grade reading level                  │
│     3. Core pitch under 5 minutes when spoken                        │
│     4. Never invent phrases not in the transcripts                   │
│                                                                      │
│  4. OUTPUT FORMAT                                                    │
│     ──────                                                           │
│     Exact structure. Headings, length, bullet rules, tone.           │
│     - Short structural overview in bullets                           │
│     - Full word-for-word script, organized by section headings       │
│     - Bullet list of top 10 most powerful phrases                    │
│                                                                      │
└──────────────────────────────────────────────────────────────────────┘

Every Taskade agent prompt worth shipping has all four. Missing any single piece is the #1 cause of unpredictable behavior. The most-skipped piece is Output Format, and that is exactly the one that creates the most "why is it not doing what I asked" frustration.
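One way to make the skeleton hard to skip is to treat it as a structure that refuses to render when a part is missing. The sketch below is a minimal Python illustration; the `AgentPrompt` class and its method names are hypothetical and are not part of any Taskade API. The rendered string is simply something you could paste into an agent's system instructions.

```python
from dataclasses import dataclass, field


@dataclass
class AgentPrompt:
    """Hypothetical container for the four parts; not a Taskade API."""
    role: str
    task: str
    rules: list[str] = field(default_factory=list)
    output_format: list[str] = field(default_factory=list)

    def missing_parts(self) -> list[str]:
        """Return the names of any of the four parts left empty."""
        parts = {
            "role": self.role.strip(),
            "task": self.task.strip(),
            "rules": self.rules,
            "output_format": self.output_format,
        }
        return [name for name, value in parts.items() if not value]

    def render(self) -> str:
        """Assemble the instructions, refusing incomplete prompts."""
        missing = self.missing_parts()
        if missing:
            raise ValueError(f"prompt is missing: {', '.join(missing)}")
        # Rules are numbered so feedback can point at "rule 3".
        rules = "\n".join(f"{i}. {r}" for i, r in enumerate(self.rules, 1))
        fmt = "\n".join(f"- {line}" for line in self.output_format)
        return (
            f"ROLE\n{self.role}\n\n"
            f"TASK\n{self.task}\n\n"
            f"RULES\n{rules}\n\n"
            f"OUTPUT FORMAT\n{fmt}"
        )
```

Calling `missing_parts()` before every prompt change is a cheap guard against the most-skipped piece, Output Format, silently going empty.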

The Onboarding Ladder

Think of a new agent's first 30 days the way a sharp manager thinks about a new human hire's first 30 days.

Week 1 — Read-Only

Give the agent access to the SOP Project and one data source. No write permissions. No publishing rights. Its only job this week is to read, index, and hold the context. You spend 20 minutes asking it questions about the SOPs and see how well it absorbed them.

Week 2 — Draft Mode

Turn on output. Every output goes to a human reviewer before it reaches any real surface. You review with the Strong / Acceptable / Weak rubric. Weak outputs trigger a Rules update. This week is where 70% of the long-term agent quality gets baked in.

Week 3 — Low-Risk Production

The agent can publish to internal-only surfaces. Drafts visible to the team, not customers. Slack posts, internal docs, agent-generated research memos. You watch for edge cases. You trust-but-verify.

Week 4 — Full Production

The agent can publish to customer-facing surfaces: support replies, outbound emails, marketing content, client-facing Genesis app outputs. You have earned this level of autonomy after three weeks of verified behavior — not before.

Month 2+ — Monthly Performance Review

Schedule it. 30 minutes per agent per month. Pull 10 recent outputs, rate each, pattern-match the weak ones, update Rules, refresh the samples in memory. This is the compounding loop that separates a one-quarter agent from a two-year asset.

The Reinforcement Loop (Diagrammed)

Every iteration makes the next iteration better — but only if you close the loop.

The loop runs generate, rate, adjust, and it has two feedback sinks: memory (positive reinforcement: store strong outputs as new examples) and rules (corrective feedback: tighten the guardrails after a weak output). Both feed back into the next generation.

This is identical to how a good manager trains a human. It is not coincidence — it is the same mechanism running at 100× speed.
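The routing logic of that loop is small enough to sketch in Python. Everything here is an assumption for illustration: `AgentState` is a stand-in for an agent's memory and rules, and `apply_feedback` is a hypothetical helper, not a Taskade function. The point is only the shape: strong outputs go to memory, weak outputs force a rule change, acceptable outputs ship and change nothing.

```python
from dataclasses import dataclass, field

RATINGS = ("strong", "acceptable", "weak")


@dataclass
class AgentState:
    """Hypothetical stand-in for an agent's memory and rules."""
    samples: list[str] = field(default_factory=list)
    rules: list[str] = field(default_factory=list)


def apply_feedback(state, output, rating, new_rule=None):
    """Route one rated output into the matching feedback sink."""
    if rating not in RATINGS:
        raise ValueError(f"unknown rating: {rating!r}")
    if rating == "strong":
        # Memory sink: the output becomes a new example.
        state.samples.append(output)
    elif rating == "weak":
        # Rules sink: a weak output must produce a rule change.
        if not new_rule:
            raise ValueError("a weak output needs a new or tightened rule")
        state.rules.append(new_rule)
    # "acceptable" outputs ship but leave memory and rules untouched.
    return state
```

Requiring a rule on every weak rating is a deliberate design choice in this sketch: it makes it impossible to mark an output weak and move on without adjusting anything.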

The 16-Sample Rule

There is a specific threshold where agent voice locks in. In the field, the number is 16 samples.

| Sample count | Agent behavior |
|---|---|
| 0 | Sounds like the internet |
| 1–5 | Sounds like the internet with your vocabulary sprinkled in |
| 6–10 | Approximates your voice; frequent regressions to internet default |
| 16 | Locks onto your voice. The threshold where training holds. |
| 20–30 | Diminishing returns; the cost is worth it for customer-facing |
| 50+ | Overkill for most operators; only worth it for enterprise agents |

Why 16 specifically? Probably not magic — more like the smallest sample size where variance in topic + tone + length stops averaging out to generic. Whatever the exact mechanism, operators who test this land in the 12–20 range. 16 is a safe default.

Critical point: the samples have to be your best past work, not your average past work. Agents overfit to the median of whatever you show them. Show mediocre output, get mediocre output. Curate aggressively.
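"Curate aggressively" can be made mechanical. The Python sketch below assumes you have scored your own past work on a 1 to 5 scale; the scoring scale, the `min_score` cutoff, and the function name `curate_samples` are all hypothetical choices for illustration. It keeps only top-rated pieces and reports whether you have reached the 16-sample target.

```python
def curate_samples(candidates, target=16, min_score=4):
    """Pick the best past work to show the agent.

    candidates: list of (text, score) pairs, where score is your own
    1-5 rating of the piece (an assumed scale, not a Taskade field).
    Returns (chosen_texts, ready), where ready means the target was met.
    """
    # Drop average work first: the agent imitates whatever it sees.
    best = [pair for pair in candidates if pair[1] >= min_score]
    # Highest-scored first; ties keep their original order.
    ranked = sorted(best, key=lambda pair: pair[1], reverse=True)
    chosen = [text for text, _ in ranked[:target]]
    return chosen, len(chosen) >= target
```

If `ready` comes back false, the honest move is to write or find more strong work, not to lower `min_score` until the count reaches 16.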

Agent Performance Review Template

Print this. Run it monthly.

┌──────────────────────────────────────────────────────────────────────┐
│  AGENT MONTHLY REVIEW: [Agent Name]                                  │
│  Date: _______    Reviewed by: _______                               │
├──────────────────────────────────────────────────────────────────────┤
│                                                                      │
│  10 recent outputs rated:                                            │
│    Strong:     ___                                                   │
│    Acceptable: ___                                                   │
│    Weak:       ___                                                   │
│                                                                      │
│  Pattern in Weak outputs (one sentence):                             │
│  ________________________________________________                    │
│                                                                      │
│  Rules change shipped this month:                                    │
│  ________________________________________________                    │
│                                                                      │
│  New samples added to memory this month (count + source):            │
│  ________________________________________________                    │
│                                                                      │
│  Tool set changes:                                                   │
│  ________________________________________________                    │
│                                                                      │
│  Status: [ ] Promote surface level   [ ] Hold   [ ] Retrain          │
│                                                                      │
└──────────────────────────────────────────────────────────────────────┘

Thirty minutes. Once a month. Per agent. That is the ongoing cost of a trained agent — and the reason BYOA stacks compound.
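The tally and the status checkbox can be scripted so the review stays consistent month to month. This Python sketch is a rough illustration under stated assumptions: it treats a Weak rating as "needs heavy rework" and borrows the 30% retrain signal from this post, while the 70% Strong bar for promotion is an arbitrary placeholder you should set yourself. The function name `monthly_review` is hypothetical.

```python
from collections import Counter

VALID = {"strong", "acceptable", "weak"}


def monthly_review(ratings, retrain_at=0.30, promote_at=0.70):
    """Tally a month's ratings and suggest a status for the template.

    retrain_at: share of weak outputs that triggers a retrain.
    promote_at: share of strong outputs needed to promote (assumed bar).
    """
    if not ratings:
        raise ValueError("rate at least one output")
    counts = Counter(ratings)
    unknown = set(counts) - VALID
    if unknown:
        raise ValueError(f"unknown ratings: {sorted(unknown)}")
    total = len(ratings)
    if counts["weak"] / total >= retrain_at:
        status = "retrain"
    elif counts["weak"] == 0 and counts["strong"] / total >= promote_at:
        status = "promote"
    else:
        status = "hold"
    return {
        "strong": counts["strong"],
        "acceptable": counts["acceptable"],
        "weak": counts["weak"],
        "status": status,
    }
```

The suggested status is a starting point for the reviewer, not a verdict; the one-sentence pattern in the Weak outputs still has to come from a human read.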

The Full Taskade Agents v2 Training Surface

| Capability | What it enables |
|---|---|
| Persistent memory | Samples, rules, and past outputs survive every session; no re-explanation tax |
| 33 built-in tools | Web search, file upload, project management, data pull, send email, and more |
| Custom tool builder | Wrap any REST endpoint as a tool your agent can call |
| Slash commands | Operators and clients trigger agent actions with a single keystroke |
| @-mention | Agents summon other agents in a thread (multi-agent teams) |
| Public embedding | Deploy a trained agent to any website via script tag |
| Auto-routing across models | Frontier models from OpenAI, Anthropic, and Google; no lock-in |
| Workspace-scoped memory | Agent training is per-workspace, so client data never leaks across engagements |
| 7-tier RBAC on every tool | Access escalation matches your 4-week onboarding ladder precisely |

Every row maps onto a step in the 5-step onboarding plan above. The onboarding plan exists because the capability set is already there.

The Tool Access Question

Give every agent a tool set scoped to its role. The same way a new marketing employee does not get access to the billing database on day one, a new Content Chloe agent does not get write access to your published blog on day one.

Taskade Agents v2 ships with 33 built-in tools plus a custom tool builder. A sensible progression for a content agent:

| Stage | Tools granted |
|---|---|
| Onboarding | Read memory, read SOP Project, read one data source |
| Draft | Above + web search, draft-to-Project |
| Low-risk prod | Above + publish to internal-only Projects |
| Full prod | Above + publish to customer-facing Genesis app |
| Advanced | Above + write to CRM, call external APIs via custom tool |

The escalation is intentional. You earn trust levels. Same as humans. Multi-agent collaboration adds another dimension — some advanced agents get to delegate to junior agents, creating a managerial tier — but most operators start with one-agent-one-role before going multi-agent.
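The "Above +" pattern in that progression is a cumulative allowlist, which is easy to express in code. The Python sketch below is illustrative only: the stage keys and tool identifiers are made-up names that mirror the table, not real Taskade tool IDs, and `can_call` is a hypothetical helper.

```python
# Stages in escalation order; each one adds tools to everything before it.
STAGES = ["onboarding", "draft", "low_risk_prod", "full_prod", "advanced"]

# Hypothetical tool names mirroring the table, not real Taskade tool IDs.
NEW_TOOLS = {
    "onboarding": {"read_memory", "read_sop_project", "read_data_source"},
    "draft": {"web_search", "draft_to_project"},
    "low_risk_prod": {"publish_internal"},
    "full_prod": {"publish_customer_facing"},
    "advanced": {"write_crm", "call_external_api"},
}


def allowed_tools(stage):
    """Return every tool granted at this stage and all earlier stages."""
    if stage not in STAGES:
        raise ValueError(f"unknown stage: {stage!r}")
    allowed = set()
    for name in STAGES[: STAGES.index(stage) + 1]:
        allowed |= NEW_TOOLS[name]
    return allowed


def can_call(stage, tool):
    """True if an agent at this stage may call the tool."""
    return tool in allowed_tools(stage)
```

Keeping the grants in one ordered structure means promoting an agent is a one-word change, and demoting it after a bad month is just as cheap.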

When to Retire an Agent vs Retrain

| Signal | Response |
|---|---|
| Output quality drops 20%+ over 3 months | Retrain (new samples + rules) |
| SOP it was built on has changed | Retrain (update sample set) |
| 30%+ of outputs need heavy human rework | Retrain (tighten rules) |
| Base model was upgraded | Retrain (refresh all memory) |
| Agent's job no longer exists | Retire |
| Agent was built on a deprecated tool | Retire and rebuild |

Retirement is rare. Retraining quarterly is normal. Think of it the way HR thinks of annual reviews — a scheduled, expected, compounding ritual. Skipping it is where drift sets in.
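The signal table reads naturally as a decision function. Here is a minimal Python sketch of it; the parameter names and the function `next_action` are hypothetical, and the "keep" result for an agent with no signals firing is an assumption added for completeness. Retire signals are checked first because retraining an agent whose job is gone is wasted effort.

```python
def next_action(
    quality_drop_pct=0.0,
    sop_changed=False,
    rework_pct=0.0,
    base_model_upgraded=False,
    job_exists=True,
    tool_deprecated=False,
):
    """Map the review signals from the table to a response string."""
    # Retire signals win over any retrain signal.
    if not job_exists:
        return "retire"
    if tool_deprecated:
        return "retire and rebuild"

    # Collect every retrain step that applies, in table order.
    steps = []
    if quality_drop_pct >= 20:
        steps.append("new samples + rules")
    if sop_changed:
        steps.append("update sample set")
    if rework_pct >= 30:
        steps.append("tighten rules")
    if base_model_upgraded:
        steps.append("refresh all memory")

    if steps:
        return "retrain: " + ", ".join(steps)
    # Assumed default when no signal fires; not in the table.
    return "keep"
```

Several retrain signals often fire together, which is why the sketch returns all matching steps rather than stopping at the first.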

The Hormozi Line

"You are outsourcing the typing, not the thinking."

The operators who get this separate cleanly from the ones who do not. AI does not replace your judgment. It replaces the slow mechanical step between your judgment and the finished output.

If an agent ships bad work, it is not because "AI is mid." It is because the judgment step (the prompt, the rules, the samples, the feedback loop) was underspecified. Every weak output is information about which judgment step to sharpen next. Operators who internalize this ship agents that improve every month. Operators who do not internalize it shut the loop down on day one.

Companion Reads

- Win With AI in 2026: The Workflow-First Playbook — the pillar this post sits under

- BYOA: The $1M-Per-Employee Era — the compensation model that makes trained agents economic

- From Roles to Workflows: The AI Org Chart — where trained agents fit on the new chart

- Multi-Agent Collaboration in Production — lessons from 500,000+ deployments

- AI Agent Tools: 26 Is the Right Number — scoping the agent tool set

- The Workspace DNA Architecture — how memory, intelligence, and execution compound together

- The 2026 Productivity Playbook — the hub for workflow-first operators, agents, automations, and Taskade Genesis

- Genesis Compilation: Prompt to Deployed App — where the trained agents ship

- Agents v2 · Automations · Community Gallery — the surfaces

Glossary Deep Dives

The training pipeline, explained from first principles:

- AI Alignment — why training an agent is a different problem than writing a prompt

- RLHF, DPO, Constitutional AI — the training methods that shaped every frontier model you are fine-tuning on top of

- Evals — the discipline that tells you whether iteration 20 actually beat iteration 19

- Chain-of-Thought, ReAct Pattern, Planning and Reasoning — what the agent is doing between your prompt and its output

- Tool Use, Function Calling, Structured Outputs — the surfaces you are shaping when you give the agent a new capability

- Agent Memory, Embeddings, Vector Database — how your training examples compound instead of evaporate

- Ask Questions Tool, Human-in-the-Loop — where the agent hands back to you during training

- Model Context Protocol, Taskade MCP Client — how to extend a trained agent's reach without retraining from scratch

The Only Mistake That Actually Matters

The only unforgivable mistake in 2026 agent training is shutting the loop down on day one. Every other mistake (wrong samples, weak prompt, fuzzy rules) is recoverable by iteration 10. But if you shut the loop on output one, iteration 10 never happens. The agent stays permanently stuck at day-one quality, which is also the day you labeled AI "not ready."

Open the loop. Keep it open for 100 iterations. Give the agent the same runway you would give a new hire. The breakthrough is usually around iteration 20. The compounding asset is built by iteration 100. The rest is just maintenance.

Train your first agent in Taskade →

▲ ■ ● Onboard. Review. Compound.

Frequently Asked Questions

How do you train an AI agent to match your voice and judgment?

Train agents the way you would train a new employee. Give them a written role, access to the tools they need, a SOP Project with 16+ examples of your best past work, and a feedback loop where you accept or reject outputs. Over roughly 100 iterations the agent locks onto your taste. Taskade Agents v2 persists this training across sessions with custom memory and tools, so training compounds instead of evaporating when a chat closes.

Why do most people fail at training AI agents?

Most operators treat agents like vending machines — one bad output, they declare the agent broken and go back to doing the work manually. But agents learn through reinforcement exactly the way humans do. A new employee's first draft is usually wrong too. The difference is 100 iterations takes 100 minutes with an agent and 18 months with a new hire. The operators who win are the ones who keep iterating past iteration one.

How many writing samples does an agent need to match your voice?

Roughly 16 high-quality samples is the threshold where voice consistently locks in. Fewer than 10 and the agent still defaults to generic "internet voice." More than 30 has diminishing returns. The samples must be your actual best work, not average work — agents overfit to the median of whatever you show them. Curate aggressively. Taskade stores the samples in agent persistent memory, not in the prompt, so they are available every session without eating context.

What is the 4-part prompt skeleton for agents?

Role, Task, Rules, Output Format. Role — who the agent is (background, perspective, expertise). Task — what the operator wants done. Rules — what the agent must never do and must always do. Output Format — exact structure of the response (headings, length, bullet rules, tone). Every reliable agent prompt has all four. Missing any one — especially Output Format — is the top cause of flaky, unpredictable agent behavior.

How do you give an agent a performance review?

Schedule it. Monthly or quarterly, pull a sample of 10 recent outputs and rate each as Strong / Acceptable / Weak. Identify the pattern in the Weak set (usually one rule missing, one new edge case, one drift from voice). Update the agent's Rules section and add 2–3 new samples to its memory. Ship. Agent performance reviews take 30 minutes and are the highest-leverage work an operator does each month.

Should I use prompts or persistent memory for agent training?

Both, for different purposes. Prompts handle per-session context — what this specific output needs. Persistent memory handles the stuff that should be true every session — voice, style rules, past examples, brand facts, tool usage patterns. Taskade Agents v2 splits these cleanly. Dumping everything into the prompt every session is the most common mistake — it wastes context, slows output, and forces operators to re-explain the agent's job every time.

What tools should a newly-onboarded agent have access to?

Scope tool access the same way you would scope permissions for a new employee. Week 1 — read-only access to the SOP library and one data source. Week 2 — ability to draft outputs the operator reviews. Week 3 — ability to publish to low-risk surfaces (internal docs, drafts). Week 4 — production surfaces once performance is consistent. Taskade's 33 built-in tools plus custom tool builder handle this scoping natively.

How do you know when to retire or retrain an agent?

Three signals. First, output quality drops relative to three months ago (the base model lifted but your agent's training did not). Second, the SOP it was built on has changed. Third, 30%+ of its outputs now need heavy human rework. If any of the three triggers, run a 2-hour retune: refresh the 16 samples to include recent best work, update the Rules, re-audit the tool set. Retirement — full rebuild — is rare. Retraining every quarter is normal.

Can agents train other agents?

Yes, and this is one of the least-discussed advanced patterns. A senior operator agent reviews the outputs of a junior execution agent, flags issues, and writes updated rules. The senior agent acts as a manager. Taskade supports multi-agent collaboration natively, so this pattern runs inside one workspace. For most operators, human-in-the-loop still beats agent-on-agent review for the judgment layer — but the layer below (stylistic edits, rule adherence) delegates cleanly.

Where does agent training live in Taskade Genesis?

In three places. The agent's persistent memory holds voice samples, past outputs, and long-term facts. The agent's custom tools define what it can do. The Project the agent reads from holds the SOPs it follows. All three live in one workspace, under one URL, so the training compounds session to session. This is the difference between "clever prompt" and "trained agent" — the first vanishes when you close the tab. The second is an asset.

What is the difference between training one agent and running a Karpathy auto-research loop?

Single-agent training shapes the agent's taste — what good output looks like for your business — using 16+ examples in persistent memory. The Karpathy loop optimizes the harness around the agent — what tools, what context window, what model, what retry policy — by letting a meta-agent run experiments overnight against an objective metric. Karpathy reported an 11% speedup from 700 experiments on his own training pipeline. Shopify CEO Tobi Lütke ran 37 experiments in 8 hours and reported 19%. Both layers compound, and both run inside one Taskade workspace: Agents v2 with persistent memory holds the taste layer; scheduled Automations against a Project of metric snapshots holds the harness layer. Together they produce an agent that gets better at your work overnight, not just during your work hours.