Manus AI burst onto the scene in March 2025 with a viral demo that showed an AI agent booking flights, writing code, and filling out spreadsheets, all inside a virtual computer and without any human hand-holding. The hype was immediate. The waitlist hit six figures within a week. And the question on every team lead's mind was simple: is this the agent that finally replaces half my SaaS stack?

Twelve months later, the answer is more nuanced than the hype suggests. In this review, we break down what Manus AI actually does, where it falls short, and which alternatives deliver more value for teams shipping in 2026.

TL;DR: Manus AI is a solo-use, invite-only general-purpose agent that runs inside a virtual computer. It is impressive for individual research and web tasks, but it has no team collaboration, no persistent workspace, no integrations, and no openly available free tier. For teams that need multi-agent orchestration, shared memory, and production-grade automation, Taskade Genesis delivers more, with a free tier, 100+ integrations, and 500,000+ agents already deployed.

What Is Manus AI?

Manus AI is a general-purpose AI agent built by Butterfly Effect, the startup behind the Monica.im assistant, a China-based company founded by former Alibaba and ByteDance engineers. The platform's core idea is simple but ambitious: give an AI agent a full virtual computer (browser, terminal, file system) and let it complete multi-step tasks autonomously.

Unlike chatbots that wait for your next prompt, Manus operates in a continuous loop. You describe a goal, and the agent plans, executes, and iterates until the task is done or it runs out of credits.
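The loop described above can be sketched in a few lines. This is a toy illustration, not Manus's implementation: `AgentRun`, `plan`, `execute`, and `run_agent` are hypothetical names, the planner and executor are stand-ins for LLM calls, and the one-credit-per-attempt rule is an assumption made only to show how retries eat into a budget.

```python
from dataclasses import dataclass, field


@dataclass
class AgentRun:
    """Toy state for one autonomous run; every name here is hypothetical."""
    goal: str
    credits: int
    log: list = field(default_factory=list)


def plan(goal: str) -> list:
    # Stand-in planner: a real agent would ask an LLM to decompose the goal.
    return [f"{goal}: step {i}" for i in range(1, 4)]


def execute(step: str, attempt: int) -> bool:
    # Stand-in executor: pretend step 2 fails once before succeeding.
    return not (step.endswith("step 2") and attempt == 0)


def run_agent(run: AgentRun) -> str:
    pending = plan(run.goal)
    attempts = {}
    while pending:
        if run.credits == 0:
            return "out_of_credits"
        step = pending[0]
        attempt = attempts.get(step, 0)
        run.credits -= 1  # assumption: every attempt costs a credit, retries included
        if execute(step, attempt):
            run.log.append(step)
            pending.pop(0)
        else:
            attempts[step] = attempt + 1  # iterate: retry the same step
    return "done"


run = AgentRun(goal="compare flights", credits=10)
print(run_agent(run), run.credits, len(run.log))  # done 6 3
```

The same run started with only two credits stops with `out_of_credits` after completing a single step, which is the "until the task is done or it runs out of credits" behavior in miniature.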

The Butterfly Effect: March 2025

The Manus story begins with a single demo video posted to social media on March 6, 2025. In the clip, the agent:

- Searched the web for apartment listings matching specific criteria

- Opened a spreadsheet and populated it with structured data

- Navigated airline booking sites and compared prices

- Generated a travel itinerary document with embedded links

The demo racked up millions of views in 48 hours. Waitlist registrations exceeded 100,000 within a week. Chinese tech media dubbed it "the ChatGPT killer," and Western outlets followed with breathless coverage comparing it to Devin, the autonomous coding agent that had gone viral a year earlier.

The reality, as early testers quickly discovered, was more complicated.

Virtual Computer Architecture

Manus runs each task inside a sandboxed virtual machine. Here is what that stack looks like:

+----------------------------------------------------------+
|                    MANUS AGENT LAYER                     |
|  +----------------------------------------------------+  |
|  |  Planning Module            Execution Module       |  |
|  |  - Goal decomposition       - Step-by-step actions |  |
|  |  - Task graph               - Error recovery       |  |
|  |  - Priority queue           - Progress tracking    |  |
|  +----------------------------------------------------+  |
|                                                          |
|  +----------------------------------------------------+  |
|  |    VIRTUAL COMPUTER (Sandboxed VM per session)     |  |
|  |  +----------+  +----------+  +------------------+  |  |
|  |  | Browser  |  | Terminal |  | File System      |  |  |
|  |  | (Chrome) |  | (bash)   |  | (isolated /tmp)  |  |  |
|  |  +----------+  +----------+  +------------------+  |  |
|  +----------------------------------------------------+  |
|                                                          |
|  +----------------------------------------------------+  |
|  |                    LLM BACKBONE                    |  |
|  |  - Claude / GPT-4 class models (provider varies)   |  |
|  |  - Context window: ~128K tokens                    |  |
|  |  - Vision: screenshots parsed for navigation       |  |
|  +----------------------------------------------------+  |
+----------------------------------------------------------+

Each session gets a fresh VM. When the session ends, the VM is destroyed. There is no persistent file system, no shared storage, and no way to carry context from one task to the next — a critical limitation we will revisit in the weaknesses section.
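The session lifecycle can be mimicked locally with a throwaway directory. This is only an analogy under stated assumptions: a temporary directory stands in for the sandboxed VM, and `run_session` is a hypothetical helper, not part of any Manus interface.

```python
import tempfile
from pathlib import Path


def run_session(task_name: str) -> Path:
    """Run one task in a throwaway directory and return where it lived."""
    with tempfile.TemporaryDirectory(prefix="session-") as workdir:
        scratch = Path(workdir)
        (scratch / "notes.txt").write_text(f"results for {task_name}\n")
        assert (scratch / "notes.txt").exists()  # visible while the session runs
        return scratch  # the directory is deleted as the with-block exits


first = run_session("research")
second = run_session("follow-up")
print(first.exists(), second.exists())  # False False
```

Anything the first session wrote is gone before the second one starts, so the follow-up task has nothing to read unless the user re-supplies it.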

Manus AI vs ChatGPT

The comparison people make most often is Manus vs ChatGPT. It is an understandable but misleading comparison.

| Dimension | ChatGPT | Manus AI |
|---|---|---|
| Interaction model | Conversational chat | Autonomous agent loop |
| Environment | Chat window only | Virtual computer (browser + terminal + files) |
| Task completion | User guides each step | Agent plans and executes end-to-end |
| Web access | Browse with plugins (limited) | Full browser with real navigation |
| Code execution | Code Interpreter sandbox | Full terminal + file system |
| Persistence | Chat history saved | Session destroyed after task |
| Team features | ChatGPT Team workspace | None |
| Pricing | $20-25/month (public) | Invite-only, credits-based (~$39/month) |

ChatGPT is a conversation partner. Manus is a task executor. The difference matters: ChatGPT waits for you to tell it what to do next; Manus tries to figure out the next step on its own. Whether that autonomous loop actually works reliably is the central question of this review.

The Invite-Only Hype Machine

As of early 2026, Manus remains invite-only. The waitlist has reportedly exceeded 500,000 users. The company has not announced a public launch date.

This scarcity model has created a secondary market for invite codes and a culture of demo-sharing that amplifies perceived capability beyond actual reliability. Early testers with access tend to share their most impressive results, while failures — broken task loops, hallucinated browser clicks, incomplete deliverables — get less visibility.

For teams evaluating Manus for production use, the invite gate is itself a red flag. You cannot trial the tool, you cannot benchmark it against your actual workflows, and you cannot add teammates without additional invitations.

Manus AI Features: What Can It Actually Do?

Autonomous Web Research

Manus's strongest use case is open-ended web research. You give it a research question, and the agent:

- Opens a browser session

- Searches Google, Bing, or specified sites

- Reads and extracts content from multiple pages

- Synthesizes findings into a structured document

- Saves the output as a downloadable file

For example, "Research the top 10 AI agent platforms launched in 2025, compare their pricing, and create a spreadsheet" is a task Manus can handle with moderate success. The output quality depends heavily on the websites it encounters and whether they block automated browsing.

Code Writing and Execution

Manus can write Python, JavaScript, and shell scripts, then execute them in its terminal environment. This is useful for:

- Data processing (CSV/JSON transformations)

- Web scraping (BeautifulSoup, Playwright)

- Simple API integrations (REST calls, webhook pings)

- Chart generation (matplotlib, plotly)

The limitation is that nothing persists. Every script runs in a disposable VM. There is no package manager state, no installed libraries carried between sessions, and no version control.

File Management and Document Creation

The agent can create, edit, and organize files within its sandboxed file system. Supported outputs include:

- Markdown documents

- CSV and Excel spreadsheets

- PDF reports (via code generation)

- HTML pages

Browser Automation

Manus uses a real Chromium browser instance and can:

- Fill out web forms

- Click buttons and navigate multi-page workflows

- Take screenshots for visual verification

- Handle basic login flows (though sharing credentials is a security risk)

Task Planning and Decomposition

When given a complex goal, Manus generates a task plan — a sequence of steps with dependencies. Users can see this plan before execution begins. The agent updates progress as it moves through each step, though the progress tracking is not always accurate.
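A plan with dependencies is, in effect, a directed acyclic graph, and a valid execution order is a topological sort of it. The sketch below uses Python's standard `graphlib`; the step names are hypothetical, loosely modeled on the demo tasks described earlier, and nothing here reflects how Manus stores its plans internally.

```python
from graphlib import TopologicalSorter

# Hypothetical plan: each step maps to the set of steps it depends on.
plan = {
    "search_listings": set(),
    "extract_prices": {"search_listings"},
    "compare_airlines": set(),
    "build_spreadsheet": {"extract_prices", "compare_airlines"},
    "write_itinerary": {"build_spreadsheet"},
}

# static_order() yields every step after all of its dependencies.
order = list(TopologicalSorter(plan).static_order())

for position, step in enumerate(order, start=1):
    print(f"[{position}/{len(order)}] {step}")
```

Independent steps such as `search_listings` and `compare_airlines` may come out in either order, which is one reason a progress indicator based on position in the list is only a rough guide.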

Manus AI Pricing

| Plan | Cost | Credits | Access | Support |
|---|---|---|---|---|
| Waitlist (Free) | $0 | Limited daily credits (rumored 5-10 tasks/day) | Invite-only | Community only |
| Pro | ~$39/month | Increased daily credits | Invite-only | |
| Enterprise | Undisclosed | Custom | Direct sales | Dedicated |
| Taskade Free | $0 | 3,000 one-time credits + full workspace | Public signup | Community + docs |
| Taskade Starter | $6/month | Included credits + workspace | Public signup | Priority |
| Taskade Pro | $16/month (10 users) | Included credits + workspace | Public signup | Priority |

Manus does not publish a stable pricing page. The numbers above are based on early tester reports from Q1 2026 and may have changed. The lack of transparent pricing makes it difficult to forecast costs or get budget approval.

Manus AI Strengths

1. Genuinely Autonomous Execution

Manus does not need hand-holding. For well-defined tasks — "find the cheapest flight from SFO to JFK on March 15" — the agent can plan, execute, and deliver without further input. This is a real step beyond chatbot-style AI.

2. Virtual Computer Gives Real Tool Access

The sandboxed VM approach means Manus can use real web browsers, real terminals, and real file systems. This is more capable than plugin-based approaches that simulate tool access.

3. Strong Research and Data Gathering

For open-ended research tasks that require visiting multiple websites, extracting data, and synthesizing findings, Manus performs above average compared to other agent platforms.

4. Visual Verification via Screenshots

Manus takes screenshots during browser navigation and uses vision models to verify that actions completed correctly. This reduces (but does not eliminate) the problem of agents clicking the wrong button.

5. Transparent Task Planning

The ability to see the agent's plan before execution begins gives users a chance to intervene before the agent goes down a wrong path. Not all agent platforms expose their planning step.

Manus AI Weaknesses

1. No Persistent Memory

Every Manus session starts from zero. The agent has no memory of previous tasks, no knowledge of your preferences, no accumulated context about your business. Each task is isolated. For teams that need agents with persistent memory and shared context, this is a dealbreaker.
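To make the contrast concrete, here is a minimal sketch of what "persistent memory" means at its simplest: state written to durable storage that a later session reloads. `WorkspaceMemory` is a hypothetical class built on a JSON file for illustration; it is not how Taskade or any other platform named here implements memory.

```python
import json
import tempfile
from pathlib import Path


class WorkspaceMemory:
    """Tiny key-value memory persisted to a JSON file (illustrative only)."""

    def __init__(self, path: Path):
        self.path = path
        self.data = json.loads(path.read_text()) if path.exists() else {}

    def remember(self, key: str, value: str) -> None:
        self.data[key] = value
        self.path.write_text(json.dumps(self.data))

    def recall(self, key: str, default=None):
        return self.data.get(key, default)


store = Path(tempfile.mkdtemp()) / "memory.json"

session_one = WorkspaceMemory(store)
session_one.remember("preferred_airline", "any nonstop")

session_two = WorkspaceMemory(store)  # a later session reloads the same file
ephemeral = {}                        # a session with no store starts empty

print(session_two.recall("preferred_airline"))  # any nonstop
print(ephemeral.get("preferred_airline"))       # None
```

The second session answers from stored context, while the ephemeral one has to be told the preference again on every task.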

2. No Team Collaboration

Manus is designed for individual use. There is no shared workspace, no role-based access control, no way for multiple team members to see, edit, or build on each other's agent outputs. In contrast, platforms like Taskade offer 7-tier RBAC (Owner, Maintainer, Editor, Commenter, Collaborator, Participant, Viewer) and real-time collaboration across all agent interactions.

3. Invite-Only Access

You cannot sign up. You cannot trial it. You cannot add your team. The invite-only model that built hype is now a barrier to adoption. Teams cannot evaluate Manus against their actual workflows.

4. No Integrations

Manus has no native integrations with tools like Slack, Gmail, Google Sheets, Salesforce, GitHub, or any of the platforms teams use daily. Every interaction with external tools must go through the browser, which is fragile and breaks when sites update their UI.

5. Credit-Based Pricing Is Unpredictable

Credits are consumed per task, but task complexity varies wildly. A simple web search might use 1 credit; a multi-step research project might consume 10. Without clear credit-to-cost mapping, budgeting is guesswork.
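A quick calculation shows why this matters for budgeting. The plan price and the 1-to-10 credit range come from this review; the monthly allotment of 300 credits is purely an assumption for illustration, since Manus does not publish one.

```python
PLAN_PRICE_USD = 39.0   # approximate Pro price quoted in this review
MONTHLY_CREDITS = 300   # ASSUMPTION: illustrative only, not a published figure


def cost_per_task(credits_per_task: int) -> float:
    """Effective dollar cost of one task at a given credit burn."""
    return PLAN_PRICE_USD * credits_per_task / MONTHLY_CREDITS


def tasks_per_month(credits_per_task: int) -> int:
    """How many tasks the assumed allotment covers."""
    return MONTHLY_CREDITS // credits_per_task


for credits in (1, 10):
    print(f"{credits:>2} credits/task: "
          f"${cost_per_task(credits):.2f} per task, "
          f"{tasks_per_month(credits)} tasks/month")
```

Under these assumptions the same subscription covers anywhere from 30 to 300 tasks, a tenfold spread that depends entirely on how many credits each task happens to consume.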

6. Security Concerns

The virtual computer model requires users to trust Manus with browser access to potentially sensitive sites. The company has not published SOC 2, ISO 27001, or equivalent certifications. Entering credentials for any service into the Manus browser is a security risk with no documented safeguards.

10 Real Use Cases for Manus AI

Where Manus works (and where it does not):

| # | Use Case | Manus Fit | Notes |
|---|---|---|---|
| 1 | Competitive research | Strong | Multi-site data gathering is its sweet spot |
| 2 | Lead list building | Moderate | Works when target sites allow scraping |
| 3 | Price comparison | Moderate | Breaks on sites with anti-bot measures |
| 4 | Travel planning | Moderate | Demo-worthy but unreliable for booking |
| 5 | Data entry automation | Weak | No integrations = browser-only approach |
| 6 | Code prototyping | Moderate | Disposable VM means no iteration |
| 7 | Report generation | Strong | Synthesizing web data into docs |
| 8 | Form filling | Weak | Fragile across different form frameworks |
| 9 | Team workflow automation | Not supported | No team features, no shared memory |
| 10 | Customer support triage | Not supported | No persistent context, no integrations |

The pattern is clear: Manus works for solo, one-shot, research-heavy tasks. It fails for anything requiring team collaboration, persistent context, or integration with existing tools.

What Users Actually Report

Early testers on Reddit and Hacker News converge on a few recurring observations:

- Research tasks work 70-80% of the time. The agent successfully gathers data from most public websites, but struggles with JavaScript-heavy single-page apps, sites behind Cloudflare bot protection, and pages that require scrolling to load content.

- Complex multi-step tasks fail frequently. Tasks with more than 5 dependent steps have a high failure rate. The agent loses track of its position in the plan, repeats steps, or gets stuck in loops.

- Output formatting is inconsistent. Sometimes you get a clean spreadsheet; sometimes you get a malformed CSV with merged cells. The quality depends on which code path the agent's LLM generates.

- Credit consumption is opaque. Users report widely varying credit costs for similar tasks. A 10-minute research task might consume 1 credit or 5 credits depending on how many retries the agent needs internally.

These reports align with the fundamental challenge of autonomous agents in 2026: LLMs are powerful but unreliable at multi-step planning. The virtual computer architecture gives Manus impressive tool access, but it does not solve the reliability problem at the core of autonomous execution.
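One way to see why long chains fail is to compound per-step success rates. The sketch below assumes every step succeeds independently with the same probability, which is a simplification, and the 0.9 figure is an illustrative input rather than a measured Manus number.

```python
def chain_success(per_step: float, steps: int) -> float:
    """Probability that every step in a chain succeeds (independence assumed)."""
    return per_step ** steps


def required_per_step(target: float, steps: int) -> float:
    """Per-step success rate needed to reach a target end-to-end rate."""
    return target ** (1 / steps)


for steps in (1, 5, 10):
    print(f"{steps:>2} steps at 90% each: {chain_success(0.9, steps):.0%} end-to-end")

print(f"per-step rate needed for 75% over 5 steps: {required_per_step(0.75, 5):.1%}")
```

Under this model a step that works nine times out of ten yields a five-step task that finishes only about 59% of the time, and reaching 75% over five steps requires each step to succeed roughly 94% of the time.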

7 Best Manus AI Alternatives in 2026

1. Taskade Genesis — Best for Teams That Need Multi-Agent Orchestration

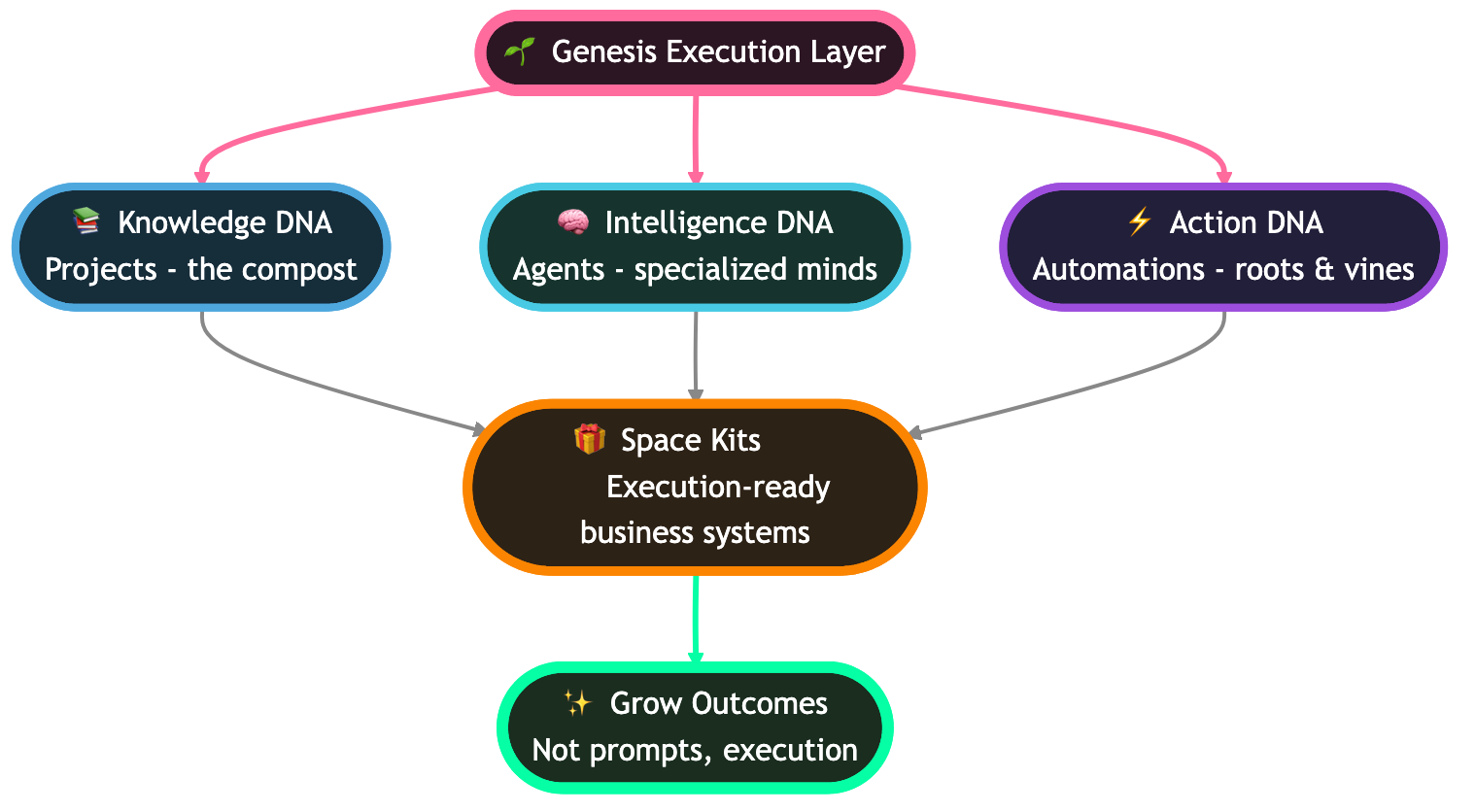

Taskade Genesis is the AI-native workspace where you build, deploy, and run multi-agent systems from a single prompt — with shared memory, automations, and a full workspace backing every agent.

Where Manus gives you one generalist agent operating in a disposable virtual machine, Taskade Genesis gives you a team of specialist agents with shared project memory, custom tools, and reliable automation workflows that persist across sessions. This is the fundamental architectural difference: Manus is a solo player; Taskade Genesis is an entire roster.

Why Taskade Genesis wins for teams:

Taskade's Workspace DNA architecture — Memory + Intelligence + Execution — creates a self-reinforcing loop that Manus cannot replicate. Your projects and data (Memory) feed your AI agents (Intelligence), which trigger automations (Execution), which create more data. Every agent interaction enriches the workspace; every automation reinforces context.

Here is how that plays out in practice:

- Multi-agent orchestration: Deploy specialized agents for research, writing, coding, and analysis, all sharing the same project context. Manus has exactly one agent with one context window. Taskade lets you run multiple agents across your workspace, each with custom tools and persistent memory, and reports 500,000+ agents deployed across its platform.

- 22+ built-in tools: Agents come with tools for web search, code execution, document parsing, image generation, and more — no browser hacking required.

- 100+ integrations: Connect to Slack, Gmail, Google Sheets, GitHub, Salesforce, Shopify, and 94 more platforms natively. Manus has zero integrations; it clicks through browser UIs that break when sites update.

- 11+ frontier models: Choose from models by OpenAI, Anthropic, and Google. Manus locks you to whatever model the company chooses.

- 7 project views: Visualize and manage agent output in List, Board, Calendar, Table, Mind Map, Gantt, and Org Chart views.

- 7-tier RBAC: Control access with Owner, Maintainer, Editor, Commenter, Collaborator, Participant, and Viewer roles. Manus has no access control at all.

- Free tier: 3,000 credits, full workspace access, AI agents included. No invite code, no waitlist, no gatekeeping.

- 150,000+ Genesis apps built and a Community Gallery with 130,000+ shared apps prove this is not vaporware — it is production infrastructure.

+----------------------------------------------------------+
|              TASKADE MULTI-AGENT WORKSPACE               |
|  +----------------------------------------------------+  |
|  |             WORKSPACE DNA (Persistent)             |  |
|  |      Memory <--> Intelligence <--> Execution       |  |
|  |    (Projects)    (AI Agents)     (Automations)     |  |
|  +----------------------------------------------------+  |
|                                                          |
|  +------------+   +------------+   +------------------+  |
|  | Research   |   | Writing    |   | Analysis         |  |
|  | Agent      |   | Agent      |   | Agent            |  |
|  | - Web tool |   | - Doc tool |   | - Data tool      |  |
|  | - Memory   |   | - Memory   |   | - Memory         |  |
|  +-----+------+   +-----+------+   +--------+---------+  |
|        |                |                   |            |
|  +----------------------------------------------------+  |
|  |  SHARED PROJECT MEMORY (Persistent across sessions)|  |
|  |        Vectors + KV + Full-text + File OCR         |  |
|  +----------------------------------------------------+  |
|                                                          |
|  +----------------------------------------------------+  |
|  |                 100+ INTEGRATIONS                  |  |
|  |  Slack | Gmail | Sheets | GitHub | Salesforce ...  |  |
|  +----------------------------------------------------+  |
+----------------------------------------------------------+

Pricing: Free / $6/month Starter / $16/month Pro (10 users) / $40/month Business / Enterprise custom.

2. Claude Agent SDK — Best for Developers Building Custom Agent Loops

The Claude Agent SDK from Anthropic provides Python and TypeScript libraries for building agentic applications on top of Claude models. It gives developers fine-grained control over tool use, memory management, and multi-step reasoning.

The SDK is particularly strong for use cases where you need to define custom tool schemas, implement complex retry logic, or build agents that interact with proprietary APIs. Anthropic's Claude models consistently rank among the best for multi-step reasoning and instruction following, which translates to more reliable autonomous loops compared to alternatives.

However, the Claude Agent SDK is a developer tool, not a product. There is no dashboard, no drag-and-drop builder, and no way for non-technical team members to create or manage agents. You also need to provision your own infrastructure (servers, databases, monitoring) and manage API billing separately.

- Strengths: Best-in-class reasoning (Claude models), extensive tool use API, streaming support, computer use capability

- Weaknesses: Code-only (no UI for non-developers), no built-in workspace, requires infrastructure management

- Pricing: Pay-per-token via Anthropic API

- Best for: Engineering teams building custom autonomous agents with specific tool requirements

3. ChatGPT Operator — Best for Browser-Based Task Automation in the OpenAI Ecosystem

ChatGPT Operator is OpenAI's entry into the autonomous agent space. It uses a model specifically trained for browser interactions to complete tasks like booking restaurants, purchasing products, and filling forms.

Unlike Manus, Operator is tightly integrated into the ChatGPT interface, which means ChatGPT's hundreds of millions of existing users can reach it without a separate app or waitlist, provided they are on a paid plan. The trade-off is scope: Operator handles browser tasks only. There is no terminal, no file system, and no code execution environment. If your use case is "fill out this form" or "find the cheapest price for this product," Operator is more polished and reliable than Manus. If you need to write scripts, process data, or generate documents, Operator cannot help.

- Strengths: Backed by OpenAI infrastructure, familiar ChatGPT interface, integrated with GPT model family

- Weaknesses: Limited to browser tasks, no code execution, no file system, ChatGPT Plus required ($20/month)

- Pricing: Included with ChatGPT Plus ($20/month) and Team ($25/month) plans

- Best for: Individual users already in the OpenAI ecosystem who want browser-based task automation

4. Devin — Best for Autonomous Software Engineering

Devin by Cognition AI is purpose-built for software engineering. It gets its own development environment with IDE, browser, and terminal and can write, test, debug, and deploy code autonomously.

- Strengths: Deep engineering focus, can handle multi-file refactors, creates PRs, runs test suites

- Weaknesses: $500/month price tag, engineering-only (not a general-purpose agent), requires codebase access

- Pricing: $500/month (Team plan)

- Best for: Engineering teams with budget for an AI pair programmer on complex codebases

5. AutoGPT — Best for Open-Source Agent Experimentation

AutoGPT is the open-source project that kicked off the autonomous agent wave in April 2023. It chains LLM calls in a goal-directed loop with memory and tool access. The project was the first mainstream demonstration that LLMs could be given tools and goals and left to iterate autonomously — an idea that directly inspired Manus and dozens of other agent startups.

In 2026, AutoGPT has matured into a more structured platform with a builder UI and a marketplace for sharing agent configurations. However, production reliability remains a challenge. Autonomous loops still get stuck, token costs compound unpredictably, and error handling requires manual intervention. For experimentation and prototyping, AutoGPT is unmatched. For production deployment, most teams graduate to managed platforms.

- Strengths: Fully open-source (MIT), large community (160K+ GitHub stars), highly customizable

- Weaknesses: Unstable in production, high token consumption, requires significant prompt engineering

- Pricing: Free (self-hosted), cloud version varies

- Best for: Developers and researchers experimenting with autonomous agent architectures

6. CrewAI — Best for Multi-Agent Python Frameworks

CrewAI provides a Python framework for orchestrating teams of AI agents with defined roles, goals, and backstories. Each agent can use different tools and collaborate through a structured delegation system.

- Strengths: Multi-agent by design, role-based agent definitions, good documentation, active development

- Weaknesses: Python-only, no UI for non-developers, requires LLM API keys and infrastructure

- Pricing: Open-source framework (free), Enterprise platform pricing varies

- Best for: Python developers building multi-agent applications with role specialization

7. OpenAI Assistants API — Best for Production Agent Deployment

The OpenAI Assistants API provides a managed runtime for building AI agents with tools (code interpreter, file search, function calling), threads for conversation history, and file management.

- Strengths: Managed infrastructure, code interpreter built in, file search with retrieval, function calling

- Weaknesses: Locked to OpenAI models, no autonomous browser access, per-token pricing adds up

- Pricing: Pay-per-token (GPT-4 class models)

- Best for: Teams building production applications that need a managed agent runtime with OpenAI models

Mega Comparison Matrix: Manus AI vs All 7 Alternatives

| Feature | Manus AI | Taskade Genesis | Claude SDK | ChatGPT Operator | Devin | AutoGPT | CrewAI | OpenAI Assistants |
|---|---|---|---|---|---|---|---|---|
| Multi-agent | No | Yes | Manual | No | No | Limited | Yes | No |
| Persistent memory | No | Yes | Manual | Chat history | Session | Manual | Manual | Threads |
| Team collaboration | No | Yes (real-time) | No | Team plan | Slack | No | No | No |
| RBAC | No | 7-tier | No | Basic | Basic | No | No | No |
| Integrations | 0 | 100+ | API only | Plugins | GitHub | API only | API only | Function calling |
| Free tier | No | Yes (3,000 credits) | No | No | No | Yes (self-host) | Yes (OSS) | No |
| Browser automation | Yes | Via agents | Computer use | Yes | Yes | Plugin | Plugin | No |
| Code execution | Yes (VM) | Yes (agents) | Yes | No | Yes (IDE) | Yes | Yes | Code interpreter |
| No-code access | No | Yes | No | Yes | No | No | No | No |
| Entry price | ~$39/month | Free | Pay-per-token | $20/month | $500/month | Free (self-host) | Free (OSS) | Pay-per-token |
| Models available | 1 (provider chosen) | 11+ | Claude family | GPT family | GPT-4 class | Any (via API) | Any (via API) | GPT family |
| Project views | 0 | 7 | 0 | 0 | 0 | 0 | 0 | 0 |
| Autonomous execution | Yes | Yes | Configurable | Yes | Yes | Yes | Yes | Via functions |
| Security certs | None published | Enterprise | SOC 2 | SOC 2 | SOC 2 | N/A (self-host) | N/A (self-host) | SOC 2 |
| Public access | Invite-only | Open signup | Open API | ChatGPT Plus | Waitlist | Open-source | Open-source | Open API |

Should You Use Manus AI in 2026?

The honest answer depends on three variables: your team size, your task type, and your tolerance for unpredictability.

Use Manus if:

- You are a solo researcher or analyst

- Your tasks are one-shot and web-heavy (competitive research, price comparison, data gathering)

- You have an invite code and are comfortable with credits-based pricing

- You do not need to share agent outputs with a team

Skip Manus if:

- You need team collaboration or shared agent workspaces

- You need persistent memory across sessions

- You need integrations with Slack, Gmail, Sheets, CRMs, or developer tools

- You need transparent, predictable pricing

- You need agents that learn from past interactions

- You need automation workflows connected to your agent output

For most teams in 2026, the calculus is simple. Manus solves a narrow problem (autonomous web tasks for individuals) and leaves everything else — collaboration, memory, integrations, automation — on the table.

Architecture: Manus vs Taskade Genesis

Understanding the fundamental architecture difference helps explain why Manus and Taskade serve different users.

Manus: Solo Agent, Disposable Environment

+------------------------------------------+
|        MANUS SESSION (Ephemeral)         |
|                                          |
|   User ---> [Agent] ---> [VM]            |
|              |   ^        |              |
|              v   |        v              |
|          [Plan] [Act]  [Browser]         |
|                        [Terminal]        |
|                        [Files]           |
|                                          |
|   Session ends ---> VM destroyed         |
|   No memory carried ---> Start fresh     |
+------------------------------------------+

Taskade Genesis: Multi-Agent, Persistent Workspace

The key difference: in Manus, the agent's world is born and dies with each session. In Taskade, the workspace is permanent, the memory accumulates, and agents get smarter over time because they share context with the team.

Decision Flowchart: Which Agent Platform Should You Choose?

Free Tier Generosity: Who Actually Lets You Start for Free?

Manus, ChatGPT Operator, and Devin offer zero free access. AutoGPT and CrewAI are free because they are open-source (but you pay for hosting and API keys). Taskade is the only platform offering a managed free tier with AI agents, workspace, and credits included — no infrastructure required, no invite code needed.

Multi-Agent Workflow: How Taskade Specialist Agents Collaborate

In Manus, this workflow is impossible. There is no way to hand off between agents, no shared memory, and no automation trigger. Each step would require a separate, isolated session with manual copy-pasting between them.

Deeper Architecture: The Virtual Computer Stack Visualized

Manus's core architectural bet is the virtual computer — a sandboxed VM containing a browser, terminal, file system, and code runtime, orchestrated by an LLM planner. This approach is conceptually clean but operationally fragile. The diagram below maps the full stack as it exists in the shipping product.

Now compare the Taskade Genesis multi-agent stack — where the workspace itself is the runtime, agents are first-class citizens with persistent memory, and execution is durable rather than ephemeral.

The difference is not cosmetic. Manus collapses all intelligence into one generalist operating in an ephemeral shell. Taskade Genesis splits the work across specialist agents that share a durable workspace — the same Workspace DNA loop described in the Living App Movement.

Single Generalist vs Team of Specialists

Watch what happens when the same task — "research 5 competitors, build a comparison, distribute to stakeholders" — lands on each architecture.

Two architectures, two outcomes. Manus hands you a file. Taskade hands you a repeatable, memoried, team-visible workflow.

Reliability Data: Where the Hype Meets the Ground

Community reports from the first 12 months of Manus access (Reddit r/singularity, r/LocalLLaMA, Hacker News, Product Hunt reviews) converge on a reliability profile that degrades sharply with task length. The chart below approximates reported success rates by task step count.

Short tasks work. Long tasks break. This is the fundamental LLM-planner reliability wall that every autonomous agent hits, and Manus does not have a structural solution to it — the virtual computer gives the agent more tools but not more reliable planning.

Now look at failure modes (what actually breaks):

The top three failure modes — hallucinated clicks, timeouts, and anti-bot blocks — all trace back to the browser-only integration strategy. Manus cannot call a Slack API or a Gmail API directly. It has to click through UIs. When the UI changes, the agent breaks. Taskade's 100+ integrations are first-class API connections, not browser puppetry.

And pricing transparency (or lack thereof):

Taskade is the only managed platform with a permanent free tier that includes AI agents and workspace.

The Autonomy vs Reliability Tradeoff

Every agent platform sits on a tradeoff curve. More autonomy means the agent makes more decisions without you. More reliability means more of those decisions are correct. Manus is high-autonomy, medium-reliability — which sounds exciting until you need to ship.

The AI agents taxonomy covers this tradeoff in depth and places Manus in the "solo browser-native autonomous" quadrant alongside ChatGPT Operator.

Manus or Alternative? A Decision Flowchart

The 8-Platform Landscape Map

Plot the 8 platforms across two axes: DIY framework vs managed platform, and solo vs multi-agent.

Taskade Genesis occupies the managed-multi-agent quadrant alone. That is not a coincidence — it is the hardest quadrant to build because it requires persistent memory, real-time collaboration, 7-tier RBAC, an integration layer, and a workspace backend all working together.

Workspace DNA: The Self-Reinforcing Loop

Memory feeds Intelligence. Intelligence triggers Execution. Execution writes back to Memory. Every loop iteration makes the workspace smarter. Manus has no such loop — the VM dies, the context evaporates, the next task starts cold.

Memory Types: Ephemeral vs Persistent

Task Routing: When to Use What

Capability Matrix: 8 Platforms, 10 Dimensions

+-------------------+-------+---------+--------+---------+-------+---------+--------+----------+
| Capability        | Manus | Taskade | Claude | Operator| Devin | AutoGPT | CrewAI | OpenAI   |
+-------------------+-------+---------+--------+---------+-------+---------+--------+----------+
| Multi-agent       | No    | Yes     | Manual | No      | No    | Limited | Yes    | No       |
| Persistent memory | No    | Yes     | Manual | Chat    | Sess  | Manual  | Manual | Threads  |
| Team collab/RBAC  | No    | 7-tier  | No     | Basic   | Basic | No      | No     | No       |
| Integrations      | 0     | 100+    | API    | Plugins | Git   | API     | API    | Function |
| Free tier         | No    | 3000cr  | No     | No      | No    | OSS     | OSS    | No       |
| No-code UI        | No    | Yes     | No     | Yes     | No    | No      | No     | No       |
| Models available  | 1     | 11+     | Claude | GPT fam | GPT-4 | Any     | Any    | GPT fam  |
| Project views     | 0     | 7       | 0      | 0       | 0     | 0       | 0      | 0        |
| Browser autonomy  | Yes   | via agt | CompU  | Yes     | Yes   | Plugin  | Plugin | No       |
| Security certs    | None  | Ent     | SOC2   | SOC2    | SOC2  | N/A     | N/A    | SOC2     |
+-------------------+-------+---------+--------+---------+-------+---------+--------+----------+

Failure Mode Reference Card

+----------------------+----------+-------------------------+---------------------------+
| Failure mode         | Frequency| Root cause              | Taskade mitigation        |
+----------------------+----------+-------------------------+---------------------------+
| Hallucinated click   | 28%      | Vision misreads DOM     | Native API integrations   |
| Browser timeout      | 22%      | Heavy JS / slow network | Durable retry engine      |
| Anti-bot block       | 18%      | Cloudflare / captcha    | OAuth API auth            |
| Wrong tool selected  | 14%      | Weak tool routing       | Manager Agent + 22+ tools |
| Plan loop (repeat)   | 12%      | LLM planning drift      | Persistent memory check   |
| Out of credits mid   | 6%       | Opaque pricing          | Predictable plan tiers    |
+----------------------+----------+-------------------------+---------------------------+
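
A quick tally shows how much of the card is attributable to driving a browser UI rather than calling an API. The frequencies are copied from the reference card above; grouping the first three rows as "browser layer" is our own assumption for illustration.

```python
# Frequencies copied from the reference card (percent of observed failures).
failure_share = {
    "Hallucinated click": 28,
    "Browser timeout": 22,
    "Anti-bot block": 18,
    "Wrong tool selected": 14,
    "Plan loop (repeat)": 12,
    "Out of credits mid": 6,
}

# Assumption: these three modes are the ones caused by clicking through a browser.
browser_layer = {"Hallucinated click", "Browser timeout", "Anti-bot block"}

total = sum(failure_share.values())
browser_total = sum(v for k, v in failure_share.items() if k in browser_layer)

print(f"total: {total}%, browser layer: {browser_total}%")
```

Under that grouping, roughly two thirds of the listed failures sit in the browser layer, which is the part an API-first integration avoids.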

Expanded Manus Architecture Stack

+--------------------------------------------------------------+
|                      MANUS AGENT LAYER                       |
|  +--------------------------------------------------------+  |
|  | Planner ->  Task Queue ->  Executor  ->   Observer     |  |
|  | (goal       (dependency    (tool call     (vision +    |  |
|  |  decomp)     graph)         dispatch)      text parse) |  |
|  +--------------------------------------------------------+  |
|                                                              |
|  +--------------------------------------------------------+  |
|  | EPHEMERAL VIRTUAL COMPUTER (destroyed per session)     |  |
|  |   +---------+  +---------+  +----------+  +---------+  |  |
|  |   | Chrome  |  | Bash    |  | /tmp fs  |  | Python  |  |  |
|  |   | browser |  | shell   |  | scratch  |  | runtime |  |  |
|  |   +----+----+  +----+----+  +-----+----+  +----+----+  |  |
|  |        |            |             |            |       |  |
|  |        +---- Screenshot + DOM scrape ----------+       |  |
|  +--------------------------------------------------------+  |
|                                                              |
|  +--------------------------------------------------------+  |
|  | LLM BACKBONE (provider-chosen, not user-chosen)        |  |
|  | ~128K ctx window, vision, no persistent state          |  |
|  +--------------------------------------------------------+  |
|                                                              |
|   NO team layer    NO RBAC    NO integrations    NO memory   |
+--------------------------------------------------------------+

Expanded Taskade Genesis Stack

+--------------------------------------------------------------+
|            TASKADE GENESIS MULTI-AGENT WORKSPACE             |
|  +--------------------------------------------------------+  |
|  | 7-TIER RBAC (Owner > Viewer) + Real-time collab        |  |
|  +--------------------------------------------------------+  |
|                                                              |
|  +--------------------------------------------------------+  |
|  | WORKSPACE DNA (durable, self-reinforcing loop)         |  |
|  |  Memory   <->   Intelligence  <->  Execution           |  |
|  |  (projects,     (11+ frontier      (automations,       |  |
|  |   7 views,       models, 22+        100+ integrations, |  |
|  |   vectors,       built-in tools,    Shopify, Slack,    |  |
|  |   OCR, KV)       custom tools)      Gmail, GitHub ...) |  |
|  +--------------------------------------------------------+  |
|                                                              |
|   +----------+  +----------+  +----------+  +------------+   |
|   | Research |  | Writing  |  | Editor   |  | Analysis   |   |
|   | Agent    |  | Agent    |  | Agent    |  | Agent      |   |
|   |persistent|  |persistent|  |persistent|  | persistent |   |
|   | memory   |  | memory   |  | memory   |  | memory     |   |
|   +----+-----+  +----+-----+  +----+-----+  +-----+------+   |
|        |             |             |              |          |
|        +-------- shared Workspace DNA ------------+          |
|                                                              |
|  500,000+ agents deployed | 150,000+ Genesis apps shipped    |
|  130,000+ community apps  | Free tier: 3,000 credits         |
+--------------------------------------------------------------+

Why Taskade Genesis Is the #1 Manus Alternative

We mentioned Taskade Genesis briefly in the alternatives section. It deserves a closer look because the architectural gap is that wide.

Specialist agents, not a single generalist. Manus runs one LLM in a VM. Taskade Genesis lets you deploy a Manager Agent that routes work to Research, Writing, Editor, and Analysis specialists, each with its own persistent memory, custom tool set, and model choice. A research agent might run on Claude for reasoning; a coding agent might run on GPT for tool calling; an analysis agent might run on Gemini for long-context summarization. Manus locks you to a single provider-chosen model per task.
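
To make the manager-to-specialist routing concrete, here is a minimal sketch. Everything in it is hypothetical: the specialist functions, the `ROUTES` table, and the keyword matching are stand-ins for illustration, and a real manager would more likely ask a model to classify the task than match keywords.

```python
from typing import Callable


# Hypothetical specialists; each is just a function from task text to output.
def research(task: str) -> str:
    return f"[research] {task}"


def writing(task: str) -> str:
    return f"[writing] {task}"


def editing(task: str) -> str:
    return f"[editor] {task}"


def analysis(task: str) -> str:
    return f"[analysis] {task}"


# Keyword rules are an assumption made for this sketch only.
ROUTES: list[tuple[tuple[str, ...], Callable[[str], str]]] = [
    (("find", "search", "compare"), research),
    (("draft", "write"), writing),
    (("proofread", "edit"), editing),
    (("analyze", "summarize"), analysis),
]


def manager(task: str) -> str:
    """Send the task to the first specialist whose keywords match."""
    lowered = task.lower()
    for keywords, specialist in ROUTES:
        if any(word in lowered for word in keywords):
            return specialist(task)
    return research(task)  # fallback when nothing matches
```

Calling `manager("Draft a launch email")` hands the task to the writing specialist, while an unmatched task falls back to research. The point of the pattern is that each specialist can carry its own tools and model, which a single generalist agent cannot.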

11+ frontier models, picked per agent. The model choice matters more than hype lets on. Claude Opus is stronger at careful multi-step reasoning; GPT-class models are stronger at tool call schemas; Gemini is stronger at long-context synthesis. Taskade lets you pick. Manus does not.

22+ built-in tools plus Custom Agent Tools (v6.99). Web search, code execution, document parsing, image generation, data analysis, OCR, and more — all native. Custom Agent Tools lets you wire an agent to any internal API with a schema. Manus cannot call APIs natively; it has to click through browser UIs, which is why 28% of its failures are "hallucinated clicks."

100+ integrations (v6.97). Slack, Gmail, Google Sheets, Notion, GitHub, Salesforce, HubSpot, Shopify, Stripe, Discord, Jira, Linear, Airtable, Zapier, Make — first-class OAuth integrations, not browser puppetry. See Zapier alternatives for the broader context.

Persistent memory — Manus's single biggest weakness. Every Manus session starts from zero. Taskade's Workspace DNA stores vectors, key-value state, OCR'd files, and full chat history across sessions, making each new task smarter than the last.

7 project views for tracking agent work. List, Board, Calendar, Table, Mind Map, Gantt, and Org Chart — pick the view that fits how your team thinks about agent output. Manus gives you a file download and walks away.

7-tier RBAC for agent ownership. Owner, Maintainer, Editor, Commenter, Collaborator, Participant, Viewer — control who can deploy agents, who can edit prompts, who can view outputs. Manus has no access control at all.
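
A tiered permission check of this kind can be sketched with an ordered enum. The role names come from the list above; treating that list as running from most to least privileged, and the `MIN_ROLE` thresholds for each action, are assumptions made for illustration and not Taskade's documented policy.

```python
from enum import IntEnum


class Role(IntEnum):
    """The seven roles named above, ordered from least to most privileged."""
    VIEWER = 1
    PARTICIPANT = 2
    COLLABORATOR = 3
    COMMENTER = 4
    EDITOR = 5
    MAINTAINER = 6
    OWNER = 7


# Hypothetical minimum role per action; the real thresholds are not stated here.
MIN_ROLE = {
    "view_output": Role.VIEWER,
    "edit_prompt": Role.EDITOR,
    "deploy_agent": Role.MAINTAINER,
}


def can(role: Role, action: str) -> bool:
    """Allow the action only if the role meets the action's minimum tier."""
    return role >= MIN_ROLE[action]
```

With these assumed thresholds, `can(Role.EDITOR, "edit_prompt")` is true while `can(Role.EDITOR, "deploy_agent")` is false, which is the kind of separation between prompt editing and deployment the paragraph describes.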

Free tier with 3,000 credits. No invite code, no waitlist, no gatekeeping. Start building today at /create. See best AI agent builders and the AI agents taxonomy for the full category landscape.

Social proof that is not vaporware. 500,000+ agents deployed across the platform. 150,000+ Genesis apps built and running. 130,000+ community apps in the Community Gallery — every one of which you can clone and fork. Compare that to Manus's 500,000-person waitlist where nobody can actually log in without an invite.

Manus Pricing, Expanded

| Plan | Listed price | Credits | Access | What you actually get |
|---|---|---|---|---|
| Waitlist | Free | ~5-10 tasks/day | Invite required | Limited daily credits, unstable uptime |
| Pro (rumored) | ~$39/month | Higher daily cap | Invite required | Email support, expanded credits |
| Enterprise | Undisclosed | Custom | Direct sales | Dedicated instance, custom SLAs |
| Taskade Free | $0 | 3,000 one-time + full workspace | Public signup | AI agents, 100+ integrations, 11+ models |
| Taskade Starter | $6/month | Included credits | Public signup | Priority support, full workspace |
| Taskade Pro | $16/month (10 users) | Included credits | Public signup | Team collaboration, 7-tier RBAC |
| Taskade Business | $40/month | Included credits | Public signup | Advanced team controls, SSO |
| Taskade Enterprise | Custom | Custom | Sales | Compliance, dedicated support |

Hallucination Mitigation Playbook

| Symptom | Root cause | Manus response | Taskade Genesis response |
|---|---|---|---|
| Clicked wrong button | Vision misread DOM | Retry from screenshot | Native API call, no click required |
| Made up a URL | LLM hallucination | Fail silently or loop | Source citations in Workspace DNA |
| Invented a data field | LLM drift | Malformed CSV output | Schema-typed outputs via Custom Tools |
| Skipped a required step | Planning drift | Plan corruption | Manager Agent re-planning + memory check |
| Used wrong credentials | No credential store | User must re-enter | OAuth integration layer handles auth |
| Forgot prior context | No persistent memory | Start fresh every session | Workspace DNA persists across sessions |

Autonomy Levels Comparison

| Autonomy level | Definition | Example platforms |
|---|---|---|
| L0: Chat | User drives every turn | ChatGPT, Claude chat |
| L1: Tool use | LLM picks tools, user approves | OpenAI Assistants |
| L2: Short autonomy | Agent runs 1-3 steps alone | ChatGPT Operator |
| L3: Medium autonomy | Agent runs 3-8 steps alone | Manus AI, CrewAI |
| L4: Long autonomy | Agent runs 8+ steps, self-corrects | Devin, AutoGPT |
| L5: Team autonomy | Multi-agent with shared memory | Taskade Genesis |

Manus sits at L3. Taskade Genesis sits at L5 — the only managed platform offering true team autonomy with shared Workspace DNA.

Tool-Use Reliability Table

| Tool category | Manus reliability | Taskade reliability | Why |
|---|---|---|---|
| Web search | High | High | Both use real search APIs |
| DOM click | Medium | N/A | Taskade uses APIs, not clicks |
| Form submit | Low | High | Taskade OAuth, Manus click-through |
| Code execution | Medium | High | Taskade sandboxed, persistent |
| File generation | Medium | High | Persistent storage in Taskade |
| Slack post | Fragile (browser) | Native API | Manus has no Slack integration |
| Gmail send | Fragile (browser) | Native API | Manus has no Gmail integration |
| Sheets write | Fragile (browser) | Native API | Manus has no Sheets integration |

Persistent Memory Comparison

| Memory feature | Manus | Taskade Genesis |
|---|---|---|
| Cross-session recall | No | Yes |
| Vector retrieval | No | HNSW 1536-dim |
| Full-text search | No | Multi-layer |
| File OCR indexing | No | Yes |
| Chat history across agents | No | Yes |
| Project-level context | No | Yes |
| Team-level knowledge | No | Yes (7-tier RBAC) |
| Writeback from automations | No | Yes |
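
For readers unfamiliar with vector retrieval, the idea behind cross-session recall can be sketched as a nearest-neighbor lookup over stored embeddings. This is a deliberate simplification: it uses exact brute-force cosine similarity over toy 3-dimensional vectors rather than an HNSW index over 1536-dimensional ones, and the `store` contents and `recall` function are hypothetical.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


# Toy 3-dimensional "embeddings"; real ones would be produced by a model.
store = {
    "Q3 pricing notes": [0.9, 0.1, 0.0],
    "Onboarding checklist": [0.0, 0.8, 0.6],
    "Competitor price list": [0.8, 0.2, 0.1],
}


def recall(query: list[float], k: int = 2) -> list[str]:
    """Return the k stored items most similar to the query vector."""
    ranked = sorted(store, key=lambda name: cosine(query, store[name]), reverse=True)
    return ranked[:k]
```

A pricing-flavored query such as `recall([1.0, 0.0, 0.0])` surfaces the two pricing documents ahead of the onboarding checklist. An approximate index like HNSW serves the same purpose at scale by trading a little accuracy for much faster lookups.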

Cost Per Successful Task

| Platform | Nominal price | Success rate (est.) | Effective cost per success |
|---|---|---|---|
| Manus Pro | ~$39/mo | 55% (medium tasks) | Implicit premium per retry |
| ChatGPT Operator | $20/mo | ~65% (browser only) | Moderate |
| Devin | $500/mo | ~60% (code tasks) | Very high |
| Claude Agent SDK | Pay-per-token | Depends on dev | Variable |
| Taskade Starter | $6/mo | High (team workflows) | Lowest managed option |
| AutoGPT (self-host) | Free + API | ~40% | Hidden infra cost |
| CrewAI (self-host) | Free + API | ~50% | Hidden infra cost |
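
The last column is qualitative, but the underlying arithmetic is simple: divide the monthly price by the number of tasks you expect to succeed. The sketch below uses the prices and estimated success rates from the table; the figure of 100 attempted tasks per month is purely an assumption, and rows without a numeric price or success rate are left out.

```python
def cost_per_success(monthly_price: float, tasks_per_month: int, success_rate: float) -> float:
    """Monthly price divided by the number of tasks expected to succeed."""
    expected_successes = tasks_per_month * success_rate
    if expected_successes <= 0:
        raise ValueError("need at least one expected success")
    return monthly_price / expected_successes


# Prices and success rates come from the table; 100 tasks/month is an assumption.
TASKS = 100
manus = cost_per_success(39, TASKS, 0.55)
operator = cost_per_success(20, TASKS, 0.65)
devin = cost_per_success(500, TASKS, 0.60)

for name, value in [("Manus Pro", manus), ("ChatGPT Operator", operator), ("Devin", devin)]:
    print(f"{name}: ${value:.2f} per successful task")
```

Because the result scales inversely with task volume, the absolute numbers matter less than the ratio between platforms at the same assumed volume.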

Connecting the Dots: Sprint Sibling Reads

This review is part of a broader sprint on the shape of the AI agent market in 2026. For the full picture, read it alongside:

- The Living App Movement — why static SaaS is losing to AI-native, workspace-backed apps

- AI Agents Taxonomy — the canonical taxonomy that places Manus, Operator, and Genesis

- Best AI Agent Builders in 2026 — 15 platforms compared head-to-head

- NemoClaw Review — the enterprise NVIDIA-forked contrast to Manus

- Gizmo Review — micro-app contrast to Manus's generalist ambition

- Best AI Workspace Tools — workspace-native AI rather than VM-in-a-box

- Community Gallery SEO — how 130K+ community apps drive organic growth

- Taskade Genesis vs ChatGPT Custom GPTs — the other big comparison in this sprint

- Best MCP Servers — the protocol layer Manus does not support

- Best OpenClaw Alternatives — open-source autonomous agents

- Best Claude Code Alternatives — AI coding agents and IDE tools

- What Is Agentic Engineering? — the discipline behind building reliable agents

Hub pages worth bookmarking: /agents, /create, /community.

Related Reading

Explore more from this sprint and our deep-dive library:

- 15 Best Zapier Alternatives in 2026 — automation platforms with AI agent support

- Best AI Dashboard Builders — build dashboards with AI in minutes

- AI Prompt Generators — tools for crafting better agent prompts

- Best AI Flowchart Makers — diagram your agent workflows

- Best Free AI App Builders — build apps without code or budget

- Best AI Translation Tools — AI-powered translation for global teams

- Best PDF to Notes AI — extract structured notes from PDFs

- Best YouTube to Notes AI — turn video content into actionable notes

- What Is Agentic AI? — the complete framework guide

Verdict: Manus AI Is a Proof of Concept, Not a Platform

Manus AI proved that a general-purpose autonomous agent could capture the imagination of millions. The virtual computer architecture is genuinely novel, and the demo-first launch strategy was a masterclass in hype generation.

But a demo is not a product. And in 2026, teams need more than a solo agent running in a disposable VM. They need persistent memory, shared workspaces, multi-agent collaboration, production-grade automations, and integrations with the tools they already use.

Here is the core problem with Manus in one sentence: every session starts from zero. Your agent does not remember what it learned yesterday. It does not know your team's preferences, your company's data, or the context from the last 50 tasks it completed. It wakes up in a blank VM, does its job, and disappears.

Compare that to the Workspace DNA approach: Memory feeds Intelligence, Intelligence triggers Execution, Execution creates Memory. Every interaction makes the system smarter. Every automation enriches the context. Every agent contribution is stored, searchable, and available to the next agent — or the next team member.

Manus is a proof of concept. Taskade Genesis is a platform.

The market is moving fast. The AI agent builder landscape has exploded with options — from open-source frameworks like AutoGPT and CrewAI to enterprise offerings like NemoClaw. But for teams that want to deploy agents without hiring an MLOps team, without managing infrastructure, and without waiting for an invite code, the answer is Taskade:

- Free tier with 3,000 credits

- 100+ integrations out of the box

- 11+ frontier models from OpenAI, Anthropic, and Google

- 500,000+ agents deployed across the platform

- 150,000+ Genesis apps built and running

- 130,000+ community-built apps in the Community Gallery

- No waitlist, no invite code, no gatekeeping

If you are a solo researcher who got an invite code and wants to run one-off web tasks, Manus is worth trying. For everyone else — especially teams — the math points to Taskade.

Build your first multi-agent workspace free →

Frequently Asked Questions

What is Manus AI?

Manus AI is a general-purpose AI agent developed by Monica.im that operates inside a virtual computer environment. It can autonomously browse the web, write and execute code, manage files, and complete multi-step tasks without continuous human guidance. The platform gained widespread attention after a viral demo in March 2025 showing the agent booking flights and building spreadsheets. As of 2026, Manus remains invite-only with a waitlist exceeding 500,000 users.

How much does Manus AI cost?

Manus AI uses a credits-based pricing model. Invite-only access provides limited daily credits (estimated at 5-10 tasks per day). Paid plans reportedly start at approximately $39 per month for additional credits, though pricing has changed multiple times and is not listed on a stable pricing page. By comparison, Taskade starts free with 3,000 credits and paid plans begin at $6 per month.

Is Manus AI available without an invite?

No. Manus AI remains invite-only as of early 2026. Users must join a waitlist or receive an invitation code from an existing user. The company has not announced a public launch timeline. For teams needing immediate access to AI agents, Taskade Genesis offers open signup with a free tier.

Manus AI vs ChatGPT — what is the difference?

ChatGPT is a conversational assistant that responds to prompts in a chat interface. Manus AI is an autonomous agent that operates inside a virtual computer with a real browser, terminal, and file system. ChatGPT requires the user to guide each step of the conversation; Manus attempts to plan and execute entire tasks end-to-end autonomously. ChatGPT offers team workspaces; Manus is individual-only.

Can Manus AI work with a team?

No. Manus AI is designed for individual use and offers no team collaboration features. There is no shared workspace, no role-based access control, no real-time co-editing, and no way for team members to share agent outputs within the platform. Teams that need collaborative AI agents with shared memory and 7-tier RBAC should evaluate Taskade instead.

What are the best Manus AI alternatives in 2026?

The top seven alternatives are: (1) Taskade Genesis for teams needing multi-agent orchestration with 100+ integrations and a free tier; (2) Claude Agent SDK for developers building custom agent loops; (3) ChatGPT Operator for browser-based automation in the OpenAI ecosystem; (4) Devin for autonomous software engineering; (5) AutoGPT for open-source agent experimentation; (6) CrewAI for multi-agent Python frameworks; (7) OpenAI Assistants API for production agent deployment.

Does Manus AI have a free tier?

No. Manus AI does not offer a permanent free tier. New users who receive an invitation get limited free credits that are consumed per task. Once exhausted, users must purchase additional credits. Taskade offers a free tier with 3,000 one-time credits, full workspace access, AI agents, and multi-agent automation — no invite required.

Is Manus AI safe to use for sensitive data?

Manus AI has not published SOC 2, ISO 27001, or equivalent security certifications. The agent operates inside a virtual machine with full browser access, meaning it can navigate to any website and interact with login forms. Users should not input credentials, API keys, or sensitive business data until the company publishes formal security documentation. For enterprise-grade security, evaluate platforms with published compliance certifications and data handling policies.