ElevenLabs is the AI voice company that made machines sound human. In four years, two childhood friends from Poland turned a weekend project into an $11 billion platform that generates voices, music, and sound effects used by millions — from Hollywood studios to solo content creators.

But behind the rocket-ship growth is a story rooted in something deeply personal: the frustration of watching every foreign movie dubbed by a single monotone narrator. In this article, we trace the complete history of ElevenLabs — from Polish dubbing booths to the race to pass the vocal Turing test. 🔮

From $0 to $11B: The ElevenLabs Story — Andreessen Horowitz (a16z) interview with CEO Mati Staniszewski.

TL;DR: ElevenLabs grew from a 2022 weekend project to an $11B AI voice platform with $330M+ ARR and 300+ employees. $500M Series C from Sequoia, 10,000 voices on its marketplace, $10M paid to creators, 90+ languages, and a licensed music model. Now racing to pass the vocal Turing test.

🔊 What Is ElevenLabs?

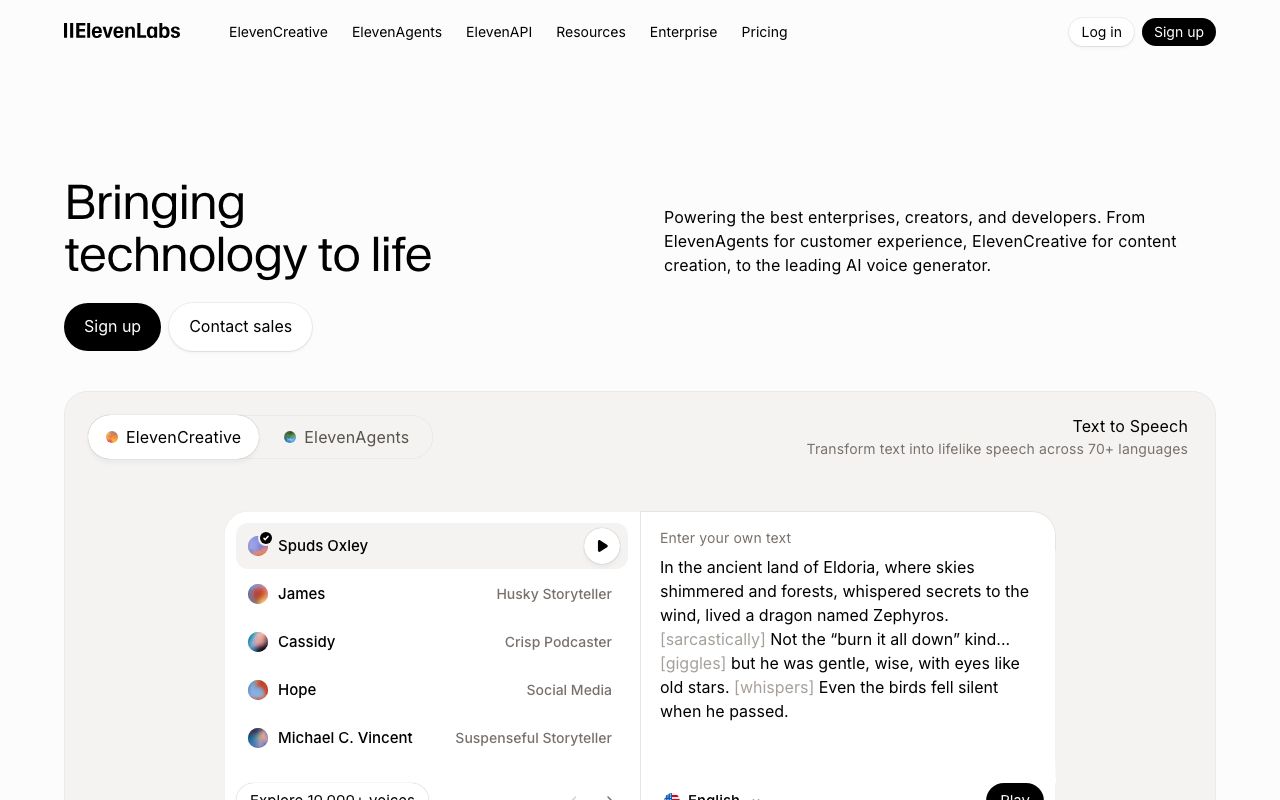

ElevenLabs is an AI audio technology company founded in 2022 by Mateusz "Mati" Staniszewski (CEO) and Piotr "Peter" Dąbkowski (CTO). The company builds AI models that generate realistic human speech, clone voices, produce music and sound effects, dub content across languages, and power real-time voice agents — all from a single platform organized into three product lines: ElevenCreative, ElevenAgents, and ElevenAPI.

"Voice is the only AI modality that can actually make you feel something. Text can give you a poem or a story, but it doesn't give you that same kind of emotive feel as when you hear a voice — whether it's ASMR whispering or a deep booming cinematic voice, it can really transport you."

Mati Staniszewski, CEO of ElevenLabs

What started as a text-to-speech tool has evolved into a full-stack audio AI platform spanning voice synthesis, voice cloning, speech-to-text, voice agents, sound effects, and licensed music generation. The product lineup serves everyone from individual creators narrating YouTube videos to enterprises building conversational AI agents at scale.

ElevenLabs reached an $11 billion valuation after raising $500 million in its Series C from Sequoia Capital, has over 300 employees across 11+ offices worldwide, crossed $330 million in annual recurring revenue, and has paid $10 million back to voice creators through its Voice Library marketplace. The company processes millions of audio generation requests daily across 90+ languages.

But the ambition goes far beyond text-to-speech.

Staniszewski has described the company's ultimate research goal as passing the vocal Turing test — creating AI that sounds indistinguishable from a human in real-time, two-way conversation. Not just reading text aloud, but truly understanding emotion, context, and cadence well enough to make you forget you're talking to a machine.

The ElevenLabs platform homepage. Source: elevenlabs.io

🥚 The History of ElevenLabs

The Polish Dubbing Problem

The story of ElevenLabs begins in Poland — specifically, in front of a television set.

In Poland, foreign movies and TV shows are dubbed using a single narrator who reads all the dialogue regardless of character. One male voice speaks every line — the female lead, the villain, the child. All emotionality, all intonation disappears into a flat monotone reading.

"If you watch a foreign movie in Poland, all the voices — whether it's a male or female voice — are narrated with one single character. One voice speaks all the lines. All the emotionality, all the intonation just disappears."

Mati Staniszewski

This isn't a quirk — it's an economic reality. Poland, a post-communist country, adopted the cheapest possible approach to localization. Full dubbing with multiple actors is expensive. A single narrator reading over the original audio is not. The result is that millions of Polish viewers have never experienced a foreign film the way it was meant to be heard.

For Mati Staniszewski and Piotr Dabkowski, childhood friends who grew up together in Poland, this was more than an annoyance. It was a problem that technology should have solved decades ago.

And as they would discover, it wasn't just a Polish problem. As one investor from China later noted during a conversation with Staniszewski: "We have a lot of western movies dubbed in Chinese — monotoned. So bad."

The same pattern repeats across dozens of countries. Billions of people consume media through a compression layer that strips away the very thing that makes human communication powerful: voice.

From Palantir and Google to a Weekend Project (2021-2022)

By 2021, Staniszewski was working at Palantir and Dabkowski was at Google DeepMind. Both had deep technical backgrounds — Dabkowski in particular was, by multiple accounts, extraordinarily gifted in AI research.

"The second smartest person I know is significantly less smart than him."

Mati Staniszewski on Piotr Dabkowski

On weekends, the two friends explored AI projects together. The state of voice synthesis in 2021 was stuck in a frustrating middle ground. Systems like Siri had moved past the robotic voices of early digital synthesizers, producing speech that sounded "more realistic" on the surface. But it still didn't cross what Staniszewski called "the threshold of actually sounding like a human and actually making you feel something."

The history of synthetic speech stretches back centuries, to the 1700s, when inventors built mechanical devices to mimic the human voice. The first electronic speech synthesizer, the Voder from Bell Labs, appeared in the 1930s. Digital text-to-speech systems emerged in the 1960s and 1970s. Then came Siri in 2011, which brought voice assistants to the mainstream but with a distinctly artificial quality.

A Brief History of Synthetic Speech:

| Year | Milestone | Limitation |
|---|---|---|
| 1770s | Wolfgang von Kempelen builds a mechanical speaking machine | Barely intelligible |
| 1930s | Homer Dudley creates the Voder at Bell Labs | Required trained operators |
| 1960s | First digital text-to-speech systems | Robotic, monotone output |
| 2011 | Apple launches Siri | Better but clearly artificial |
| 2016 | Google DeepMind's WaveNet | Near-human quality but slow generation |
| 2018 | Google Duplex demo | Context-aware but narrow use case |
| 2021 | State of the art | Better quality, but no emotion or personality |
| 2023 | ElevenLabs launches | Context-aware emotion, personality, and cross-lingual cloning |

The Voice Quality Gap (1770s → 2023)

| Era | Voice quality (robotic → indistinguishable) |
|---|---|
| Mechanical (1770s) | ░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░ |
| Digital TTS (1960s) | ██░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░ |
| Siri (2011) | ██████████░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░ |
| WaveNet (2016) | ████████████████████░░░░░░░░░░░░░░░░░░░░░░ |
| ElevenLabs (2023) | ████████████████████████████████████████░░░ |
| Vocal Turing Test | ██████████████████████████████████████████ ← goal |

Staniszewski and Dabkowski invited the first group of testers and started iterating. They explored different approaches, gathered signal on which use cases would resonate most, and slowly built conviction that the timing was right for a breakthrough.

The key insight was that previous generations of voice technology treated synthesis as a signal processing problem — take text, convert to phonemes, generate waveforms. ElevenLabs would treat it as an AI understanding problem — teach a model to comprehend what text means and how it should sound based on context, emotion, and character.

The Launch That Broke Expectations (January 2023)

When ElevenLabs launched publicly in January 2023, they had a few thousand users lined up from their beta testing period. They knew there was interest. They did not know the interest was about to multiply by a hundred.

Within weeks, those few thousand users turned into a few hundred thousand — "a magnitude probably higher than we expected in the first order," as Staniszewski later recalled.

The timing was perfect. ChatGPT had launched two months earlier in November 2022, igniting a global AI frenzy. Every developer, creator, and entrepreneur was looking for the next AI tool that could transform their workflow. ElevenLabs arrived with a product that felt like magic: type text, select a voice, and hear speech so realistic that listeners couldn't tell it was AI-generated.

The early product was focused on two core capabilities:

- Text-to-speech synthesis — Generate realistic speech from any text input with natural intonation and emotion

- Voice cloning — Upload a short audio sample and create a digital replica of any voice

Both capabilities were dramatically better than anything else on the market. Where competitors like Amazon Polly and Google Cloud TTS produced functional but flat output, ElevenLabs voices had warmth, personality, and emotional range.

The use cases that emerged were diverse and unexpected:

- Content creators narrating YouTube videos without recording their own voice

- Audiobook publishers producing books at a fraction of traditional studio costs

- Game developers voicing hundreds of NPCs without hiring hundreds of actors

- Accessibility tools giving natural-sounding voices to people who couldn't speak

- Language learning platforms providing native-sounding pronunciation examples

- Podcast producers creating show intros and ad reads

Rapid Funding and Explosive Growth (2023-2024)

The explosive user growth immediately attracted venture capital. ElevenLabs moved fast through funding rounds:

ElevenLabs Funding Timeline:

| Date | Round | Amount | Valuation | Lead Investors |
|---|---|---|---|---|
| Jan 2023 | Pre-seed | $2M | Undisclosed | CEAS Investments |
| Jun 2023 | Series A | $19M | ~$100M | Nat Friedman, Daniel Gross, Andreessen Horowitz |
| Jan 2024 | Series B | $80M | $1.1B | Andreessen Horowitz, Sequoia Capital, Smash Capital, SV Angel |
| Dec 2024 | Series B extension | Undisclosed | $6.6B | n/a |
| Feb 2026 | Series C | $500M | $11B | Sequoia Capital |

At the Series A, the team was just seven people. The company went from two founders to seven employees to a few dozen within a year, and then scaled rapidly to over 300 employees by 2026 — doubling headcount approximately every six months. Total funding now exceeds $600 million.
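The "doubling every six months" claim can be sanity-checked against the two headcount figures given here. The sketch below is a back-of-the-envelope calculation, not company data: the function names are hypothetical, and the 32-month window (Series A in June 2023 to the Series C in February 2026) is an assumption about when the 300+ figure applies.

```python
import math


def projected_headcount(start: int, months_elapsed: float,
                        doubling_months: float = 6.0) -> float:
    """Headcount after `months_elapsed` if it doubles every `doubling_months`."""
    return start * 2 ** (months_elapsed / doubling_months)


def implied_doubling_months(start: int, end: int, months_elapsed: float) -> float:
    """Doubling period implied by growing from `start` to `end` people."""
    return months_elapsed * math.log(2) / math.log(end / start)


# Figures from the article: 7 people at the Series A, 300+ by early 2026.
# ASSUMPTION: the window runs Jun 2023 -> Feb 2026, i.e. 32 months.
MONTHS = 32

print(round(projected_headcount(7, MONTHS)))              # strict 6-month doubling
print(round(implied_doubling_months(7, 300, MONTHS), 1))  # period implied by 7 -> 300
```

Under these assumptions a strict six-month doubling lands a little under 300 people, and the doubling period implied by 7 to 300 is close to six months, so the two statements are consistent with each other.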

The Series B in January 2024 made ElevenLabs a unicorn at $1.1 billion, just one year after launching publicly. By December 2024, the valuation had climbed to $6.6 billion. Then in February 2026, Sequoia Capital led a massive $500 million Series C at $11 billion — cementing ElevenLabs as one of the most valuable AI startups in the world.

By this point, ElevenLabs had crossed $330 million in annual recurring revenue — a staggering figure for a company that launched just three years earlier.

"Honestly, what really got us excited about investing in the company was chatting with the founders, Mati and Piotr. They had a really unique vision of what the world could look like in the future that a lot of people didn't see yet."

ElevenLabs investor

The fundraising velocity reflected not just user growth but the breadth of the opportunity ElevenLabs was capturing. Voice wasn't just a feature — it was an entirely new interface layer for computing.

Building the Voice Marketplace (2023-2024)

One of ElevenLabs' most innovative moves was launching the Voice Library, a marketplace where anyone could create an AI voice clone and share it with the community. When other users used a shared voice, the creator earned money.

By 2026, the Voice Library hosted nearly 10,000 voices, and ElevenLabs had paid $10 million back to voice creators.

The marketplace produced unexpected discoveries. One of the earliest contributed voices was a deep Spanish male voice. ElevenLabs' cross-lingual technology meant the same voice was instantly available in 90+ languages. But the voice didn't gain traction in Spain. Instead, it exploded in popularity in English-speaking countries because of its distinctive depth and richness. That voice became one of the platform's top three most-used voices across all use cases.

"We had the Spanish voice join us and it wasn't picking up in Spain. Nobody really liked it as much. And then it picked up in an English-speaking country because of that deepness. Now it's our top three voice for all use cases."

Mati Staniszewski

The ElevenLabs Voice Library marketplace hosts nearly 10,000 community voices across 90+ languages. Source: elevenlabs.io

The Voice Library solved a critical scaling challenge: to serve the enormous diversity of use cases across languages, accents, ages, genders, and speaking styles, ElevenLabs needed a massive voice catalog. Building it in-house would be impossibly expensive. The marketplace crowdsourced the solution while creating an economic flywheel — more voices attracted more users, which attracted more voice creators.

The Research Foundation: Context-Aware Synthesis

What made ElevenLabs' models fundamentally different from previous text-to-speech systems was a philosophical choice: resist the urge to add manual controls.

Early users frequently requested a speed slider — the ability to manually adjust how fast or slow the generated voice spoke. The engineering solution was trivial. But ElevenLabs co-founder Piotr Dabkowski insisted on solving it at the research level instead.

"We don't want to become the same as the previous generation of editing suites. Instead, let's solve it on the research level where it will know, based on the voice, exactly how it should speak — with the right speed."

Mati Staniszewski

The team resisted adding the slider for nine months while the research team tried to build a model that would automatically determine the correct speaking speed based on the voice character and text context. When the research approach eventually stalled, they did add the product-level control — but the philosophy remained: whenever possible, let the AI understand context rather than forcing users to manually adjust parameters.

This research-first approach extended across the product:

- Emotion detection: Models automatically adjust tone based on text sentiment — questions sound different from exclamations, sad passages sound different from exciting ones

- Character consistency: A cloned voice maintains its personality across languages, not just its acoustic signature

- Contextual pacing: The model speeds up during action sequences and slows down during contemplative passages without any manual input

The balance between research ambition and product pragmatism became a defining tension at ElevenLabs. As Staniszewski described the internal framework:

- If the research team estimates a capability will take less than three months to solve, the product team waits

- If it will take more than three months, the product team can ship interim solutions using other approaches

This framework allowed ElevenLabs to ship quickly while maintaining research-led innovation as the long-term engine.
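The three-month rule described above amounts to a single threshold check. The sketch below is purely illustrative: the `Capability` type, the function name, and the return labels are hypothetical, and the article does not say what happens at exactly three months, so treating that case as "ship an interim solution" is an assumption.

```python
from dataclasses import dataclass

# Threshold taken from the article's description of the framework.
RESEARCH_WAIT_THRESHOLD_MONTHS = 3


@dataclass
class Capability:
    name: str
    research_estimate_months: float  # research team's own estimate


def product_decision(cap: Capability) -> str:
    """Return what the product team does for a requested capability."""
    if cap.research_estimate_months < RESEARCH_WAIT_THRESHOLD_MONTHS:
        # Research expects to solve it soon, so product waits.
        return "wait_for_research"
    # ASSUMPTION: an estimate of exactly three months also falls here.
    return "ship_interim_solution"


print(product_decision(Capability("automatic pacing", 2)))
print(product_decision(Capability("automatic pacing", 9)))
```

The rule depends entirely on the quality of the research estimate, which is why a capability that looks close can still end up waiting far longer than the threshold suggests.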

🏢 The ElevenLabs Team and Culture

Hiring From Non-Traditional Backgrounds

ElevenLabs built its team with a deliberate bias toward unconventional talent. In the early days especially, the company sought people with "proof of excellence" outside traditional credentials — open-source projects, personal research, gaming leaderboards, anything that demonstrated exceptional ability and obsessive drive.

The results produced some remarkable origin stories:

- One researcher was running a text-to-speech project while completing his master's degree. Dabkowski found his work online and recruited him directly. When he joined, ElevenLabs had 11 desks. Now the company spans 11+ cities.

- Another researcher was working in a call center while simultaneously building an open-source text-to-speech model. ElevenLabs hired him, and he became one of the most brilliant researchers on the data processing team.

- One employee had channeled their ambition into competitive gaming, reaching rank 250 on the European Dota 2 leaderboard with 12,000 hours played. That competitive intensity translated directly into work ethic.

- A team member was working at the White House for President Biden when an ElevenLabs investor told them to "do everything in your power to try to go work there."

"We were, especially in the early days, hiring from very non-traditional backgrounds. We wanted to hire for some proof of excellence — and it could be an open-source project, it could be doing something outside of work."

Mati Staniszewski

The unconventional hiring philosophy was partly necessity — ElevenLabs estimated there were only 50 to 100 researchers in the world working at the frontier of voice AI research. To hire the best, they needed to look everywhere, which led naturally to a remote-first, globally distributed team structure.

No Titles, Flat Hierarchy

ElevenLabs removed all job titles from the company — a decision that served as both a cultural signal and a filtering mechanism.

"We removed all the titles and it's a great way of filtering for people who are very low ego. If you're coming in and saying 'I want to be VP of blah blah,' you're not going to get VP. And that actually turns off those people — but I'd argue that's a good thing."

Mati Staniszewski

The flat structure eliminated implicit bias in asking questions, requesting help, or proposing ideas. Without an explicit hierarchy, a new hire could challenge a senior researcher's approach without the social friction that titles create.

The company organized around roughly 20 small product teams, each with 5-10 people and full autonomy to ship independently. When a new team formed, it had six months to prove its value. If the work delivered results, the team continued. If not, it was dissolved.

This small-team model came with trade-offs — occasional duplicate work, uneven shipping speeds across teams. But the ownership it created was, according to Staniszewski, worth the cost. Each team knew that results were entirely down to them.

Remote-First With Hubs

ElevenLabs started fully remote and has maintained that structure as a core principle, though it evolved the approach as the company scaled past 30 people.

The initial logic was simple: the best voice AI researchers were scattered across the globe. Constraining hiring to San Francisco or London would mean missing world-class talent in Warsaw, Seoul, Singapore, or smaller cities.

As the team grew, ElevenLabs established physical hubs in London, Warsaw, San Francisco, New York, and other cities — not as mandatory offices but as spaces where employees could work alongside colleagues, especially those early in their careers who benefit from in-person immersion.

The hybrid model worked on a simple principle:

- Early career: Hired into a hub for cultural immersion and mentorship

- Experienced and autonomous: Work from anywhere, with hub access whenever wanted

- Everyone: Connected through small-team structure and transparent communication

One unexpected lesson: giving employees access to all Slack channels actually hurt productivity. People read every message even when they didn't need to, creating constant distraction. ElevenLabs learned to deliberately limit information access to force attention — a counterintuitive move in an era that worships transparency.

The Ruthless Sales Machine

ElevenLabs' go-to-market operation, led by Carles Reina, is built on intensity that would make most SaaS companies uncomfortable.

The sales quota at ElevenLabs is 20x base salary — meaning an account executive earning $100,000 per year carries a $2 million annual quota. For context, the standard SaaS quota is 6-10x base salary. Over 80% of ElevenLabs reps hit their number.

"If you don't achieve your quota, then you're going to be out. And we're ruthless on that end."

Carles Reina, Head of Go-to-Market
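To make the quota comparison concrete, the arithmetic can be written out. This is a minimal sketch using only the figures quoted above (a $100,000 base, a 20x multiple, and a 6-10x range for typical SaaS); the function name is hypothetical.

```python
def annual_quota(base_salary: float, multiple: float) -> float:
    """Annual sales quota expressed as a multiple of base salary."""
    return base_salary * multiple


base = 100_000  # example base salary from the article

elevenlabs = annual_quota(base, 20)
saas_low = annual_quota(base, 6)
saas_high = annual_quota(base, 10)

print(f"ElevenLabs quota:   ${elevenlabs:,.0f}")
print(f"Typical SaaS range: ${saas_low:,.0f} to ${saas_high:,.0f}")
print(f"Ratio vs. typical:  {elevenlabs / saas_high:.1f}x to {elevenlabs / saas_low:.1f}x")
```

On the same base salary, the ElevenLabs quota is two to a bit over three times what a rep at a typical SaaS company would carry.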

The sales culture is defined by several distinctive practices:

Monthly pipeline reviews are conducted remotely, with all account executives and CSMs presenting in front of their peers. Each rep gets 7-8 minutes to present closed deals, pipeline status, and expected closes for the next 30 days. During presentations, leadership pulls up random deals from the pipeline to test whether reps have genuine command of their accounts — a mechanism designed to catch inflated pipelines.

Public accountability is a deliberate cultural choice. Underperformance is called out in front of the team, not behind closed doors. This approach contradicts the conventional wisdom of "praise in public, criticize in private."

"If someone hasn't done their job, they haven't done their job. You need to publicly tell them so they understand, and everyone else knows they're getting called out for it."

Carles Reina

Conservative forecasting is enforced from top to bottom. If a deal is projected at $500,000, the team logs it at $24,000. The philosophy: underestimate pipeline value to avoid credibility-destroying misses with the board, and create a forcing function that requires building a larger pipeline to hit targets.

Outbound obsession: When ElevenLabs was doing 90% of deals through inbound leads, Reina set a goal to reach 50/50 inbound-outbound by year end. He published weekly reports naming every account executive and SDR, tracking whether they hit their outbound targets. The constant pressure shifted the mix from 10% outbound to 40% within the year.

The sales team of roughly 90 people globally is expected to travel extensively. Reina himself travels 75% of his time — San Francisco, Mexico City, Tokyo, Seoul, Singapore, London, Dubai in a three-week span. Salespeople who spend too many days in the office raise red flags.

🎙️ The ElevenLabs Product Platform

ElevenLabs has expanded from a single text-to-speech tool into a comprehensive audio AI platform. Here's the full product lineup as of 2026:

ElevenCreative — Studio-quality content creation:

| Product | Description | Key Capability |
|---|---|---|
| Text-to-Speech | Generate realistic speech from text input | 90+ languages, context-aware emotion and pacing |
| Voice Cloning | Create digital replicas from audio samples | Instant (IVC) and Professional (PVC) cloning across 90+ languages |
| Voice Library | Marketplace for sharing and monetizing voices | ~10,000 voices, $10M paid to creators |
| Voice Design | Create entirely new synthetic voices from scratch | Custom age, gender, accent, style from text prompts |
| Voice Remixing | Transform and enhance existing voices | Modify attributes of existing voice recordings |
| Dubbing | Multi-language audio/video translation | Preserves emotion, timing, and lip-sync across languages |
| Music Generation | Create fully licensed AI music | Licensed with major labels (Merlin, Kobalt), with full commercial rights |
| Sound Effects | Generate sound effects from text descriptions | Context-aware audio generation |
| Voice Isolation | Remove background noise and separate speech | Clean audio extraction from noisy recordings |
| Speech-to-Text | Transcribe audio to text | 90+ languages, real-time and batch transcription |
| Image & Video | Generate and edit visuals from text | Text-to-image and reference-based editing |

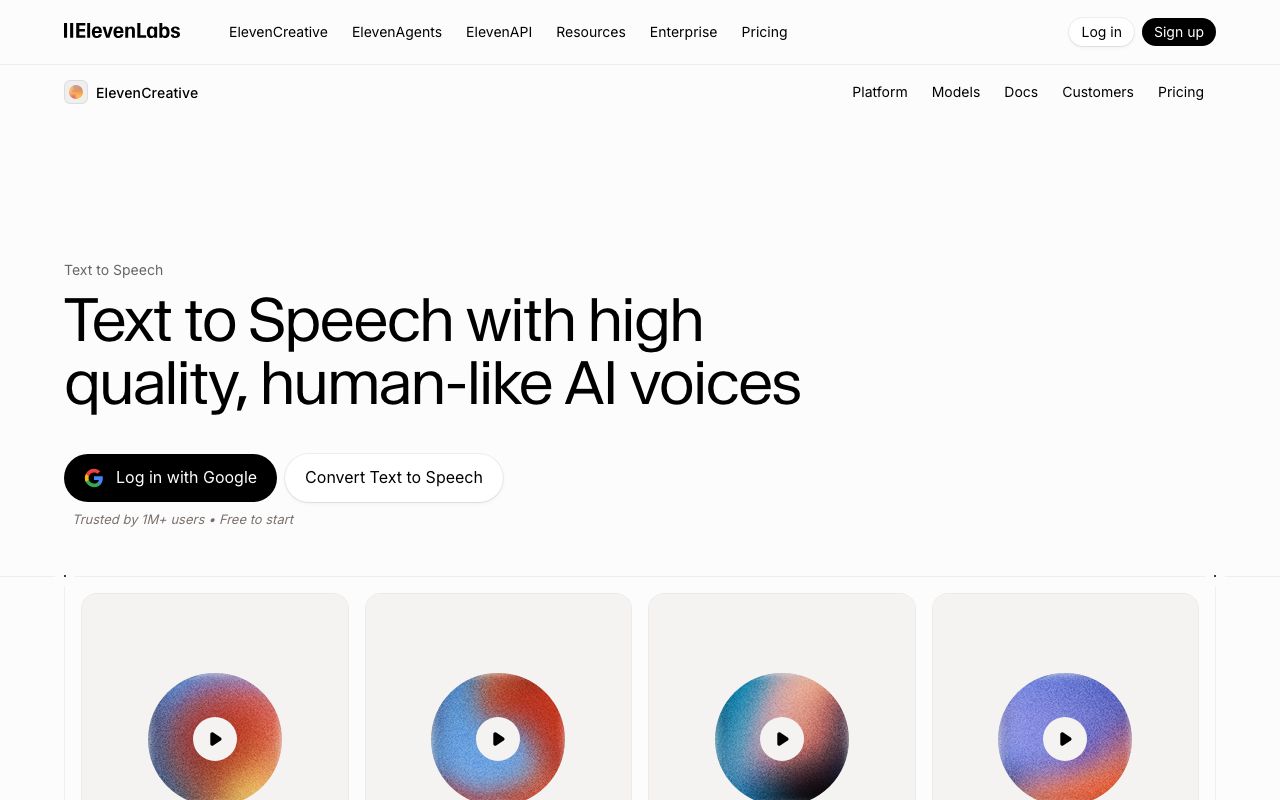

The ElevenLabs text-to-speech interface supports 90+ languages with context-aware emotion. Source: elevenlabs.io

ElevenAgents — Conversational AI:

| Product | Description | Key Capability |
|---|---|---|
| Voice Agents | Build conversational AI voice experiences | Real-time two-way voice with LLM integration |
| Telephony | Voice agents over phone lines | Twilio, Vonage, Telnyx, Plivo, SIP trunking |
| CRM Integration | Connect voice agents to business tools | Zendesk, HubSpot, Salesforce, Cal.com |

ElevenAPI — Developer tools:

| Product | Description | Key Capability |
|---|---|---|
| REST API | Full platform access via HTTP | All capabilities programmatically accessible |
| WebSocket Streaming | Real-time audio streaming | Low-latency voice generation and agent interaction |
| SDKs | Client libraries for major platforms | Python, JavaScript, React, React Native, Kotlin, Swift |
| MCP Integration | Model Context Protocol support | External tool and data source integration |
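As a rough illustration of what "full platform access via HTTP" looks like in practice, here is a minimal sketch of a text-to-speech request built with Python's standard library. The endpoint path, the `xi-api-key` header, and the `eleven_multilingual_v2` model ID reflect ElevenLabs' public API documentation as best understood at the time of writing; treat them as assumptions and check the current API reference before relying on them. The helper names `build_tts_request` and `synthesize` are hypothetical.

```python
import json
import urllib.request

# ASSUMPTION: base URL and route as described in ElevenLabs' public API docs.
API_BASE = "https://api.elevenlabs.io/v1"


def build_tts_request(text: str, voice_id: str, api_key: str,
                      model_id: str = "eleven_multilingual_v2") -> urllib.request.Request:
    """Build (but do not send) a text-to-speech POST request."""
    url = f"{API_BASE}/text-to-speech/{voice_id}"
    body = json.dumps({"text": text, "model_id": model_id}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={"xi-api-key": api_key, "Content-Type": "application/json"},
    )


def synthesize(text: str, voice_id: str, api_key: str,
               out_path: str = "speech.mp3") -> str:
    """Send the request and write the returned audio bytes to `out_path`."""
    req = build_tts_request(text, voice_id, api_key)
    with urllib.request.urlopen(req) as resp, open(out_path, "wb") as f:
        f.write(resp.read())
    return out_path
```

Calling `synthesize("Hello from ElevenLabs", voice_id, api_key)` with a real voice ID and API key would perform the network call. Keeping request construction separate from sending makes the sketch easy to inspect without credentials.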

Enterprise features include HIPAA compliance, TCPA compliance, Single Sign-On (SSO), Zero Retention Mode for data privacy, workspace management with billing groups, and private deployment options.

Text-to-Speech: The Core Product

The text-to-speech engine is ElevenLabs' foundational product and the one that made the company famous. Unlike traditional TTS systems that produce flat, robotic output, ElevenLabs models generate speech with natural emotional inflection, appropriate pacing, and character-consistent delivery.

The technology has evolved through three major model generations:

| Version | Release | Key Improvement |
|---|---|---|
| V1 | Jan 2023 | First model, with a dramatic quality improvement over competitors |
| V2 | Mid 2023 | Better stability, reduced artifacts, expanded language support |
| V3 (Voice Design) | 2024 | Create entirely new voices from text descriptions |

Each generation has pushed closer to what Staniszewski calls "crossing the threshold" — the point where AI-generated speech is indistinguishable from a human recording.

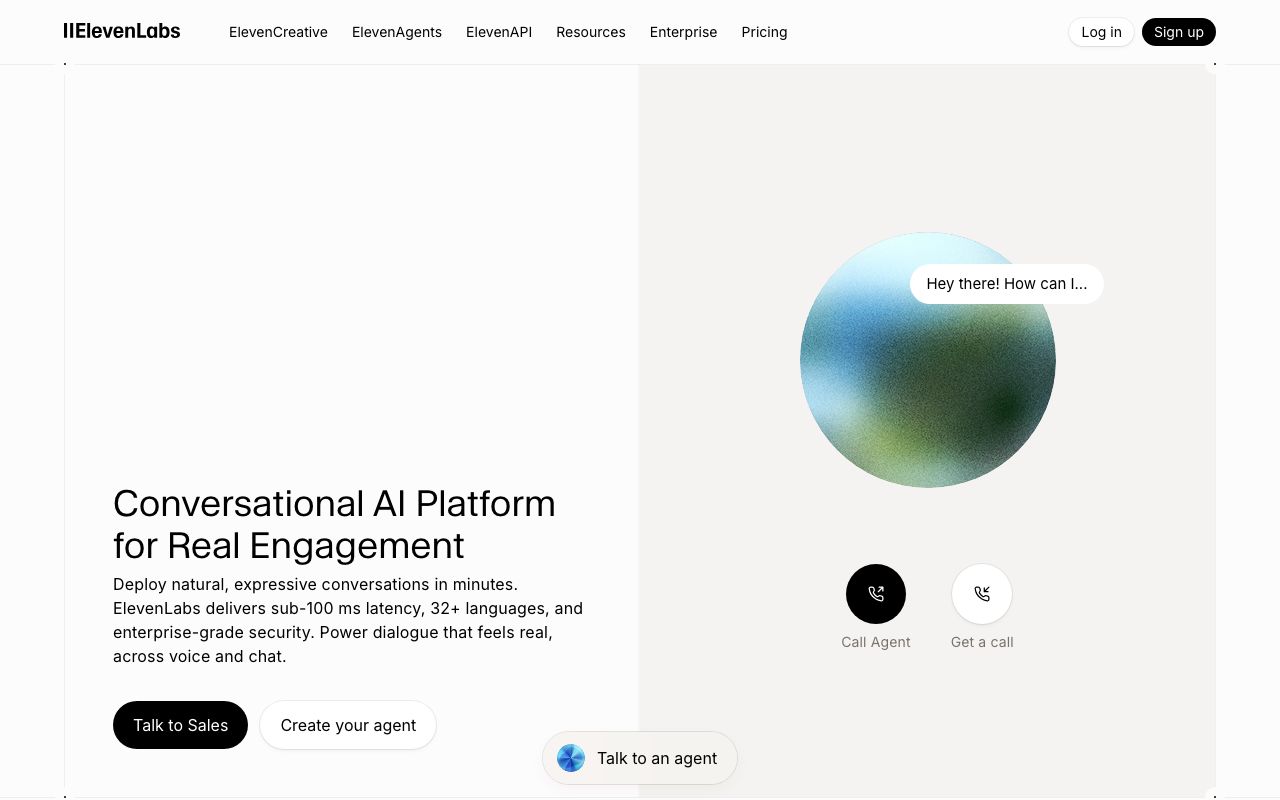

Voice Agents: The Next Interface

ElevenLabs voice agents platform — building conversational AI with real-time voice interaction. Source: elevenlabs.io

ElevenLabs' voice agent platform represents the company's bet that voice will become the primary interface between humans and computers, a shift as significant as the move from keyboards to touchscreens.

"A lot of things are screen-first. Most people will have the laptop, the phone most of the day in front of them. I think a lot of that will move into the background where you will be able to be a lot more present."

Mati Staniszewski

The vision extends far beyond customer service chatbots. Staniszewski imagines a future where:

- Education: Students wear headphones and have a personal AI tutor — the world's best physicist, mathematician, or historian — guiding them through any subject in real time

- Travel: Visitors to foreign countries speak any language fluently through real-time voice translation, understanding not just what is said but how it's said — cultural nuance preserved alongside linguistic meaning

- Communication: Most human-machine interaction shifts to audio because it's faster and more information-rich than text

The technical argument for audio-first AI is compelling: training models on raw audio rather than text tokens captures information that text-based training misses entirely. Tone, hesitation, emphasis, sarcasm, warmth — all encoded in the audio signal, invisible to text-only models.

"If you train a model on text, you're using text units — tokens created by humans. If you train a general audio generation model, you're training on raw audio. If you can make a model that is smart in audio, you can imagine you can make a model that is smart in any raw data domain."

Mati Staniszewski

ElevenLabs CEO Mati Staniszewski on why voice is the next AI interface — from product philosophy to global hiring strategy.

Music and the Universal Audio Model

ElevenLabs music generation platform — creating studio-grade music with natural language prompts, fully licensed with major labels. Source: elevenlabs.io

In 2024-2025, ElevenLabs expanded beyond speech into music and sound effects generation. The music model was built through an 18-month licensing negotiation with labels including Merlin and Kobalt, covering four of the major music labels.

The licensing process was arduous. Staniszewski used "forcing functions" — deadline-driven ultimatums that created urgency: "We either do this together or we do it separately." The forcing functions had to be moved a few times, but they ultimately drove the deals to completion.

The result: ElevenLabs is one of the few AI music generation platforms that provides full commercial rights to generated music, backed by legitimate licensing agreements. Users can generate music for commercial projects without copyright risk.

But the music model isn't the endgame — it's a stepping stone toward what ElevenLabs calls the universal audio model:

"Currently, we have specialized models for audio, for sound effects, and for music. The future of sound is having one model which can generate any kind of audio. You could imagine converting voice to music, or changing singing into sound effects."

Mati Staniszewski

The universal audio model, a single system that seamlessly generates and transforms any type of sound, represents ElevenLabs' long-term research ambition and the logical extension of its audio-first AI thesis.

Today (specialized models): separate Voice, Music, and SFX models.

Future (universal audio model): one model, any sound. Voice ↔ Music ↔ Effects. Any language. Any style.

📊 ElevenLabs by the Numbers

A snapshot of ElevenLabs' key metrics as of early 2026:

| Metric | Value |
|---|---|
| Founded | 2022 (launched January 2023) |
| Valuation | ~$11 billion (Series C, Feb 2026) |
| Total Funding | $600M+ |
| ARR | $330M+ (as of Jan 2026) |
| Employees | 300+ across 11+ cities |
| Voice Library | ~10,000 community voices |
| Creator Payouts | $10M+ paid to voice creators |
| Languages | 90+ (TTS, STT, and voice cloning) |
| Product Lines | 3 (ElevenCreative, ElevenAgents, ElevenAPI) |
| Product Teams | ~20 squads of 5-10 people |
| Go-to-Market Team | ~90 people globally |
| Sales Quota Attainment | 80%+ of reps hit 20x base salary quota |
| Growth Rate | Headcount doubling ~every 6 months |

🏆 The Competitive Landscape

ElevenLabs operates in a rapidly expanding AI audio market with competition from both tech giants and startups:

| Company | Focus | Strength | Limitation |
|---|---|---|---|
| ElevenLabs | Full-stack audio AI | Voice quality, marketplace, cross-lingual cloning, licensed music | Premium pricing for high-volume use |
| Amazon Polly | Cloud TTS | AWS integration, low cost, reliable | Limited emotional range, no voice marketplace |
| Google Cloud TTS | Cloud TTS | WaveNet quality, language coverage, infrastructure | No voice cloning marketplace, enterprise-focused |
| Microsoft Azure Speech | Enterprise speech | Enterprise integration, custom neural voice | Complex setup, tied to Azure ecosystem |
| OpenAI (Voice Mode) | Conversational AI | ChatGPT integration, real-time conversation | Voice is a feature, not the core product |
| PlayHT | Voice cloning & TTS | Affordable pricing, API-first | Smaller voice library, fewer languages |
| Resemble.AI | Voice cloning | Real-time cloning, deepfake detection | Narrower product scope |
| Descript | Audio/video editing | Overdub feature, editing-first workflow | Voice is secondary to editing tools |
| Suno / Udio | AI music | Music-first approach, creative tools | Voice and speech are not core offerings |

ElevenLabs' key competitive advantage is its full-stack approach: voice synthesis, cloning, marketplace, agents, speech-to-text, dubbing, sound effects, and licensed music in a single platform. Most competitors specialize in one or two capabilities.

The second advantage is voice quality. While Google's WaveNet and Amazon's neural voices have improved significantly, ElevenLabs models consistently produce more emotionally expressive and contextually aware output — the gap that made the company famous at launch.

The third advantage is enterprise readiness. ElevenLabs offers HIPAA compliance, SSO, Zero Retention Mode, and private deployment — features that unlock healthcare, financial services, and government use cases where competitors like PlayHT and Resemble.AI lack the certifications.

⚠️ Controversies and Safety Challenges

ElevenLabs' rapid rise wasn't without controversy. The same voice cloning technology that made the platform revolutionary also created risks that the company has had to address publicly.

Voice Cloning Misuse (2023)

Within weeks of launch, users began cloning voices of public figures — celebrities, politicians, and media personalities — without consent. Clips of AI-generated speech from recognizable voices spread across social media, raising alarms about deepfakes and misinformation.

ElevenLabs responded by implementing several safeguards:

- Verification requirements for Professional Voice Cloning (PVC) — users must provide proof of consent from the voice owner

- AI-powered detection tools to identify content generated by ElevenLabs models

- Abuse reporting mechanisms and content moderation policies

- Watermarking of generated audio to enable tracing

The company acknowledged the challenge publicly and framed it as an industry-wide responsibility rather than a problem unique to ElevenLabs.

ElevenLabs Safety Stack

Prevention:

- PVC consent verification

- Usage monitoring & rate limiting

- Content policy enforcement

Detection:

- AI-powered deepfake detection

- Audio watermarking & provenance

- Abuse reporting mechanisms

Enterprise controls:

- HIPAA & TCPA compliance

- Zero Retention Mode

- SSO & workspace management

- Private deployment options
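As a rough illustration of how the prevention-layer checks listed above might compose, here is a minimal Python sketch. It is hypothetical and not ElevenLabs' implementation: the `CloneRequest` type, the `prevention_check` function, the consent registry, and the hourly limit of 20 are all assumptions made up for this example.

```python
from dataclasses import dataclass
from typing import Set, Tuple


@dataclass(frozen=True)
class CloneRequest:
    """Hypothetical voice cloning request (illustrative fields only)."""
    user_id: str
    voice_owner_id: str
    professional: bool  # True for a PVC-style, high-fidelity clone


def prevention_check(
    request: CloneRequest,
    consented_pairs: Set[Tuple[str, str]],
    requests_this_hour: int,
    hourly_limit: int = 20,  # assumed limit, not a real product setting
) -> Tuple[bool, str]:
    """Return (allowed, reason) after rate limiting and consent checks."""
    # Usage monitoring and rate limiting: reject bursts of requests first.
    if requests_this_hour >= hourly_limit:
        return False, "rate_limited"
    # Consent verification: professional clones need recorded owner consent.
    pair = (request.user_id, request.voice_owner_id)
    if request.professional and pair not in consented_pairs:
        return False, "missing_consent"
    return True, "ok"


consents = {("alice", "alice")}  # alice has verified consent for her own voice
own_voice = CloneRequest("alice", "alice", professional=True)
someone_else = CloneRequest("alice", "celebrity", professional=True)

result_own = prevention_check(own_voice, consents, requests_this_hour=3)
result_other = prevention_check(someone_else, consents, requests_this_hour=3)
result_burst = prevention_check(own_voice, consents, requests_this_hour=20)
```

In this sketch the cheap rate-limit check runs before the consent lookup, so abusive bursts are rejected without touching the consent registry. A real system would order and combine these checks according to its own policies.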

Navigating Creative Industry Concerns

The entertainment industry initially viewed ElevenLabs with suspicion: would AI voices replace human voice actors? During the 2023 Hollywood strikes, SAG-AFTRA (the union representing screen and voice performers) made AI voice replication an explicit labor issue in its negotiations.

ElevenLabs navigated this by positioning itself as a tool that empowers voice actors rather than replaces them. The Voice Library marketplace gives voice actors a new revenue stream — they create an AI version of their voice and earn royalties when it's used. The $10 million paid to voice creators by 2026 became the company's most powerful counterargument to displacement fears.

Staniszewski described the approach as "figuring out how we can bring the industry together to disrupt together rather than just to disrupt."

The Strategic Pivot: "The Real Money Isn't in Voice"

In October 2025, Staniszewski made a provocative statement: "The real money isn't in voice anymore." He predicted that AI audio models would be commoditized over time — a remarkable admission from the CEO of the world's leading voice AI company.

The statement signaled ElevenLabs' strategic evolution beyond voice synthesis into conversational AI agents, enterprise platforms, and the broader goal of a universal audio model. Voice was the wedge; the platform play is the endgame. This trajectory mirrors how Taskade started as a productivity tool and evolved into a full AI workspace with Genesis app building, custom AI agents, and workflow automation: voice and text are modalities, but the real value is in the intelligence and execution layer.

🔮 The Road Ahead: The Vocal Turing Test

ElevenLabs has stated its next major research challenge explicitly: pass the vocal Turing test.

"The new challenge we've really set ourselves is: can we be the first company to cross this threshold of the vocal Turing test? How do you have an AI which really sounds like a human that you can interact back and forth with — but is super smart, super empathetic?"

Mati Staniszewski

This isn't about generating a realistic audio clip — ElevenLabs can already do that. The vocal Turing test is about real-time, two-way conversation where the AI:

- Responds with natural latency (not too fast, not too slow)

- Matches emotional tone to the conversation context

- Uses vocal fillers, pauses, and breathing patterns naturally

- Adjusts personality and warmth based on the speaker

- Maintains character consistency across long interactions

The implications of passing this threshold extend far beyond ElevenLabs as a company. Voice as an interface would transform:

- Customer experience: Every business could have 24/7 voice support indistinguishable from human agents

- Education: Personalized tutoring with AI that adapts its teaching style through vocal cues

- Healthcare: Mental health support through empathetic, always-available voice companions

- Accessibility: Natural communication for people with speech disabilities

- Entertainment: Interactive storytelling where AI characters have genuine vocal personalities

Staniszewski calls this "the most interesting thing": success with audio intelligence could unlock intelligence in any raw data domain, not just text. If a model can understand the nuances of human speech, it can potentially understand any complex signal.

🤝 How Taskade Genesis Compares: From Voice AI to Workspace AI

ElevenLabs and Taskade represent two different bets on how AI will reshape work. ElevenLabs is building the voice layer — making machines sound human. Taskade Genesis is building the workspace layer — making AI teammates that think, plan, and execute alongside you.

Taskade Genesis operates on a "Living Trinity" architecture called Workspace DNA — three interconnected pillars that create a self-reinforcing loop:

- Memory (Projects): Custom databases, feedback systems, client profiles, and product catalogs that accumulate context over time

- Intelligence (AI Agents): Persistent AI teammates with custom tools, 22+ built-in tools, multi-model support (11+ frontier models from OpenAI, Anthropic, and Google), and public embedding — not stateless chatbots, but agents that continuously learn your business context

- Execution (Automations): Durable workflows with branching, looping, filtering, and 100+ integrations including Stripe, Shopify, Slack, HubSpot, and Google Sheets

Memory feeds Intelligence, Intelligence triggers Execution, Execution creates Memory — a self-reinforcing loop that gets smarter with every interaction.

Taskade Genesis — build live apps, dashboards, and workflows from a single prompt with embedded AI agents.

Here's how the two platforms compare:

| Capability | ElevenLabs | Taskade Genesis |
|---|---|---|
| Core Focus | AI voice and audio generation | AI workspace: apps, agents, and automation |
| AI Models | Proprietary voice/audio models | 11+ frontier models (OpenAI, Anthropic, Google) |
| Interface | Voice-first (audio as primary modality) | Workspace-first (7 project views: List, Board, Mind Map, etc.) |
| Agent Type | Voice agents (conversational, telephony) | Custom AI agents with persistent memory, custom tools, and public embedding |
| Automation | Voice agent workflows, CRM triggers | 100+ integration automations with durable execution |
| App Building | Audio generation platform | Build live apps from prompts: dashboards, portals, forms, workflows |
| Collaboration | Voice Library marketplace | Real-time multiplayer workspace with role-based access |
| Enterprise | HIPAA, SSO, Zero Retention | SOC 2, SSO, custom domains, password-protected apps |
| Pricing | Usage-based voice generation | Free to $40/mo for full workspace access |
| Community | Voice Library (10K voices) | Community Gallery: templates, agents, and Genesis apps |

Taskade AI Agents — persistent AI teammates with custom tools, multi-model support, and public embedding.

For teams that need AI-powered project management, task automation, and app creation — not just audio generation — Taskade Genesis lets you describe what you need in plain language and get a live, deployed application with embedded AI agents and workflow automations. No coding. No deployment. No hosting.

❓ Frequently Asked Questions

What is ElevenLabs?

ElevenLabs is an AI audio technology company founded in 2022 by Mateusz Staniszewski and Piotr Dąbkowski. The company builds AI models for voice synthesis, voice cloning, speech-to-text, dubbing, voice agents, sound effects, music generation, and image/video creation. Organized into three product lines — ElevenCreative, ElevenAgents, and ElevenAPI — ElevenLabs is valued at approximately $11 billion with $330M+ ARR and over 300 employees worldwide.

Who founded ElevenLabs?

ElevenLabs was co-founded by Mateusz "Mati" Staniszewski (CEO) and Piotr "Peter" Dąbkowski (CTO), childhood friends from Poland. Staniszewski previously worked at Palantir and Dąbkowski at Google DeepMind. They started the company as a weekend project in 2021-2022 before launching publicly in January 2023.

How much is ElevenLabs worth?

ElevenLabs reached an approximately $11 billion valuation after raising $500 million in its Series C from Sequoia Capital in February 2026. The company has raised over $600 million in total funding from investors including Sequoia Capital, Andreessen Horowitz, Smash Capital, Nat Friedman, Daniel Gross, and SV Angel. Annual recurring revenue crossed $330 million as of January 2026.

What languages does ElevenLabs support?

ElevenLabs supports 90+ languages across text-to-speech synthesis, speech-to-text transcription, and voice cloning. The cross-lingual voice cloning feature allows a single voice to speak naturally in any supported language while preserving its vocal identity and characteristics.

What is the ElevenLabs Voice Library?

The Voice Library is a marketplace where users create AI voice clones and share them publicly. When other users use a shared voice, the creator earns money. The marketplace hosts nearly 10,000 voices, and ElevenLabs has paid over $10 million back to voice creators.

How does ElevenLabs voice cloning work?

ElevenLabs offers two cloning methods: Instant Voice Cloning (IVC) from short audio samples and Professional Voice Cloning (PVC) for higher-fidelity replicas with consent verification. Both capture acoustic characteristics — tone, age, gender, dialect, accent, and style — and generate new speech across 90+ languages while preserving the original voice identity. The technology goes beyond simple acoustic matching to capture personality and emotional range.

What is ElevenLabs music generation?

ElevenLabs offers a fully licensed AI music model built through partnerships with labels including Merlin and Kobalt (covering four major labels). Unlike unlicensed music AI tools, ElevenLabs provides full commercial rights to generated music. The 18-month licensing negotiation process was one of the first of its kind in the industry.

What are ElevenLabs voice agents?

Voice agents are ElevenLabs' platform for building real-time conversational AI experiences. The platform combines voice synthesis and speech-to-text with LLM integration, enabling applications across customer support, education, gaming, and immersive media. Voice agents represent ElevenLabs' bet that voice will become the next fundamental human-computer interface.
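The combination described above (speech-to-text, then an LLM, then voice synthesis) can be sketched as a simple turn loop. This is a minimal illustration under stated assumptions, not ElevenLabs' architecture or API: `VoiceAgent`, `transcribe`, `generate_reply`, and `synthesize` are hypothetical names, and the toy stand-ins below exist only so the example runs without any external service.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

Turn = Tuple[str, str]  # (speaker, text)


@dataclass
class VoiceAgent:
    """One conversational turn: audio in -> text -> reply text -> audio out."""
    transcribe: Callable[[bytes], str]           # speech-to-text stage (hypothetical)
    generate_reply: Callable[[List[Turn]], str]  # LLM stage (hypothetical)
    synthesize: Callable[[str], bytes]           # text-to-speech stage (hypothetical)
    history: List[Turn] = field(default_factory=list)

    def handle_turn(self, audio_in: bytes) -> bytes:
        user_text = self.transcribe(audio_in)
        self.history.append(("user", user_text))
        # The LLM sees the whole conversation so replies can stay in context.
        reply_text = self.generate_reply(self.history)
        self.history.append(("agent", reply_text))
        return self.synthesize(reply_text)


# Toy stand-ins: treat the audio bytes as UTF-8 text and echo the user.
def fake_transcribe(audio: bytes) -> str:
    return audio.decode("utf-8")


def fake_llm(history: List[Turn]) -> str:
    return f"You said: {history[-1][1]}"


def fake_tts(text: str) -> bytes:
    return text.encode("utf-8")


agent = VoiceAgent(fake_transcribe, fake_llm, fake_tts)
audio_out = agent.handle_turn(b"hello")
```

Keeping the three stages as swappable callables mirrors the idea that each layer can be replaced independently. A production agent would also need streaming and interruption handling to hold a real-time conversation, which this sketch leaves out.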

How does ElevenLabs compare to Amazon Polly?

ElevenLabs differentiates from Amazon Polly through emotional expressiveness, voice cloning quality, and context-aware synthesis. Amazon Polly excels at reliable, low-cost speech generation with deep AWS integration. ElevenLabs produces more emotionally nuanced output and offers a voice marketplace, cloning, agents, and music generation.

What is the vocal Turing test?

The vocal Turing test is ElevenLabs' stated research goal of creating AI speech indistinguishable from human speech in real-time two-way conversation. This goes beyond text-to-speech to include empathy, emotional responsiveness, natural pacing, and conversational dynamics: the ability to make a listener forget they're interacting with a machine.

Is ElevenLabs remote or in-person?

ElevenLabs is remote-first with physical hub offices in London, Warsaw, San Francisco, New York, and other cities across 11+ locations. The company removed all job titles and operates with roughly 20 autonomous product teams of 5-10 people. Early-career hires are typically placed in hubs for cultural immersion.

What is ElevenLabs pricing?

ElevenLabs offers a free tier with limited character generation, plus paid plans for higher volumes. Enterprise pricing is available for large-scale deployments with HIPAA compliance, SSO, and Zero Retention Mode. The API provides usage-based pricing for developers building voice-powered applications.

What is ElevenLabs dubbing?

ElevenLabs dubbing automatically translates audio and video content across languages while preserving the speaker's emotion, timing, and vocal identity. Unlike traditional dubbing that requires hiring voice actors for each language, ElevenLabs dubbing handles the translation, voice matching, and lip-sync alignment in a single automated pipeline across 90+ languages.

Is ElevenLabs safe to use for enterprise?

ElevenLabs offers enterprise-grade security features including HIPAA compliance for healthcare applications, TCPA compliance for telecommunications, Single Sign-On (SSO), Zero Retention Mode for data privacy, workspace management with billing groups and user groups, and private deployment options. The platform also includes AI-powered detection tools to identify ElevenLabs-generated content and abuse reporting mechanisms.

📚 Related Reading

AI Company Histories:

- What Is OpenAI? Complete History of ChatGPT, GPT-5 & More

- What Is Anthropic? Complete History of Claude AI & Claude Code

- What Are AI Agents? The Complete Guide

AI Tools & Comparisons:

- Best AI App Builders in 2026

- Claude Code vs Cursor vs Taskade Genesis

- What Is Vibe Coding? Complete History

- What Is Agentic Engineering? Complete History

Taskade Platform:

- Taskade AI Agents — Build Custom AI Teammates

- Taskade Genesis — Build Apps From Prompts

- Taskade Automations — Connect 100+ Integrations

- Taskade Community — Explore Templates and Apps

- Create Your First App

Last updated: March 2026