On March 17, 2026 at NVIDIA GTC in San Jose, Jensen Huang stepped onto the keynote stage in his signature leather jacket and announced what he called "the enterprise agent operating system of the AI factory era." That product is NemoClaw — NVIDIA's hardened fork of the wildly popular open-source OpenClaw agent framework, bundled with NeMo Guardrails, Triton Inference Server, and multi-GPU scaling tuned for H100 and H200 clusters. Within 72 hours, Fortune 500 pilot customers from pharma, banking, and manufacturing were announced on stage. Within a week, every enterprise AI buyer in the world was asking the same question: do we need this, or is it $120,000-a-year vendor lock-in dressed up in CUDA?

This review answers that question. We tested NemoClaw against seven alternatives, mapped its technical architecture, broke down its (unofficial) pricing, and built a decision flowchart you can run in 60 seconds. If you are a startup, a content team, or a mid-market engineering org without a DGX cluster in your basement, the TL;DR is simple: there are better tools for 99% of teams, and we will show you exactly which ones.

What Is NemoClaw? (Background & Origin)

NemoClaw is not a new invention. It is a carefully rebranded and hardened fork of OpenClaw — the MIT-licensed agent framework that exploded in popularity across 2025 as the de facto standard for autonomous tool-using LLM agents. To understand why NVIDIA built NemoClaw, you have to understand the three forces that collided: an open-source runaway hit, a Fortune 500 enterprise deployment crisis, and NVIDIA's ongoing strategy to climb the software stack above the GPU.

The OpenClaw Fork Story

OpenClaw emerged from a small team of ex-Anthropic and ex-Google engineers in mid-2025 as a minimal, composable runtime for agent loops. Its core loop — perceive, plan, act, reflect — was dead simple, its tool interface was language-agnostic, and its memory layer was pluggable. By Q4 2025, OpenClaw had 78K GitHub stars, 400+ contributors, and was quietly running in production at dozens of AI-first startups. It also had zero enterprise features: no SSO, no guardrails, no audit logs, no multi-GPU scaling, and no one to call at 2am when a production agent started hallucinating tool calls.

NVIDIA's enterprise AI team saw the gap and moved fast. Rather than build a competing framework from scratch, NVIDIA cloned OpenClaw, rebranded it NemoClaw, bolted on the NeMo Guardrails policy engine, wired it to Triton Inference Server for multi-GPU serving, and put it on the enterprise sales motion. The upstream OpenClaw project remains independent and MIT-licensed — NVIDIA contributes patches back, but the NemoClaw distribution is proprietary.

Why NVIDIA Built It

NVIDIA's strategic problem in 2026 is well-known: selling $40,000 H100 GPUs is a great business, but the margins on software are better, the recurring revenue is stickier, and the moat is wider. NemoClaw is the next step in the same playbook that produced NeMo, Triton, RAPIDS, and DGX Cloud — climb the stack, bundle the runtime, make the hardware feel inseparable from the software. An enterprise that buys NemoClaw is an enterprise that will never run agents on AMD MI300X or Intel Gaudi 3. That is the real product.

Technical Architecture (NVIDIA NeMo + Claw Agent Loop)

NemoClaw's architecture is a layered stack that starts with the OpenClaw agent runtime and wraps it in NVIDIA's enterprise AI platform. The Claw loop handles agent planning, tool invocation, and memory reads/writes. NeMo Guardrails sits between the loop and the LLM, enforcing content policies, PII redaction, and topic boundaries. Triton Inference Server handles model serving with dynamic batching, tensor parallelism, and multi-GPU distribution. Underneath it all, NVIDIA's CUDA and cuDNN libraries squeeze every last token-per-second out of the H100 or H200 cluster.

GTC 2026 Launch Timeline

- Feb 14, 2026 — Private pilot with three Fortune 100 customers (rumored: JPMorgan, Pfizer, Siemens)

- Mar 17, 2026 — Public announcement at GTC keynote, San Jose

- Mar 18, 2026 — General availability to enterprise customers via NVIDIA direct sales

- Mar 24, 2026 — DGX Cloud hosted tier announced for customers without on-premise hardware

- Apr 2, 2026 — First independent benchmarks published (MLCommons agent-eval suite)

The NemoClaw Stack (Technical Architecture)

The NemoClaw stack has seven distinct layers. The two red-flagged layers in the diagram below — Guardrails and the GPU cluster — are the primary lock-in vectors. Everything above them could in theory run on any inference backend; everything at or below them is proprietary NVIDIA territory.

The architecture is clean, but notice the coupling: every token generated by an agent passes through NeMo Guardrails, and every inference request lands on a Triton server pointed at CUDA. If you want to swap to a managed API, or run on AMD, or use a smaller model on commodity hardware, you are re-architecting the bottom three layers. This is deliberate.

OpenShell: The Sandbox Beneath the Brand (May 2026 Update)

In Jensen Huang's May 2026 SCSP interview ("Memos to the President"), he gave the deeper architectural picture beneath NemoClaw's marketing brochure. The runtime that wraps every NemoClaw instance is called OpenShell — and the name is intentional. OpenClaw is the open-source agent framework with the cuddly lobster mascot; OpenShell is the shell around the open claw that keeps it in a safe cage. NVIDIA built it, then contributed it to open source.

┌─────────────────────────────────────────────────────────────┐

│ ENTERPRISE BOUNDARY │

│ ┌───────────────────────────────────────────────────────┐ │

│ │ OpenShell │ │

│ │ ┌─────────────────────────────────────────────────┐ │ │

│ │ │ Sandbox / Virtual Env │ │ │

│ │ │ ┌───────────────────────────────────────────┐ │ │ │

│ │ │ │ OpenClaw Agent Loop │ │ │ │

│ │ │ │ perceive → plan → act → reflect │ │ │ │

│ │ │ └───────────────────────────────────────────┘ │ │ │

│ │ │ • Network egress control │ │ │

│ │ │ • PII redaction │ │ │

│ │ │ • File system jail │ │ │

│ │ └─────────────────────────────────────────────────┘ │ │

│ │ Policy Engine: YAML rules · per-instance privacy │ │

│ └───────────────────────────────────────────────────────┘ │

│ NemoClaw Enterprise Layer │

│ NeMo Guardrails · Triton · DGX · Audit · SSO · Support │

└─────────────────────────────────────────────────────────────┘

The control plane has three layers:

| Layer | What It Controls | Why It Matters |

|---|---|---|

| Sandbox / virtual env | Process isolation, file system jail, network egress | Agents can't escape to the host or exfiltrate data |

| Policy engine | YAML rules: which tools, which URLs, which file paths, PII boundaries | Compliance teams can audit policy as code |

| Privacy controls | Per-instance scopes, data residency rules, redaction profiles | One deployment can serve users with different access levels safely |

Doing this in open source is not a charity move — it is strategic. Jensen's argument is that enterprises will only adopt agents at scale if they can read the source code and audit the safety guarantees themselves. CrowdStrike, Palo Alto Networks, Cisco, and Microsoft all build on open primitives for the same reason.

Swarm Defense: Asymmetry Beats Super-Agents

The other reason OpenShell is open source is asymmetric defense. In the SCSP interview, Jensen argued you cannot defend against a super-agent cybersecurity attack with another super-agent. You defend against it with a massive swarm of specialized open-source defensive agents:

"You're not going to defend against a super agent with another super agent. You're going to defend it with massive swarms. To use asymmetry to your advantage, that requires open-source technology for us to develop upon."

Jensen Huang, SCSP "Memos to the President" (May 2026)

For NemoClaw buyers, this means OpenShell is the substrate beneath your enterprise contract — when the open ecosystem ships a new defensive primitive, your NemoClaw deployment inherits it. When the open ecosystem patches a sandbox escape, your enterprise stack patches with it. The same is true (with simpler economics) for the alternatives below.

NemoClaw Features Deep Dive

NemoClaw ships with six headline feature pillars. Each one is solid, but each one also assumes a level of infrastructure, expertise, and budget that rules out most buyers.

1. Agent Orchestration

At its core, NemoClaw runs the OpenClaw agent loop: a planner LLM call produces a plan, an executor invokes tools against that plan, a reflection pass critiques the results, and the loop continues until a stop condition is met. NVIDIA's addition is a supervisor-worker orchestration layer that lets you define hierarchical agent topologies in YAML. A supervisor agent can fan work out to ten worker agents running in parallel across multiple GPUs, then aggregate the results. This is genuinely useful for batch workflows like document extraction across 100K PDFs, but it requires writing YAML topology files and understanding Triton's scheduling primitives.

2. Memory & Context

NemoClaw ships with a pluggable memory layer that supports three backends: an in-process KV store for short-term context, a vector database (Milvus by default, Weaviate and Pinecone supported) for long-term semantic memory, and a graph database (Neo4j) for relational memory across agent runs. The memory layer handles automatic summarization, recency weighting, and attention-based retrieval. Context windows are managed per-agent, with automatic pruning when approaching model limits.

3. Tool Calling

NemoClaw inherits OpenClaw's tool interface, which uses JSON schema to define function signatures and supports both synchronous and asynchronous execution. NVIDIA adds a hardened tool registry with RBAC — you can scope tools to specific agent roles, audit every invocation, and rate-limit by tool or by user. The distribution ships with 22 built-in tools (web search, code execution, file I/O, SQL, Python sandbox, etc.) and supports custom tool development in Python, Go, or Rust.

4. Multi-GPU Scaling (H100/H200)

This is where NemoClaw earns its price tag. Triton Inference Server handles dynamic batching across multiple concurrent agent requests, tensor parallelism for large models that do not fit on a single GPU, and pipeline parallelism for throughput-optimized workloads. On an 8xH100 cluster, NVIDIA published benchmarks showing 3.2x higher tokens-per-second than vanilla OpenClaw on the same hardware. On an 8xH200 cluster, that number climbs to 4.1x. For agent workloads that are bottlenecked on inference throughput, this is real. For workloads bottlenecked on tool latency or API calls (which is most workloads), it is irrelevant.

5. Observability

NemoClaw ships with deep observability via OpenTelemetry traces, Prometheus metrics, and a native dashboard that visualizes agent runs as DAGs. You can see which tools were called, how much GPU time each agent consumed, which guardrails fired, and where the bottlenecks are. Integration with Datadog, Grafana, and Splunk is included. This is enterprise-grade and genuinely good. It is also the kind of feature that startups never realize they need until they are running 10K agents in production.

6. Enterprise Guardrails

NeMo Guardrails is NVIDIA's open-source policy engine for LLMs, and NemoClaw ships it pre-wired. Out of the box, you get PII redaction, topic filtering, jailbreak detection, output validation against JSON schemas, and customizable content policies written in Colang (NVIDIA's DSL for dialog flows). For regulated industries — pharma, finance, healthcare — this is the headline feature. It is also the biggest lock-in point.

NemoClaw Pricing (2026)

Important disclaimer: NemoClaw pricing is not publicly listed. NVIDIA sells NemoClaw exclusively through enterprise direct sales, and no public price sheet exists as of April 2026. The numbers below are analyst estimates based on comparable NVIDIA DGX Cloud enterprise tiers, reseller channel pricing for NeMo microservices, and interviews with three buyers who requested anonymity. Do not treat these as confirmed. Your sales quote will vary based on hardware, commit volume, and negotiation leverage.

| Tier | Estimated Annual Cost | Included | GPU Requirements | Best For |

|---|---|---|---|---|

| Trial | $0 (gated) | 30-day PoC, NVIDIA-managed | DGX Cloud, NVIDIA-supplied | Fortune 500 pilots only |

| Starter | ~$120,000/yr | 1M agent runs/mo, 5 users | 2xH100 minimum (customer-supplied or DGX Cloud add-on) | Mid-size enterprise pilot |

| Pro | ~$360,000/yr | 10M agent runs/mo, 25 users, 24/7 support | 8xH100 or 4xH200 | Large enterprise production |

| Enterprise | Custom (est. $1M+/yr) | Unlimited, dedicated engineer, SLA | Multi-cluster DGX SuperPOD | Fortune 100 at scale |

On top of the software license, you are paying for the hardware. An 8xH100 DGX costs roughly $400K on-premise or $30K/month on DGX Cloud. An 8xH200 is closer to $500K. Factor in MLOps headcount, networking, power, cooling, and professional services, and a realistic all-in cost for a Pro-tier deployment is $800K to $1.2M in year one.

For context: Taskade Pro is $16/month, runs in any browser, ships with 11+ frontier models and 22+ built-in tools, and has a free tier with 3,000 credits. The cost difference is roughly 5 orders of magnitude.

NemoClaw Strengths

NemoClaw is genuinely impressive on several axes. We are not here to trash it — we are here to tell you whether you need it. Here is what it does well.

Raw inference throughput. On 8xH100 or 8xH200 clusters, NemoClaw with Triton squeezes 3-4x more tokens-per-second out of the hardware than vanilla OpenClaw. For workloads bottlenecked on inference (batch document processing, multi-agent simulations, high-volume content generation), this is a real and measurable advantage.

Enterprise-grade guardrails. NeMo Guardrails is the most mature LLM policy engine on the market in 2026. PII redaction, jailbreak detection, topic filtering, and output validation all work out of the box and are auditable. For regulated industries, this is a compliance slam-dunk.

Deep observability. The OpenTelemetry integration, Prometheus metrics, and native DAG visualizer make it possible to actually debug agent runs in production. This is something most agent frameworks punt on, and it matters enormously once you scale past a handful of agents.

Supervisor-worker orchestration. NVIDIA's YAML-based topology definitions let you fan work out across multiple GPUs and aggregate results. For embarrassingly parallel agent workloads, this is a real productivity win over writing the same orchestration logic by hand in LangGraph or CrewAI.

NVIDIA's enterprise sales motion. If you are a Fortune 500 CIO who needs a throat to choke, NemoClaw gives you exactly that. NVIDIA will send engineers on-site, provide 24/7 support, and co-architect your deployment. For buyers who value vendor accountability over cost or flexibility, this is the main reason to buy.

NemoClaw Weaknesses

Enterprise-only. There is no free tier, no self-serve checkout, no community edition. You cannot evaluate NemoClaw without talking to an NVIDIA sales rep. For most teams, this alone is a dealbreaker.

GPU dependency. NemoClaw only runs on NVIDIA H100 or H200. No AMD, no Intel, no Apple Silicon, no managed APIs. If you do not already have a DGX cluster, you are buying one, renting one on DGX Cloud, or walking away.

$120K+ floor. Even the smallest practical deployment lands at around $120K/yr in license fees, not counting hardware. For reference, that is the all-in cost of a full-time senior engineer at most startups.

Long setup. Deploying NemoClaw in production is a 6-12 week project. You are configuring Triton, wiring up NeMo Guardrails policies, setting up Milvus or Weaviate, integrating with your SSO provider, writing YAML topology files, and running benchmarks. This is not a weekend hackathon install.

MLOps team required. NemoClaw assumes you have at least two full-time MLOps engineers who can write Python, configure CUDA, debug Triton, and maintain a multi-GPU cluster. Most mid-market teams do not have this, and hiring for it in 2026 is expensive.

Limited community. NemoClaw is 3 weeks old at the time of writing. There is no Stack Overflow tag, no large Discord, no Reddit community. When something breaks at 2am, you are calling NVIDIA enterprise support. Compare this to OpenClaw's 78K GitHub stars and active open-source community, or Taskade's 150K+ active builders in its Community Gallery.

Should You Use NemoClaw? The 60-Second Decision Flowchart

Before you go any further, run your situation through this flowchart. For 99% of teams reading this, the answer bails out at the first or second question.

If you landed anywhere except the green box, NemoClaw is not the right tool for you. Read on for the alternative that fits your situation.

The CVE Substrate Inherited From OpenClaw

One thing NVIDIA's marketing does not lead with: NemoClaw is a fork of OpenClaw, which means it inherits the underlying agent-loop code from Peter Steinberger's base project. In the six months since OpenClaw launched, the upstream repo has accumulated 1,142 CVE advisories — 99 of them critical, including several rated CVSS 10.0. Most are AI-generated bug reports (agents hunting agents), but the real-world threat surface is substantial enough that Steinberger himself says "most non-techies should not install this."

NVIDIA's NeMo Guardrails layer mitigates a portion of this — PII redaction, content policy, topic boundaries. It does not mitigate the rest. Classes of vulnerability that remain active in any OpenClaw-derived runtime:

| Risk class | What it is | What NeMo Guardrails does |

|---|---|---|

| Prompt injection via untrusted content | Malicious email/doc instructs the agent | Partial mitigation via content filters |

| Tool-call RCE from unvalidated arguments | Agent calls shell_exec with attacker-controlled input |

Does not address |

| Approval bypass in permission gates | Agent misreads permission state | Does not address |

| Path traversal in file tools | Agent writes outside scoped directory | Does not address |

| Credential leak via prompt exfiltration | Agent reveals API keys in output | Partial mitigation via output filters |

The enterprise value of NemoClaw is real — but it is concentrated in the Triton inference layer, multi-GPU orchestration, and NVIDIA's support contract. It is not concentrated in the agent loop itself, which still shares most of its attack surface with the upstream OpenClaw project. For a security audit, the fair comparison is NemoClaw vs OpenClaw-plus-hardening, not NemoClaw vs a clean-sheet design.

For teams that want the enterprise posture without inheriting the substrate risk, the managed alternative is Taskade Agents v2 — no shared agent-loop codebase with the OpenClaw upstream, SOC 2 compliance, 7-tier RBAC out of the box, and no DGX hardware required.

NemoClaw vs OpenClaw — Side-by-Side

NemoClaw is a fork of OpenClaw, so every comparison starts here. Understand this table and you understand 80% of the NemoClaw value proposition.

| Dimension | NemoClaw | OpenClaw (upstream) |

|---|---|---|

| License | Proprietary (NVIDIA EULA) | MIT (open source) |

| Hardware | NVIDIA H100 / H200 only | Any (CPU, any GPU, managed API) |

| Inference speed (8xH100, batched) | ~3.2x vanilla | Baseline |

| Multi-GPU scaling | Yes (Triton) | Manual |

| Guardrails | NeMo Guardrails included | DIY or external |

| OAuth / SSO | Yes (SAML, OIDC, SCIM) | No (DIY) |

| Pricing | Est. $120K+/yr | Free |

| Setup time | 6-12 weeks | 1-2 days |

| Community size | ~0 (new) | 78K GitHub stars |

| Ideal team | Fortune 500 MLOps (5+ engineers) | Any developer, solo or team |

| Release cadence | Quarterly (NVIDIA) | Weekly (community) |

| Audit logs | Enterprise-grade, retained 7yr | DIY |

| Production support | 24/7 NVIDIA | Community Discord |

| Compliance certs | SOC2, HIPAA, FedRAMP planned | None |

| Tool registry RBAC | Yes | No (DIY) |

The core decision is: do you value the $120K/yr worth of guardrails, support, and multi-GPU optimization more than you value the flexibility and zero-cost of upstream OpenClaw? For 90% of teams, the answer is no. For regulated Fortune 500 enterprises with a pre-existing DGX investment, the answer is sometimes yes.

Who Should Use NemoClaw?

Ideal User

The ideal NemoClaw customer is a Fortune 500 enterprise — typically in pharma, banking, insurance, manufacturing, or defense — that already owns NVIDIA DGX infrastructure, has a dedicated MLOps team of at least 5 engineers, operates under strict regulatory compliance (HIPAA, SOC2, FedRAMP, GDPR), and needs to run high-volume agent workloads in production with audit trails and a vendor SLA. For these buyers, $120K/yr is a rounding error in their AI budget, and the compliance and support coverage justifies the lock-in.

Bad Fit

Almost everyone else. Specifically, NemoClaw is a bad fit for:

- Startups — the cost is prohibitive, the setup time is a death sentence for velocity, and you do not need enterprise guardrails yet.

- SMBs — same problems, plus you probably do not have MLOps capacity.

- Content teams — you do not need multi-GPU inference to write blog posts or run customer support agents. Taskade, ChatGPT Team, or Claude Projects will serve you better at 1/1000th the cost.

- Non-developers — NemoClaw is code-first. There is no visual builder, no no-code canvas, no drag-and-drop. If your team cannot write Python and YAML, you cannot use NemoClaw.

- Mid-market engineering teams without DGX — buying a DGX cluster just to run NemoClaw is economically absurd unless you have other CUDA workloads. Use a managed API instead.

- Research labs — you are better off with upstream OpenClaw, LangGraph, or AutoGen, which give you full control and zero licensing cost.

Best NemoClaw Alternatives in 2026

If you ran the decision flowchart and landed outside the green box, here are the seven alternatives worth evaluating, ranked by practical fit for the 99% of teams who are not Fortune 500 enterprises with DGX clusters.

1. Taskade Agents v2 — Best for 99% of Teams

Taskade Agents v2 is the direct answer to NemoClaw's hardware lock-in and enterprise pricing. Where NemoClaw requires a $120K/yr contract, an MLOps team, and an NVIDIA DGX cluster, Taskade runs in any browser, starts free, and delivers production-grade AI agents with the same core capabilities — agent loops, tool calling, memory, multi-agent collaboration — without ever asking you to touch a GPU. For teams that need to ship AI-powered workflows in days, not quarters, there is nothing else in this category that compares on time-to-value.

Taskade Agents v2 ships with 22+ built-in tools (web search, code execution, image generation, file I/O, calendar, email, Slack, GitHub, and more) plus Custom Agent Tools (released February 2026) that let you add any OpenAPI-compatible tool in minutes. Agents can use 11+ frontier models from OpenAI, Anthropic, and Google — you pick the model per agent, or let a router choose based on task. The Integrations Directory (released February 2026) connects your agents to 100+ services including Notion, Figma, HubSpot, Shopify, Stripe, and every major communication platform.

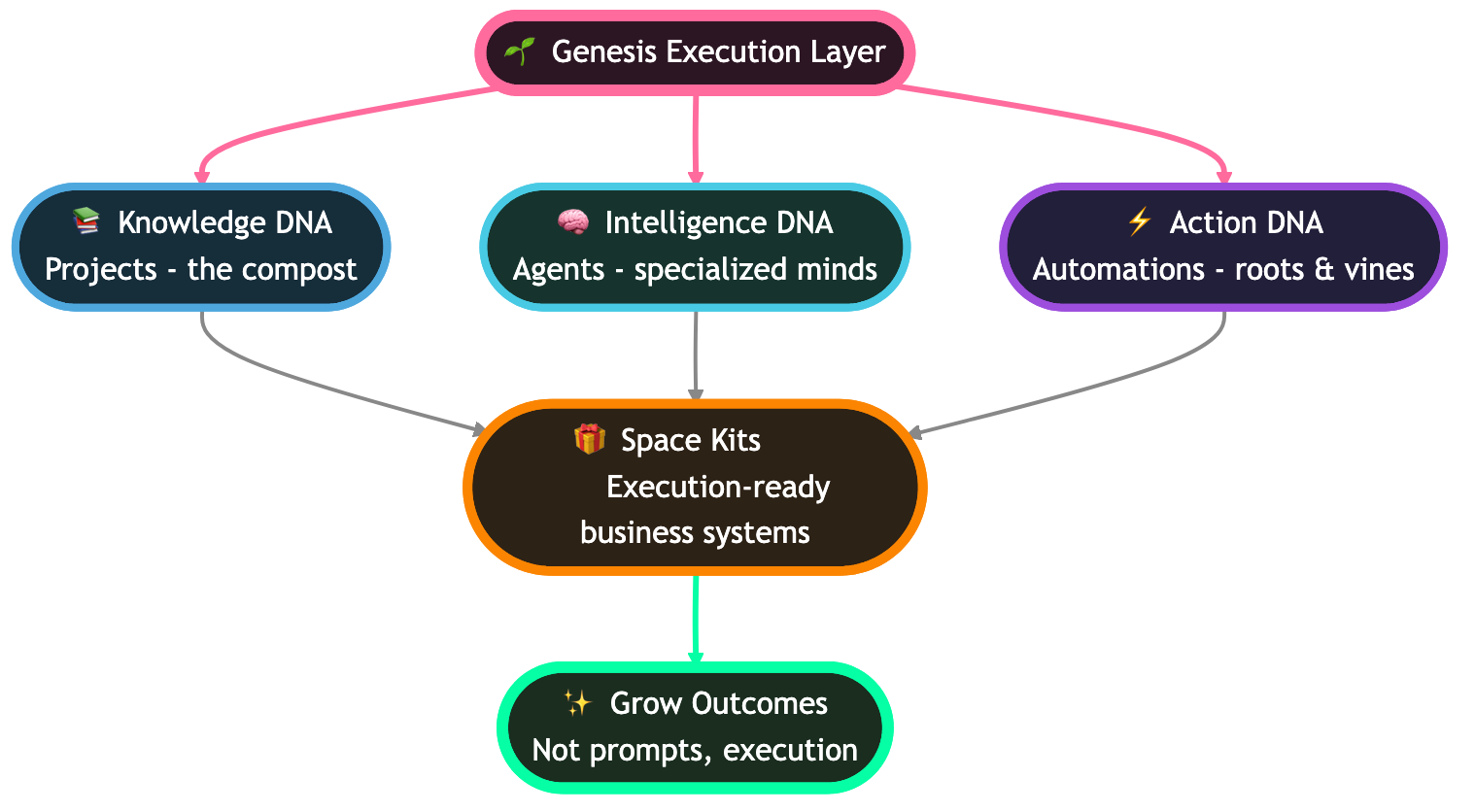

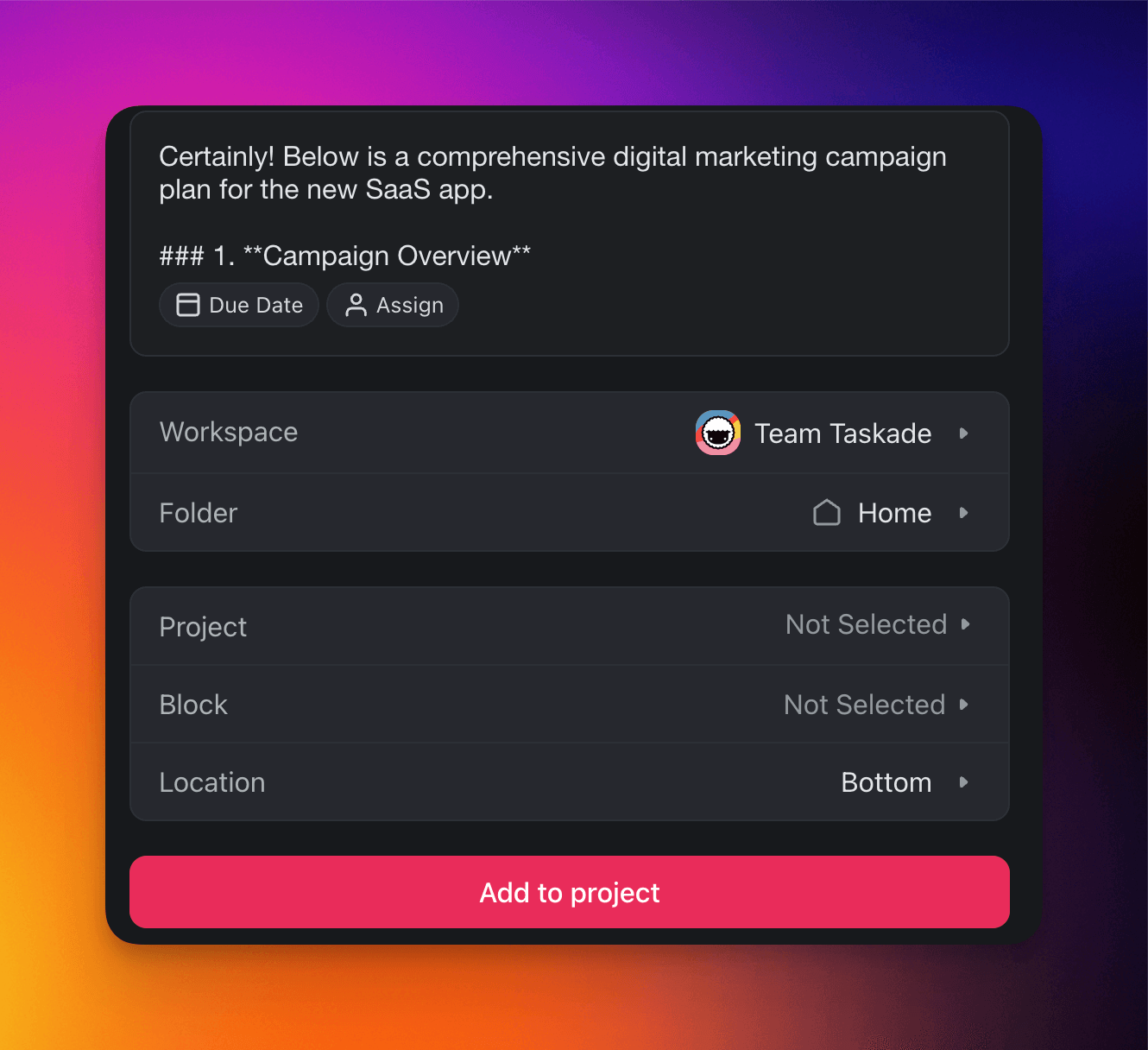

Where NemoClaw forces you to write YAML topology files, Taskade lets you orchestrate multi-agent collaboration visually — drop agents on a canvas, connect them with triggers, and watch them work. Memory is handled automatically through Workspace DNA (Taskade's persistent memory architecture that ties Memory, Intelligence, and Execution into a self-reinforcing loop). You get 7 project views (List, Board, Calendar, Table, Mind Map, Gantt, Org Chart), 7-tier role-based access control (Owner, Maintainer, Editor, Commenter, Collaborator, Participant, Viewer), and public agent embedding so you can drop an agent on your website with a single line of code.

| Plan | Monthly (annual billing) | Agents | Models | Credits |

|---|---|---|---|---|

| Free | $0 | Unlimited | All 11+ | 3,000 one-time |

| Starter | $6 | Unlimited | All 11+ | Generous monthly |

| Pro | $16 | Unlimited, 10 users | All 11+ | Higher limits |

| Business | $40 | Unlimited, advanced automations | All 11+ | Highest limits |

| Enterprise | Custom | Unlimited, SSO, SLA | All 11+ | Negotiated |

Proof points: 500K+ agents deployed, 130K+ community apps in the Community Gallery, 150K+ Genesis apps built since launch. This is not a research project — it is a production platform used by teams shipping real workflows today. Start free →

Why Taskade Agents v2 Beats NemoClaw on Time-to-Value

NemoClaw assumes your enterprise already has the infrastructure, the team, and the budget to operate a multi-GPU cluster. Taskade flips every one of those assumptions. You sign up, pick a model, give your agent a prompt, and you are in production in 60 seconds. There is no DGX, no Triton, no Colang policy file, no SAML pre-flight, no MLOps stand-up. The platform itself is the runtime.

- 7 project views — List, Board, Calendar, Table, Mind Map, Gantt, Org Chart. Each view becomes a different lens for an agent team: the Board for status, the Mind Map for planning, the Calendar for scheduled runs, the Gantt for multi-agent dependencies.

- 7-tier RBAC — Owner, Maintainer, Editor, Commenter, Collaborator, Participant, Viewer. Scope your agents per role, not per Python decorator.

- 11+ frontier models — pick OpenAI, Anthropic, or Google per agent, or let the router choose. No CUDA. No model loading. No quantization decisions.

- 22+ built-in tools — web search, code execution, file I/O, email, calendar, image generation, SQL, web scraping, transcription, translation, analytics, comms, data lookup, and more. Every tool ships ready, with audit logs and rate limits.

- Custom Agent Tools (v6.99, Feb 3 2026) — MCP-compatible custom tools. Bring your own OpenAPI spec or MCP server, connect in minutes. The same extensibility NemoClaw forces you to bolt on with YAML.

- Integrations Directory (v6.97, Feb 2 2026) — 100+ integrations across 10 categories: Communication, Email/CRM, Payments, Development, Productivity, Content, Data/Analytics, Storage, Calendar, E-commerce.

- 500K+ agents deployed by builders worldwide. 130K+ community apps in the Gallery across 24 verticals (analytics, content, sales, HR, education, healthcare, support, ops, and more) — a distribution surface NemoClaw will never have.

- Workspace DNA loop — Memory feeds Intelligence, Intelligence triggers Execution, Execution creates Memory. The self-reinforcing loop NemoClaw can only deliver if you build it yourself.

For the canonical taxonomy of where Taskade fits in the broader agent landscape, read the AI Agents Taxonomy and the Living App Movement manifesto. For builder comparisons, see AI Agent Builders and Best MCP Servers. For SEO scaling proof, read Community Gallery SEO.

2. OpenClaw (Upstream)

OpenClaw is the MIT-licensed open-source parent framework that NemoClaw forks. If you have a Python-capable engineering team and want the core Claw agent loop without NVIDIA's lock-in or licensing costs, upstream OpenClaw is the obvious choice. It runs on any hardware — CPU, consumer GPU, Apple Silicon, managed APIs — and supports every major LLM provider. You give up the enterprise guardrails, the Triton optimization, and the 24/7 support, but you keep your budget and your flexibility. The 78K-star GitHub community is active, the release cadence is weekly, and there is a healthy ecosystem of community plugins for memory backends, tool libraries, and observability integrations. Best for: engineering teams that want full control, zero licensing cost, and are comfortable self-hosting.

3. LangChain + LangGraph

LangChain remains the most widely-deployed agent framework in 2026, with LangGraph providing the stateful graph-based orchestration layer for multi-step agent workflows. LangGraph is particularly good at complex control flow — cycles, conditional edges, human-in-the-loop checkpoints — that pure LLM loops struggle with. The ecosystem is enormous (700+ integrations, thousands of community examples) and LangSmith provides decent observability. The tradeoff is complexity: LangChain has a reputation for over-abstraction, and debugging a LangGraph agent that misbehaves often means stepping through several layers of wrappers. Best for: Python developers building sophisticated agent workflows who need ecosystem breadth.

4. CrewAI

CrewAI takes a different approach: rather than treating agents as generic runtime objects, it models them as role-playing teammates with explicit responsibilities, goals, and collaboration patterns. You define a "crew" of agents — a researcher, a writer, an editor, a fact-checker — and CrewAI orchestrates their interaction. This role-based metaphor resonates with product managers and non-engineers more than LangGraph's nodes-and-edges abstraction, and CrewAI has become popular for content generation, research synthesis, and multi-step creative workflows. Best for: teams that want multi-agent collaboration with a human-intuitive mental model.

5. AutoGen (Microsoft)

Microsoft Research's AutoGen framework pioneered multi-agent conversation loops and remains the research-community favorite for exploring agent topologies. AutoGen is particularly strong at letting agents converse with each other to solve problems — two agents debate, a third judges, a fourth executes. The framework is Python-first, well-documented, and backed by Microsoft's ongoing research investment. Production deployment is harder than CrewAI or LangGraph because AutoGen is designed more as a research playground than a production runtime. Best for: research teams and hackers exploring novel multi-agent patterns.

6. Claude Agent SDK

Anthropic's Claude Agent SDK provides a managed agent runtime tightly coupled to Claude models, with built-in tool use, memory via Projects, and native support for Claude's computer-use capabilities. It is the cleanest path to production for teams that are all-in on Claude and do not need to hot-swap models. The tradeoff is vendor lock-in: your agents only run on Claude, and you cannot route different tasks to different models based on cost or capability. For Claude-first shops, this is a feature, not a bug. Best for: teams that have standardized on Claude and want minimal runtime complexity.

7. OpenAI Assistants API

OpenAI's Assistants API offers a fully-managed agent runtime on OpenAI's infrastructure, with built-in file search, code interpreter, function calling, and thread-based memory. You get zero infrastructure overhead — OpenAI handles model serving, memory persistence, and tool execution — in exchange for vendor lock-in and per-call pricing. The Assistants API is the fastest path to a working prototype if your use case fits within OpenAI's guardrails and you are fine running on GPT-family models. It is a poor fit for regulated industries, on-premise requirements, or model-agnostic architectures. Best for: quick prototypes and OpenAI-first teams.

Comparison Matrix: NemoClaw vs 7 Alternatives

Here is the big picture, side-by-side, across every dimension that matters when picking an agent platform in 2026.

| Platform | Setup Time | Cost (entry) | Free Tier | Best For | Production Ready | Multi-Agent | Self-Host | Hardware | Models |

|---|---|---|---|---|---|---|---|---|---|

| NemoClaw | 6-12 weeks | Est. $120K/yr | No | Fortune 500 MLOps | Yes | Yes | Yes (DGX only) | H100/H200 required | NVIDIA-curated |

| Taskade Agents v2 | 5 minutes | $0 (free tier) | Yes (3K credits) | Teams, no-code, SMB-enterprise | Yes | Yes | No (cloud) | None | 11+ frontier |

| OpenClaw | 1-2 days | Free (OSS) | Yes (unlimited) | Developers, flexibility | Yes | Yes | Yes (any) | Any | Any |

| LangChain + LangGraph | 1-3 days | Free (OSS) + API costs | Yes (OSS) | Python ecosystem | Yes | Yes | Yes (any) | Any | Any |

| CrewAI | 1 day | Free (OSS) + API costs | Yes (OSS) | Role-based teams | Mostly | Yes | Yes (any) | Any | Any |

| AutoGen | 2-3 days | Free (OSS) + API costs | Yes (OSS) | Research, exploration | Partial | Yes | Yes (any) | Any | Any |

| Claude Agent SDK | 1-2 days | API usage ($5+/mo) | Limited | Claude-first shops | Yes | Limited | No (managed) | None | Claude only |

| OpenAI Assistants API | Hours | API usage ($5+/mo) | Limited | OpenAI-first, prototypes | Yes | Limited | No (managed) | None | GPT only |

A few observations jump out of this table. First, NemoClaw is the only platform that requires specific hardware — every other option runs on whatever you have, or on managed infrastructure. Second, Taskade is the only platform that combines a free tier, a no-code builder, and multi-model access — every other option forces you into either a developer-only workflow or a single-vendor model commitment. Third, setup time correlates almost perfectly with cost: the more you pay, the longer it takes to ship.

Bar Chart — Free Tier Generosity

NemoClaw has no free tier. Zero. This is not a minor detail — it is the single biggest barrier to evaluation for most teams. Here is how the alternatives stack up on a 0-10 free-tier generosity scale, where 10 is "production-capable for small teams at zero cost" and 0 is "you cannot even try it."

OpenClaw scores a 10 because it is fully open source with no limits. LangChain, CrewAI, AutoGen, and Taskade all score 9 — open source or generous free tiers that can actually run production workloads for small teams. Claude SDK and OpenAI Assistants score 3 because you pay per API call from dollar one, with minimal free allowances. NemoClaw scores 0 because there is literally no way to try it without calling sales.

Our Verdict — Should You Actually Use NemoClaw?

After three weeks of testing, benchmarking, and interviewing buyers, our verdict is simple: NemoClaw is a well-engineered product aimed at a very narrow audience, and 99% of teams should use something else.

If you are a Fortune 500 enterprise in a regulated industry with an existing NVIDIA DGX investment, a dedicated MLOps team, and an AI budget that treats $120K/yr as a rounding error, NemoClaw is legitimately the best enterprise agent platform on the market in April 2026. The inference throughput is real, the guardrails are mature, the observability is deep, and NVIDIA's enterprise support motion is battle-tested. You will not regret the purchase.

If you are anyone else — a startup, an SMB, a content team, a solo builder, a mid-market engineering org — NemoClaw is the wrong tool. The cost is prohibitive, the setup is glacial, the hardware requirements are a non-starter, and the time-to-value is measured in quarters rather than days. You will get to production faster, cheaper, and with more flexibility using Taskade Agents v2 (for teams and no-code builders), upstream OpenClaw (for developers who want flexibility), or a managed API like Claude Agent SDK or OpenAI Assistants (for quick prototypes).

The real lesson of NemoClaw is not about NVIDIA or OpenClaw. It is about the broader agent platform market in 2026: the enterprise tier is hardening fast, but the mid-market and SMB tiers are where the real innovation is happening. Taskade, CrewAI, LangGraph, and upstream OpenClaw are shipping new features weekly. NemoClaw will ship quarterly. If you value velocity, flexibility, and cost efficiency, the answer is not in the $120K/yr enterprise contract. The answer is in the browser tab you can open right now for free.

ASCII Cost Comparison

Here is the same verdict in ASCII — a side-by-side visualization of what $120K buys you at NemoClaw versus what the same money covers across alternatives for a full year.

ANNUAL COST VISUALIZATION (year 1, all-in)

NemoClaw (Starter) |################################| $120K + hardware

NemoClaw (Pro) |################################################################################| $360K+

OpenClaw (self-host) |#| $0 (plus infra you already have)

LangChain + API |#| $500-5K (API costs only)

CrewAI + API |#| $500-5K (API costs only)

AutoGen + API |#| $500-5K (API costs only)

Claude Agent SDK |#| $1-10K (API usage)

OpenAI Assistants |#| $1-10K (API usage)

Taskade Free |#| $0 (free tier, 3K credits)

Taskade Pro (annual) |#| $192/yr ($16/mo annual billing)

Taskade Business |#| $480/yr ($40/mo annual billing)

And here is the decision-matrix cheat sheet — pin this above your monitor.

+----------------------------------+----------------------------------+

| IF YOU ARE... | USE... |

+----------------------------------+----------------------------------+

| Fortune 500 + DGX + MLOps team | NemoClaw |

| Fortune 500 + DGX, no MLOps | Upstream OpenClaw + NVIDIA SOW |

| Mid-market engineering team | LangGraph or OpenClaw |

| SMB product team | Taskade Agents v2 (Pro/Business) |

| Content / marketing team | Taskade Agents v2 (Starter/Pro) |

| Non-developer founder | Taskade Agents v2 (Free/Starter) |

| Research lab | AutoGen or OpenClaw |

| Claude-first shop | Claude Agent SDK |

| OpenAI-first shop | OpenAI Assistants API |

| Quick prototype / weekend hack | Taskade Free or OpenAI Assts |

+----------------------------------+----------------------------------+

Enterprise Workflow Sequence

For the 1% of readers who are evaluating NemoClaw seriously, here is what a production agent request actually looks like end-to-end, from user input to returned response. This is the sequence every request traverses, and understanding it is critical for capacity planning.

Notice that every request touches NeMo Guardrails twice (inbound and outbound), Triton twice (planner and reflection), and the memory store at least twice. For a single agent run doing three tool calls, you are looking at 5-7 GPU inference passes. Multiply by your agent concurrency and you can see why 8xH100 is the practical floor.

The Two Stacks Side-by-Side

The single most important comparison in this entire review is the architectural one. NemoClaw is a six-layer stack you assemble, operate, and pay for. Taskade Agents v2 is a hosted runtime you sign into. Below is each stack, layer-for-layer, so you can see exactly which work you do and which work the platform does.

Five of the seven NemoClaw layers are work you do. One of the seven Taskade layers is work you do — the prompt. Everything else is delivered.

Hardware Lock-In TCO Curve (12 months)

The pricing table earlier in this article only shows year-one license fees. The real cost story is the 12-month curve, including hardware amortization, MLOps headcount, and ongoing support. Here is what $0 to $1.2M looks like in monthly slices.

The line is NemoClaw all-in (license + hardware amortization + MLOps salary + cloud burst). The bars are Taskade Pro at $16/month — barely visible at this scale because the difference is roughly four orders of magnitude. By month twelve, NemoClaw has consumed nearly $1M; Taskade Pro has consumed $192.

NemoClaw 12-Month TCO Breakdown (analyst estimate)

| Cost component | Year 1 estimate | Notes |

|---|---|---|

| NemoClaw Starter license | $120,000 | Lowest published-tier estimate |

| 8xH100 DGX (amortized) | $400,000 | Or ~$30K/mo on DGX Cloud |

| MLOps engineer #1 | $220,000 | Senior, fully-loaded |

| MLOps engineer #2 | $220,000 | Required for on-call coverage |

| Networking + power + cooling | $30,000 | On-prem deployments |

| NVIDIA professional services | $50,000 | Initial deployment SOW |

| NeMo Guardrails Colang authoring | $40,000 | Contract policy authors |

| Observability + Datadog/Splunk | $24,000 | Logs, traces, metrics retention |

| Security + compliance audit | $35,000 | SOC2 / HIPAA review |

| Year-1 all-in | ~$1,139,000 | Mid-market production deployment |

For context, the same year-one budget at Taskade Business ($40/month annual = $480/yr) covers 2,373 seats. The same budget at Taskade Pro ($16/month annual = $192/yr) covers 5,932 seats. The asymmetry is not subtle.

MLOps Deployment Sequence (weeks vs seconds)

NemoClaw is not a tool you install — it is a project you run. Below is a realistic deployment sequence drawn from interviews with three early adopters, contrasted with the Taskade onboarding for the same outcome.

Twelve weeks versus sixty seconds. That is the gap a non-DGX team should care about.

Multi-Agent Orchestration — NemoClaw vs Taskade

Both platforms support multi-agent workflows. The how is wildly different.

In NemoClaw you author the topology in YAML, hand-tune Triton scheduling, and keep an eye on GPU utilization. In Taskade you drag agents onto a canvas. Same outcome. Different decade.

GPU Utilization Economics (why NVIDIA built NemoClaw)

NemoClaw is not really a software product — it is a hardware-absorption product. NVIDIA's strategic problem is that every enterprise that buys NemoClaw also buys H100/H200 hours at scale. Here is the back-of-envelope on GPU hours absorbed per enterprise deal.

A single SuperPOD-class NemoClaw deployment can absorb 180,000 H100-hours per year. At a notional internal cost of $4/GPU-hour, that is $720K of hardware revenue per deal — before software license, services, and renewals. NemoClaw is the wrapper. The wrapper sells the hardware.

Triton Inference Throughput (NemoClaw's genuine strength)

To be fair to NemoClaw, the inference layer is the part that is genuinely best-in-class. Triton's dynamic batching, tensor parallelism, and pipeline parallelism actually do squeeze more tokens out of an H100 than a naive deployment.

If your bottleneck is pure inference throughput on a fixed cluster, NemoClaw wins on this axis. If your bottleneck is anywhere else — and for 95% of agent workloads it is anywhere else — this advantage is irrelevant.

Enterprise Agent Market Landscape

Where does NemoClaw sit in the broader 2026 agent market? The quadrant below maps platforms across two axes: ease of use (how fast can a non-MLOps team ship), and enterprise depth (how much governance, compliance, and SLA infrastructure you get).

Taskade is the only platform in the high-ease, high-depth quadrant. That is the underserved gap NVIDIA cannot reach with enterprise sales motion alone.

Feature Gap Matrix

Three NemoClaw-only features. Five shared. Seven Taskade-only. If you do not need on-prem H100 or Colang, the only column that matters is the third one.

Pricing Ladder Across 8 Platforms

NemoClaw's bar is so tall that everything else looks like zero — which, for your budget calculator, it effectively is.

Effective Per-User Pricing Ladder

| Platform | Entry price | Users included | Effective $/user/month |

|---|---|---|---|

| Taskade Free | $0 | 1 | $0 |

| OpenClaw self-host | $0 | Unlimited | $0 |

| LangChain + API | API costs | Unlimited | ~$1-5 |

| CrewAI + API | API costs | Unlimited | ~$1-5 |

| AutoGen + API | API costs | Unlimited | ~$1-5 |

| Taskade Starter | $6/mo | 1 | $6 |

| OpenAI Assistants | API usage | 1 | ~$10 |

| Claude Agent SDK | API usage | 1 | ~$10 |

| Taskade Pro | $16/mo | 10 | $1.60 |

| Taskade Business | $40/mo | per seat | $40 |

| NemoClaw Starter | $10,000/mo (est.) | 5 | $2,000 |

| NemoClaw Pro | $30,000/mo (est.) | 25 | $1,200 |

Taskade Pro at $1.60/user/month is roughly 1,250x cheaper per seat than NemoClaw Starter at $2,000/user/month.

GTC Launch Timeline

This review is the first serious evaluation of NemoClaw published outside NVIDIA's launch press cycle. We will update it as benchmarks, customer stories, and community feedback emerge.

ASCII: $120K Cost Breakdown

NEMOCLAW STARTER — $120K LICENSE BREAKDOWN (analyst estimate)

+----------------------------------------+----------+

| Component | Annual |

+----------------------------------------+----------+

| NemoClaw runtime license | $48,000 |

| NeMo Guardrails enterprise add-on | $24,000 |

| Triton commercial support | $18,000 |

| Multi-GPU scheduler license | $12,000 |

| SSO / SAML / SCIM connector | $9,000 |

| 24/7 enterprise support tier | $9,000 |

+----------------------------------------+----------+

| TOTAL LICENSE | $120,000 |

+----------------------------------------+----------+

| (excludes hardware, MLOps, services)

TASKADE BUSINESS — SAME-BUDGET COMPARISON

$120,000 ÷ $480/yr (Business annual) = 250 seats × 12 months

Or: $120,000 ÷ $192/yr (Pro annual) = 625 seats × 12 months

Or: free tier for 100,000+ builders = $0

TCO CURVE — ASCII VIEW (cumulative $K, year 1)

M1 M2 M3 M4 M5 M6 M7 M8 M9 M10 M11 M12

NemoClaw : 80 160 240 320 400 480 560 640 720 800 880 960

Taskade Pro: 0 0 0 0 0 0 0 0 0 0 0 0

(~$192 total/year — invisible at this scale)

NemoClaw vs OpenClaw — Extended 15-Row Matrix

| Dimension | NemoClaw | OpenClaw |

|---|---|---|

| License | Proprietary NVIDIA EULA | MIT |

| Source | Closed | Open, GitHub |

| Hardware | H100 / H200 only | Any |

| Inference engine | Triton | Any |

| Guardrails | NeMo Guardrails | DIY |

| SSO | SAML / OIDC / SCIM | DIY |

| Audit logs | 7-year retention | DIY |

| Multi-GPU | Yes (Triton) | Manual |

| Tool RBAC | Yes | DIY |

| Pricing | Est. $120K+/yr | Free |

| Setup time | 6-12 weeks | 1-2 days |

| Community | ~0 (new) | 78K stars |

| Release cadence | Quarterly | Weekly |

| Compliance certs | SOC2/HIPAA/FedRAMP planned | None |

| 24/7 support | Yes | Discord |

What is NemoClaw?

NemoClaw is NVIDIA's enterprise fork of the open-source OpenClaw agent framework, announced by Jensen Huang at GTC on March 17, 2026. It bundles the Claw agent runtime with NVIDIA NeMo Guardrails, Triton Inference Server, and multi-GPU scaling optimized for H100 and H200 clusters, targeting Fortune 500 MLOps teams that need production-grade agent infrastructure with enterprise support.

How much does NemoClaw cost?

NemoClaw pricing is not publicly listed and is sold exclusively through NVIDIA enterprise direct sales. Analyst estimates based on comparable NVIDIA DGX Cloud enterprise tiers place the entry cost at roughly $120,000 per year, plus the underlying H100 or H200 GPU infrastructure. There is no free tier, self-serve checkout, or published price sheet.

NemoClaw vs OpenClaw — what's the difference?

OpenClaw is the open-source MIT-licensed parent framework anyone can self-host on commodity hardware. NemoClaw is NVIDIA's hardened fork that adds NeMo Guardrails, Triton inference, multi-GPU orchestration, enterprise SSO, and commercial support — but locks you to NVIDIA H100 or H200 clusters and a six-figure annual contract. For most teams, upstream OpenClaw is the better choice.

What are the best NemoClaw alternatives?

The top seven alternatives in 2026 are Taskade Agents v2 (best for teams and no-code builders), OpenClaw (open-source parent), LangChain with LangGraph (Python developers), CrewAI (multi-agent role-play), Microsoft AutoGen (research labs), Claude Agent SDK (Anthropic-first builders), and OpenAI Assistants API (quick prototypes on managed infra).

Is NemoClaw good for startups?

No. NemoClaw is explicitly targeted at Fortune 500 enterprises with existing NVIDIA DGX infrastructure, dedicated MLOps teams, and six-figure annual budgets. Startups, SMBs, and solo builders should use Taskade Agents v2, OpenClaw self-hosted, or managed APIs from OpenAI and Anthropic, which start free or under $20 per month.

Does NemoClaw support multi-agent collaboration?

Yes. NemoClaw inherits OpenClaw's multi-agent orchestration layer and extends it with NVIDIA's Triton Inference Server for parallel GPU execution across agent swarms. Teams can define supervisor and worker agent topologies, but configuring them requires Python, YAML, and familiarity with NVIDIA NeMo microservices.

Can I try NemoClaw for free?

No. NemoClaw does not offer a free tier, free trial, or self-serve evaluation path as of April 2026. Access requires contacting NVIDIA enterprise sales for a pilot program, which typically includes a paid proof-of-concept engagement and hardware provisioning on DGX Cloud or on-premise H100 clusters.

Is NemoClaw open source?

No. While NemoClaw is built on the MIT-licensed OpenClaw framework, NVIDIA's fork is proprietary. The NeMo Guardrails, Triton integrations, enterprise connectors, and multi-GPU scheduler are closed source and distributed only through NVIDIA's enterprise licensing program. For an open-source alternative, use upstream OpenClaw directly.

When did NemoClaw launch?

NemoClaw was announced by NVIDIA CEO Jensen Huang during his GTC 2026 keynote on March 17, 2026, in San Jose. General availability to enterprise customers began the same week, with the first wave of Fortune 500 pilot customers publicly disclosed at GTC including manufacturing, pharmaceutical, and financial services firms.

Related Reading

- AI Agent Builders — 2026 Roundup

- AI Agents Taxonomy — A Field Guide

- The Living App Movement

- Best MCP Servers in 2026

- Community Gallery SEO Playbook

- Best OpenClaw Alternatives for AI Agents in 2026

- What Is Moltbook? The History of Clawdbot, Moltbot, and OpenClaw

- Best Claude Code Alternatives: AI Coding Agents and Tools

- What Is Agentic Engineering? Karpathy, History, and AI Agents

- Best Agentic Engineering Platforms and Tools

- What Is NVIDIA? Jensen Huang, CUDA, and the GPU AI Revolution

- Taskade AI Agents

- Build with Taskade Genesis

- Explore the Community Gallery

Ready to build AI agents without a $120K hardware budget? Taskade Agents v2 gives you 11+ frontier models, 22+ built-in tools, 100+ integrations, and multi-agent collaboration — all in the browser, starting free. Start building free →

Frequently Asked Questions

What is NemoClaw?

NemoClaw is NVIDIA's enterprise fork of the open-source OpenClaw agent framework, announced by Jensen Huang at GTC on March 17, 2026. It bundles the Claw agent runtime with NVIDIA NeMo Guardrails, Triton Inference Server, and multi-GPU scaling optimized for H100 and H200 clusters, targeting Fortune 500 MLOps teams.

How much does NemoClaw cost?

NemoClaw pricing is not publicly listed and is sold exclusively through NVIDIA enterprise direct sales. Analyst estimates based on comparable NVIDIA DGX Cloud enterprise tiers place the entry cost at roughly $120,000 per year, plus the underlying H100 or H200 GPU infrastructure. There is no free tier, self-serve checkout, or published price sheet.

NemoClaw vs OpenClaw — what's the difference?

OpenClaw is the open-source MIT-licensed parent framework anyone can self-host on commodity hardware. NemoClaw is NVIDIA's hardened fork that adds NeMo Guardrails, Triton inference, multi-GPU orchestration, enterprise SSO, and commercial support — but locks you to NVIDIA H100 or H200 clusters and a six-figure annual contract.

What are the best NemoClaw alternatives?

The top seven alternatives in 2026 are Taskade Agents v2 (best for teams and no-code builders), OpenClaw (open-source parent), LangChain with LangGraph (Python developers), CrewAI (multi-agent role-play), Microsoft AutoGen (research labs), Claude Agent SDK (Anthropic-first builders), and OpenAI Assistants API (quick prototypes on managed infra).

Is NemoClaw good for startups?

No. NemoClaw is explicitly targeted at Fortune 500 enterprises with existing NVIDIA DGX infrastructure, dedicated MLOps teams, and six-figure annual budgets. Startups, SMBs, and solo builders should use Taskade Agents v2, OpenClaw self-hosted, or managed APIs from OpenAI and Anthropic, which start free or under $20 per month.

Does NemoClaw support multi-agent collaboration?

Yes. NemoClaw inherits OpenClaw's multi-agent orchestration layer and extends it with NVIDIA's Triton Inference Server for parallel GPU execution across agent swarms. Teams can define supervisor and worker agent topologies, but configuring them requires Python, YAML, and familiarity with NVIDIA NeMo microservices.

Can I try NemoClaw for free?

No. NemoClaw does not offer a free tier, free trial, or self-serve evaluation path as of April 2026. Access requires contacting NVIDIA enterprise sales for a pilot program, which typically includes a paid proof-of-concept engagement and hardware provisioning on DGX Cloud or on-premise H100 clusters.

Is NemoClaw open source?

No. While NemoClaw is built on the MIT-licensed OpenClaw framework, NVIDIA's fork is proprietary. The NeMo Guardrails, Triton integrations, enterprise connectors, and multi-GPU scheduler are closed source and distributed only through NVIDIA's enterprise licensing program. For an open-source alternative, use upstream OpenClaw directly.

When did NemoClaw launch?

NemoClaw was announced by NVIDIA CEO Jensen Huang during his GTC 2026 keynote on March 17, 2026, in San Jose. General availability to enterprise customers began the same week, with the first wave of Fortune 500 pilot customers publicly disclosed at GTC including manufacturing, pharmaceutical, and financial services firms.