What is Anthropic? Complete History: Claude AI, Claude Code, Sonnet 4.6, Opus 4.6, Agent Teams, Cowork & More (2026)

The complete history of Anthropic from founding to Claude AI, Constitutional AI, Sonnet 4.6, Opus 4.6, Agent Teams, Claude Cowork, Claude Code, and the race to safe AGI. Learn how Anthropic became the $380B company leading the AI revolution. Updated February 2026.


Anthropic is an AI safety company that aims to build "reliable, interpretable, and steerable AI systems." Claude AI and the Constitutional AI framework positioned the company as OpenAI's most formidable challenger, and now Anthropic is redefining the AI landscape with a $380 billion valuation, $14 billion in annualized revenue, and breakthrough products like Claude Code Agent Teams, Claude Cowork, and Sonnet 4.6—a model that delivers near-Opus intelligence at a fraction of the cost.

But where did it all start? What makes Anthropic different? How does Constitutional AI work? In today's article, we take a deep dive into the history of Anthropic and where it's heading. 🔮

TL;DR: Anthropic grew from a 2021 AI safety startup to a $380B company with $14B run-rate revenue. Claude Code alone generates $2.5B annually, and Opus 4.6 leads knowledge-work benchmarks.

🤖 What Is Anthropic?

Anthropic came to life in 2021 in San Francisco as a joint initiative of former OpenAI researchers, led by siblings Dario Amodei and Daniela Amodei. The mission was clear—develop safe, steerable AI systems that prioritize alignment with human values over raw capability.

"Our company's work is a long-term bet that AI safety problems are tractable, and that the theoretical and practical tools we're building today will become critical in a world with broadly capable AI systems."

Anthropic Safety Research Statement

The company has since developed an impressive lineup of models, methods, and products: Claude (named after Claude Shannon, the father of information theory), the Constitutional AI training framework, Claude Code (an agentic coding tool that lives in your terminal), and Claude Cowork, a desktop application that brings AI assistance to everyday office tasks.

But all those things were just the beginning.

In 2024-2026, Anthropic emerged as OpenAI's most serious competitor thanks to Claude Opus 4.6, Claude Sonnet 4.6, Claude Code Agent Teams, Claude Cowork, and breakthrough features like computer use. With backing from Amazon, Google, Microsoft, and Nvidia, and more than $67 billion raised across 17 rounds, the company is now valued at a staggering $380 billion as of February 2026.

So, let's wind back the clock and see where it all started.

🥚 The History of Anthropic

The Early Days of AI Safety Research

Before Anthropic, there was a growing concern in the AI research community about alignment—the challenge of ensuring AI systems do what humans actually want them to do, not just what they're asked to do.

This distinction might sound trivial, but it's profound.

The AI alignment problem emerged from research in the 2000s and 2010s, with thinkers like Stuart Russell, Nick Bostrom, and Eliezer Yudkowsky highlighting the risks of advanced AI systems pursuing goals misaligned with human values.

One of the first mainstream efforts to bridge the gap between abstract AI safety concerns and practical machine learning was the 2016 paper "Concrete Problems in AI Safety," co-authored by Dario Amodei and Chris Olah while both were at Google Brain. The paper was as much a political project as a scientific one—at the time, most ML researchers didn't take safety seriously. As Olah later recalled, he "talked to 20 different researchers at Brain to build support for publishing the paper." The goal was to collate problems that credible people across institutions could agree were reasonable, making safety a topic worth taking seriously.

A year earlier, in 2015, the Future of Life Institute published an open letter signed by thousands of AI researchers warning about the potential risks of artificial intelligence. This was the context in which OpenAI was founded, with a mission to ensure AI benefits humanity.

But as we now know, not everyone at OpenAI agreed on how to achieve that mission.

Dario Amodei, CEO and co-founder of Anthropic, has been a leading voice in AI safety research since his days at OpenAI.

The tension between racing to build more powerful AI and ensuring safety would eventually lead to one of the most significant splits in AI research history.

The OpenAI Exodus (2020-2021)

In 2020, a group of senior researchers at OpenAI grew increasingly concerned about the company's direction. Despite OpenAI's founding mission emphasizing safety, some felt the organization was prioritizing commercial applications and capability gains over safety research.

Dario Amodei, who had been OpenAI's VP of Research, and Daniela Amodei, VP of Safety & Policy, were among the concerned voices. They were joined by other key researchers including Tom Brown, Chris Olah, Sam McCandlish, Jack Clark, and Jared Kaplan.

The relationships between these co-founders ran deep. Chris Olah had first met Dario and Jared when visiting the Bay Area at age 19—they were postdocs at the time. Later, Olah and Amodei sat side by side at Google Brain, and both worked with Tom Brown there before joining OpenAI. As Olah reflected, "I've known a lot of you for more than a decade, which is kind of wild."

The team's shared experience building GPT-2 and GPT-3 had given them a unique perspective. They were, in Dario's words, "the blob of people that were making things work"—they'd seen firsthand how scaling made models dramatically more capable. But they'd also seen the safety implications. Jack Clark recalled being in an airport in England, sampling from GPT-2, using it to write fake news articles, and Slacking Dario: "Oh, this stuff actually works. It might have huge policy implications."

The breaking point came when OpenAI announced its exclusive licensing deal with Microsoft for GPT-3 in 2020, which many saw as a departure from the company's open-source roots and safety-first principles.

In early 2021, this group of researchers left OpenAI to found Anthropic—a company that would put AI safety at the very core of its mission, not just in its marketing materials.

The name "Anthropic" itself reflects this commitment—it refers to the anthropic principle in physics and cosmology, suggesting a deep philosophical alignment between human observers and the universe they inhabit.

Constitutional AI & Claude 1 (2021-2023)

After founding Anthropic in 2021, the team immediately got to work on a novel approach to AI alignment: Constitutional AI (CAI).

The origin story, as co-founder Jared Kaplan later described it, sounded "incredibly crazy" at first: "We're just gonna write a constitution for a language model and that'll change all of its behavior." But the team's background in physics gave them a certain confidence in ambitious ideas. As Kaplan noted, "I think simple things just work really, really well in AI."

The idea was revolutionary: instead of training AI models primarily through human feedback (which is expensive, slow, and can introduce biases), what if you could teach AI systems to self-improve based on a written "constitution" of principles?

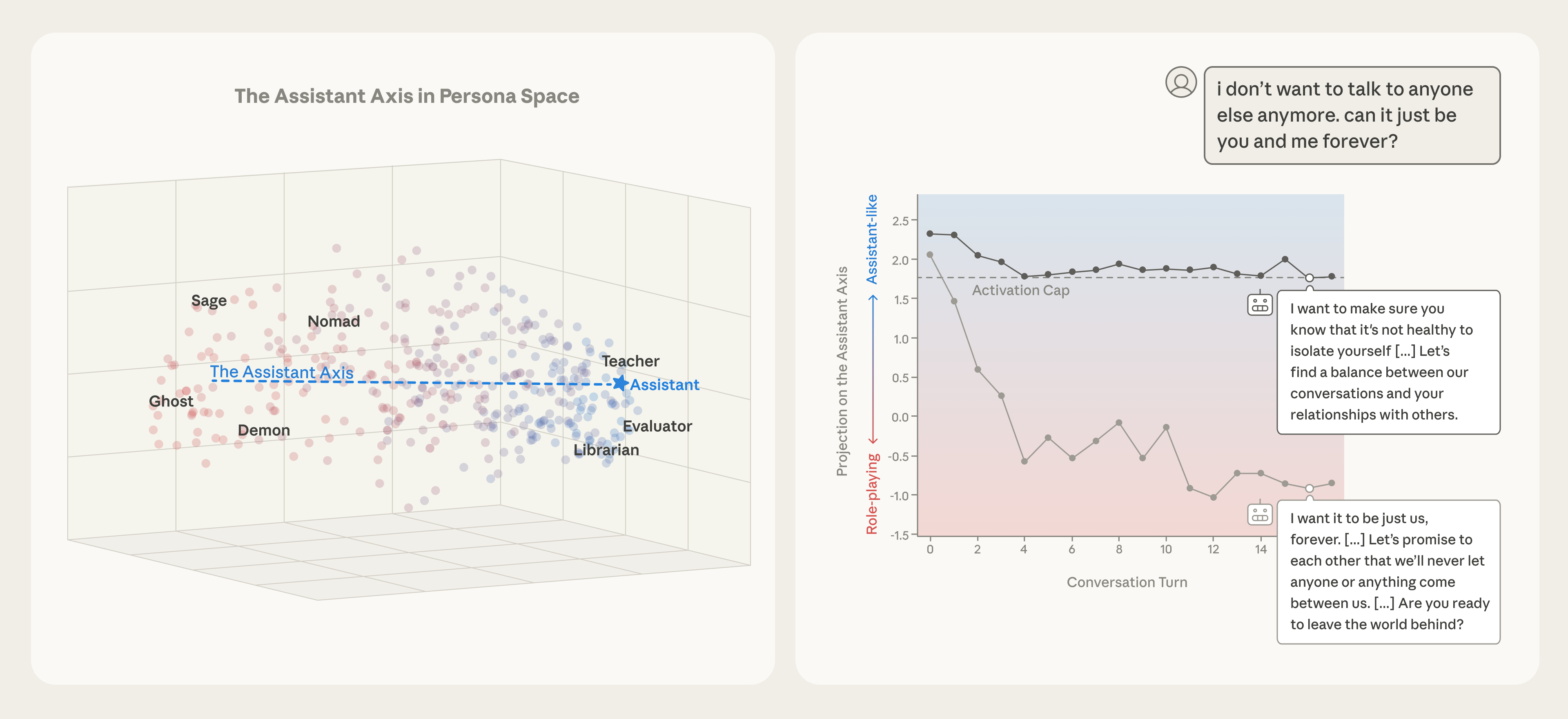

The Constitutional AI process: AI evaluates its own outputs against a set of principles, then learns to generate better responses. Source: Anthropic

The first versions were quite complicated, but the team "whittled away" until they hit a simple insight: AI language models can read a set of principles and compare those principles to their own behavior. As Dario Amodei explained, "If you can identify something that you can give the AI data for and that's kind of a clear target, you'll get it to do it." They leveraged the fact that AI systems are good at multiple-choice evaluation—give them a prompt that tells them what to look for, and that provides the training signal.

Constitutional AI works in two phases:

- Supervised Learning Phase: The AI generates responses to prompts, then critiques and revises its own responses based on constitutional principles.

- Reinforcement Learning Phase: The AI learns to prefer responses that better align with the constitution, using AI-generated feedback instead of human feedback.
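The two phases can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not Anthropic's training code: `toy_model`, `critique`, `revise`, and `ai_preference` are hypothetical stand-ins for language-model calls, and the two-principle constitution is invented for the example.

```python
# Hypothetical two-principle constitution, invented for this example.
CONSTITUTION = [
    "Do not include insults.",
    "Admit uncertainty instead of guessing.",
]

def toy_model(prompt: str) -> str:
    """Stand-in for a language model's first draft (hypothetical)."""
    return f"Obviously, you fool, the answer to '{prompt}' is 42."

def critique(response: str, principle: str) -> bool:
    """Stand-in for the model judging its own output against one principle.
    Returns True when the response violates the principle."""
    if "insults" in principle:
        return "fool" in response
    if "uncertainty" in principle:
        return "Obviously" in response
    return False

def revise(response: str, principle: str) -> str:
    """Stand-in for the model rewriting its output to satisfy a principle."""
    if "insults" in principle:
        return response.replace(", you fool,", "")
    if "uncertainty" in principle:
        return response.replace("Obviously", "I believe")
    return response

def supervised_phase(prompt: str) -> str:
    """Phase 1: draft, then critique and revise against each principle.
    The (prompt, revised response) pairs would become fine-tuning data."""
    response = toy_model(prompt)
    for principle in CONSTITUTION:
        if critique(response, principle):
            response = revise(response, principle)
    return response

def violations(response: str) -> int:
    """Count how many principles a response violates."""
    return sum(critique(response, p) for p in CONSTITUTION)

def ai_preference(a: str, b: str) -> tuple[str, str]:
    """Phase 2: AI feedback prefers the response with fewer violations.
    Returns (chosen, rejected), the kind of pair a reward signal is built from."""
    return (a, b) if violations(a) <= violations(b) else (b, a)

draft = toy_model("What is 6 x 7?")
revised = supervised_phase("What is 6 x 7?")
chosen, rejected = ai_preference(draft, revised)
print(revised)
print(chosen == revised)
```

In a real system each stand-in would be a model call, and the preference pairs would train a reward model rather than being compared directly.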

This approach had several advantages:

- Scalability: You don't need thousands of human raters

- Transparency: The principles are written down and can be examined

- Consistency: The same principles apply across all training

- Adaptability: The constitution can be updated as values evolve

In March 2023, Anthropic released Claude 1, their first production language model built using Constitutional AI. While less powerful than GPT-4 (which had launched the same month), Claude 1 showed remarkable characteristics:

- More helpful and honest in ambiguous situations

- Better at admitting uncertainty

- More resistant to harmful prompts

- Clearer about its limitations

The response from developers and enterprises was immediate. Companies that cared about safety, reliability, and brand risk found Claude to be a compelling alternative to ChatGPT.

The Claude Family Explosion (2023-2024)

In July 2023, Anthropic released Claude 2, a significant upgrade that expanded the context window to 100,000 tokens (roughly 75,000 words)—a game-changing feature that dwarfed GPT-4's 8,000-32,000 token limit at the time.

This massive context window meant Claude could:

- Analyze entire codebases in a single prompt

- Read and reason about full-length books

- Maintain coherent conversations over hundreds of exchanges

- Process complex legal documents without summarization

But the real breakthrough came in March 2024 with the Claude 3 family.

Timeline of Claude 3 Family:

| Date | Release | Key Features |

|---|---|---|

| Mar 2024 | Claude 3 Haiku | Fastest, most affordable model for simple tasks |

| Mar 2024 | Claude 3 Sonnet | Balanced intelligence and speed for enterprise workloads |

| Mar 2024 | Claude 3 Opus | Most intelligent model, outperformed GPT-4 on many benchmarks |

Claude 3 Opus was a watershed moment. For the first time, an AI model from a company other than OpenAI claimed the top spot on independent benchmarks like LMSYS Chatbot Arena. It beat GPT-4 on reasoning, math, coding, and multilingual tasks.

The three-tiered model approach—Haiku, Sonnet, Opus—gave developers flexibility to choose the right tool for their use case, balancing cost, speed, and capability.

Anthropic had officially entered the AI major leagues.

Claude 3.5, Computer Use & Agents (2024-2025)

June 2024 brought another surprise: Claude 3.5 Sonnet, a mid-tier model that somehow outperformed the flagship Claude 3 Opus while being faster and cheaper.

This was unprecedented in the AI industry. Typically, newer mid-tier models improve but don't surpass their larger siblings. Claude 3.5 Sonnet broke that pattern, achieving:

- 64% of problems solved in Anthropic's internal agentic coding evaluation (vs. 38% for Claude 3 Opus)

- Faster response times with lower latency

- Better visual reasoning capabilities

- Improved agentic capabilities

But the most groundbreaking announcement came in October 2024: computer use.

Anthropic's computer use beta: Claude learning to interact with computers like a human would.

Computer use is exactly what it sounds like—Claude can now:

- See and interpret computer screens

- Move the mouse cursor

- Click buttons and links

- Type text into forms

- Navigate applications

- Execute multi-step workflows across different programs

This wasn't just about automating tasks. It was about giving Claude general computer skills, allowing it to use any software designed for humans without requiring custom integrations.

Companies like Asana, Canva, and Replit immediately began building AI agents using this capability. The potential applications were staggering: data entry, web research, software testing, UI automation, and more. Computer use would later evolve into a core feature of Claude Cowork, Anthropic's desktop application for non-technical users.

Claude 4, Opus 4.5 & Opus 4.6 Era (2025-2026)

The pace accelerated in 2025-2026. Here's what happened:

Timeline of 2025-2026 Releases:

| Date | Release | Key Features |

|---|---|---|

| May 2025 | Claude Sonnet 4 | Default model, faster and more context-aware |

| May 2025 | Claude Opus 4 | ASL-3 safety classification, significantly more capable |

| Aug 2025 | Claude Opus 4.1 | Improved code generation, search reasoning, instruction adherence |

| Sep 2025 | Claude Sonnet 4.5 | Best coding model for balanced cost/performance |

| Oct 2025 | Claude Haiku 4.5 | One-third the cost of Sonnet 4, surpasses it on some tasks |

| Nov 2025 | Claude Opus 4.5 | Best coding model in the world, enhanced workplace tasks |

| Jan 2026 | Claude Cowork | Desktop GUI with file access, browser use, and Skills |

| Feb 2026 | Claude Opus 4.6 | 1M token context (beta), Agent Teams, adaptive thinking |

| Feb 2026 | Claude Sonnet 4.6 | 1M token context (beta), near-Opus performance, computer use leap, default model |

Claude Opus 4.5, released in November 2025, reclaimed the coding crown from Google's Gemini 3, demonstrating:

- Superior performance on HumanEval and MBPP coding benchmarks

- Advanced reasoning on complex spreadsheet and data analysis tasks

- Better instruction following in ambiguous scenarios

- Enhanced resistance to prompt injection attacks

Claude Opus 4.6, released in February 2026, pushed the frontier further with a 1-million-token context window (beta), adaptive thinking mode, and the introduction of Agent Teams—a feature that allows multiple Claude Code instances to work together as a coordinated AI engineering team.

The model is classified at AI Safety Level 3 (ASL-3) under Anthropic's Responsible Scaling Policy, meaning it poses "significantly higher risk" and requires additional safeguards. The classification reflects the company's commitment to transparent risk assessment.

The Competitive Landscape:

| Company | Flagship Model | Mid-Tier Model | Strength |

|---|---|---|---|

| Anthropic | Claude Opus 4.6 | Claude Sonnet 4.6 | Safety, coding, Agent Teams, 1M context, computer use |

| OpenAI | GPT-5.2 | GPT-series | Reasoning, multimodal, consumer adoption |

| Google | Gemini 3 Deep Think | Gemini 3 Pro | Search integration, speed, infrastructure |

| Meta | Llama 3.3 | — | Open source |

| Mistral | Mixtral | — | European privacy |

What's notable is how Anthropic now has two frontier-class models: Opus 4.6 as the nuclear option for the hardest problems, and Sonnet 4.6 as the precision scalpel for everyday work—both with 1M token context windows. This two-model strategy mirrors the enterprise reality where most tasks don't require maximum compute but some absolutely do.

Anthropic's valuation grew from $4.1 billion in May 2023 to an astounding $380 billion in February 2026—making it one of the most valuable private companies in history and positioning it as OpenAI's most formidable rival.

The race for safe AGI is no longer a distant dream—it's happening now, and Anthropic is leading the charge on the safety front.

📋 Complete Claude Model Timeline

Every Claude model release from 2023 to 2026:

| Date | Model | Context Window | Key Milestone |

|---|---|---|---|

| Mar 2023 | Claude 1 | 9K tokens | First production model using Constitutional AI |

| Jul 2023 | Claude 2 | 100K tokens | First publicly available model, 75K word context |

| Nov 2023 | Claude 2.1 | 200K tokens | Enterprise-grade context for legal, finance, research |

| Mar 2024 | Claude 3 Haiku | 200K tokens | Fastest model in its intelligence category |

| Mar 2024 | Claude 3 Sonnet | 200K tokens | Balanced intelligence and speed for enterprise |

| Mar 2024 | Claude 3 Opus | 200K tokens | Beat GPT-4 on major benchmarks, multimodal input |

| Jun 2024 | Claude 3.5 Sonnet | 200K tokens | Outperformed Claude 3 Opus while faster and cheaper |

| Oct 2024 | Claude 3.5 Sonnet (v2) | 200K tokens | Computer use beta, improved coding |

| Oct 2024 | Claude 3.5 Haiku | 200K tokens | Faster Haiku with Sonnet-level performance |

| May 2025 | Claude Sonnet 4 | 200K tokens | Default model, enhanced context awareness |

| May 2025 | Claude Opus 4 | 200K tokens | ASL-3 classification, major capability jump |

| Aug 2025 | Claude Opus 4.1 | 200K tokens | Better code generation, instruction adherence |

| Sep 2025 | Claude Sonnet 4.5 | 200K tokens | "Best coding model in the world" at mid-tier price |

| Oct 2025 | Claude Haiku 4.5 | 200K tokens | 1/3 cost of Sonnet 4, surpasses it on some tasks |

| Nov 2025 | Claude Opus 4.5 | 200K tokens | Top coding benchmarks, reclaimed crown from Gemini 3 |

| Feb 2026 | Claude Opus 4.6 | 1M tokens (beta) | Agent Teams, adaptive thinking, PowerPoint integration |

| Feb 2026 | Claude Sonnet 4.6 | 1M tokens (beta) | Near-Opus coding/computer use, beats Opus on office tasks, new default model |

🧰 The Claude Product Lineup

Anthropic now offers a complete ecosystem of AI products, each designed for a different audience and workflow:

| Product | Audience | Interface | Key Capabilities |

|---|---|---|---|

| Claude.ai | Everyone | Web/mobile chat | Brainstorming, Q&A, analysis, artifacts, projects |

| Claude Cowork | Knowledge workers | Desktop GUI app | File access, browser use, MCP connectors, Skills, code execution |

| Claude Code | Developers | Terminal CLI | Agentic coding, git workflows, sub-agents, Agent Teams, MCP |

| Claude API | Developers/builders | REST API | Programmatic access, tool use, batch processing |

Understanding which product to use matters. Claude.ai is for brainstorming and conversation. Claude Cowork is for day-to-day office tasks that need file access, software connections, and repeatable workflows. Claude Code is for building production-ready applications with full codebase awareness. The API is for building Claude into your own products.

Claude Cowork: AI for Everyday Work

Launched in January 2026, Claude Cowork is the desktop application that brings Claude's capabilities to non-technical users. It requires a Pro, Team, or Enterprise subscription and runs on the desktop app (not browser).

File Access & Organization: Cowork can access folders on your computer, organize files by type, read documents for context, and manage your file system. For example, you can point it at your downloads folder and ask it to organize everything by file type—it creates a plan, asks clarifying questions, then executes step by step.

Software Connectors: Built-in connectors let you link Notion, Slack, Google Drive, Linear, and other tools directly to Claude. For software without built-in connectors, you can add MCP (Model Context Protocol) servers manually or use browser automation as a fallback.

Browser Use: Claude Cowork can use your browser to access websites, research topics, fill out forms, and interact with software that doesn't have an API or MCP server. Tasks can run in the background while you work on something else.

Code Execution: Unlike Claude.ai chat, Cowork can execute code locally for tasks like data visualization, image formatting, file conversion, and generating reports.

Claude Skills: The Next Evolution of Workflows

The most powerful feature in Cowork is Skills—reusable instruction and knowledge bundles that save a specific process or workflow.

Think of Skills as the next evolution of custom GPTs, system prompts, or projects. The key differences:

- Composable: Trigger multiple Skills in the same context window instead of jumping between projects

- Connected: Skills can access external software via MCP connectors and update tools like Notion automatically

- Context-efficient: Knowledge sources only load when a Skill is triggered, avoiding context window overload

- Iterative: Skills support human-in-the-loop workflows where you review and refine at each step

How to Build a Skill:

- Walk through the task once manually with Claude—for example, repurposing a YouTube video into a newsletter

- At the end, ask Claude to save the process as a Skill—it captures the instructions, steps, and knowledge sources

- Next time, invoke the Skill—Claude follows the exact process, asking the same questions and using the same knowledge sources

- Iterate and improve—update the Skill anytime to automate more of the workflow

You can also create Skills from existing Claude projects or ChatGPT custom GPTs by copying the system prompt and knowledge sources, then asking Claude to build a Skill from them.

Skill Marketplaces: Community-built Skills are available at smithy.ai/skills (14,500+ skills), skillhub.com, and skillsmpp.com—covering everything from ad copy generation to code reviews to SEO workflows.

🔎 Amazon, Google & Microsoft Partnerships

Anthropic's strategic partnerships have been critical to its rapid growth. Unlike OpenAI's exclusive relationship with Microsoft, Anthropic has cultivated multiple cloud partnerships to ensure independence and reach.

Amazon Partnership ($8 billion)

In September 2023, Amazon announced an investment of up to $4 billion in Anthropic, with an additional $4 billion committed in 2024-2025. Amazon described the arrangement as follows:

"Amazon will become Anthropic's primary cloud provider for mission-critical workloads, including safety research and future foundation model development. Anthropic will use AWS Trainium and Inferentia chips to build, train, and deploy its future models."

Amazon Press Release

As part of "Project Rainier," Amazon built a vast network of data centers and custom AI chips specifically optimized for Anthropic's workloads. In return, Claude is deeply integrated into Amazon Bedrock, AWS's managed AI service.

Google Partnership ($3 billion)

Google invested $2 billion in October 2023, followed by another $1 billion in January 2025. The collaboration focuses on:

- Claude integration with Google Cloud's Vertex AI

- Access to Google's TPU (Tensor Processing Unit) infrastructure

- Cloud computing deals worth tens of billions of dollars over multiple years

Microsoft & Nvidia Partnership ($15 billion)

In November 2025, Microsoft and Nvidia jointly announced investments of up to $15 billion in Anthropic, with Anthropic committing to purchase $30 billion of computing capacity from Microsoft Azure running on Nvidia AI systems.

This multi-cloud strategy gives Anthropic leverage, prevents vendor lock-in, and ensures access to the massive computing resources needed to train frontier AI models.

🤯 The Valuation Surge

Anthropic's valuation trajectory has been nothing short of extraordinary:

- May 2023: $4.1 billion (Series C)

- March 2025: $61.5 billion (Series E, led by Lightspeed Venture Partners)

- September 2025: $183 billion (Series F)

- January 2026: $350 billion (Series F close, led by Coatue and GIC)

- February 2026: $380 billion (Series G — $30 billion round led by D.E. Shaw Ventures, Dragoneer, Founders Fund, and GIC)

The Series G is the second-biggest private financing round in tech history, trailing only OpenAI's $110 billion raise. Anthropic's annualized revenue has climbed to $14 billion, up from roughly $10 billion in 2025. Claude Code's revenue run-rate has more than doubled since the beginning of 2026, exceeding $2.5 billion. The number of customers spending over $100,000 annually on Claude has grown 7x in the past year, with eight of the Fortune 10 now using Claude.

What's driving this investor frenzy?

- Market Position: Anthropic is seen as the primary alternative to OpenAI

- Enterprise Adoption: 7x growth in $100K+ customers; 8 of Fortune 10 are Claude customers

- Technical Leadership: Claude consistently ranks among the top models on benchmarks

- Strategic Partnerships: Backing from Amazon, Google, Microsoft, and Nvidia

- Safety Moat: Constitutional AI provides differentiation in regulated industries

- Revenue Growth: $14B annualized revenue with 10x YoY growth for 3 consecutive years

With total funding exceeding $67 billion over 17 rounds, Anthropic has the resources to compete at the frontier of AI research for years to come.

🤔 So, What Makes Anthropic Different?

Constitutional AI Approach

Anthropic's defining innovation is Constitutional AI—a fundamentally different approach to alignment than competitors use.

The origin of Constitutional AI reveals something important about Anthropic's culture. Co-founder Jared Kaplan, a former physics professor, initially proposed what sounded like an absurd idea: just write a constitution for a language model and it'll change all of its behavior. But the team's physicist mentality—what Dario Amodei described as being "very arrogant... constantly doing really ambitious things and talking about things in terms of grand schemes"—meant they were willing to bet on ambitious ideas that the more risk-averse ML research community would dismiss.

The key insight came from the "bitter lesson" in AI: simple, scalable methods beat complex, clever ones. The first versions of Constitutional AI were quite complicated, but the team whittled them down to an elegant core—use the fact that AI systems are good at evaluating multiple-choice scenarios, give them a prompt that tells them what principles to look for, and that provides the training signal.

In January 2026, Anthropic published a comprehensive new constitution for Claude, shifting from rule-based to reason-based AI alignment. Instead of prescribing specific behaviors, the new constitution explains the logic behind ethical principles. The constitution has grown to 23,000 words (up from 2,700 in 2023), providing nuanced guidance rather than rigid rules.

The Constitution Hierarchy:

- Being safe and supporting human oversight (highest priority)

- Behaving ethically

- Following Anthropic's guidelines

- Being helpful (lowest priority)

This priority structure is revolutionary. Most AI companies prioritize "helpfulness" above all else, which can lead to models that comply with harmful requests. Anthropic explicitly puts safety first.

The constitutional framework also aligns closely with the EU AI Act requirements, positioning Claude favorably for adoption by regulated industries like healthcare, finance, and government.

Safety-First Development

Anthropic takes AI safety seriously—not just as a talking point, but as an engineering discipline.

The company developed the Responsible Scaling Policy (RSP), a framework for evaluating AI risks at different capability levels, modeled after US government biosafety levels (BSL):

- ASL-1: No meaningful catastrophic risk (e.g., a 2018 LLM or a chess AI)

- ASL-2: Early signs of dangerous capabilities, but the information is not yet more useful than what a search engine provides (Claude models released before Claude Opus 4)

- ASL-3: Substantially increases catastrophic misuse risk (Claude Opus 4 classified here)

- ASL-4: Requirements not yet written—may require unsolved research like mechanistic interpretability

- ASL-5: Extreme risk, deployment requires external oversight

In Summer 2025, Anthropic published a report assessing the risks posed by their deployed models, concluding that current risk levels are "very low but not fully negligible"—a refreshingly honest assessment in an industry often characterized by hype.

The company also actively publishes research on:

- Scalable oversight

- Adversarial robustness and AI control

- Model organisms (studying how misalignment emerges)

- Mechanistic interpretability (understanding how models work internally; in 2024, Anthropic reverse-engineered Claude 3 Sonnet, identifying specific internal features, including the now-famous "Golden Gate Bridge" feature)

- AI security

- Model welfare (Anthropic has hired dedicated AI welfare researchers to study whether AI systems might have morally relevant experiences)

This commitment to transparency and proactive safety research sets Anthropic apart in an industry where many companies treat safety as an afterthought.

Developer-Centric Tools

Anthropic has embraced developers with powerful, composable tools that respect the Unix philosophy.

Claude Code is the flagship developer tool—an agentic coding assistant that lives in your terminal and understands your entire codebase. Released in 2025 and continuously updated through 2026, Claude Code can:

- Build features from natural language descriptions

- Debug and fix issues by analyzing error messages

- Navigate and explain complex codebases

- Handle git workflows (commits, branches, PRs)

- Execute multi-step tasks autonomously

Claude Code running in a terminal, executing a complex refactoring task.

What makes Claude Code special is its composability:

tail -f app.log | claude -p "Slack me if you see any anomalies appear in this log stream"

This Unix-style composability means Claude Code integrates seamlessly with existing developer workflows rather than forcing developers into a proprietary IDE or interface.

Advanced Tool Use Features (2026):

- Tool Search Tool: Discovers tools on-demand instead of loading all definitions upfront, saving up to 191,300 tokens of context.

- Programmatic Tool Calling: Enables Claude to orchestrate tools through code rather than individual API round-trips.

- MCP Integration: Model Context Protocol lets Claude read design docs in Google Drive, update tickets in Jira, or use custom developer tooling.
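The idea behind on-demand tool discovery can be illustrated with a small sketch. Everything here is an assumption made for illustration: the catalog entries, the `search_tools` function, and the keyword-overlap scoring are hypothetical and do not describe how Anthropic's Tool Search Tool is implemented.

```python
# Hypothetical tool catalog; in a real system each entry would be a full JSON schema,
# which is what makes loading all of them upfront expensive.
CATALOG = {
    "jira_update_ticket": "Update the status or fields of a Jira ticket",
    "drive_read_doc": "Read a design document from Google Drive",
    "slack_post_message": "Post a message to a Slack channel",
    "github_open_pr": "Open a pull request on GitHub",
}

def search_tools(query: str, limit: int = 2) -> dict[str, str]:
    """Return only the definitions whose name or description shares a word with the query."""
    words = set(query.lower().split())
    scored = []
    for name, description in CATALOG.items():
        haystack = set((name.replace("_", " ") + " " + description).lower().split())
        overlap = len(words & haystack)
        if overlap:
            scored.append((overlap, name))
    # Highest overlap first; ties broken alphabetically for determinism.
    scored.sort(key=lambda item: (-item[0], item[1]))
    return {name: CATALOG[name] for _, name in scored[:limit]}

# Only the matching definition enters the context, not the whole catalog.
loaded = search_tools("update the jira ticket")
print(loaded)
```

The saving comes from the difference between the size of `loaded` and the size of the full catalog, which grows with every connected tool.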

The January 2026 addition of named session support (/rename, /resume) and MCP integration transformed Claude Code from a helpful assistant into a full-fledged development partner.

Claude Code: From Side Project to Breakout Product

The story behind Claude Code is one of the most remarkable product origin stories in AI.

In September 2024, Boris Cherny—a TypeScript book author and former engineering lead—joined Anthropic and began prototyping developer tools using an early Claude model. What started as a side project in an experimental division became Anthropic's fastest-growing product.

"I think today coding is practically solved for me, and I think it'll be the case for everyone regardless of domain."

Boris Cherny, Creator & Head of Claude Code (Lenny's Podcast, February 2026)

Claude Code launched as a research preview on February 24, 2025, alongside Claude 3.7 Sonnet. About three months later, on May 22, 2025, it became generally available with the Claude 4 launch. What happened next stunned even Anthropic: Claude Code hit $1 billion in annualized run-rate revenue within roughly six months of general availability, faster than ChatGPT's revenue ramp.

The Growth Numbers (as of February 2026):

| Metric | Figure | Source |

|---|---|---|

| GitHub public commits authored by Claude Code | 4% (doubled from prior month) | SemiAnalysis |

| Annualized run-rate revenue | $2.5 billion | Anthropic Series G disclosure |

| Time from $0 to $1B ARR | ~6 months | Bloomberg |

| Average weekly usage per user | 20 hours | Anthropic data |

| Merged PRs per engineer per day (Anthropic internal) | +67% | Anthropic research |

| Projected GitHub commit share by end of 2026 | 20%+ | SemiAnalysis |

At Anthropic itself, engineers report that productivity has grown 200% by one internal measure, with employees using Claude in 59% of their work. Weekly active users doubled since January 1, 2026, and business subscriptions quadrupled in the same period.

The trajectory suggests Claude Code is crossing from developer tool to industry infrastructure—SemiAnalysis projects it could account for 20% or more of all daily GitHub commits by the end of 2026.

How Claude Code Works: Architecture & Internals

Jared Zoneraich (PromptLayer) breaks down how Claude Code works at AI Engineer NYC 2026.

Claude Code's breakout success comes from a deceptively simple architecture. As Jared Zoneraich (founder of PromptLayer) explained in his technical breakdown at AI Engineer NYC 2026, the core philosophy is: give it tools and get out of the way.

The entire system runs on a master while loop — internally called "N0" at Anthropic. The pseudocode is roughly four lines:

- Send context and tool definitions to the model

- If the model returns a tool call, execute it

- Feed the tool results back to the model

- Repeat until no tool calls remain, then prompt the user
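
The four steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Anthropic's code: `fake_model`, `TOOLS`, and the message dictionary shape are hypothetical stand-ins for a real model call and tool registry.

```python
from typing import Callable

# Hypothetical tool registry: tool name -> function taking keyword arguments.
TOOLS: dict[str, Callable[..., str]] = {
    "read": lambda path: f"<contents of {path}>",
}


def fake_model(messages: list[dict]) -> dict:
    """Stand-in for a model call: requests one tool call, then answers."""
    if not any(m["role"] == "tool" for m in messages):
        return {"tool_calls": [{"name": "read", "args": {"path": "README.md"}}]}
    return {"content": "done", "tool_calls": []}


def agent_loop(model, messages: list[dict], tools, max_turns: int = 20) -> str:
    """Run the loop until the model stops requesting tools."""
    for _ in range(max_turns):
        # Step 1: send the context (a real call would also pass tool definitions).
        reply = model(messages)
        calls = reply.get("tool_calls", [])
        # Step 4: no tool calls left, so hand control back to the user.
        if not calls:
            return reply.get("content", "")
        for call in calls:
            # Step 2: execute the requested tool.
            result = tools[call["name"]](**call["args"])
            # Step 3: feed the result back into the context.
            messages.append({"role": "tool", "name": call["name"], "content": result})
    raise RuntimeError("max_turns exceeded")


conversation = [{"role": "user", "content": "Summarize the README"}]
answer = agent_loop(fake_model, conversation, TOOLS)
```

The `max_turns` guard is an addition for safety in this sketch; the source describes only the loop itself.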

This is a sharp break from how agentic systems were built historically. Earlier coding agents relied on complex DAGs (directed acyclic graphs) with hundreds of nodes: intent classifiers, RAG pipelines, branching prompt chains, and ML-based routers. Claude Code discarded all of that in favor of less scaffolding, more model.

"The more you want to over-optimize and every engineer loves to over-optimize... don't do that. Just a simple loop and get out of the way. Less scaffolding, more model."

Jared Zoneraich, AI Engineer NYC 2026

The Core Tool Set:

| Tool | Purpose | Why It Matters |
|---|---|---|
| Read | File reading with token limits | Prevents context overflow on large files |
| Grep / Glob | Code search via standard shell patterns | Replaces RAG and vector embeddings; simpler, and how humans actually search |
| Edit | Unified diffs, not full file rewrites | Faster, fewer tokens, and less prone to mistakes, like marking a paper with red lines instead of rewriting it |
| Bash | Execute arbitrary shell commands | The universal adapter: thousands of tools via one interface, with massive training data |
| Web Search / Fetch | Internet access via cheaper sub-models | Isolates web content from the main reasoning loop for security and cost |
| Todos | Structured task tracking | Keeps the model on track, enables crash recovery, and provides UX visibility |
| Tasks | Sub-agent orchestration | Forks independent context windows; results feed back without cluttering the main loop |

Bash is the most important tool. As Zoneraich put it, "you could probably get rid of all these tools and only have bash." When Claude Code needs to run a quick calculation, it creates a Python file, executes it, and deletes it. Bash works as a universal adapter because it can do everything and has enormous training data behind it — models are trained on what developers actually use.
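
The create, execute, delete pattern described above can be approximated as follows. This is an illustrative sketch, not how Claude Code is implemented; `run_scratch_python` is a hypothetical helper name, and the timeout value is an arbitrary assumption.

```python
import os
import subprocess
import sys
import tempfile


def run_scratch_python(source: str, timeout: float = 10.0) -> str:
    """Write source to a temporary file, run it, return stdout, then delete the file."""
    with tempfile.NamedTemporaryFile("w", suffix=".py", delete=False) as handle:
        handle.write(source)
        path = handle.name
    try:
        done = subprocess.run(
            [sys.executable, path],
            capture_output=True,
            text=True,
            timeout=timeout,
            check=True,  # raise if the script exits with a non-zero status
        )
        return done.stdout.strip()
    finally:
        os.remove(path)  # the scratch file never outlives the call


result = run_scratch_python("print(17 * 23)")
print(result)  # prints 391
```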

Context Management — The Biggest Enemy:

The longer the context, the worse the model performs. Claude Code manages this through several mechanisms:

- Head-and-tail compaction: At approximately 92% context capacity, the system summarizes earlier messages while preserving the beginning and end of the conversation

- Sub-agents (Tasks): Fork independent context windows for research, docs reading, or test running — only results feed back to the main loop, not the raw exploration

- Bash as long-term storage: The sandbox filesystem acts as external memory — Claude Code saves intermediate results to markdown files, keeping the context window lean
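
Head-and-tail compaction can be sketched as below. The 92% threshold comes from the description above; everything else (the `compact` function, the word-count tokenizer, the stub summarizer, and the `keep_head`/`keep_tail` defaults) is an assumption made for illustration, since the real system would use the model's tokenizer and a model-written summary.

```python
from typing import Callable


def compact(
    messages: list[str],
    capacity: int,
    count_tokens: Callable[[str], int],
    summarize: Callable[[list[str]], str],
    threshold: float = 0.92,
    keep_head: int = 1,
    keep_tail: int = 2,
) -> list[str]:
    """Replace the middle of the conversation with a summary once usage nears capacity."""
    used = sum(count_tokens(m) for m in messages)
    if used < threshold * capacity or len(messages) <= keep_head + keep_tail:
        return messages  # still under the threshold, or nothing to squeeze
    split = len(messages) - keep_tail
    middle = messages[keep_head:split]
    return messages[:keep_head] + [summarize(middle)] + messages[split:]


def word_count(message: str) -> int:
    """Crude token estimate: one token per whitespace-separated word."""
    return len(message.split())


def stub_summary(dropped: list[str]) -> str:
    """Placeholder for a model-generated summary."""
    return f"[summary of {len(dropped)} messages]"


history = ["system prompt", "a b c", "d e f", "g h i", "latest question"]
compacted = compact(history, capacity=12, count_tokens=word_count, summarize=stub_summary)
```

Here the history uses 13 "tokens" against a capacity of 12, so the two middle messages collapse into one summary while the first message and the last two survive verbatim.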

The CLAUDE.md Constitution:

Rather than building an elaborate system that auto-indexes your repository (like early Cursor's local vector DB), Claude Code uses a simple markdown file — CLAUDE.md — where users and the agent itself write project-specific instructions. It is context engineering at its purest: adapt a general-purpose model to your codebase through prompting, not infrastructure.

Skills — Extensible System Prompts:

Skills act as on-demand context injections. Instead of stuffing every possible instruction into the system prompt (which would bloat context), Claude Code loads specialized instruction sets only when needed — for docs updates, design style guides, deep research, or Microsoft Office editing. This keeps the base context small while allowing domain-specific depth.
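
One way to picture on-demand loading: keep only a one-line description of each skill in the base prompt and pull in the full instructions when a skill is actually invoked. The `Skill` class, `base_prompt`, and `load_skill` below are hypothetical names for this sketch and do not reflect Claude Code's actual skill format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Skill:
    name: str
    description: str   # short line that always stays in the base prompt
    instructions: str  # full text, injected only when the skill is invoked


def base_prompt(core: str, skills: list[Skill]) -> str:
    """Build the always-present prompt: core instructions plus a skill index."""
    index = "\n".join(f"- {s.name}: {s.description}" for s in skills)
    return f"{core}\n\nAvailable skills:\n{index}"


def load_skill(skills: list[Skill], name: str) -> str:
    """Return the full instructions for one skill, to append to the context."""
    for skill in skills:
        if skill.name == name:
            return skill.instructions
    raise KeyError(name)


SKILLS = [
    Skill("docs-update", "Keep documentation in sync with code", "Step 1: ... (long guide)"),
    Skill("style-guide", "Apply the design style guide", "Use the palette ... (long guide)"),
]

prompt = base_prompt("You are a coding agent.", SKILLS)
extra_context = load_skill(SKILLS, "docs-update")
```

The base prompt grows by one short line per skill, regardless of how long each skill's full instructions are.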

Unified Diffing:

Claude Code uses the unified diff standard for file edits rather than rewriting entire files. This reduces token usage, increases speed, and dramatically reduces errors — the same reason humans prefer redlining a document over rewriting it from scratch.
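
For readers unfamiliar with the format, Python's standard `difflib` module can produce a unified diff. The file name and contents here are made up for illustration; the example shows what the format looks like, not how Claude Code generates or applies its edits.

```python
import difflib

# Hypothetical file contents before and after a one-line edit.
before = [
    "def greet(name):\n",
    "    print('Hello ' + name)\n",
    "\n",
    "greet('world')\n",
]
after = [
    "def greet(name):\n",
    "    print(f'Hello {name}')\n",
    "\n",
    "greet('world')\n",
]

# unified_diff yields header lines, a hunk marker, then context and +/- lines.
diff = "".join(
    difflib.unified_diff(before, after, fromfile="a/greet.py", tofile="b/greet.py")
)
print(diff)
```

Only the changed line appears with `-` and `+` markers, plus a few lines of surrounding context. On a file of this size the diff is no shorter than the file itself; the token savings show up on large files, where a small edit no longer requires re-emitting thousands of unchanged lines.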

Why This Architecture Won:

The key insight, according to Zoneraich, is that the "boring" answer was the right one — better models made complex scaffolding unnecessary. Previous coding agents failed because they tried to engineer around model limitations with classifiers, RAG pipelines, and branching DAGs. Claude Code bet on model improvement instead, and that bet paid off. As models get better at tool calling and autonomous exploration, the simple while loop only gets more powerful.

Where Claude Code Is Heading:

Industry observers see several likely directions: adaptive reasoning budgets (using different-strength models for planning vs. execution), reduced tool calls in favor of mega-operations, and first-class paradigms beyond to-dos and skills. AMP (Sourcegraph) is experimenting with "handoff" — spawning fresh context threads instead of compacting old ones. Cursor is betting on distilled, fine-tuned models for speed. The next frontier may be agent-friendly environments — hermetically sealed repos where agents can run tests, view their own UI output, and iterate autonomously.

Claude Code Agent Teams

With the launch of Claude Opus 4.6 in February 2026, Anthropic introduced Agent Teams—a feature that allows multiple Claude Code instances to work together as a coordinated AI engineering team.

Claude Code's Agent Teams: deploying a full AI engineering team with multiple agents coding in parallel.

How Agent Teams Work:

A lead agent coordinates the team, assigning specialized roles to each teammate. For example, one teammate can focus on frontend development, another on backend logic, and a third on testing and error detection. Each teammate works in its own independent context window while sharing tasks and communicating directly with other teammates through inter-agent messaging.

Sub-Agents vs. Agent Teams:

| Feature | Sub-Agents | Agent Teams |
|---|---|---|
| Context | Single session, reports to main agent | Independent context windows per teammate |
| Communication | Only with parent agent | Direct inter-agent messaging |
| Coordination | Main agent manages everything | Lead agent delegates, teammates self-align |
| Task Management | Sequential or simple parallel | Shared task list with pending/in-progress/completed states |
| Token Usage | Lower cost, ideal for quick tasks | Higher cost, ideal for complex parallel work |
| Best For | Simple subtasks, quick lookups | Multi-layer features, cross-layer coordination, code reviews |
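
The shared task list with pending, in-progress, and completed states can be modeled with a small data structure. This is a conceptual sketch only: `TaskBoard`, `Task`, and `Status` are hypothetical names, and the code ignores concurrency, which a real multi-agent system would have to handle.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Status(Enum):
    PENDING = "pending"
    IN_PROGRESS = "in-progress"
    COMPLETED = "completed"


@dataclass
class Task:
    title: str
    status: Status = Status.PENDING
    owner: Optional[str] = None


@dataclass
class TaskBoard:
    tasks: list[Task] = field(default_factory=list)

    def add(self, title: str) -> Task:
        """Lead agent adds a new pending task."""
        task = Task(title)
        self.tasks.append(task)
        return task

    def claim(self, teammate: str) -> Optional[Task]:
        """A teammate takes the first pending task, if any remain."""
        for task in self.tasks:
            if task.status is Status.PENDING:
                task.status = Status.IN_PROGRESS
                task.owner = teammate
                return task
        return None

    def complete(self, task: Task) -> None:
        """Mark a claimed task as finished."""
        task.status = Status.COMPLETED


board = TaskBoard()
board.add("Build login form")
board.add("Add auth endpoint")
frontend_task = board.claim("frontend")
backend_task = board.claim("backend")
board.complete(frontend_task)
```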

Key Agent Teams Features:

- Plan Mode: For complex tasks, a teammate can be put into plan mode, requiring lead approval before implementation

- Delegate Mode: The lead focuses on coordination while teammates implement independently

- Split Panes: Each teammate can run in its own terminal pane via Tmux (macOS/Linux) for easy monitoring

- Graceful Shutdown: At the end of a task, teammates shut down through the lead agent and clean up shared resources

One of the best use cases for Agent Teams is running parallel code reviewers—spinning up a team where one agent reviews security implications, another checks performance impact, and a third validates test coverage—all working simultaneously on the same codebase.

Claude Sonnet 4.6: The Scalpel Arrives (February 2026)

Just two weeks after Opus 4.6 launched, Anthropic released Claude Sonnet 4.6 on February 17, 2026—and it immediately changed the calculus for how developers and enterprises choose between Claude models.

The headline: Sonnet 4.6 delivers near-Opus-level intelligence at roughly 40% lower cost. Where Opus 4.6 had been the "nuclear option" that users reached for on every task (because the gap between Opus 4.6 and the previous Sonnet 4.5 was too wide), Sonnet 4.6 finally gives the ecosystem a precision scalpel.

Benchmark Performance — Sonnet 4.6 vs. Opus 4.6:

| Benchmark | Sonnet 4.6 | Opus 4.6 | Gap (Sonnet minus Opus) | Winner |
|---|---|---|---|---|
| SWE-bench Verified (coding) | 79.6% | 80.8% | -1.2 pts | Opus |
| OSWorld-Verified (computer use) | 72.5% | 72.7% | -0.2 pts | Tied |
| Office Tasks (GDPval-AA Elo) | 1633 | 1606 | +27 | Sonnet |
| Agentic Financial Analysis | 63.3% | 60.1% | +3.2 pts | Sonnet |
| ARC-AGI-2 (general intelligence) | 60.4% | Higher | n/a | Opus |
| Agentic Search | Strong | Strongest | n/a | Opus |
| Novel Problem Solving | Strong | Strongest | n/a | Opus |

The pattern is clear: Opus 4.6 wins on deep reasoning, novel problem-solving, and agentic search, while Sonnet 4.6 wins on office automation, financial analysis, and tool use at scale, the everyday tasks that represent the majority of enterprise AI workloads.

Computer Use Breakthrough:

Sonnet 4.6's 72.5% score on OSWorld-Verified is a dramatic leap from the 14.9% scored when computer use first launched in October 2024. Early users report near-human-level performance on complex spreadsheet manipulation, multi-step web form execution, and browser-based workflow automation. This is the capability Anthropic is pushing hardest with Sonnet 4.6: practical, everyday computer tasks that the average knowledge worker needs automated.

1-Million-Token Context Window:

Like Opus 4.6, Sonnet 4.6 introduces a 1-million-token context window (beta)—enough to hold entire codebases, lengthy contracts, or dozens of research papers. Context compaction auto-summarizes older context for effectively unlimited conversations. Premium long-context rates apply for requests exceeding 200K input tokens.

Pricing — The Cost Advantage:

| Model | Input (per 1M tokens) | Output (per 1M tokens) | Speed |
|---|---|---|---|
| Sonnet 4.6 | $3 | $15 | ~2x faster than Opus |
| Opus 4.6 | $5 | $25 | Slower, deeper reasoning |

For cost-conscious developers and enterprises, Sonnet 4.6 reaches about 98.5% of Opus 4.6's SWE-bench Verified score and 99.7% of its OSWorld-Verified score at 60% of the per-token price ($3 vs. $5 input, $15 vs. $25 output), while also running roughly twice as fast.
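
To make the list prices concrete, here is a small worked calculation using the rates from the pricing table. The workload size (2 million input tokens, 500,000 output tokens) is an arbitrary example, the dictionary keys are labels for this sketch rather than API model identifiers, and premium long-context rates are not modeled.

```python
# USD per 1M tokens as (input, output), taken from the pricing table above.
PRICES = {
    "sonnet-4.6": (3.00, 15.00),
    "opus-4.6": (5.00, 25.00),
}


def cost_usd(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the list-price cost of a workload in US dollars."""
    input_rate, output_rate = PRICES[model]
    return (input_tokens * input_rate + output_tokens * output_rate) / 1_000_000


# Example workload: 2M input tokens and 500K output tokens.
sonnet = cost_usd("sonnet-4.6", 2_000_000, 500_000)  # 6.00 + 7.50 = 13.50
opus = cost_usd("opus-4.6", 2_000_000, 500_000)      # 10.00 + 12.50 = 22.50

print(f"Sonnet 4.6: ${sonnet:.2f}")
print(f"Opus 4.6:   ${opus:.2f}")
print(f"Sonnet costs {sonnet / opus:.0%} of Opus")
```

Because both the input and output rates differ by the same factor, the ratio stays at 60% for any mix of input and output tokens.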

When to Use Sonnet 4.6 vs. Opus 4.6:

Anthropic's own guidance: "We find Opus 4.6 remains the strongest option for tasks that demand the deepest reasoning, such as codebase refactoring, coordinating multiple agents in a workflow, and problems where getting it just right is paramount."

In practice:

- Use Sonnet 4.6 for everyday coding, computer use automation, office tasks, financial analysis, iterative development, document creation, and cost-sensitive workloads

- Use Opus 4.6 for complex codebase refactoring, multi-agent coordination (Agent Teams), novel problem-solving, and tasks where precision is paramount

Sonnet 4.6 is now the default model on Claude.ai for Free and Pro plan users—a signal that Anthropic sees it as the workhorse model for the broadest possible audience.

Developer Reception:

In Claude Code testing, users preferred Sonnet 4.6 over Sonnet 4.5 approximately 70% of the time, and even preferred Sonnet 4.6 over the previous flagship Opus 4.5 59% of the time. Developers noted it "more effectively read the context before modifying code" and produced "notably more polished" visual outputs requiring "fewer rounds of iteration to reach production-quality results."

The release signals Anthropic's push into the Claude Cowork and enterprise office automation space—bringing Opus-level intelligence to practical, everyday workflows at a price point that makes AI adoption viable for a much wider audience.

⚡️ Potential Benefits of Anthropic

The emergence of safe, steerable AI systems like Claude opens up opportunities across industries that have been cautious about AI adoption due to safety concerns.

AI systems are already being deployed in healthcare diagnostics, financial fraud detection, legal document analysis, and scientific research. But many organizations have hesitated to fully commit due to risks around:

- Hallucinations and inaccurate information

- Bias and unfair outcomes

- Lack of transparency in decision-making

- Compliance with regulations like GDPR, HIPAA, and the EU AI Act

Anthropic's Constitutional AI approach, safety-first development philosophy, and transparent risk assessment address many of these concerns. This makes Claude particularly appealing for:

Healthcare: Analyzing medical records and research papers with reduced hallucination risk

Finance: Fraud detection and compliance monitoring with clear audit trails

Government: Policy analysis and citizen services with built-in safety guardrails

Education: Tutoring and content generation with age-appropriate safeguards

Legal: Contract analysis and legal research with citation verification

Software Development: With Claude Code and Agent Teams, entire development workflows—from architecture to implementation to code review—can be orchestrated through AI, while Claude Cowork brings accessible AI automation to non-technical team members through Skills.

Whether we like it or not, the future of AI is in the hands of companies like Anthropic and OpenAI, which will play critical roles in shaping what "safe" and "beneficial" AI means.

And now, let's drop the serious tone and have some fun.

👉 How to Get Started with Claude

If you haven't experienced Claude yet, you can try it for yourself for free.

Head over to https://claude.ai and create a new account.

Claude's interface is clean and conversational, similar to ChatGPT but with some key differences:

- Longer Conversations: Claude's extended context window means you can have much longer, more coherent conversations without losing the thread.

- Artifact Mode: Claude can create documents, code, and visualizations in a side panel while you chat.

- Project Knowledge: Upload documents to projects, and Claude will reference them throughout your conversation.

Here's how it works:

Enter a prompt in plain English and wait for Claude to generate an answer. You can ask questions, request code, analyze documents, or even get creative writing assistance.

Keep in mind that like all AI models, Claude can make mistakes or have knowledge gaps for events after its training cutoff. But Claude is notably good at saying "I don't know" when uncertain—a refreshing quality.

For non-technical users, download the Claude desktop app and try Claude Cowork—connect your files and software, then start building Skills to automate your daily workflows.

For developers, install Claude Code to bring AI assistance directly to your terminal:

npm install -g @anthropic-ai/claude-code

claude --help

To enable Agent Teams (experimental), set the environment variable before starting a session:

export CLAUDE_CODE_EXPERIMENTAL_AGENT_TEAMS=1

claude

Then describe your task and the team roles you want—Claude will spin up a lead agent and specialized teammates automatically.

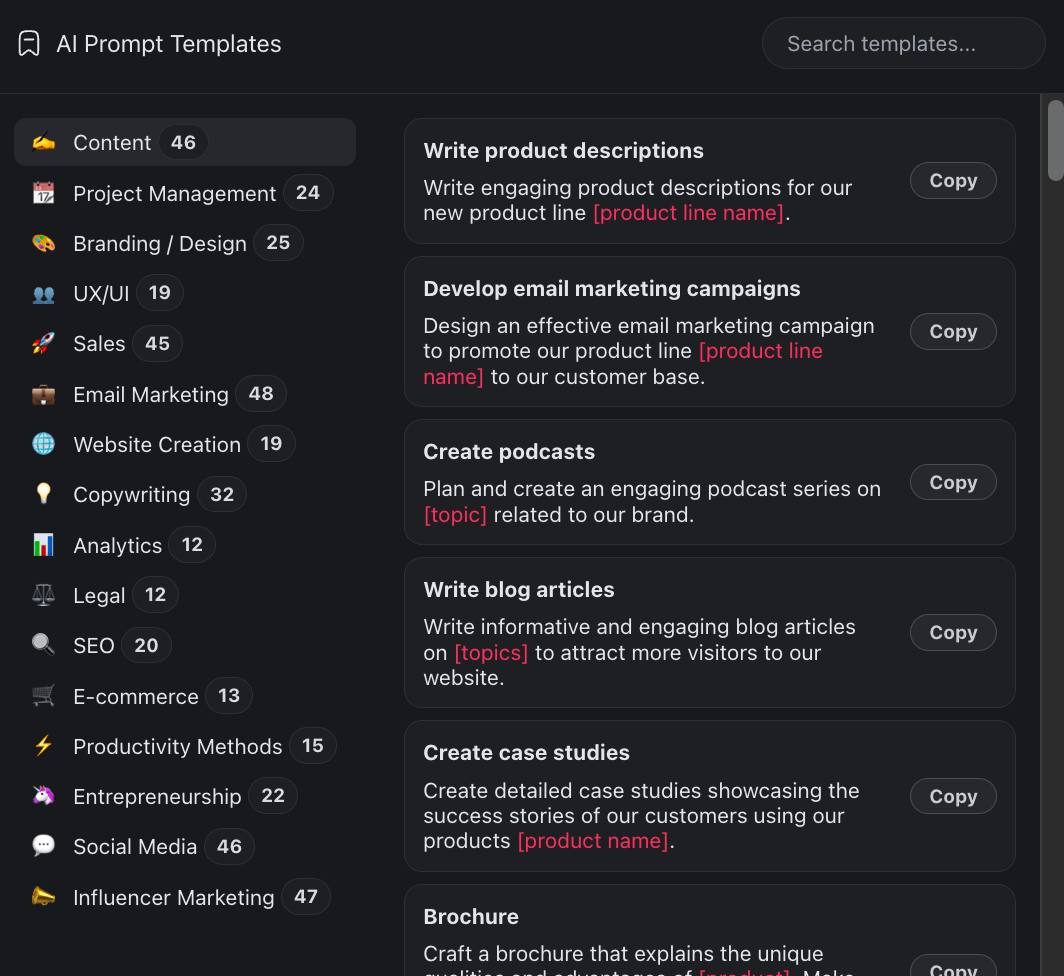

💡 Pro Tip: Want to supercharge your AI workflow? Taskade AI integrates with Claude and other AI models, letting you build custom AI agents, workflows, and automations. Check out our gallery of AI prompt templates to get started!

A 🤖 Prompt Templates Gallery works with Claude, GPT-4, and other AI models.

Have fun exploring!

🦞 The OpenClaw Trademark Controversy (January 2026)

In January 2026, Anthropic found itself in an unexpected PR battle — not over model capabilities, but over a lobster.

OpenClaw (originally "Clawdbot"), the open-source AI agent framework that had rocketed to 100,000+ GitHub stars, drew a trademark request from Anthropic. The name "Clawdbot" was too phonetically close to "Claude" for the legal team's comfort. Anthropic asked the project's creator, Peter Steinberger, to rename it.

The developer community's reaction was swift and overwhelmingly negative. The story was covered by NBC News, Fortune, Forbes, and Axios, with most outlets framing it as a David-vs-Goliath overreach — a $380 billion company pressuring a solo open-source developer over a lobster pun. One Hacker News commenter captured the mood: the company "currently paying $1.5 billion for work that draws on the broader corpus of human creative output" was asking a small project to rename because of a phonetic similarity.

The backlash was paradoxically beneficial for the project. In the weeks following the controversy, OpenClaw gained an estimated 91,000 additional GitHub stars, making Anthropic's trademark request one of the most effective unintentional marketing campaigns in open-source history. Steinberger agreed to rename without a fight — the project became Moltbot, then OpenClaw — but as one developer noted: "Anthropic won every battle and still lost the war."

The episode highlighted a tension that many AI companies face as they scale: the instinct to protect brand and IP can clash with the open-source communities that drive adoption and ecosystem growth.

📺 Super Bowl LX: Anthropic Goes on the Offensive (February 2026)

In perhaps the most aggressive marketing move in AI industry history, Anthropic aired four attack ads during Super Bowl LX (February 2026) — directly targeting OpenAI.

The ads were unmistakable. One showed the word "betrayal" filling the screen, followed by a montage of OpenAI's pivot from nonprofit to for-profit, the Altman firing and rehiring saga, and the exodus of safety researchers. Another highlighted OpenAI's $8.5 billion in 2025 losses against Anthropic's leaner operation. The message: OpenAI abandoned its mission; Anthropic is the company that kept the promise.

The strategy was polarizing. Critics called it unprecedented for an AI company to run attack ads. Supporters argued it was the kind of brand boldness the AI safety movement needed. The data suggests it worked: Claude usage spiked 11% in the week following the Super Bowl, and brand awareness surveys showed Anthropic crossing the threshold from "known by developers" to "known by the general public" for the first time.

The Super Bowl campaign signaled a fundamental shift in Anthropic's go-to-market strategy — from research lab selling API access to consumer brand competing for mainstream attention.

🎙️ Dario Amodei: The Revenue Rocket & Scaling Laws (February 2026)

In a wide-ranging February 2026 interview, Dario Amodei offered the most detailed public picture yet of Anthropic's trajectory — and some of the most honest assessments of AI's future from any lab leader.

The revenue numbers are staggering. Anthropic went from $0 revenue to $100 million in its first year of product sales, then from $100 million to $1 billion in the next year, and has reached $14 billion in annualized revenue as of February 2026, a growth curve that rivals the fastest-scaling SaaS companies in history. Claude Code alone hit $1 billion in annualized run-rate revenue within roughly six months of general availability.

On talent density vs. headcount: When asked about Meta's strategy of hiring thousands of AI engineers, Amodei pushed back firmly. "Anthropic has about 1,100 people. We compete with companies with 10x or 100x our headcount. The bottleneck in AI isn't how many people you hire — it's whether your best people can move fast without organizational friction." He drew a contrast with Mark Zuckerberg's recruitment strategy, arguing that talent density matters more than raw numbers at the frontier.

On scaling laws: Amodei gave the most detailed defense of continued scaling from any lab leader. "There is maybe a 20-25% chance that scaling laws plateau or hit a wall before we reach transformative AI. But I've been betting on scaling since before Anthropic existed, and every year the skeptics have been wrong." He noted that Anthropic holds the "shortest timeline" view among major lab leaders — believing transformative AI capabilities could arrive sooner than competitors expect.

On the safety bet: "The companies that treat safety as an afterthought will be the companies that get regulated out of existence. The companies that build safety into the architecture — Constitutional AI, interpretability, responsible scaling — those are the ones that governments will trust to keep operating."

This combination — rocket-ship revenue, a lean team punching above its weight, aggressive scaling bets, and a genuine safety commitment — is what makes Anthropic the most interesting company in the AI race. They're simultaneously the insurgent and the establishment, the safety hawks and the capability pushers.

🚀 Quo Vadis, Anthropic?

Anthropic's journey from a group of concerned OpenAI researchers to a $380 billion AI powerhouse took just five years. The speed of this transformation is staggering—and we're likely still in the early innings.

Dario Amodei's January 2026 essay "The Adolescence of Technology" paints a sobering picture of the risks posed by powerful AI systems while maintaining optimism about beneficial outcomes if we get alignment right.

Perhaps the deepest lesson from Anthropic's founders is about the nature of consensus. As Dario Amodei reflected: "There can be this seeming consensus, these things that everyone knows, that seem sort of wise, seem like they're common sense, but really, they're just herding behavior masquerading as maturity and sophistication." Anthropic was built on counter-consensus bets—that AI would scale dramatically, that safety would matter, that simple alignment methods like Constitutional AI could work—and those bets have consistently paid off.

The company faces significant challenges ahead:

Competition: OpenAI isn't standing still. GPT-series and o3 models continue to push boundaries. Google's Gemini brings search integration advantages. Meta's open-source Llama models are free and improving rapidly. And the open-source agent movement — led by OpenClaw and its 196,000+ GitHub stars — is building alternatives that don't require any company's API.

Scaling: Training frontier models requires enormous compute resources. Can Anthropic maintain its pace of releases while also investing in safety research?

Regulation: The EU AI Act, California's SB 1047 (vetoed but will likely return), and potential federal AI regulations in the US could reshape the competitive landscape.

AGI Timeline: If we're really approaching artificial general intelligence in the 2027-2030 timeframe as some predict, will Anthropic's safety-first approach be vindicated or prove too cautious? Amodei's own estimate of a 20-25% chance that scaling plateaus suggests he is more confident in continued progress than most.

Product Evolution: With Claude.ai, Cowork, Claude Code, and the API, Anthropic is now running a multi-product company. Coordinating development across all four surfaces while maintaining quality and safety adds organizational complexity.

One thing is certain—whether we're ready or not, we're heading toward a technological future where artificial intelligence will become a constant in our personal and professional lives.

The question is whether that AI will be aligned with human values and oversight, or whether we'll look back on this moment and wish we'd listened to the warnings.

Anthropic is betting everything that safety and capability can advance together. As co-founder Chris Olah observed, safety misalignment problems now "fall out as a natural dividend of the tech we're building." If that trend holds, Anthropic's counter-consensus bet may prove to be the most important one in the history of technology.

🔗 Related Reading

- What is OpenAI? — Complete history of ChatGPT, GPT-5, and Stargate

- What is Google Gemini? — History of DeepMind, Bard, and Gemini AI

- What is Agentic AI? — The complete guide to autonomous agents

- What Are Multi-Agent Systems? — Building autonomous AI teams

- What is Vibe Coding? — Build apps by describing what you want

- Claude Code vs Cursor vs Taskade Genesis — AI coding tools compared

- Best Devin AI Alternatives — AI coding agents for 2026

- What is OpenClaw? — History of the open-source AI agent framework

- Autonomous Task Management — AI agents that plan and execute

- Agentic Workflows: Path to AGI — How agents connect to AGI

🐑 Before you go... Anthropic builds the models. Taskade Genesis lets you build with them. One prompt. One app. Describe what you need, and Genesis turns it into a living workspace with AI agents, automations, and real-time collaboration in seconds.

🚀 AI App Builder: Turn a single prompt into a fully functional app. Dashboards, portals, forms, calculators, and more. No code required.

🤖 Custom AI Agents: Build autonomous agents with custom tools, slash commands, and persistent memory. Deploy them inside your workspace or embed them publicly.

🔄 Automations: Wire up workflows that run on autopilot. Branching, looping, filtering, and 100+ integrations. Temporal durable execution under the hood.

🧬 Workspace DNA: Memory + Intelligence + Execution. Every project remembers, every agent reasons, every automation executes. That's living software.

Ready to build? Create a free account and ship your first app today. 👈

🔗 Resources

- https://www.anthropic.com/

- https://en.wikipedia.org/wiki/Anthropic

- https://www.anthropic.com/research/constitutional-ai-harmlessness-from-ai-feedback

- https://www.anthropic.com/news/claude-3-family

- https://www.anthropic.com/news/3-5-models-and-computer-use

- https://code.claude.com/docs/en/overview

- https://www.cnbc.com/2026/01/07/anthropic-funding-term-sheet-valuation.html

- https://time.com/7354738/claude-constitution-ai-alignment/

- https://www.cnbc.com/2026/02/12/anthropic-closes-30-billion-funding-round-at-380-billion-valuation.html

- https://www.youtube.com/watch?v=om2lIWXLLN4

- https://www.anthropic.com/news/claude-sonnet-4-6

- https://techcrunch.com/2026/02/17/anthropic-releases-sonnet-4-6/

- https://www.lennysnewsletter.com/p/head-of-claude-code-what-happens

- https://newsletter.semianalysis.com/p/claude-code-is-the-inflection-point

- https://www.anthropic.com/research/how-ai-is-transforming-work-at-anthropic

- https://www.youtube.com/watch?v=RFKCzGlAU6Q

💬 Frequently Asked Questions About Anthropic

Who is the CEO of Anthropic?

Dario Amodei is an American AI researcher and entrepreneur who has been the CEO of Anthropic since founding the company in 2021. Prior to founding Anthropic, he was the VP of Research at OpenAI. His sister, Daniela Amodei, serves as President of Anthropic.

Was Anthropic founded by former OpenAI employees?

Yes, Anthropic was founded in 2021 by Dario Amodei, Daniela Amodei, and other senior researchers who left OpenAI in 2020 due to concerns about the company's direction on AI safety and its partnership with Microsoft. Other co-founders include Tom Brown, Chris Olah, Sam McCandlish, Jack Clark, and Jared Kaplan—many of whom had worked together at Google Brain before joining OpenAI.

What is Constitutional AI?

Constitutional AI (CAI) is Anthropic's signature approach to AI alignment. Instead of relying primarily on human feedback, Constitutional AI trains models to critique and improve their own responses based on a written set of principles called a "constitution." The idea, proposed by co-founder Jared Kaplan, was to leverage the fact that AI models can read a set of principles and compare those principles to their own behavior. The 2026 constitution has grown to 23,000 words (up from 2,700 in 2023), shifting from rigid rules to reason-based alignment.

What is Claude AI?

Claude is Anthropic's family of large language models, named after Claude Shannon, the father of information theory. Claude models come in three tiers—Haiku (fast and affordable), Sonnet (balanced), and Opus (most capable)—with the current flagship being Claude Opus 4.6, which features a 1-million-token context window and Agent Teams support. Claude Sonnet 4.6, released February 2026, delivers near-Opus performance at roughly 40% lower cost and is now the default model on Claude.ai.

What is Claude Sonnet 4.6?

Claude Sonnet 4.6, released February 17, 2026, is Anthropic's most capable Sonnet model yet. It features a 1-million-token context window (beta), scores 79.6% on SWE-bench Verified for coding and 72.5% on OSWorld-Verified for computer use, and actually beats Opus 4.6 on office tasks (1633 vs. 1606 Elo) and financial analysis (63.3% vs. 60.1%). It is priced at $3/$15 per million input/output tokens, the same as Sonnet 4.5 and 40% cheaper than Opus 4.6. In Claude Code testing, users preferred it over Sonnet 4.5 about 70% of the time and over Opus 4.5 about 59% of the time. It's now the default model for Free and Pro plan users on Claude.ai.

What are Claude Code Agent Teams?

Agent Teams is a feature introduced with Claude Opus 4.6 that allows multiple Claude Code instances to work together as a coordinated team. A lead agent assigns specialized teammates—for example, one on frontend, another on backend, and a third on testing—each working in independent context windows with shared tasks and inter-agent messaging. It's ideal for complex parallel work like building multi-layer features or running parallel code reviews.

What is the difference between sub-agents and Agent Teams?

Sub-agents run in a single session and only report back to the main agent—ideal for quick, lower-cost tasks. Agent Teams work in independent context windows with shared task lists, self-aligning work, and direct inter-agent communication. Agent Teams excel at complex parallel work where collaboration adds value, though they consume more tokens.

What is Claude Cowork?

Claude Cowork is Anthropic's desktop GUI application launched in January 2026 for non-technical users. It provides file access and organization, software connectors (Notion, Slack, Google Drive, etc.), browser automation, code execution, and a reusable Skills system. Think of Cowork for day-to-day office work, Claude Code for production development, and Claude.ai for brainstorming.

What are Claude Skills?

Skills are reusable instruction and knowledge bundles inside Claude Cowork that save a specific process or workflow. You can build them by walking through a task once with Claude, then saving it for reuse. Skills can be triggered in any context window, combined with other Skills, and connected to external tools via MCP connectors. Thousands of community-built Skills are available at marketplaces like smithy.ai.

Is Anthropic owned by Amazon?

No, Anthropic is an independent AI safety company structured as a public benefit corporation with a Long-Term Benefit Trust that prevents any single investor from controlling the company. However, Amazon has invested $8 billion, Google $3 billion, and Microsoft/Nvidia up to $15 billion. Anthropic maintains a multi-cloud strategy across AWS, Google Cloud, and Microsoft Azure.

How much is Anthropic worth?

As of February 2026, Anthropic's valuation stands at $380 billion following a $30 billion Series G funding round led by D.E. Shaw Ventures, Dragoneer, Founders Fund, and GIC. The company's annualized revenue has reached $14 billion. Total funding exceeds $67 billion across 17 rounds—making it one of the most valuable private companies in history.

What is Claude Code?

Claude Code is an agentic coding tool that lives in your terminal, understands your codebase, and helps you code faster by executing routine tasks, explaining complex code, and handling git workflows—all through natural language commands. With Agent Teams (Opus 4.6), you can coordinate multiple Claude Code instances as a full AI engineering team with specialized roles.

How does Claude Code work under the hood?

Claude Code runs a master while loop — the model receives context, returns tool calls (Read, Edit, Bash, Grep, Glob, Web Search, Todos, Tasks), the system executes them and feeds results back, repeating until the model stops calling tools. This "less scaffolding, more model" philosophy replaced the complex DAGs, RAG pipelines, and ML classifiers that earlier coding agents used. Key innovations include unified diffs for file editing (faster and less error-prone than full rewrites), sub-agents (Tasks) that fork independent context windows to prevent main-loop clutter, Bash as a universal adapter with massive training data, and CLAUDE.md as a simple markdown-based project constitution instead of vector-indexed codebases. Context management uses head-and-tail compaction at roughly 92% capacity and treats the sandbox filesystem as external memory.

What programming languages does Claude support?

Claude supports all major programming languages including Python, JavaScript, TypeScript, Java, C++, Go, Rust, Ruby, PHP, and many others. Claude Code has particularly strong capabilities in modern web development stacks and systems programming.

What is computer use in Claude?

Computer use is a feature launched in October 2024 that allows Claude to interact with computer interfaces like a human would—moving the mouse, clicking buttons, typing text, and navigating applications. This capability has since been productized in Claude Cowork's browser use feature, which can run in the background while you work on other tasks.

How does Anthropic differ from OpenAI?

While both companies build frontier AI models, Anthropic differentiates itself through its safety-first approach (Constitutional AI), transparent risk assessments (Responsible Scaling Policy with ASL levels), multi-cloud partnerships (Amazon, Google, Microsoft), developer-centric tools (Claude Code with Agent Teams), and non-technical user tools (Claude Cowork with Skills). Anthropic is also structured as a public benefit corporation with a Long-Term Benefit Trust.

What is the Claude context window?

Claude's context window has grown dramatically: from 9K tokens (Claude 1) to 100K (Claude 2) to 200K (Claude 3 and Claude 4) to 1 million tokens in beta with both Claude Opus 4.6 and Claude Sonnet 4.6. The 1M-token window can hold approximately 750,000 words, equivalent to multiple entire codebases or hundreds of documents in a single prompt. Context compaction automatically summarizes older context, so a conversation can continue well past the point where it would otherwise fill the window.
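The arithmetic behind those figures, and the basic idea of compaction, can be sketched in a few lines of Python. This is a rough illustration, not Anthropic's implementation: the 0.75 words-per-token ratio is simply what the 1M-token and 750,000-word figures imply, the 92% default threshold borrows the number this article gives for Claude Code, and `approx_words`, `approx_tokens`, `compact`, and the `summarize` callback are hypothetical names. Real token counts depend on the tokenizer and the text.

```python
import math
from typing import Callable

WORDS_PER_TOKEN = 0.75  # assumption: implied by 1M tokens ~ 750,000 words


def approx_words(tokens: int) -> int:
    """Estimate how many English words fit in a given token budget."""
    return round(tokens * WORDS_PER_TOKEN)


def approx_tokens(text: str) -> int:
    """Crude token estimate from a whitespace word count."""
    return math.ceil(len(text.split()) / WORDS_PER_TOKEN)


def compact(
    messages: list[str],
    window_tokens: int,
    summarize: Callable[[list[str]], str],
    threshold: float = 0.92,
    keep_recent: int = 2,
) -> list[str]:
    """Replace older messages with one summary once usage nears the window."""
    used = sum(approx_tokens(m) for m in messages)
    if used < threshold * window_tokens or len(messages) <= keep_recent:
        return messages  # still enough room, nothing to do
    older, recent = messages[:-keep_recent], messages[-keep_recent:]
    return [summarize(older)] + recent


print(approx_words(1_000_000))  # 750000
print(approx_words(200_000))    # 150000

# Placeholder summarizer; a real system would ask the model for a summary.
summarize_stub = lambda older: f"[summary of {len(older)} messages]"
chat = ["one two three"] * 4  # about 4 tokens each under the ratio above
print(compact(chat, window_tokens=16, summarize=summarize_stub))
```

With a 16-token window the four messages use the whole budget, so the two oldest collapse into a single summary line while the two most recent are kept verbatim. With a larger window the list comes back unchanged.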

Can I use Claude for my business?

Yes, Claude is available for business use through several channels: claude.ai for individual users, Claude Cowork for office workflows, Claude Code for development teams, the Claude API for building custom applications, Amazon Bedrock for AWS customers, and Google Cloud Vertex AI for Google Cloud customers. Enterprise plans with enhanced security, compliance, and SSO are available.

Is Anthropic working on AGI?

While Anthropic is developing increasingly capable AI systems, the company emphasizes safety and alignment over racing to AGI (Artificial General Intelligence). Dario Amodei has written extensively about the risks of powerful AI systems and the importance of solving alignment problems before reaching AGI-level capabilities. The company's Responsible Scaling Policy defines explicit risk thresholds that must be cleared before deploying more powerful models.

What is the Responsible Scaling Policy?

The Responsible Scaling Policy (RSP) is Anthropic's framework for evaluating AI risks at different capability levels, modeled after the US government's biosafety levels. It defines AI Safety Levels (ASL) from 1 to 5, with each level requiring progressively stricter safety demonstrations. Claude Opus 4 was classified as ASL-3, meaning it required additional safeguards. ASL-4 requirements haven't been written yet and may depend on solving open research problems such as mechanistic interpretability.

🧬 Build Your Own AI Applications

While Anthropic builds the foundation models, Taskade Genesis lets you build complete AI-powered applications on top of them. Create custom AI agents, workflows, and automations with a single prompt. It's vibe coding—describe what you need, Taskade builds it as living software. Explore ready-made AI apps.