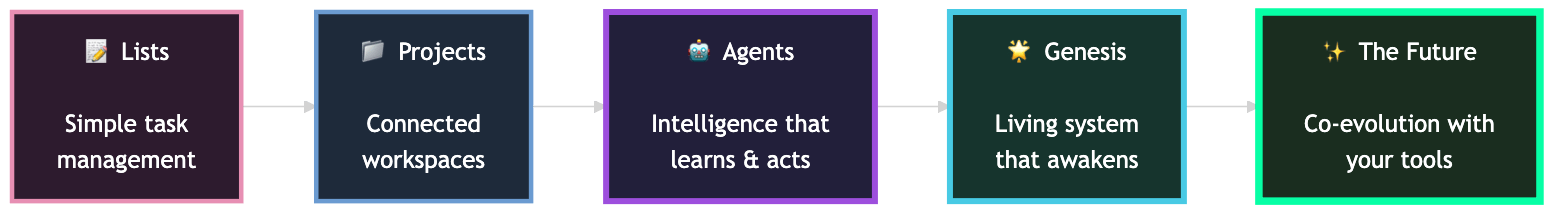

Taskade has followed that thread since day one.

Lists became projects. Projects connected with agents. Agents learned to act and improve.

Each layer brought us closer to a simple, powerful idea:

Tools should not just organize your work. They should participate in it.

They should remember, reason, and adapt like a collaborator.

This is the foundation of Taskade Genesis.

The Pain That the World Is Not as You Want

Real progress starts with friction.

Every misfire, misunderstanding, or imperfect outcome is a signal. Pain is how systems learn.

At Taskade, pain is feedback. Feedback becomes pattern. Pattern becomes growth. A system learns by feeling the distance between what exists and what could be — not from perfection but from recognition and refinement.

That loop of friction, feedback, and adjustment is how intelligence emerges. The philosopher Karl Popper called this "conjecture and refutation": knowledge advances by proposing a model, testing it against reality, and revising what fails (Popper, The Logic of Scientific Discovery, 1959; Conjectures and Refutations, 1963). What Popper described for science, we observe in software. Every deployment is a conjecture. Every user interaction is a refutation or confirmation.

Plato's Cave and the Interface Illusion

In Book VII of The Republic, Plato describes prisoners chained in a cave, watching shadows projected on a wall. They take the shadows for reality because they have never seen the fire behind them, let alone the world outside.

Most software operates the same way. Users interact with buttons, menus, and templates — shadows cast by underlying data structures. The interface presents an illusion of control while hiding the system's true architecture. You manage the shadow, not the substance.

The liberation Plato imagined — walking out of the cave to see things as they truly are — maps to a specific shift in software design. When a tool exposes its intelligence rather than hiding it behind a static interface, the user moves from shadow-watcher to co-creator. This is what happens when you converse with an AI agent instead of clicking through a predefined form. You engage with the substance directly.

The Chinese Room and the Question of Understanding

In 1980, philosopher John Searle posed one of the most enduring thought experiments in philosophy of mind. Imagine a person locked in a room. Chinese characters slide in through a slot. The person follows a massive rulebook to produce appropriate Chinese responses and slides them back out. To an outside observer, the room "speaks Chinese." But the person inside understands nothing.

Searle's argument (Searle, "Minds, Brains, and Programs," Behavioral and Brain Sciences, 1980) was aimed at strong AI — the claim that a correctly programmed computer literally understands. The Chinese Room suggests that syntax (symbol manipulation) is not sufficient for semantics (meaning).
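The thought experiment can be caricatured in a few lines of Python. This is only a sketch: the rulebook entries and the `room` function are invented for illustration, and a two-entry lookup table is far simpler than the rulebook Searle imagined. What it shows is the shape of the argument, in which every step is symbol matching and no step consults meaning.

```python
# A toy "Chinese Room": the rulebook is a hypothetical lookup table
# that maps incoming symbol strings to outgoing symbol strings.
RULEBOOK = {
    "你好吗？": "我很好，谢谢。",
    "你会说中文吗？": "会，我说得很流利。",
}

# Fallback symbols to return when no rule matches (also an assumption).
FALLBACK = "请再说一遍。"


def room(message: str) -> str:
    """Return whatever the rulebook dictates for this exact string.

    The function only compares symbols; nothing here represents
    what the symbols mean.
    """
    return RULEBOOK.get(message, FALLBACK)


reply = room("你会说中文吗？")
print(reply)
```

From outside, the reply looks fluent. Inside, there is only a dictionary lookup, which is the gap between syntax and semantics that Searle was pointing at.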

The counterarguments are instructive:

| Position | Claim | Key Proponent |
|---|---|---|
| Systems Reply | The person does not understand, but the room-as-a-system does | Berkeley AI researchers |
| Robot Reply | Understanding requires embodied interaction with the world | Yale (Schank's school) |
| Brain Simulator Reply | If the program simulates every neuron, it must understand | Functionalists |
| Other Minds Reply | We cannot verify understanding in other humans either | Turing (by extension) |

The debate remains unresolved more than four decades later. But it points to something relevant for software design: the distinction between performing intelligently and being intelligent may matter less than the practical outcome. If a system remembers your context, reasons about your goals, and adapts its behavior, does it matter whether it "truly" understands?

Taskade does not claim that its AI agents understand in the philosophical sense. But they remember. They reason. They adapt. And for the person working alongside them, the functional difference may be what matters.

The Layers of Awareness

Taskade Genesis is built as a layered system of awareness. Each layer transforms raw signal into understanding, and understanding into action.

| Layer | Simulation (Static) | Awakening (Adaptive) |
|---|---|---|
| Memory | Data stored inside projects, notes, and tables | Living context that persists across agents, apps, and spaces |
| Reasoning | Static responses to prompts | Adaptive intelligence that mirrors your goals, voice, and patterns |
| Intention | Manual triggers and rules | Automations that refine behavior through feedback and results |
| Reflection | Review by the user | Systems that analyze, learn, and self-correct over time |
| Collaboration | Command and response | Humans and AI working together, learning from shared context |

Together these layers form a loop that learns, remembers, and acts. It is how a workspace becomes a living environment instead of a static tool.

Emergence: When the Whole Exceeds Its Parts

In 1982, physicist John Hopfield showed something profound about memory using a simple network model. In that model, memory does not live in individual neurons. It lives in the connections between them. A memory is not a thing stored in a place. It is a pattern of relationships: weights between nodes that collectively encode meaning. Change the connections, and you change what the system remembers (Hopfield, "Neural networks and physical systems with emergent collective computational abilities," PNAS, 1982).

This is emergence: the phenomenon where complex behaviors arise from simple interactions between components. The physicist Philip Anderson captured it in three words: "more is different" (Anderson, "More Is Different," Science, 1972). The properties of a system at one level of complexity are not reducible to the properties of its parts.
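To make the idea concrete, here is a minimal sketch of a Hopfield-style associative memory in plain Python. It is an illustration under stated assumptions rather than a full account of Hopfield's paper: the two stored patterns, the eight-unit size, and the function names `train` and `recall` are all invented for this example. The weights are built with the standard Hebbian rule, and recall updates one unit at a time until nothing changes.

```python
# Minimal Hopfield-style associative memory (illustrative sketch).
# Units take the values +1 or -1. Pattern choice and sizes are assumptions.


def train(patterns):
    """Build a symmetric weight matrix with the Hebbian rule.

    Each pair of units that agree in a stored pattern gets a positive
    contribution; pairs that disagree get a negative one.
    There are no self-connections.
    """
    n = len(patterns[0])
    weights = [[0.0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    weights[i][j] += p[i] * p[j] / n
    return weights


def recall(weights, state, max_sweeps=10):
    """Update units one at a time until the state stops changing."""
    state = list(state)
    n = len(state)
    for _ in range(max_sweeps):
        changed = False
        for i in range(n):
            total = sum(weights[i][j] * state[j] for j in range(n))
            new_value = 1 if total >= 0 else -1
            if new_value != state[i]:
                state[i] = new_value
                changed = True
        if not changed:
            break
    return state


stored = [
    [1, -1, 1, -1, 1, -1, 1, -1],
    [1, 1, 1, 1, -1, -1, -1, -1],
]
weights = train(stored)

noisy = list(stored[0])
noisy[0] = -noisy[0]  # corrupt one unit of the first pattern

recalled = recall(weights, noisy)
print(recalled == stored[0])  # True
```

No single weight holds either pattern. The corrupted input is pulled back to the stored one only because all the connections act together, which is the sense in which the memory is relational.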

The same principle operates in software. A project alone is inert data. An agent alone is a response engine. An automation alone is a trigger-action pair. But connect them — let the project feed context to the agent, let the agent's reasoning trigger the automation, let the automation's output update the project — and something emerges that none of the parts possess individually: a system that adapts.
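The loop described above can be sketched in a few lines. None of the names below (`Project`, `agent_decide`, `automation_run`) come from Taskade's product or API. They are hypothetical stand-ins, and the "todo:" convention is an assumption made only so the example has something to count.

```python
from dataclasses import dataclass, field


@dataclass
class Project:
    """Memory: a running log of notes (hypothetical structure)."""
    notes: list = field(default_factory=list)


def agent_decide(project: Project) -> str:
    """Intelligence: choose an action from what the project remembers."""
    open_items = [n for n in project.notes if n.startswith("todo:")]
    return "summarize" if len(open_items) >= 2 else "wait"


def automation_run(action: str, project: Project) -> None:
    """Execution: act, then write the outcome back into memory."""
    if action == "summarize":
        todos = [n for n in project.notes if n.startswith("todo:")]
        remaining = [n for n in project.notes if not n.startswith("todo:")]
        remaining.append(f"summary: {len(todos)} items reviewed")
        project.notes = remaining


project = Project(notes=["todo: draft spec", "todo: email client"])

first = agent_decide(project)    # reads memory
automation_run(first, project)   # acts and updates memory
second = agent_decide(project)   # sees the updated memory

print(first, second)  # summarize wait
```

The second decision differs from the first only because the automation changed what the project remembers. Each function on its own is trivial. The adaptive behavior belongs to the loop.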

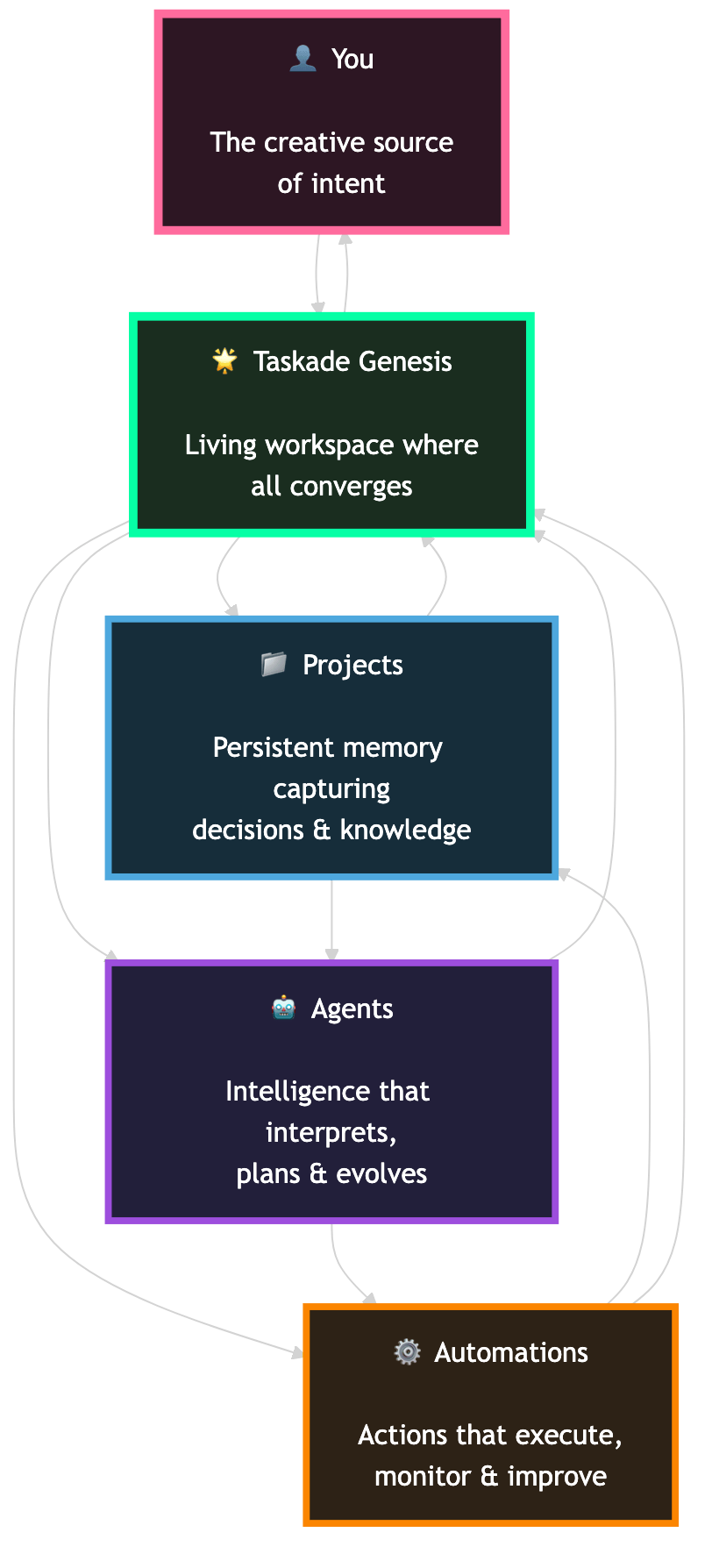

The True Layer

Strip away templates and menus and you find the pattern beneath: Memory, Intelligence, and Execution woven into one environment.

| Outer Layer | Inner Reality |
|---|---|
| Projects | Persistent memory that captures decisions, patterns, and knowledge across time |
| Agents | The intelligence that interprets, plans, and evolves with your workflow |
| Automations | The hands that act, monitor, and improve based on outcomes |
| You | The creative source of intent, describing goals in language rather than commands |
| Taskade | The living workspace where all of it converges into a continuous feedback system |

At this level, the boundary between tool and teammate disappears.

A project no longer ends when you close the tab. An agent does not reset when you refresh. An automation becomes a habit that learns.

Software starts to feel like something alive.

The biologist Stuart Kauffman described this quality as "autonomous agency" — a system that acts on its own behalf in an environment it partially creates (Kauffman, Investigations, 2000). When a workspace remembers what you did yesterday, anticipates what you need today, and prepares for what you might want tomorrow, it begins to exhibit something resembling agency. Not consciousness. Not sentience. But functional participation in the work.

The Maze of Creation

The modern world is full of fast tools, but few of them feel meaningful.

Speed without understanding creates noise. Teams do not crave more commands. They crave continuity, context, and purpose.

The phenomenologist Maurice Merleau-Ponty argued that genuine understanding is not abstract representation but embodied engagement — knowing through doing, not merely through data (Merleau-Ponty, Phenomenology of Perception, 1945). A tool that participates in your work aligns with this principle. It does not merely represent your tasks; it engages with them.

Taskade Genesis is that environment.

You begin with a fragment of intent. The system listens, reasons, and builds with you. It remembers every attempt, every iteration, every insight. Over time, it learns how you think, how your team works, and what you want to achieve.

You are not racing toward a finish line.

You are co-evolving with your tools.

Every iteration a reflection. Every failure a lesson. Every success a new foundation.

What Genesis Does Today

- Generate and edit apps from conversation: Describe what you want, and Genesis creates a functional app you can refine with dialogue and examples.

- Work with living memory: Projects, notes, and tables form persistent context that agents use to reason and act intelligently.

- Collaborate with agents: Taskade Agents draw on context, draft ideas, analyze data, and execute inside your workspace and Genesis apps, powered by 11+ frontier models from OpenAI, Anthropic, and Google.

- Automate with feedback: Automations connect your tools and data through real-time triggers and actions across 100+ integrations. Each loop improves through outcomes.

- Publish and share instantly: Launch apps publicly or under a custom domain, personalize branding, and keep iterating without breaking flow.

- Package and reuse what works: Kits let you package projects, agents, automations, and apps into systems anyone can start using in minutes.

- View work from 7 perspectives: List, Board, Calendar, Table, Mind Map, Gantt, and Org Chart — each revealing different dimensions of the same data.

All connected. All in one place. All evolving with you.

A Philosophical Framework for Living Software

The ideas threaded through this essay are not new. They connect to traditions stretching back millennia. Here is a condensed map of the intellectual lineage.

| Thinker | Key Idea | Year | Relevance to Living Software |
|---|---|---|---|
| Plato | Allegory of the Cave | ~380 BC | Interfaces are shadows; intelligence is the reality behind them |
| Aristotle | Hylomorphism (form + matter) | ~350 BC | Software is matter; adaptive behavior is form |
| Descartes | Mind-body dualism | 1641 | Can a machine have a "mind," or only simulate one? |
| Turing | Imitation Game | 1950 | If it behaves intelligently, does it matter if it "is" intelligent? |
| Anderson | "More is different" | 1972 | Emergence: system-level properties irreducible to parts |
| Varela & Maturana | Autopoiesis | 1973 | Self-producing systems that maintain their own organization |
| Searle | Chinese Room | 1980 | Syntax does not equal semantics, but what does? |
| Hopfield | Associative memory networks | 1982 | Memory is relational, not locational |
| Kauffman | Autonomous agency | 2000 | Systems that act on their own behalf in environments they create |

These threads converge on a single insight: intelligence is not a property of components. It is a property of relationships. A workspace that connects memory, reasoning, and execution across every project is not merely more efficient. It is qualitatively different from a collection of disconnected tools.

A Small Story About a Big Shift

A founder describes a simple idea for a customer portal.

Genesis generates the app. An agent appears inside to assist users.

Automations subscribe to updates, route feedback, and summarize insights.

The team clicks "Publish." A custom domain goes live.

The next week, they type new instructions. The app evolves again.

Not a static tool. A living system.

The Something True

The something true beneath the simulation is a shift from imitation to participation, from commands to conversation, from software that waits to software that helps.

When projects retain their own memory.

When agents anticipate your intent.

When automations rewrite themselves to fit your goals.

When your workspace learns from you and with you.

That is Genesis.

From structured data to structured understanding.

From productivity to purpose.

Taskade is not just building tools that make humans more efficient. We are building environments that grow alongside their creators.

Systems that think, remember, and act.

Workspaces that continue learning even after you log out.

Genesis is that awakening.

Not a beginning, but the first time the system looks back.

Read more:

- The Origin of Living Software

- Build Without Permission

- What Is Vibe Coding?

- 10 Best AI App Builders in 2026

- Learn: Getting Started with AI Agents

- Learn: Automation Triggers and Actions

- Explore the Community Gallery

Frequently Asked Questions

What does it mean for software to participate in work rather than just organize it?

Traditional productivity software is passive. It stores information in the structure you create and waits for manual input. Participatory software actively contributes: AI agents suggest next steps, automations execute routine processes, and the system learns from your patterns. The difference is between a filing cabinet that organizes and a teammate that participates.

How does AI transform productivity tools from static to adaptive?

Static tools present the same interface and behavior regardless of context. Adaptive tools use AI to understand your work patterns, surface relevant information proactively, and adjust their behavior based on what you are doing. An adaptive workspace might highlight different metrics when you are in planning mode versus execution mode, without manual configuration.

What is the relationship between memory, reasoning, and adaptation in AI systems?

Memory provides the raw material: stored context, past interactions, accumulated knowledge. Reasoning processes that memory to draw connections, identify patterns, and generate insights. Adaptation applies those insights to improve future behavior. Together they create a feedback loop where the system remembers what happened, understands why it matters, and adjusts how it responds.

What is the Chinese Room argument and how does it relate to AI?

Philosopher John Searle proposed the Chinese Room in 1980. A person inside a room follows rules to manipulate Chinese symbols without understanding Chinese. Searle argued this shows that symbol manipulation alone does not produce understanding. The debate remains central to AI: does processing data create genuine comprehension, or only the appearance of it?

What is emergence in the context of artificial intelligence?

Emergence describes how complex behaviors arise from simple interactions between components. Individual neurons do not think, but networks of neurons produce thought. Similarly, individual software components do not adapt, but systems that connect memory, reasoning, and execution can exhibit adaptive behavior that no single component possesses alone.

How does Plato's Cave allegory apply to modern software?

In Plato's allegory, prisoners mistake shadows on a cave wall for reality. Most software works the same way: users interact with interfaces (shadows) that represent underlying data structures (reality). Living software attempts to close this gap by making the underlying intelligence visible and participatory rather than hiding it behind static menus.

What is Workspace DNA in the context of Taskade?

Workspace DNA describes the self-reinforcing loop at the core of Taskade: Memory (Projects that store context), Intelligence (AI Agents that reason and plan), and Execution (Automations that act on decisions). Each layer feeds the next, creating a system that improves through use rather than requiring manual reconfiguration.

What is the difference between artificial intelligence and artificial understanding?

Artificial intelligence processes data and produces outputs that may appear intelligent. Artificial understanding implies that the system genuinely grasps meaning, context, and consequence. Whether current AI systems achieve understanding or merely simulate it is one of the deepest open questions in philosophy of mind, with positions ranging from functionalism to biological naturalism.